Natural Language Processing IT PowerPoint Presentation Slides

Natural language processing (NLP) is a field of computer science, specifically a branch of AI, concerned with the ability of computers to interpret text and spoken words in much the same way humans can. Check out our competently designed NLP IT template, which gives a brief overview of current business problems such as spam emails and unstructured data, and of how NLP helps eliminate them. In this PowerPoint presentation, we have covered an overview of natural language processing, including its various approaches, techniques, and tools, and how it works. In addition, this template covers the components, phases, and architecture of NLP, along with its challenges and the reasons computers find natural language difficult. Furthermore, it explains how NLP relates to other technologies such as log mining and text mining, and the differences between classical and deep learning-based NLP. Moreover, this PPT covers the implementation of NLP in various sectors such as business, healthcare, and web mining. Lastly, this deck includes the impact of NLP implementation on business, a 30-60-90 day plan for NLP implementation, and a roadmap. Download this 100 percent editable template and customize it to your needs now.

- Google Slides is a free presentation program from Google.

- All our content is 100% compatible with Google Slides.

- Just download our designs and upload them to Google Slides; they will work automatically.


- The widescreen aspect ratio is becoming a very popular format. When you download this product, the ZIP file will contain it in both standard and widescreen formats.

- Some of our older products may only be available in standard format, but they can easily be converted to widescreen.

- To do this, open the SlideTeam product in PowerPoint and go to Design (on the top bar) -> Page Setup, then select "On-screen Show (16:9)" in the "Slides Sized for" drop-down.

- The slide or theme will change to widescreen, and all graphics will adjust automatically. You can similarly convert our content to any other screen aspect ratio.


PowerPoint presentation slides

Deliver an informational PPT on various topics by using this Natural Language Processing IT PowerPoint Presentation. This deck focuses on and implements best industry practices, providing a bird's-eye view of the topic. Comprising seventy-nine slides designed with high-quality visuals and graphics, this deck is a complete package to use and download. All the slides in this deck can be altered in innumerable ways, making you a pro at delivering and educating. You can modify the color of the graphics, the background, or anything else according to your needs and requirements. Its adaptable layout suits every business vertical.


Content of this Powerpoint Presentation

Slide 1: This slide introduces Natural Language Processing (IT). State your company name and begin.

Slide 2: This is an Agenda slide. State your agendas here.

Slide 3: This slide presents the Table of Contents for the presentation.

Slide 4: This slide shows the Table of Contents for the presentation.

Slide 5: This slide displays the Table of Contents, highlighting Current Problems Faced by the Company.

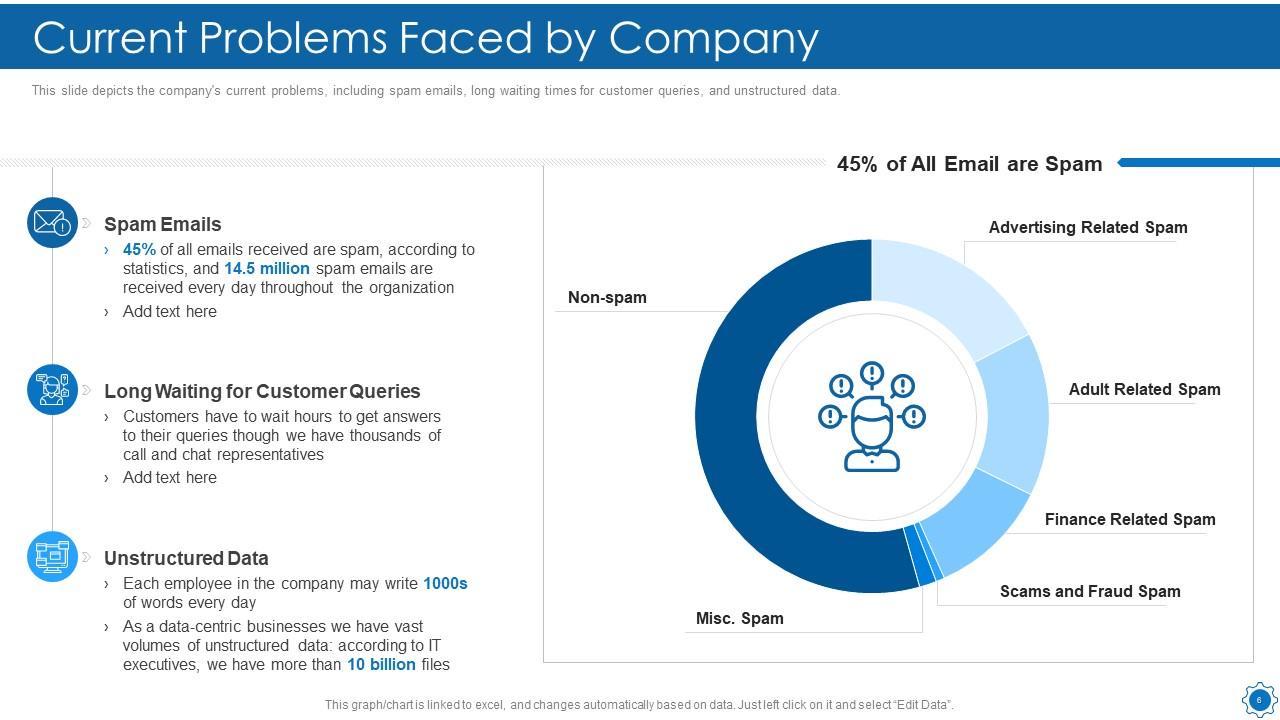

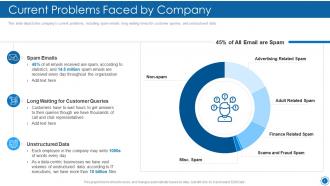

Slide 6: This slide depicts the company's current problems, including spam emails, long waiting times for customer queries, etc.

Slide 7: This slide shows the Table of Contents for the presentation.

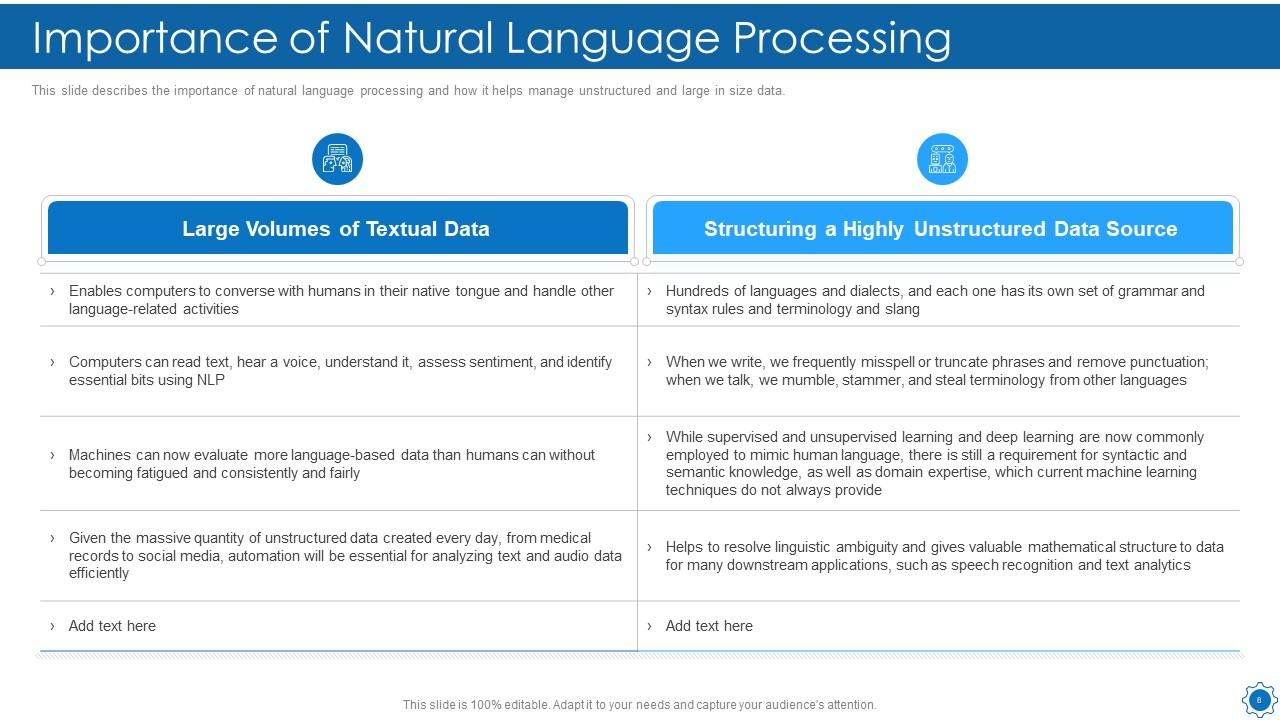

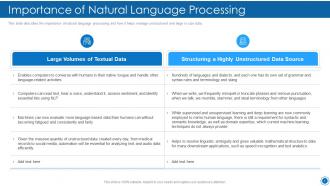

Slide 8: This slide presents the importance of natural language processing and how it helps manage large volumes of unstructured data.

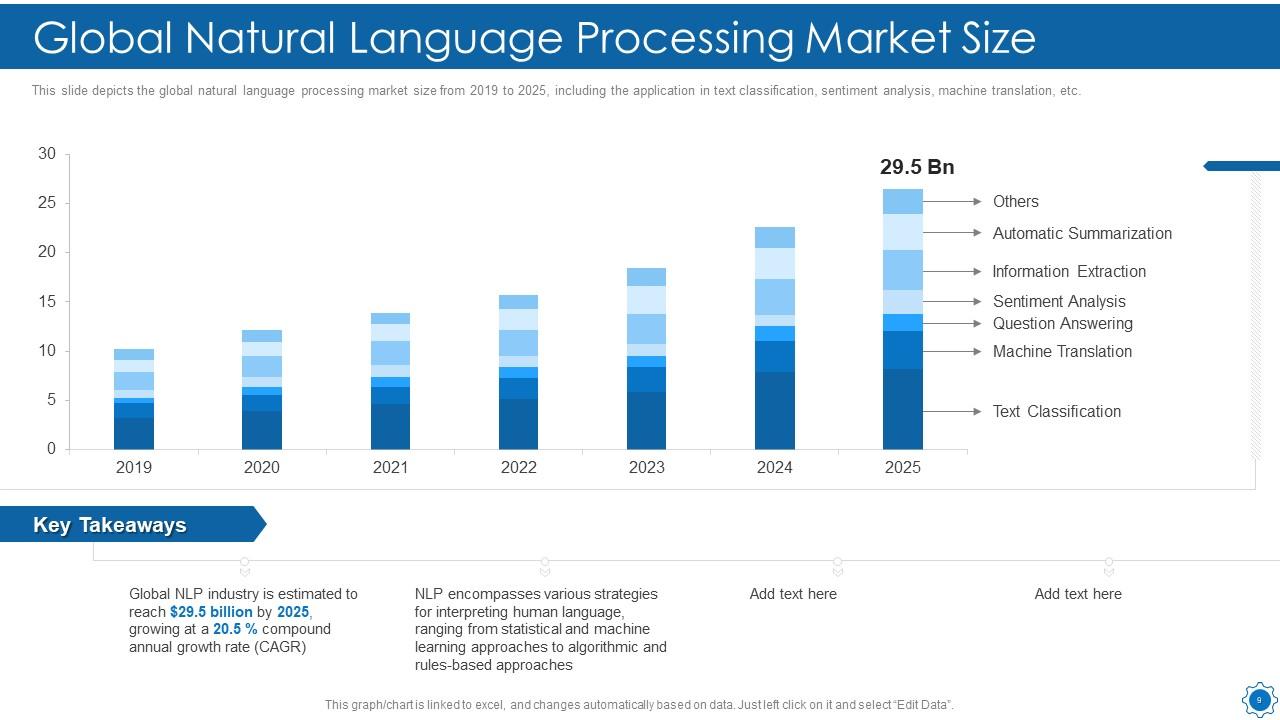

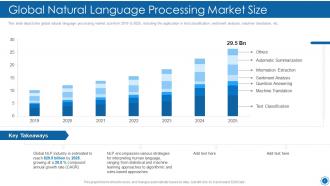

Slide 9: This slide shows Global Natural Language Processing Market Size.

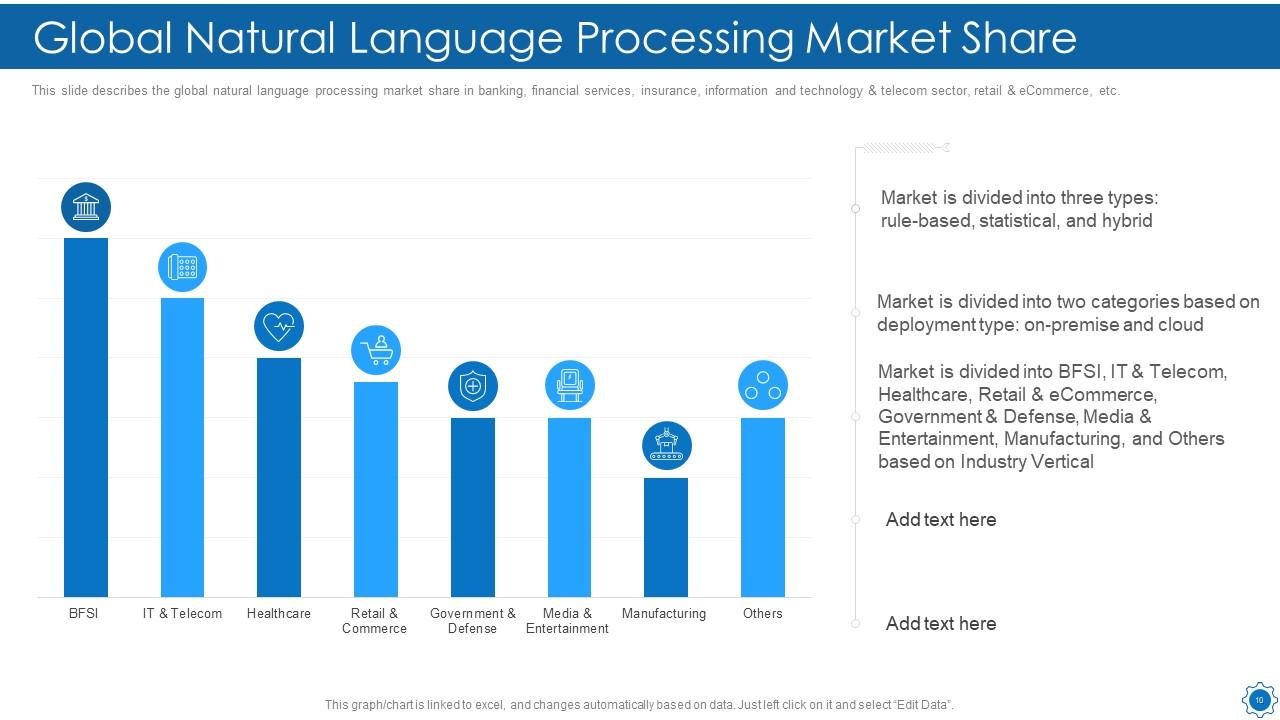

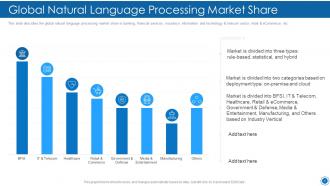

Slide 10: This slide displays Global Natural Language Processing Market Share.

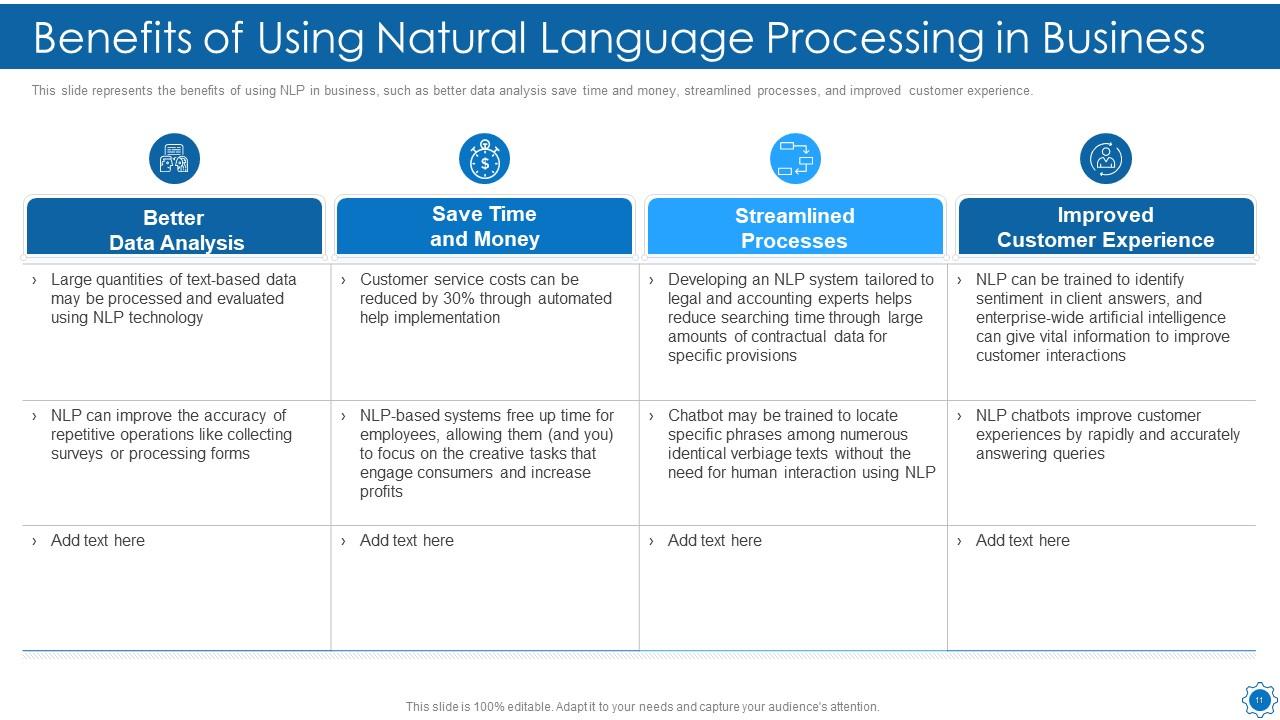

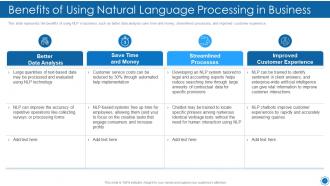

Slide 11: This slide shows Benefits of Using Natural Language Processing in Business.

Slide 12: This slide shows the Table of Contents for the presentation.

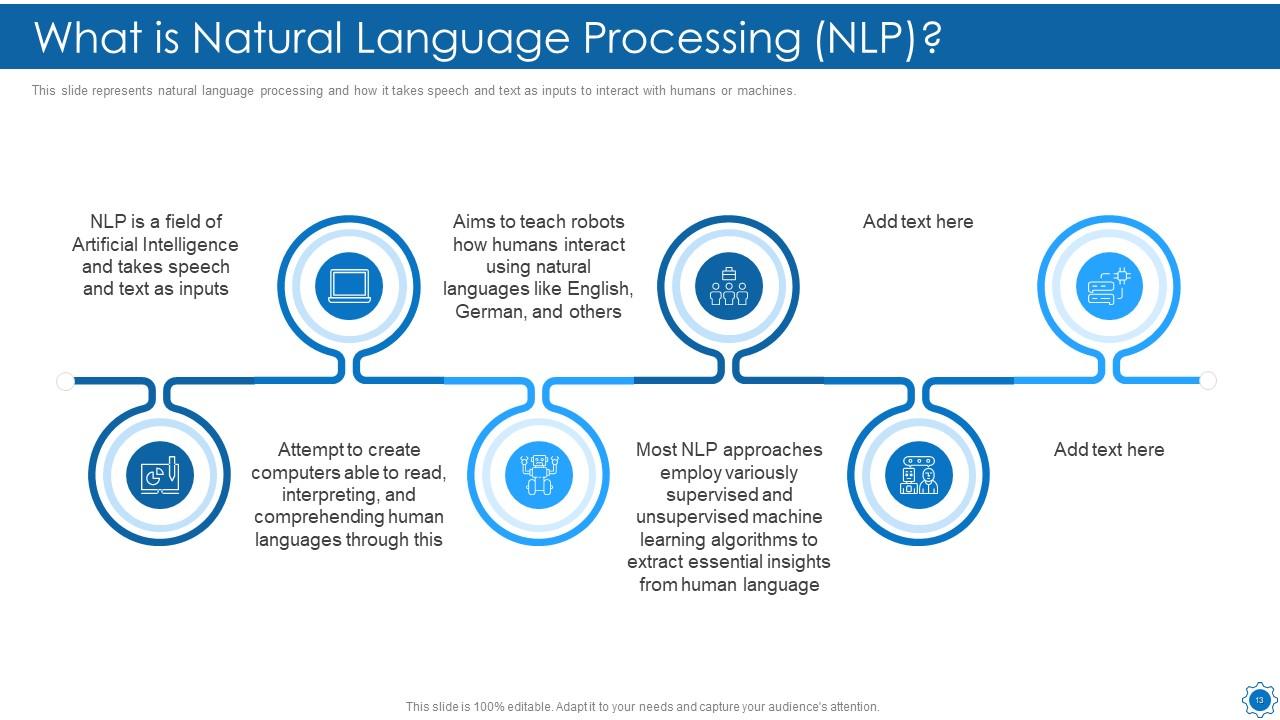

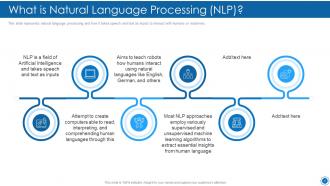

Slide 13: This slide represents natural language processing and how it takes speech and text as inputs.

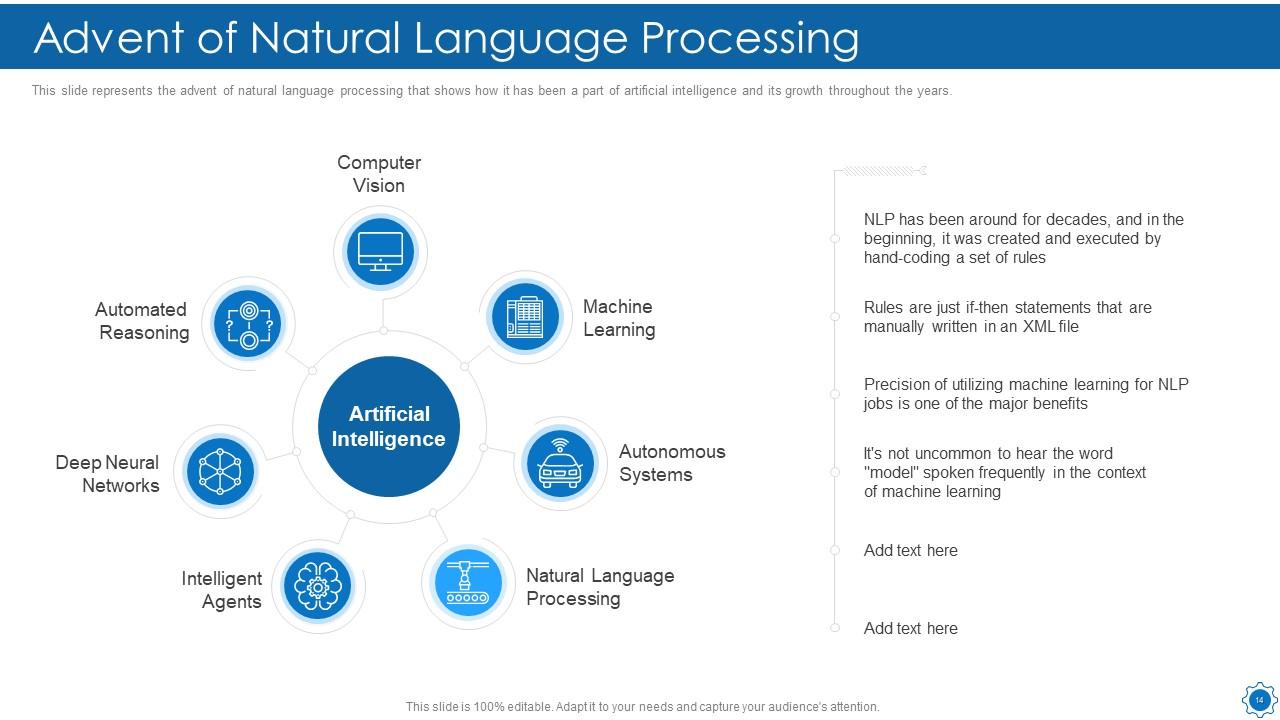

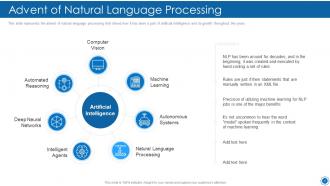

Slide 14: This slide shows Advent of Natural Language Processing.

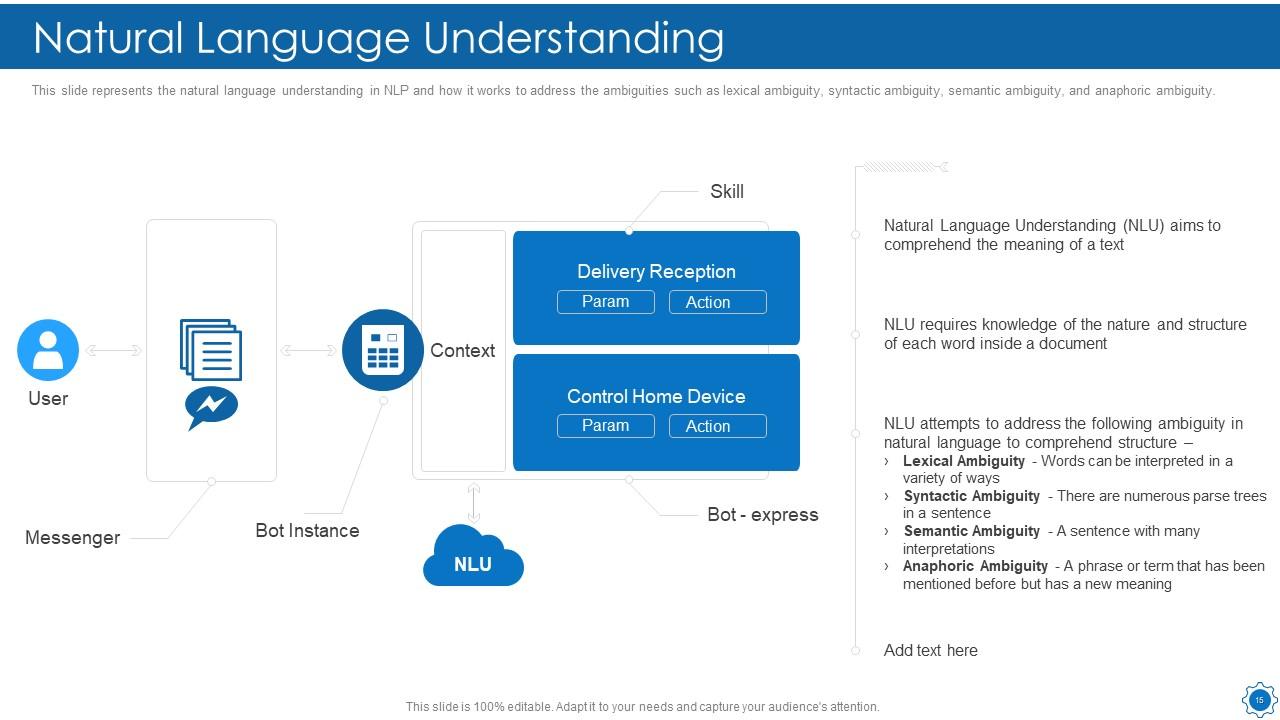

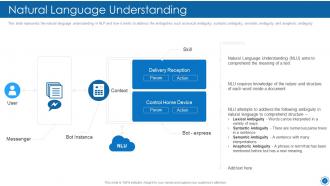

Slide 15: This slide displays natural language understanding (NLU) in NLP and how it works to resolve ambiguities.

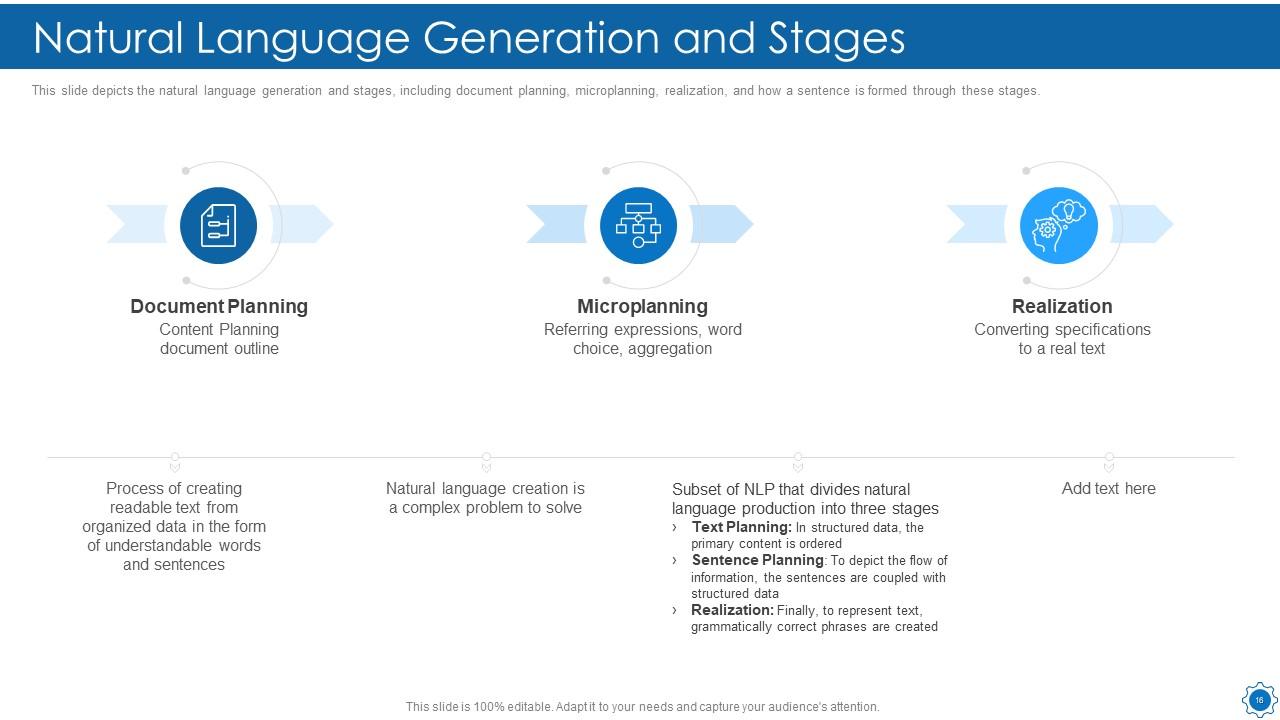

Slide 16: This slide depicts natural language generation and its stages, including document planning, microplanning, etc.

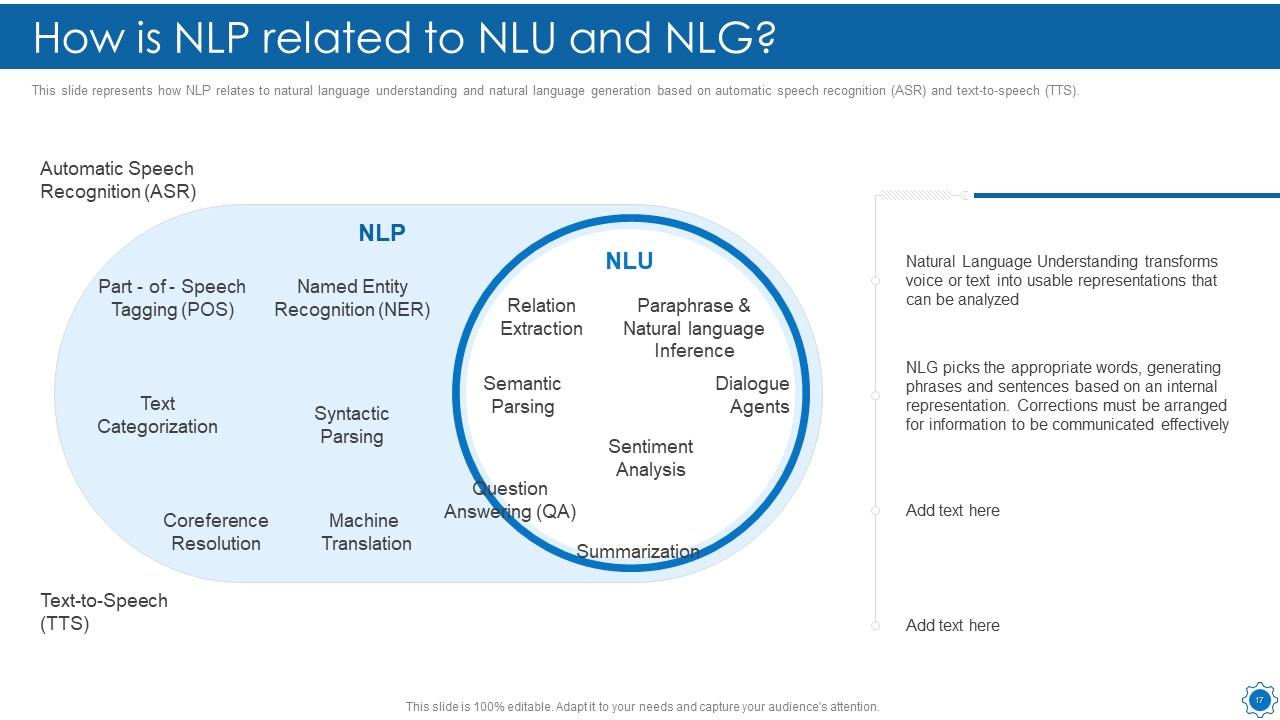

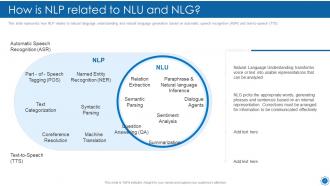

Slide 17: This slide represents how NLP relates to natural language understanding and natural language generation.

Slide 18: This slide presents the Table of Contents for the presentation.

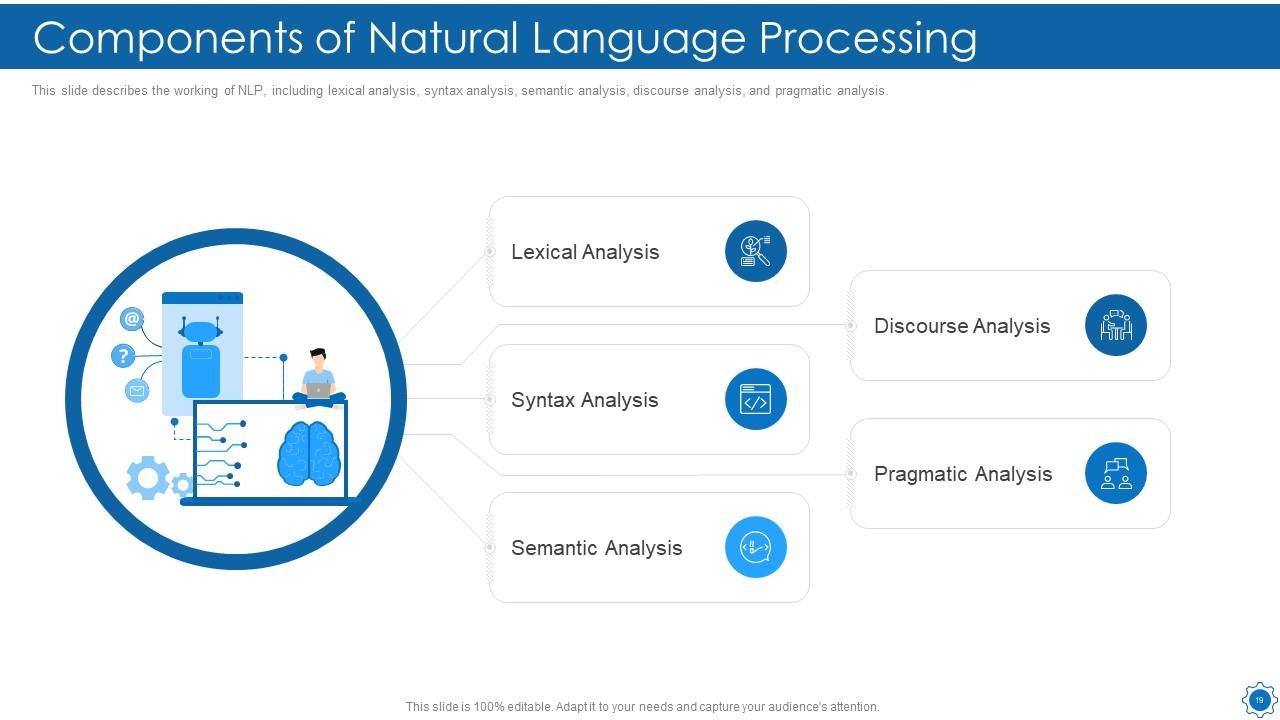

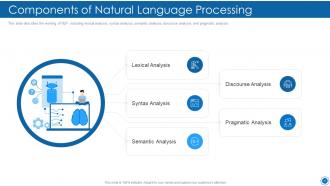

Slide 19: This slide shows Components of Natural Language Processing.

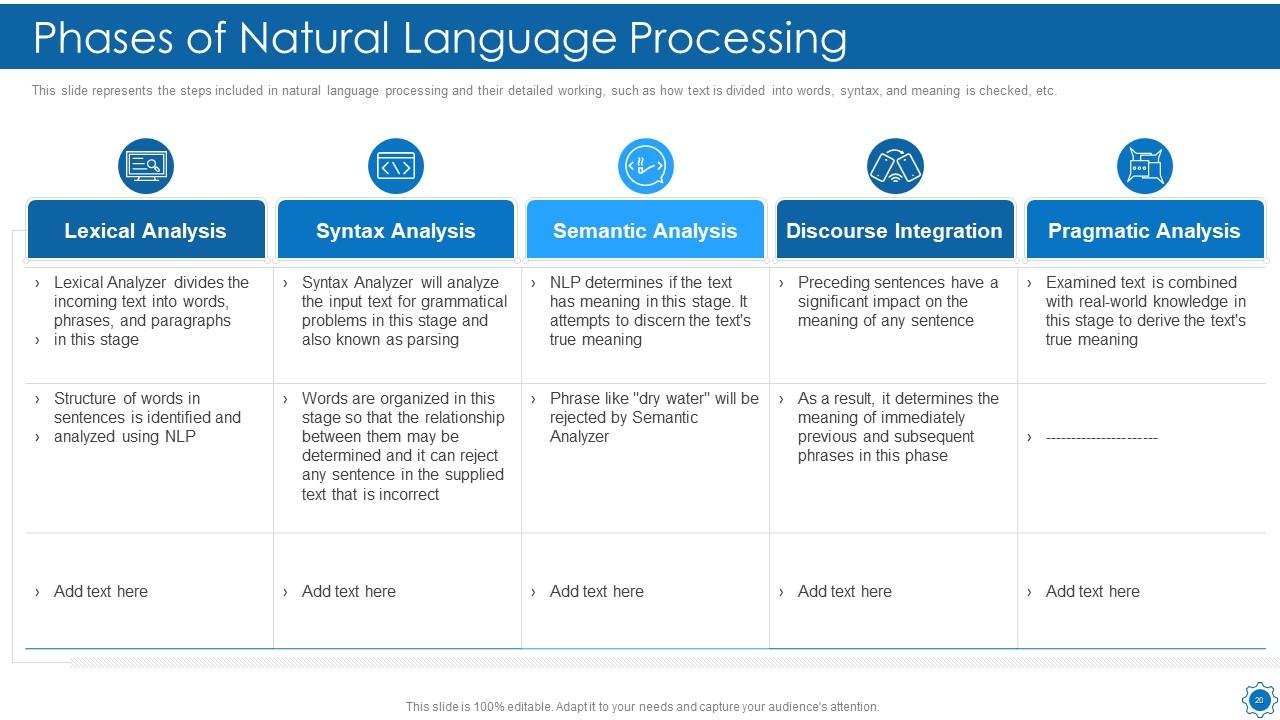

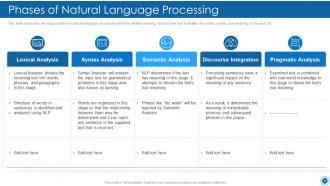

Slide 20: This slide displays the steps included in natural language processing and their detailed working.

Slide 21: This slide shows the Table of Contents for the presentation.

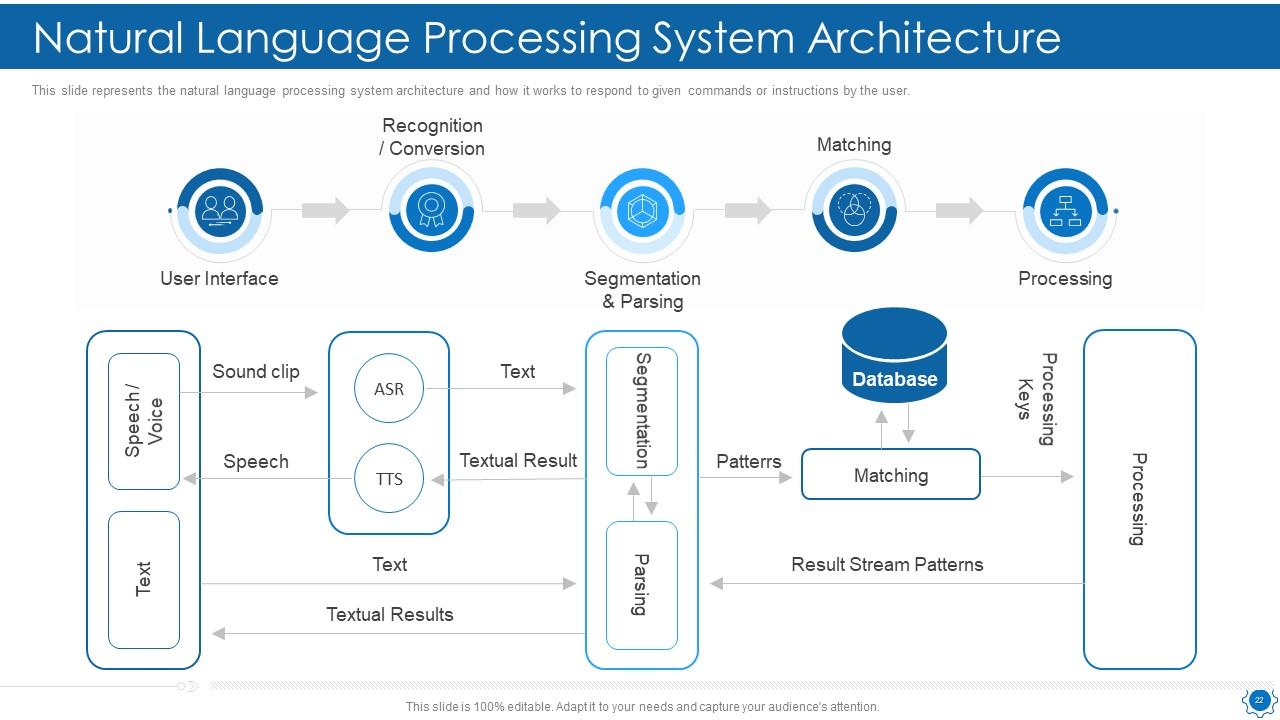

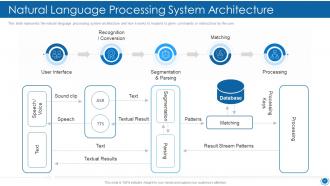

Slide 22: This slide shows Natural Language Processing System Architecture.

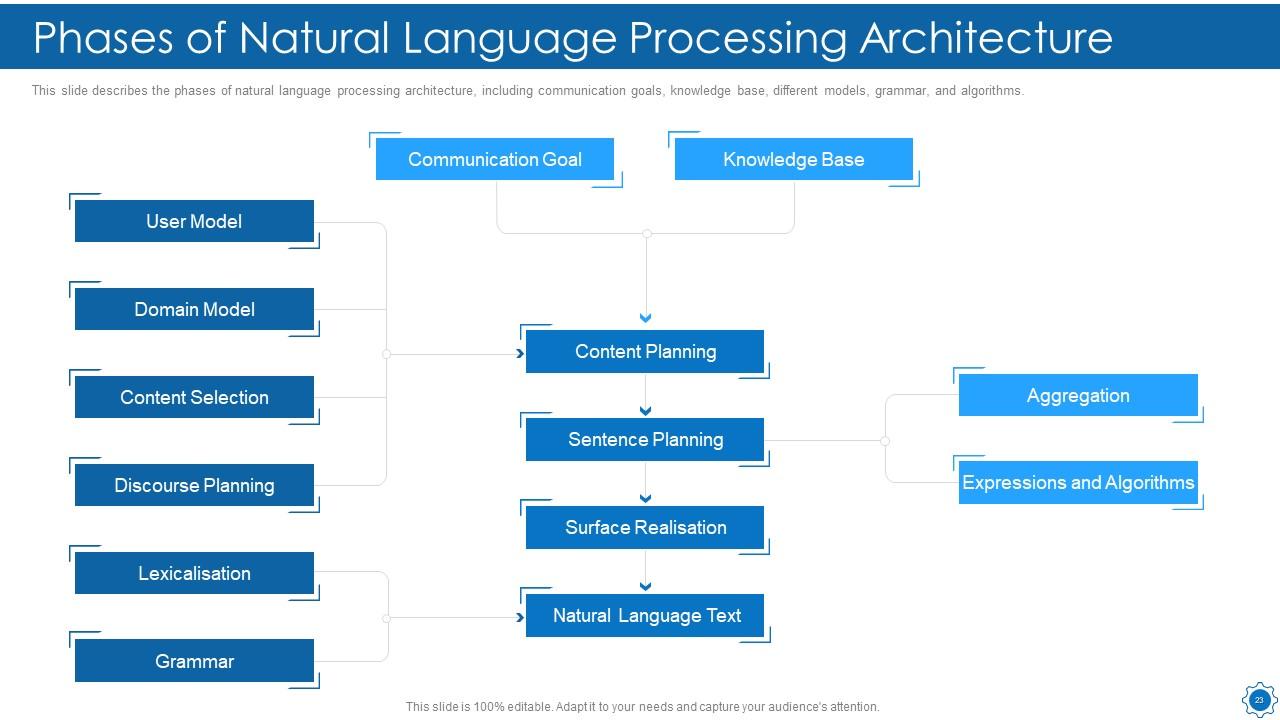

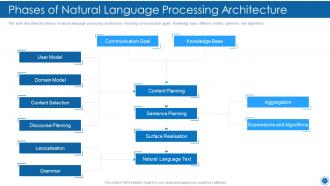

Slide 23: This slide presents Phases of Natural Language Processing Architecture.

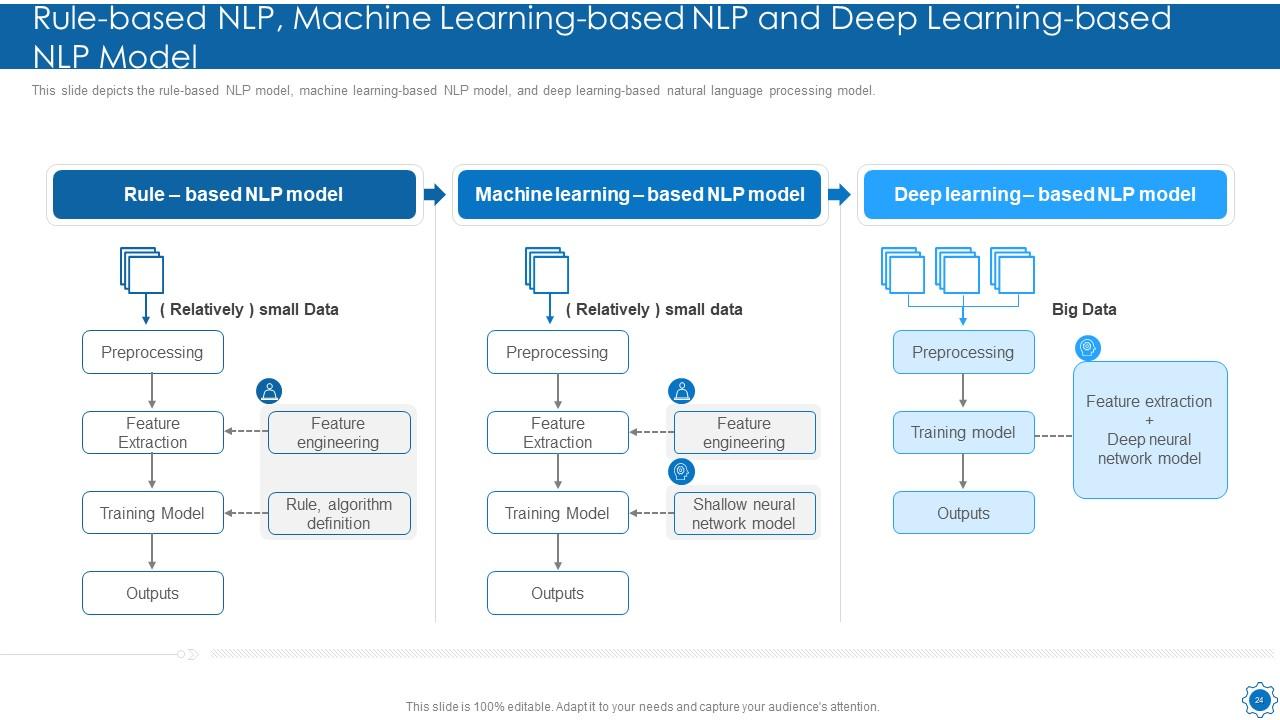

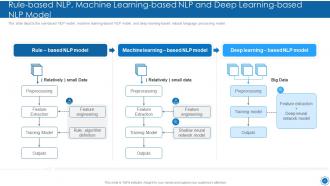

Slide 24: This slide shows Rule-based NLP, Machine Learning-based NLP and Deep Learning-based NLP Model.

Slide 25: This slide displays the Table of Contents for the presentation.

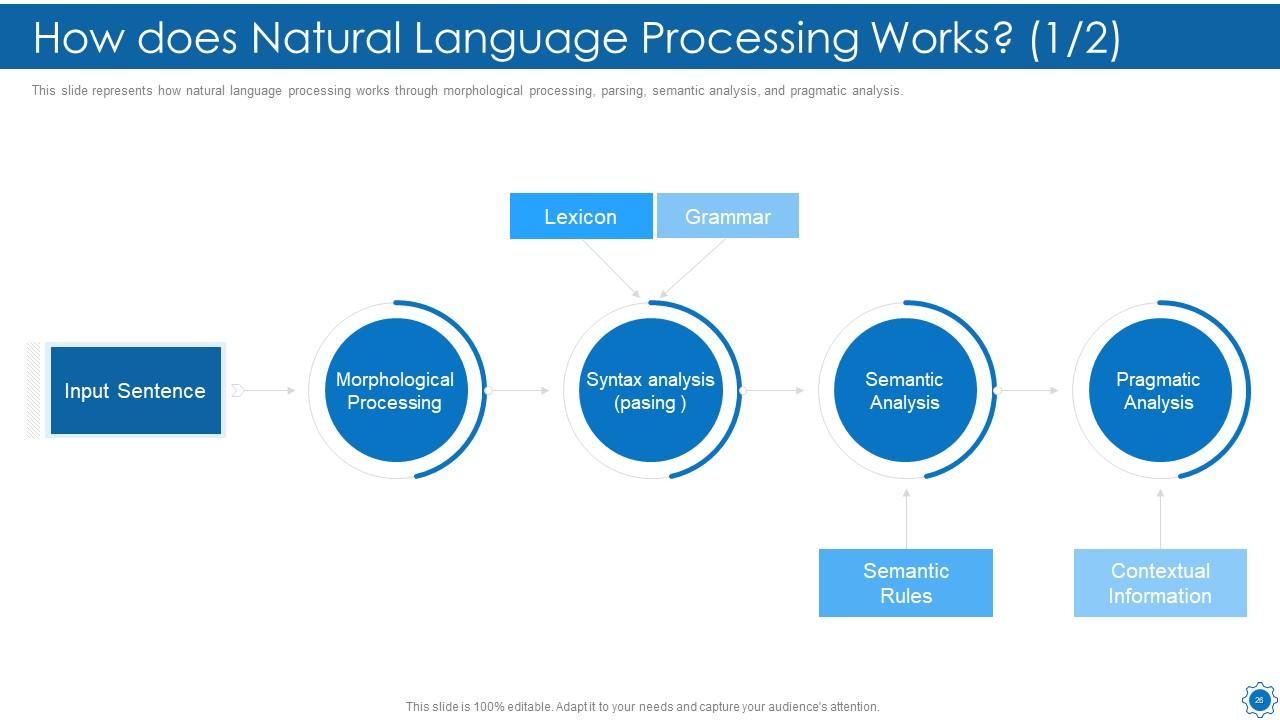

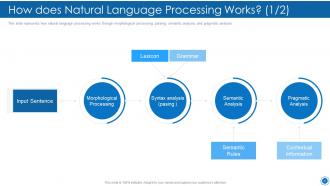

Slide 26: This slide represents how natural language processing works through morphological processing, parsing, semantic analysis, etc.

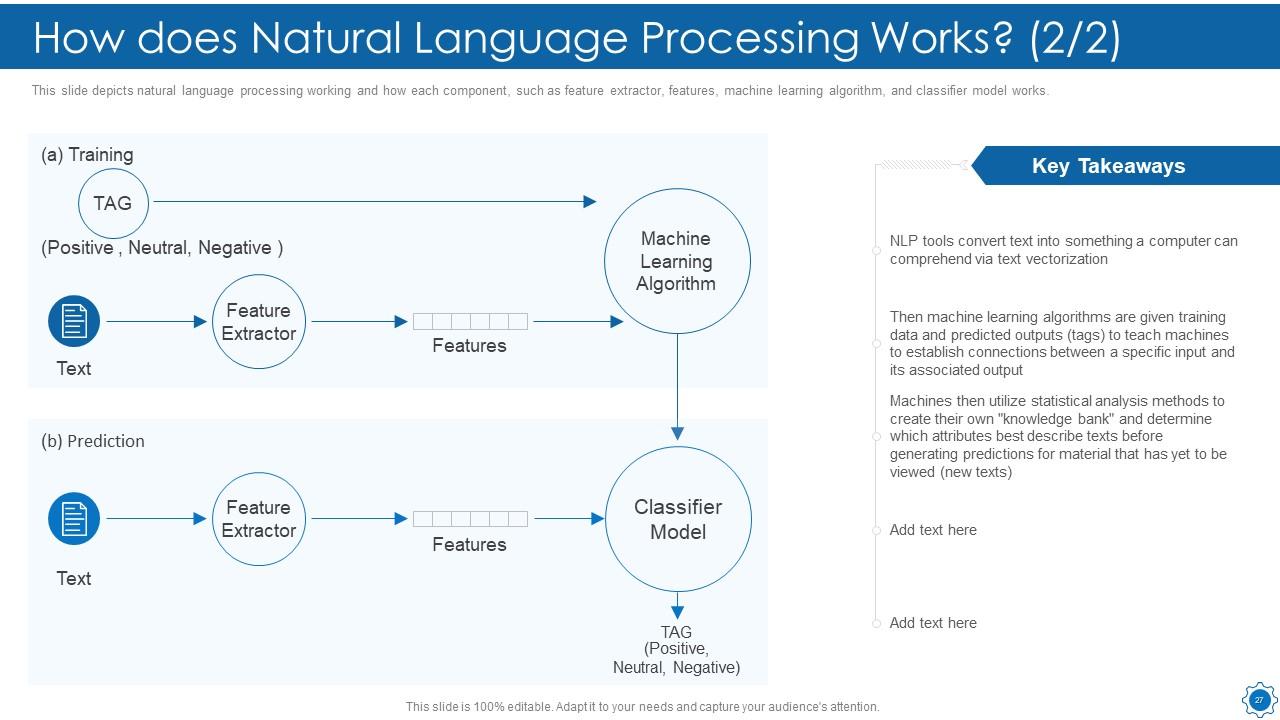

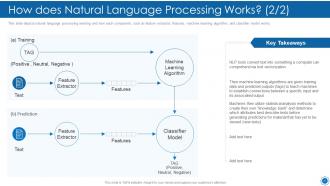

Slide 27: This slide shows How Does Natural Language Processing Work?

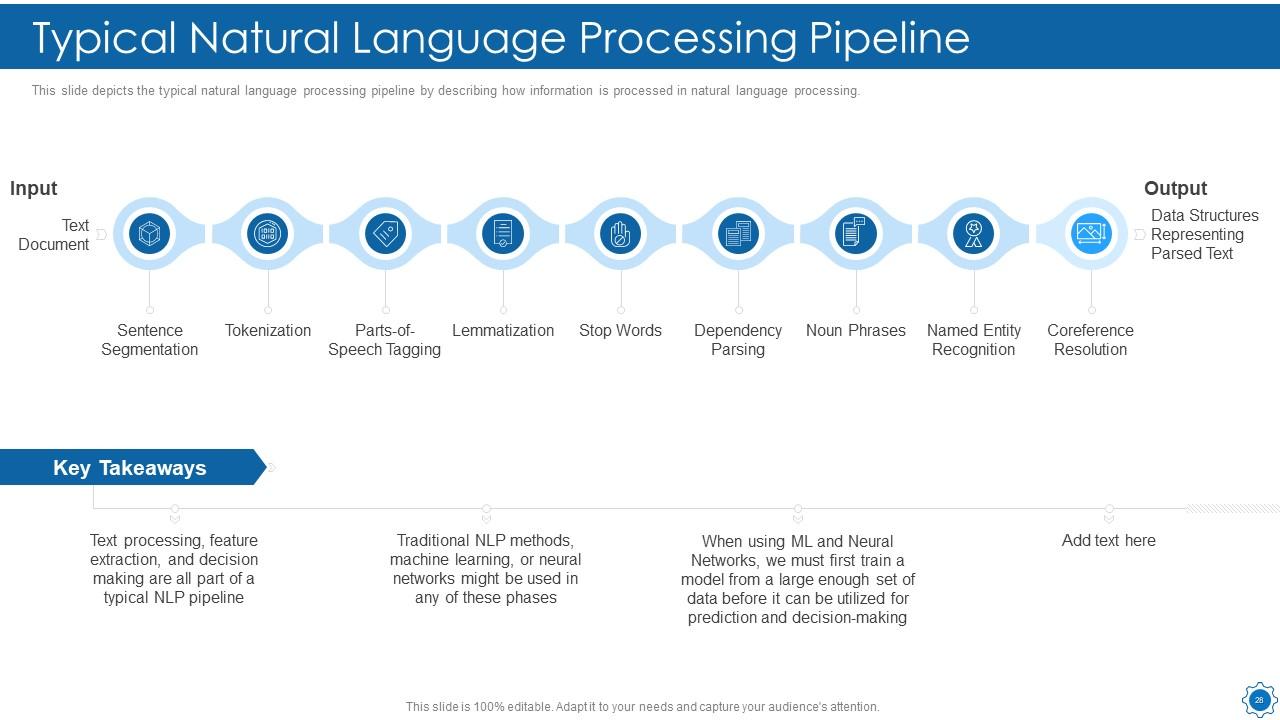

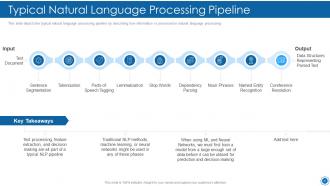

Slide 28: This slide depicts a typical natural language processing pipeline, describing how information is processed at each stage.

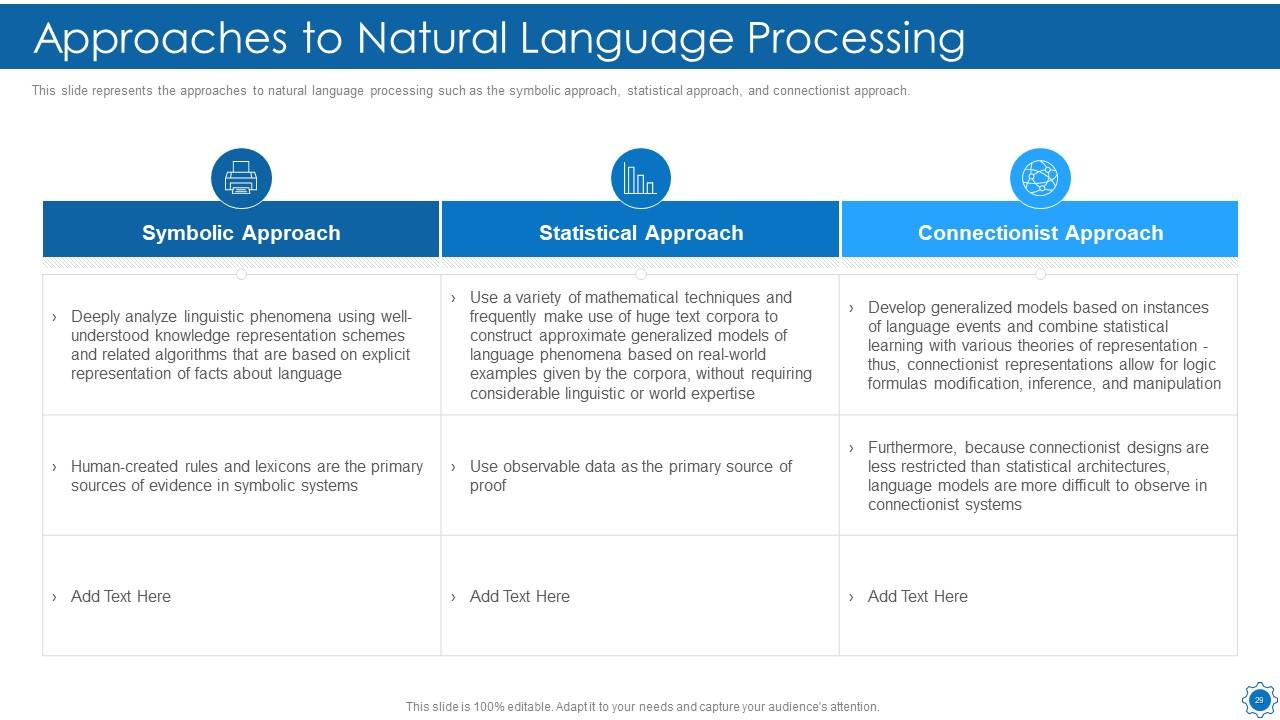

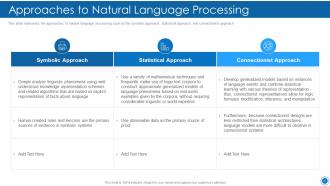

Slide 29: This slide shows Approaches to Natural Language Processing.

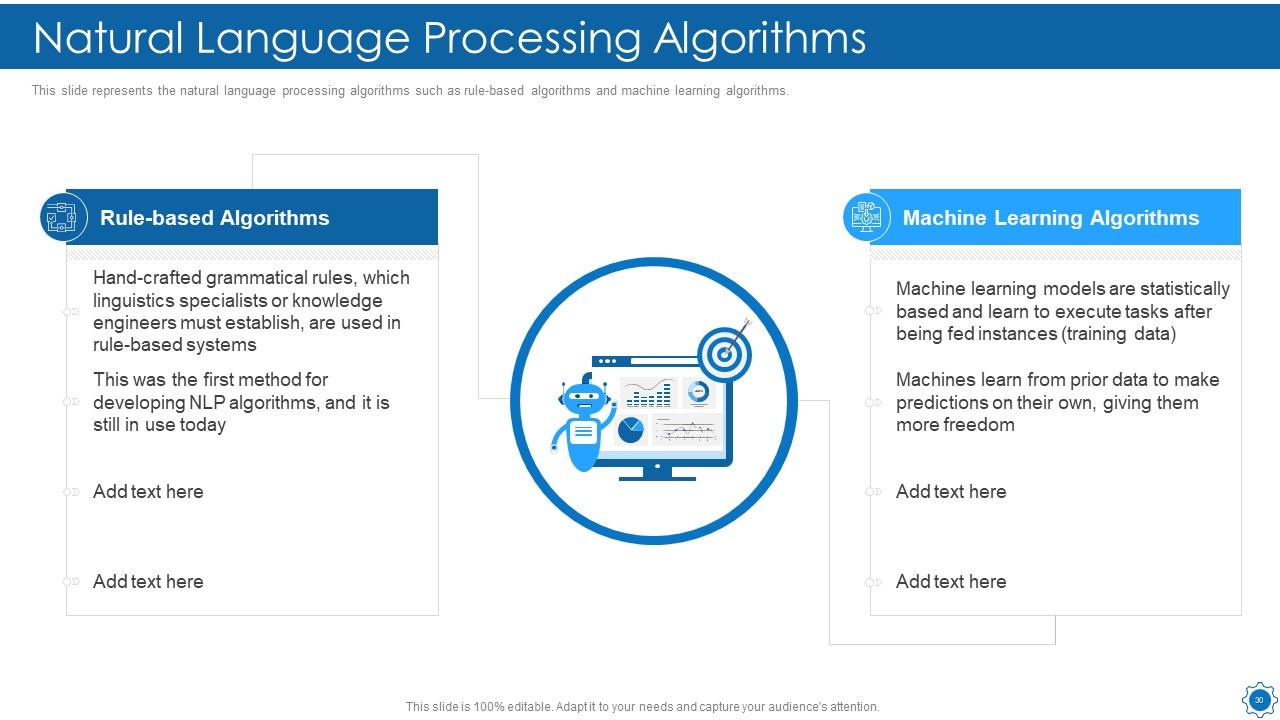

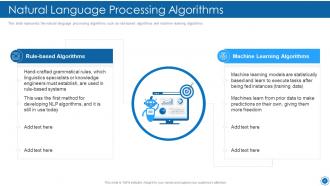

Slide 30: This slide displays the natural language processing algorithms such as rule-based algorithms and machine learning algorithms.

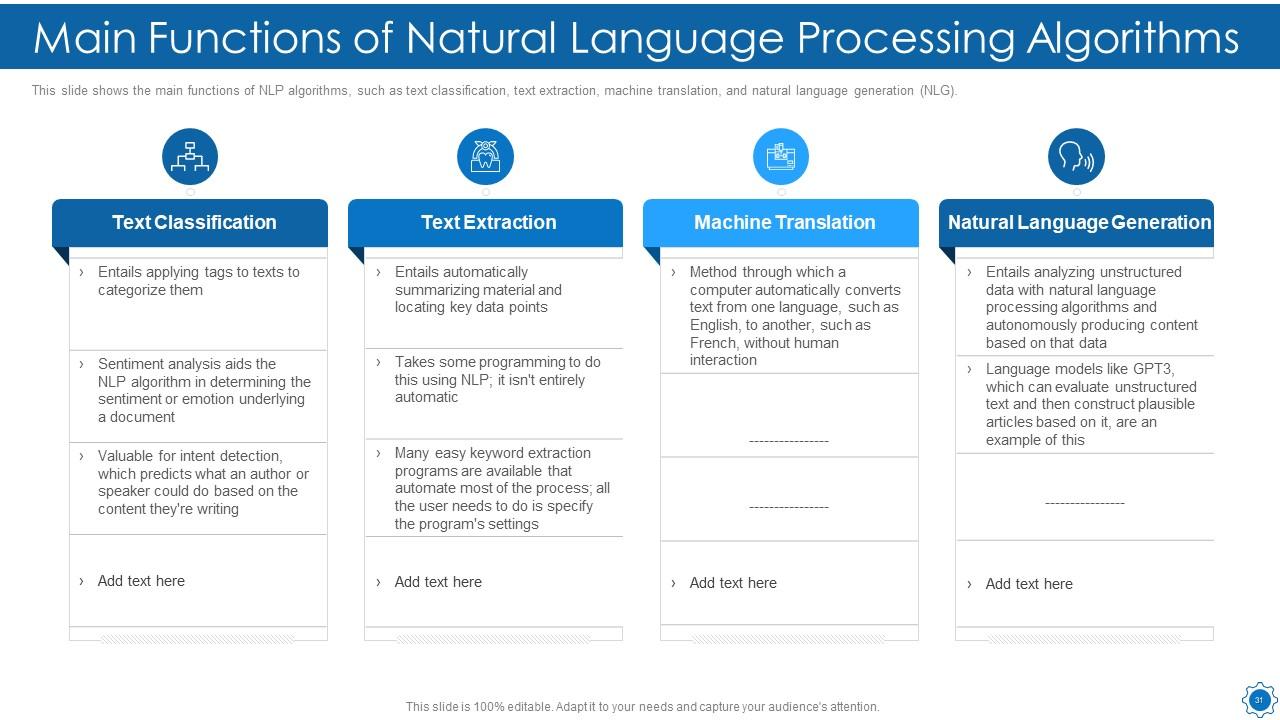

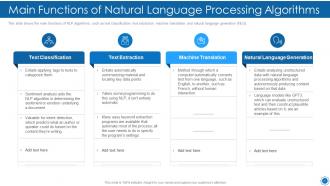

Slide 31: This slide shows the main functions of NLP algorithms, such as text classification, text extraction, etc.

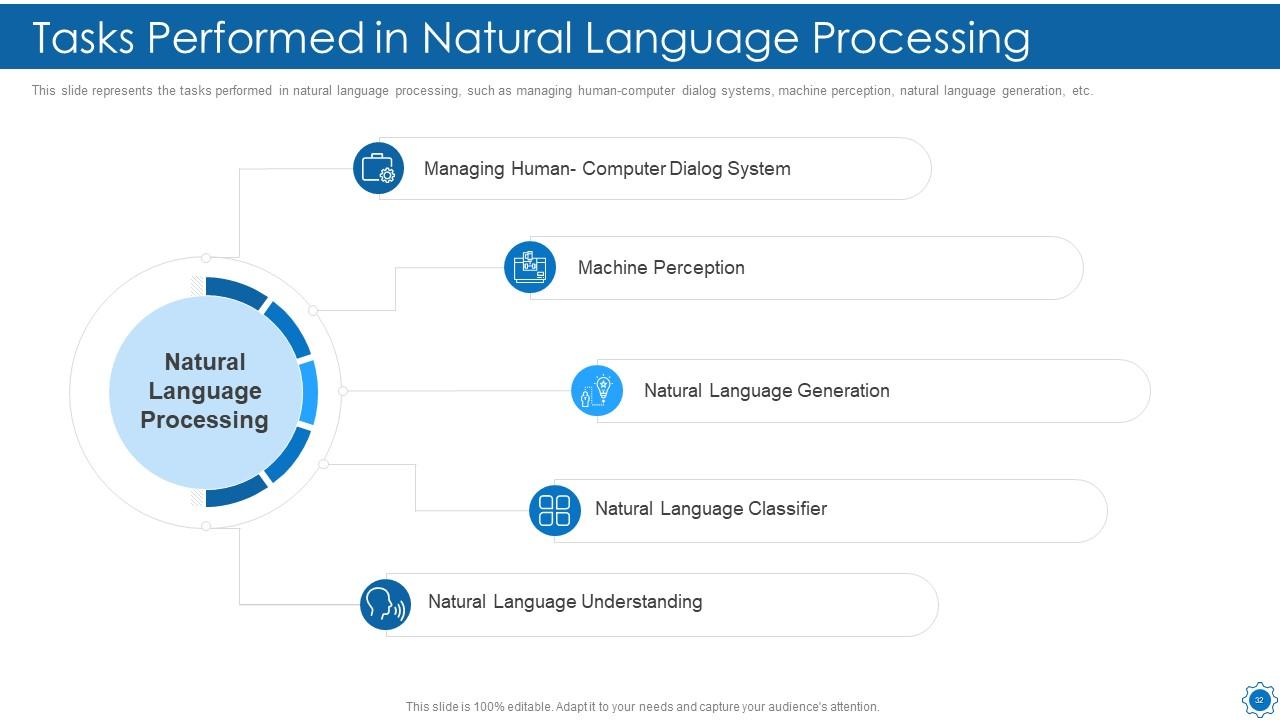

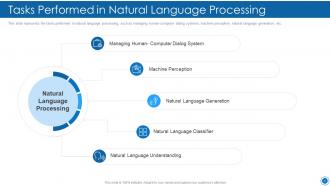

Slide 32: This slide shows Tasks Performed in Natural Language Processing.

Slide 33: This slide presents the Table of Contents for the presentation.

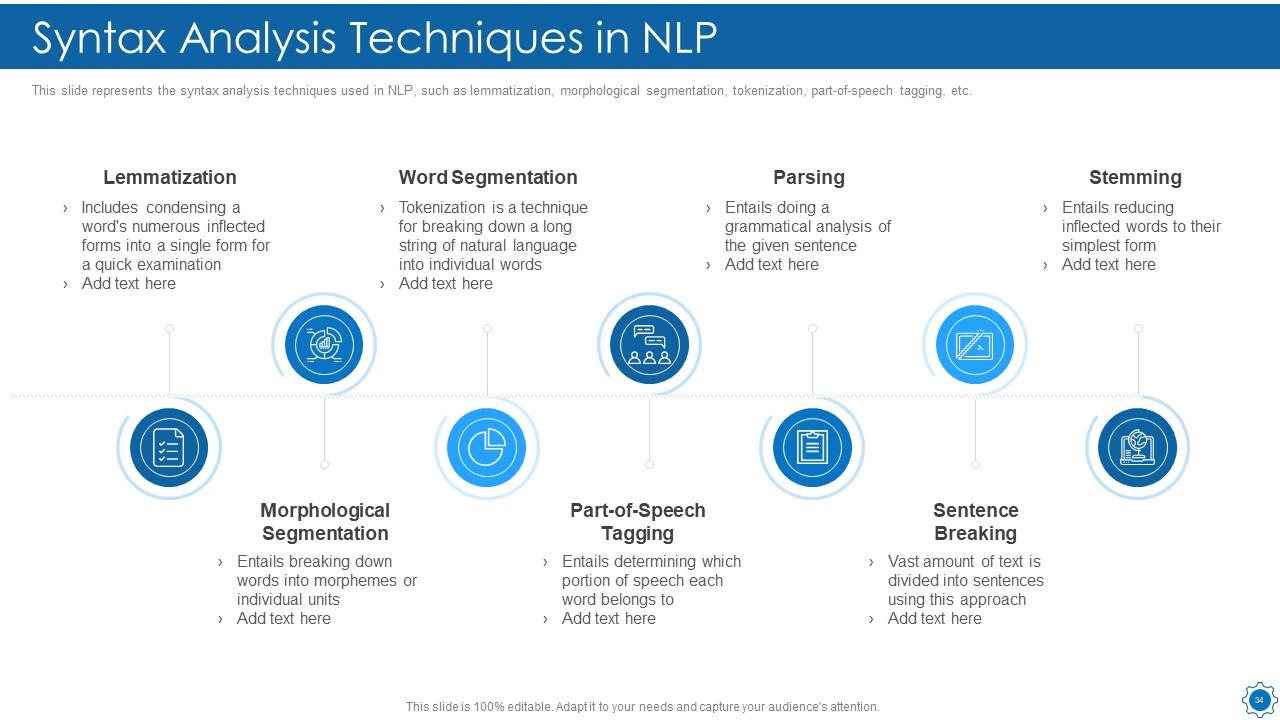

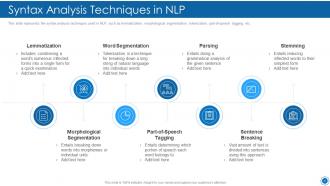

Slide 34: This slide displays the syntax analysis techniques used in NLP, such as lemmatization, morphological segmentation, etc.

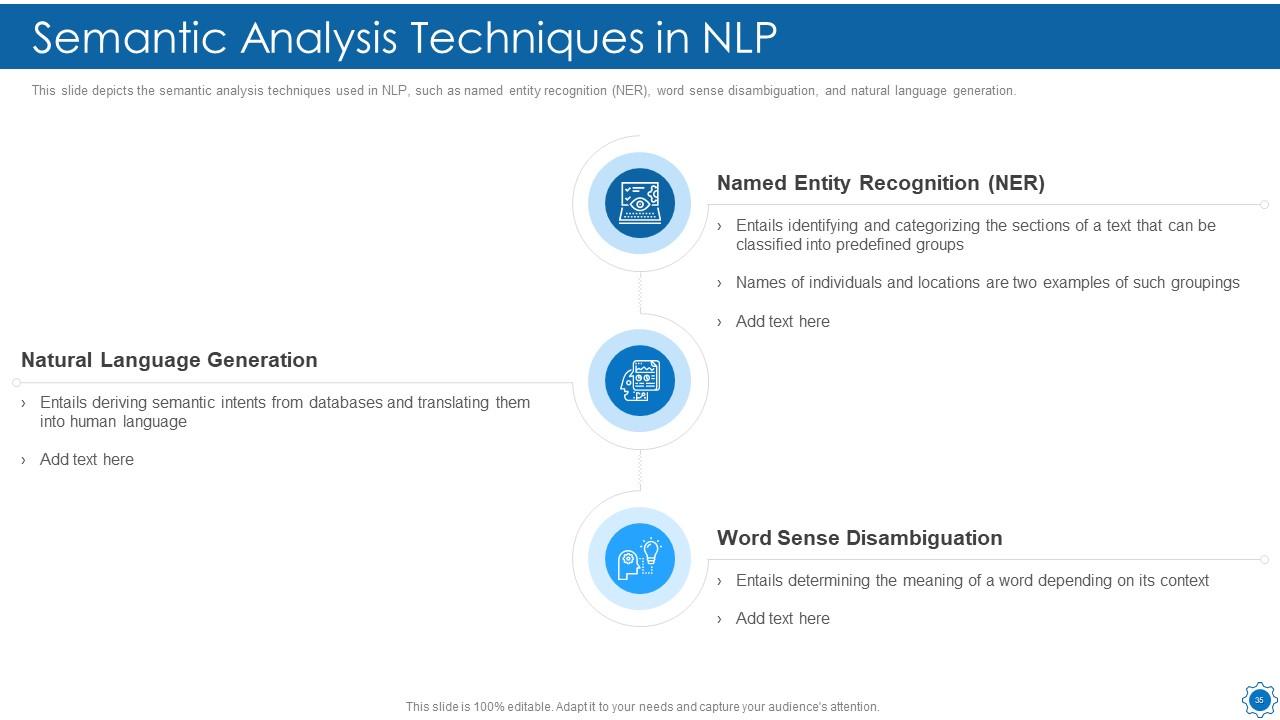

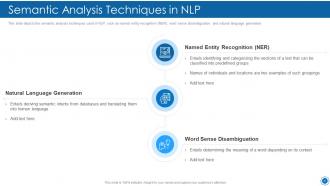

Slide 35: This slide depicts the semantic analysis techniques used in NLP, such as named entity recognition (NER), word sense disambiguation, etc.

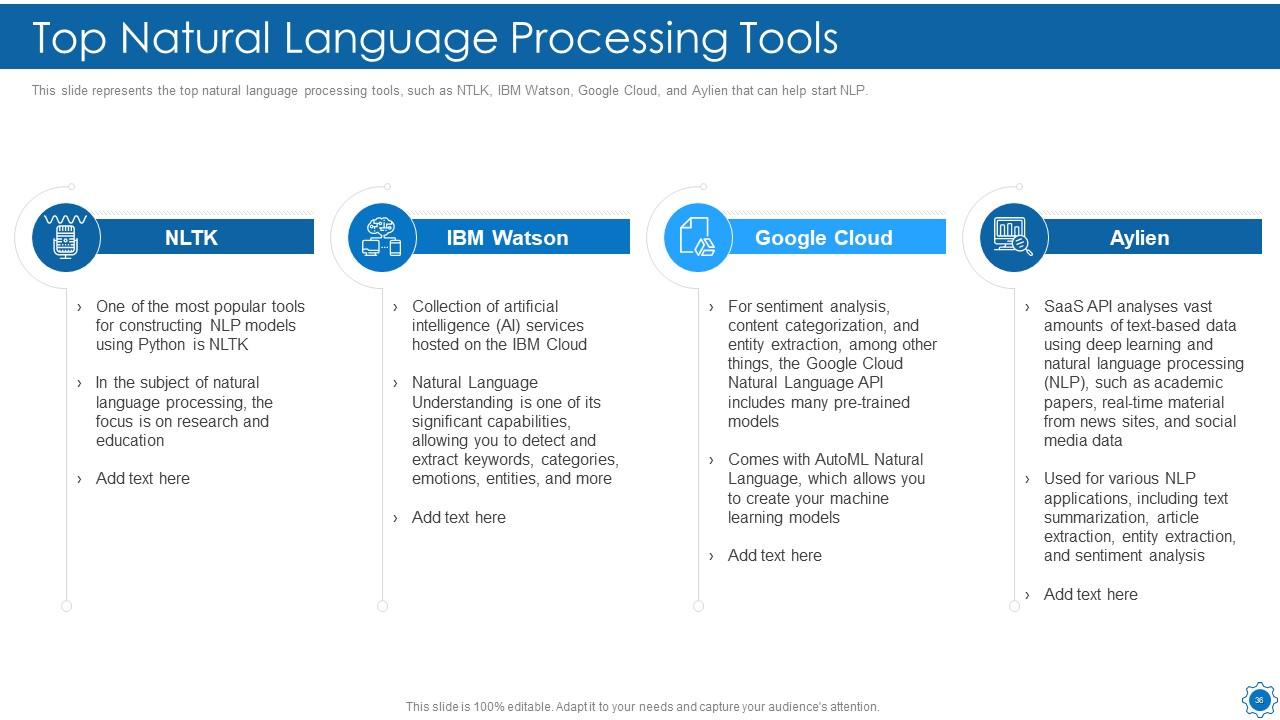

Slide 36: This slide represents the top natural language processing tools, such as NLTK, IBM Watson, etc.

Slide 37: This slide shows the Table of Contents for the presentation.

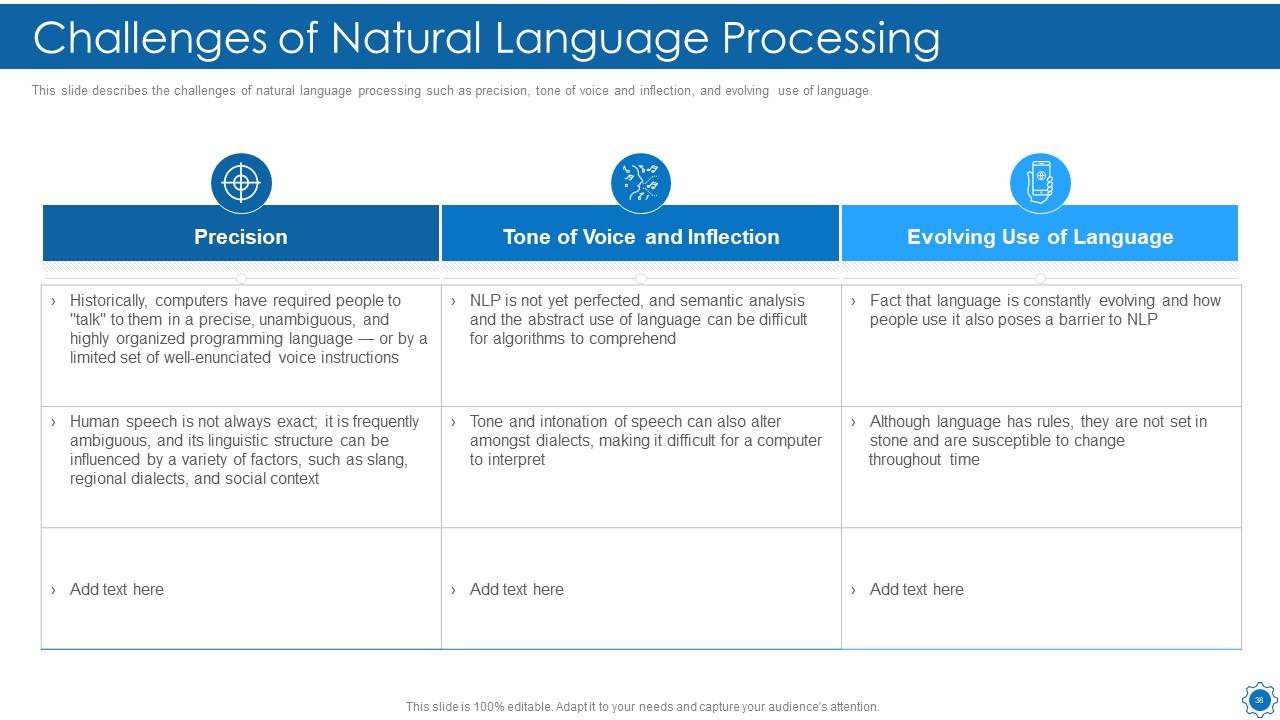

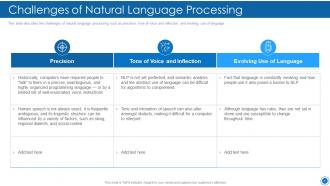

Slide 38: This slide describes the challenges of natural language processing such as precision, tone of voice and inflection, etc.

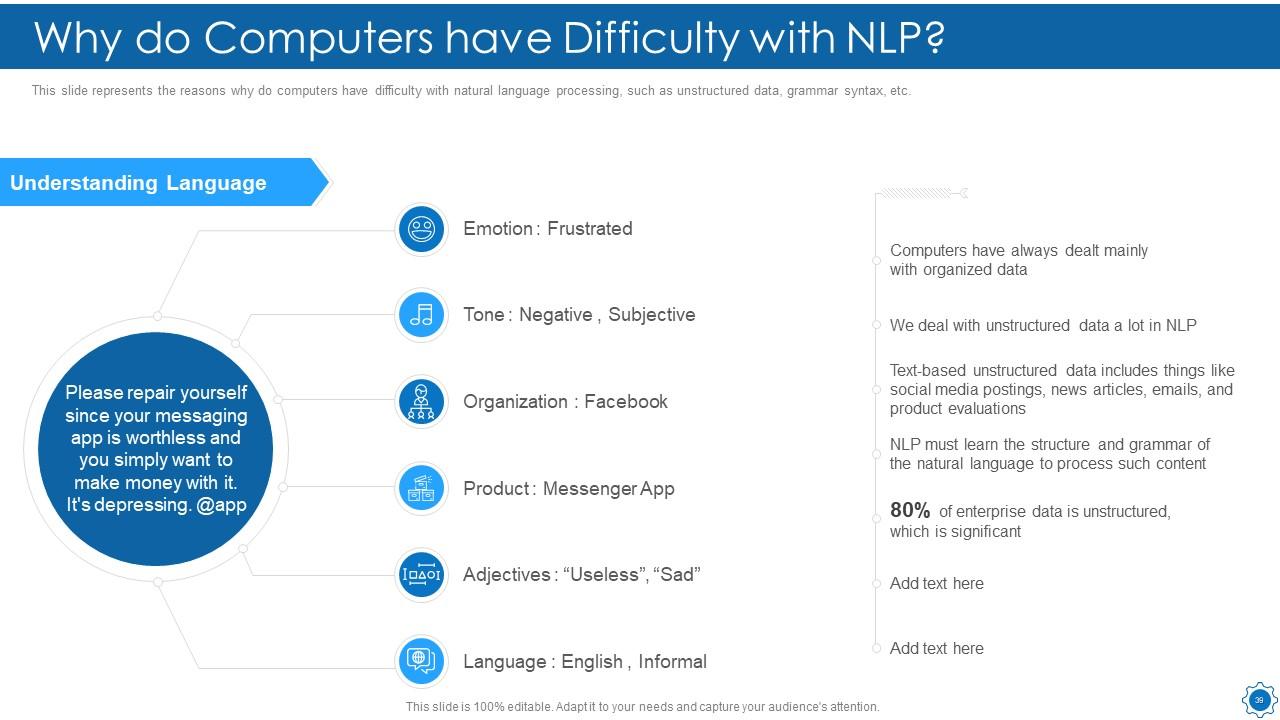

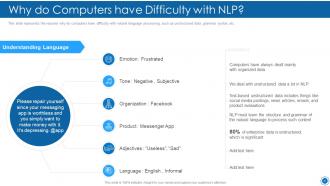

Slide 39: This slide represents the reasons why computers have difficulty with natural language processing.

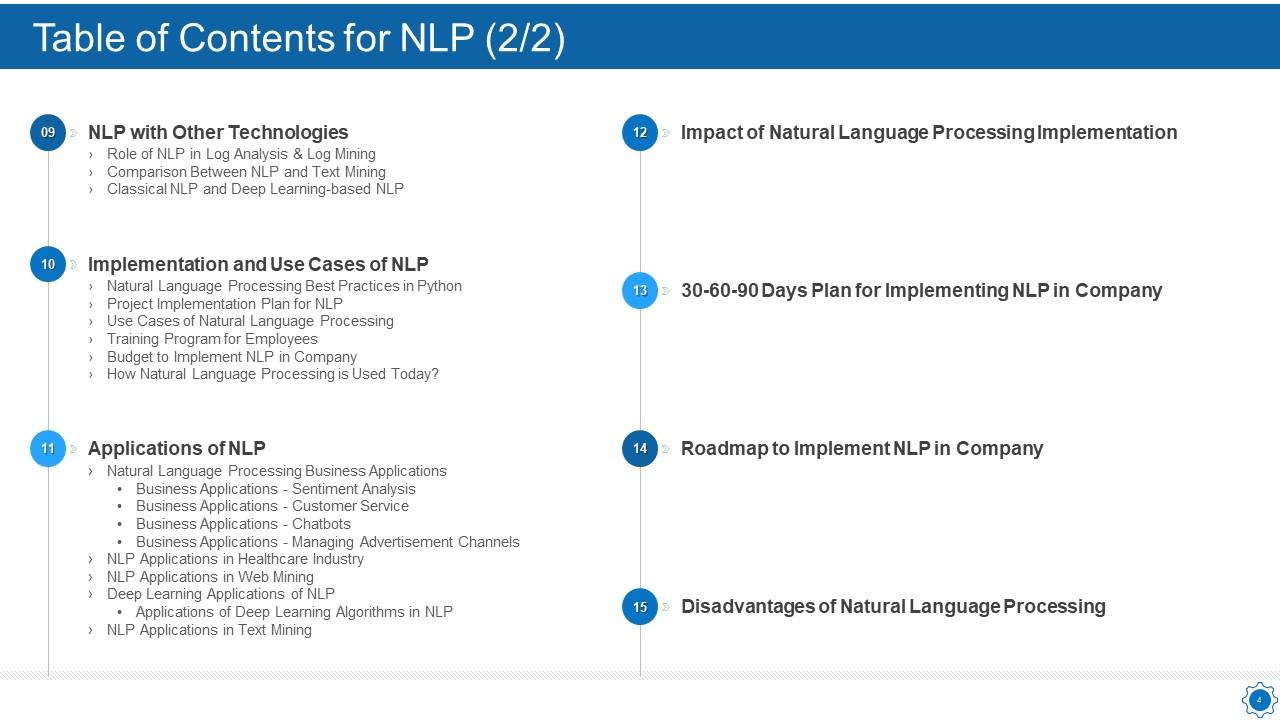

Slide 40: This slide displays the Table of Contents for the presentation.

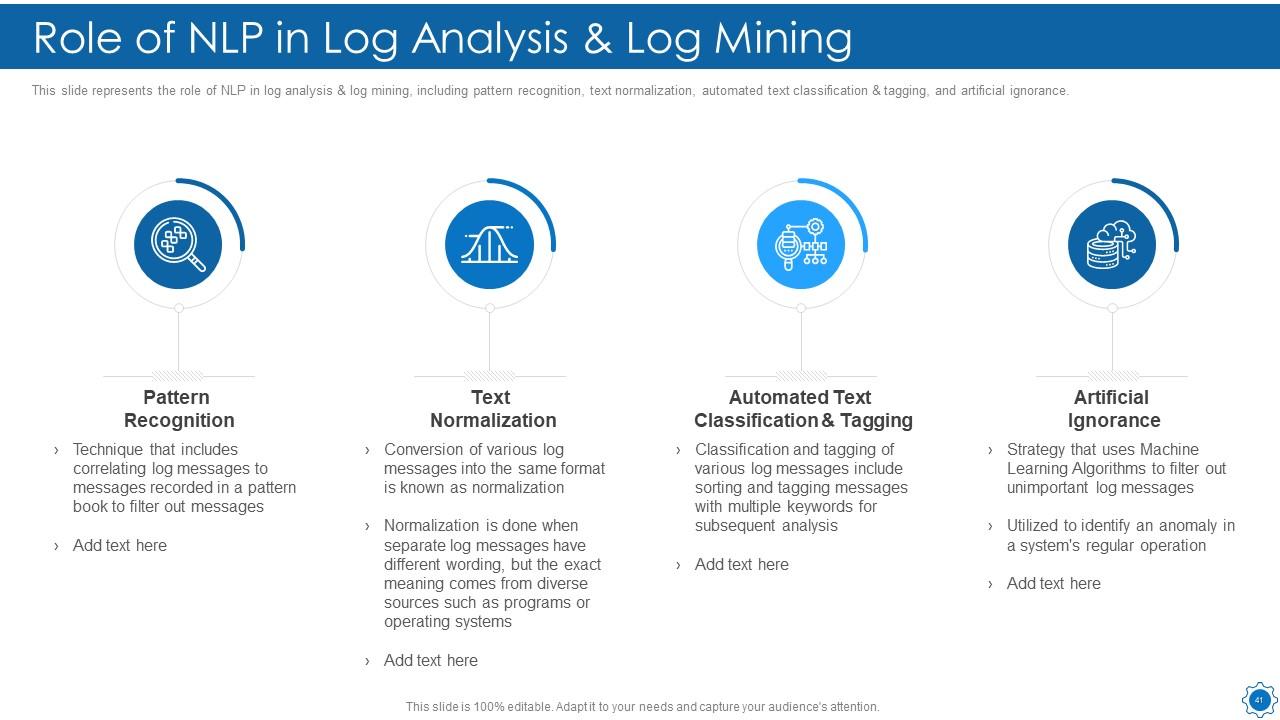

Slide 41: This slide represents the role of NLP in log analysis & log mining, including pattern recognition, text normalization, etc.

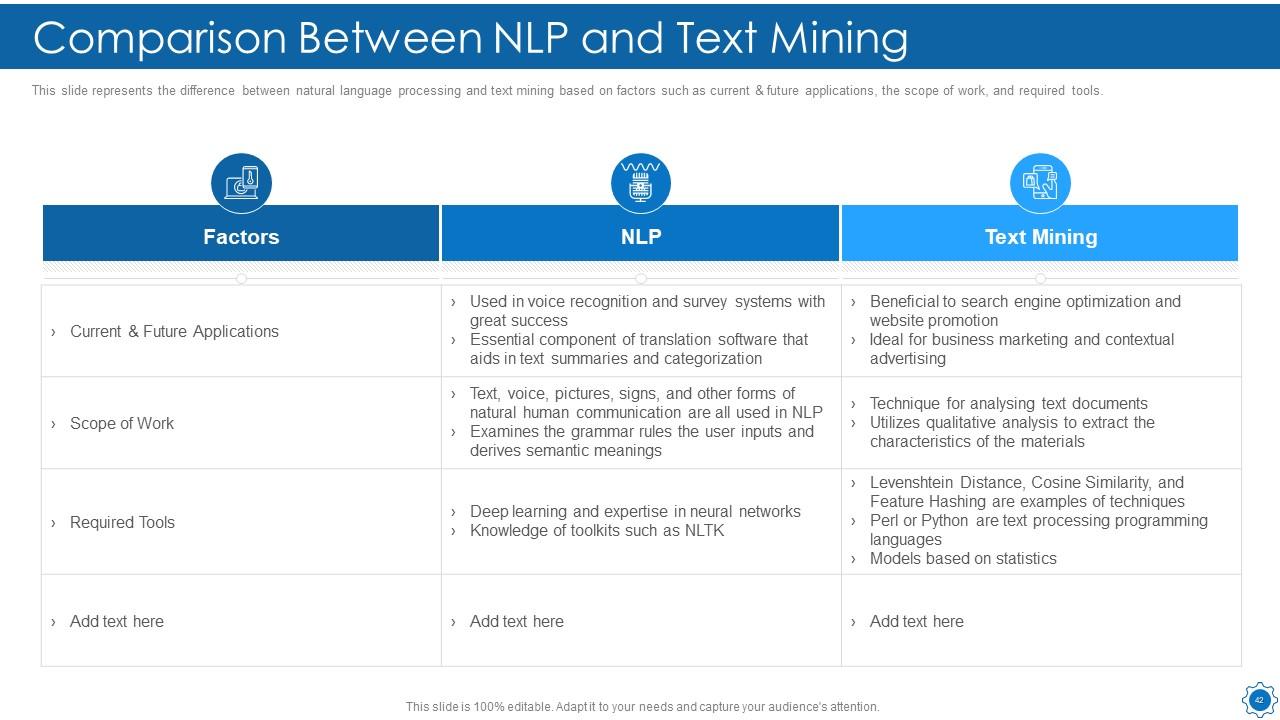

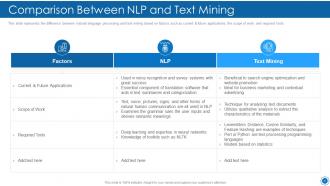

Slide 42: This slide shows Comparison Between NLP and Text Mining.

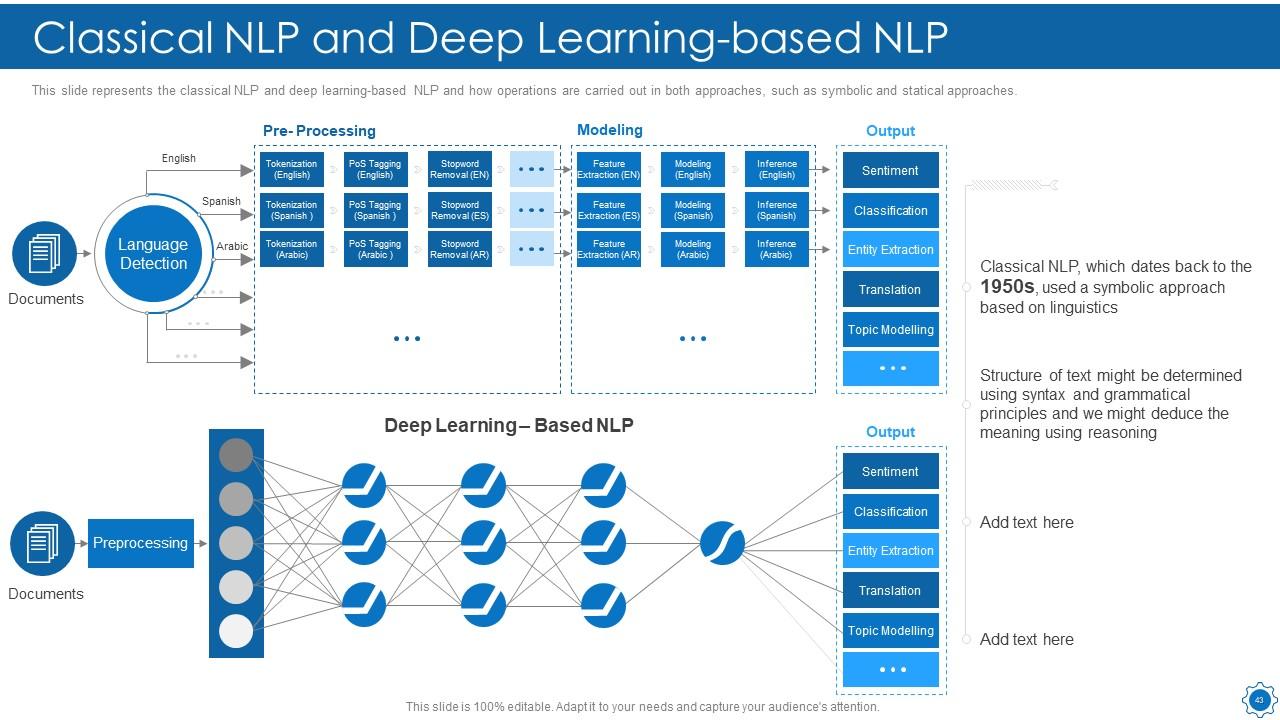

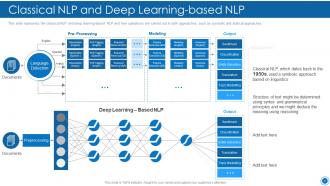

Slide 43: This slide represents the classical NLP and deep learning-based NLP and how operations are carried out in both approaches.

Slide 44: This slide shows the Table of Contents for the presentation.

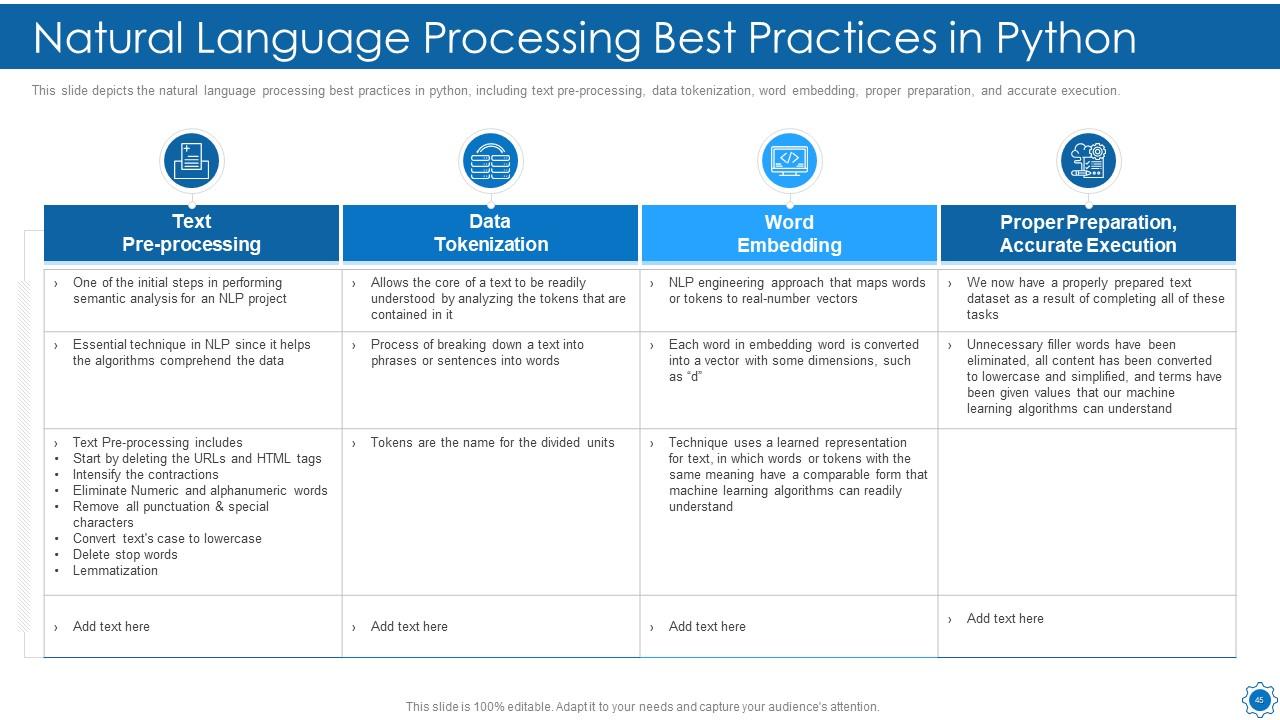

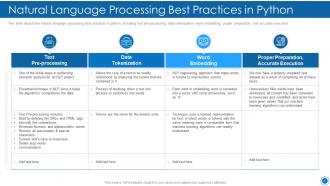

Slide 45: This slide displays Natural Language Processing Best Practices in Python.

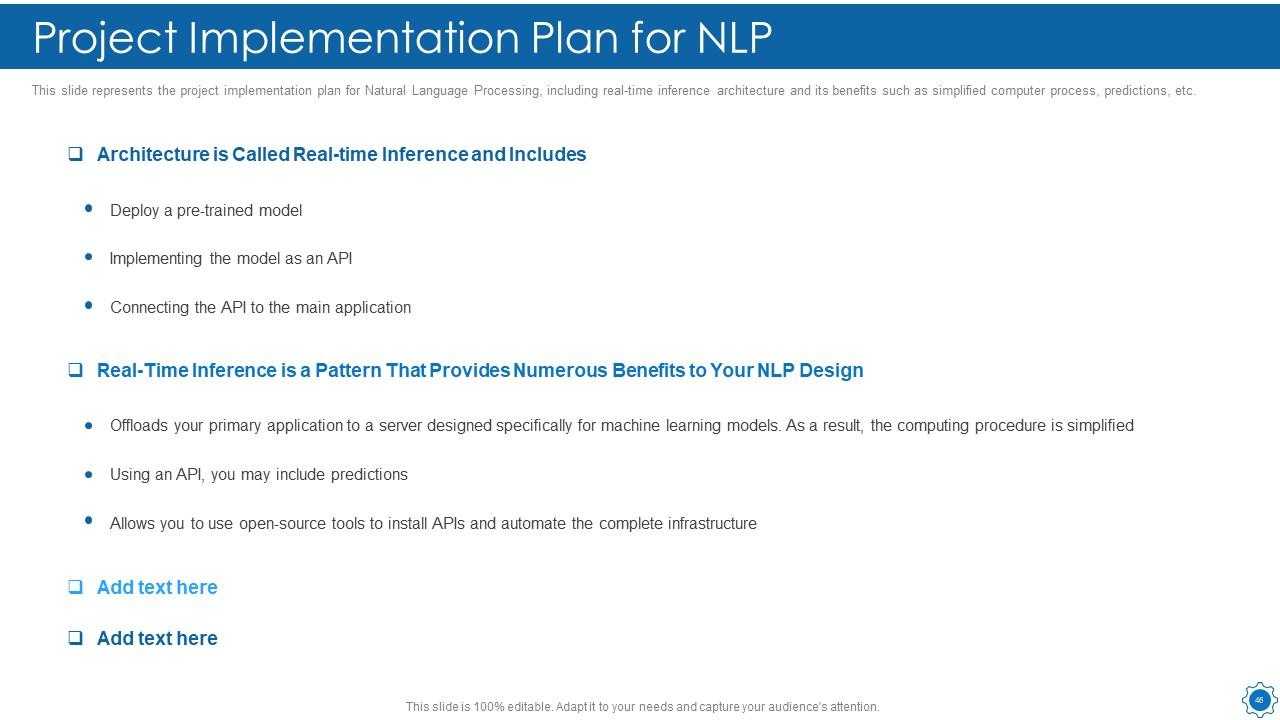

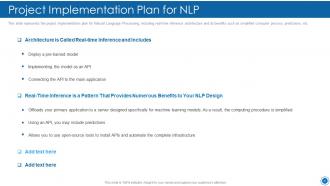

Slide 46: This slide represents the project implementation plan for Natural Language Processing.

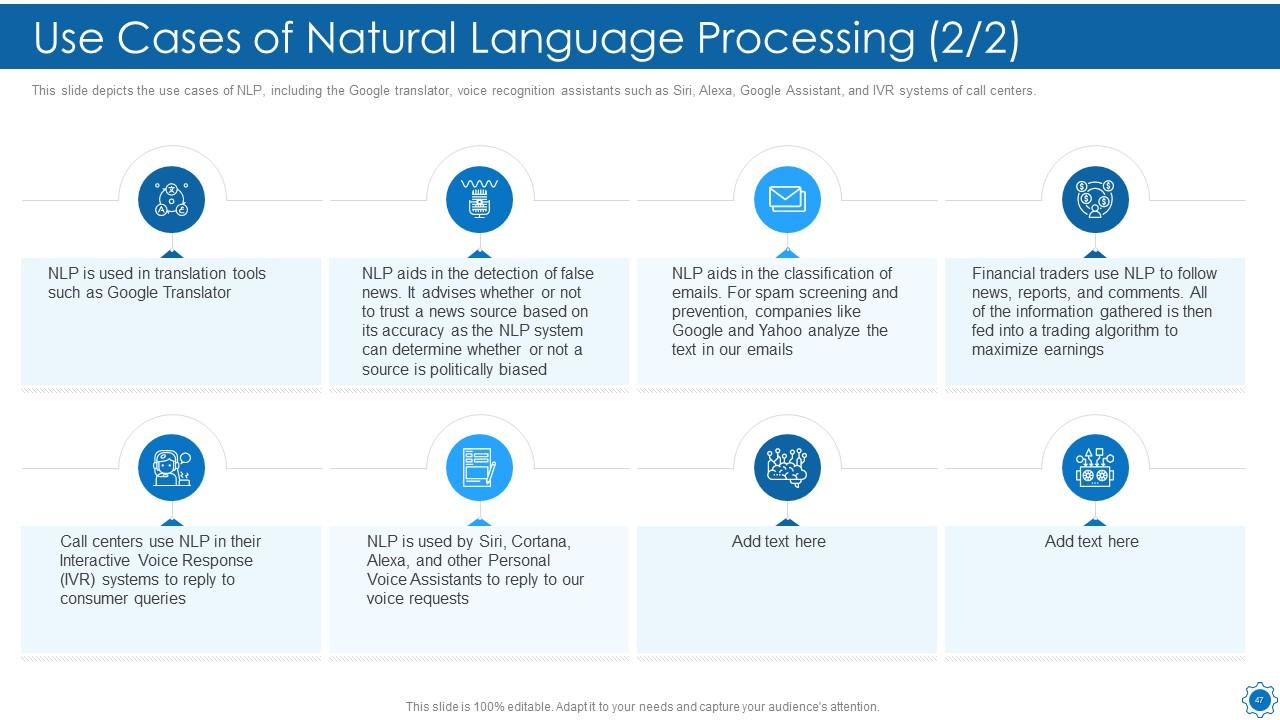

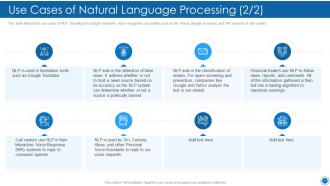

Slide 47: This slide depicts the use cases of NLP, including Google Translate, voice recognition assistants, etc.

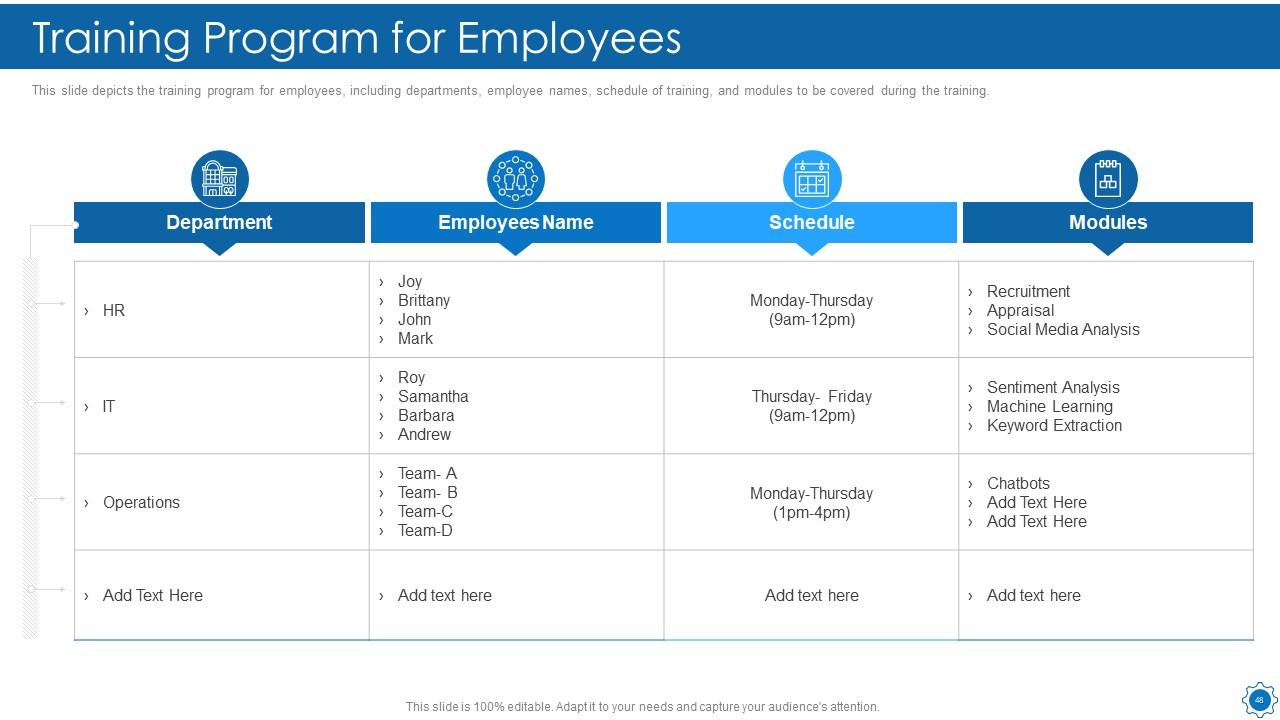

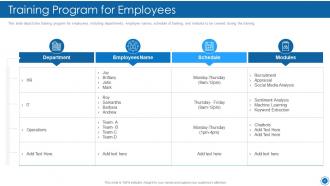

Slide 48: This slide presents the training program for employees, including departments, employee names, schedule of training, etc.

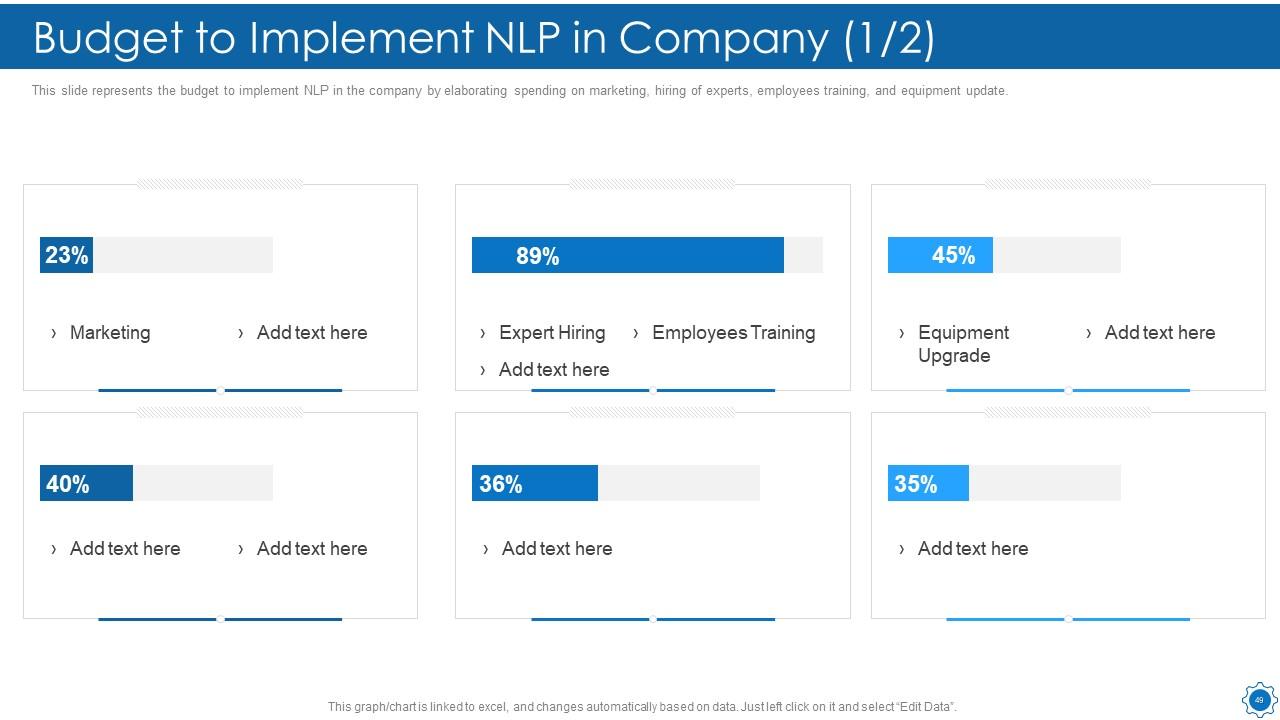

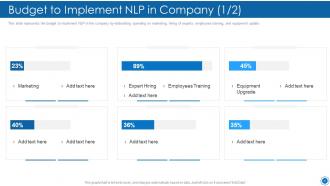

Slide 49: This slide represents the budget to implement NLP in the company, detailing spending on marketing.

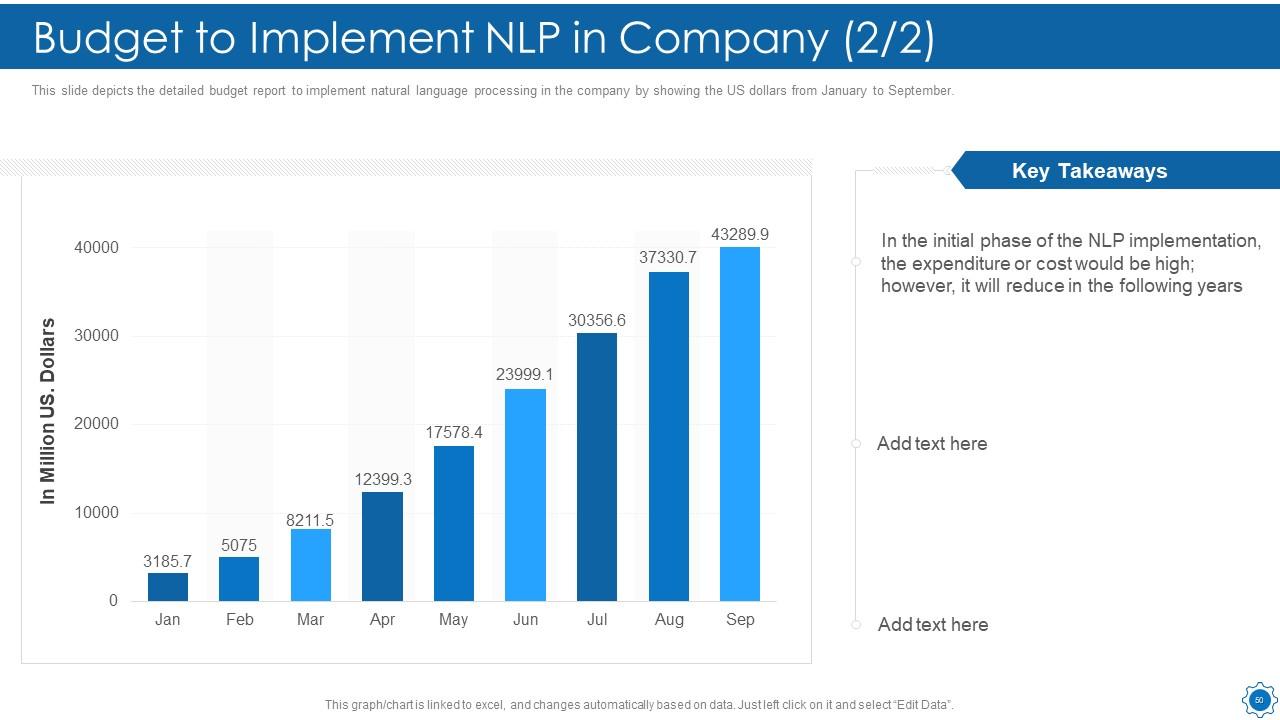

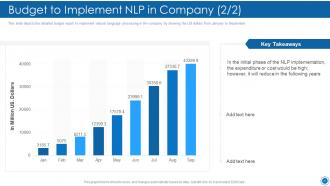

Slide 50: This slide depicts the detailed budget report to implement natural language processing in the company.

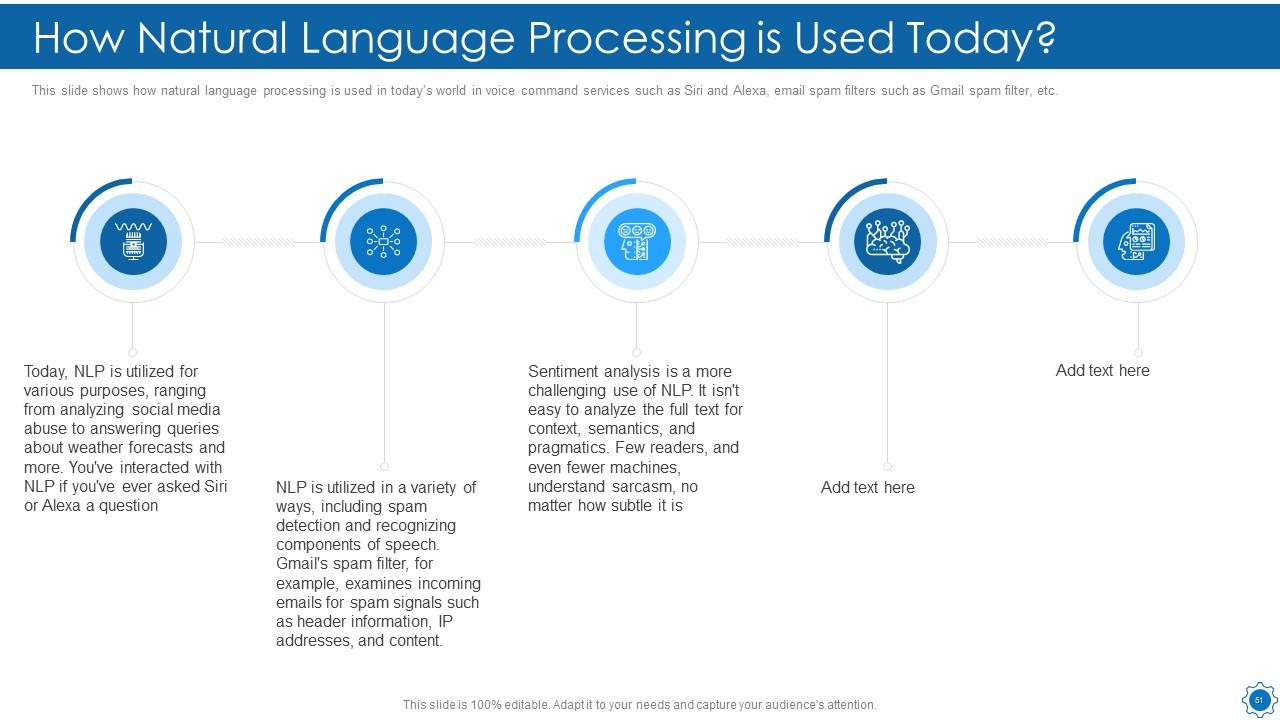

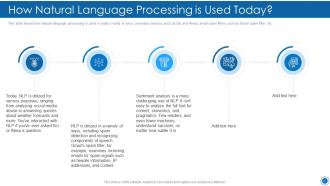

Slide 51: This slide shows How Natural Language Processing is Used Today.

Slide 52: This slide shows the Table of Contents for the presentation.

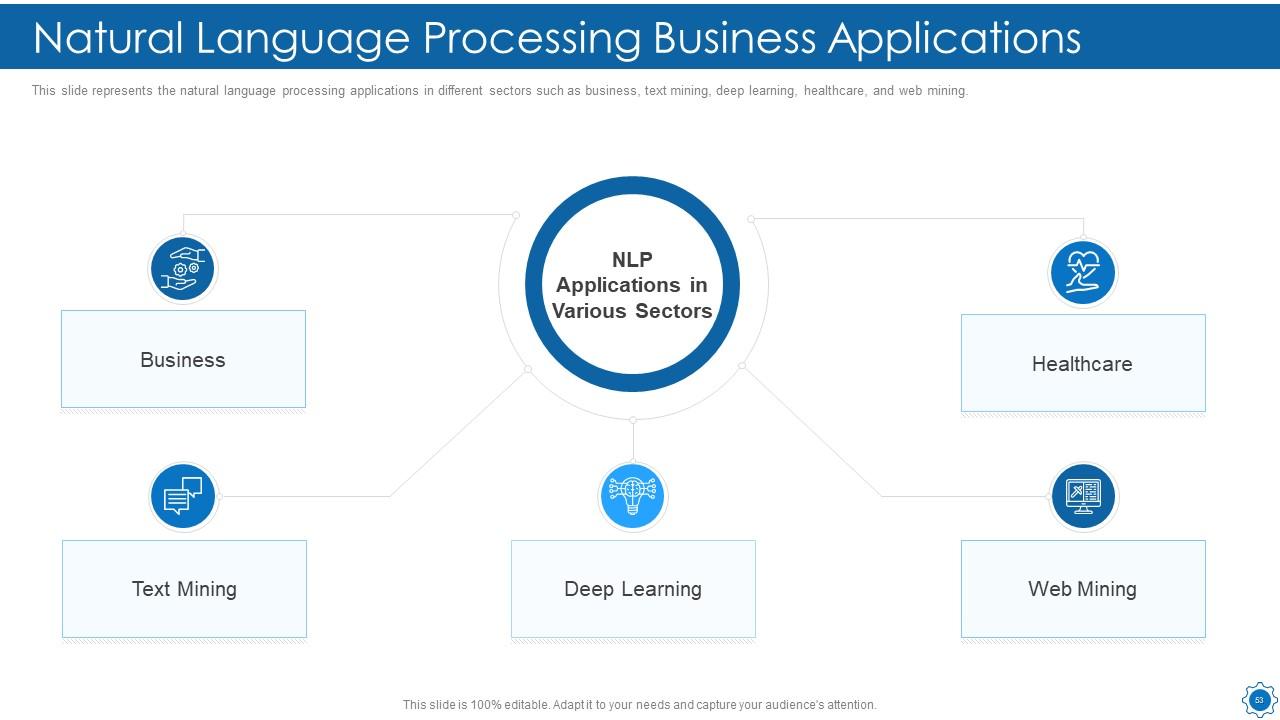

Slide 53: This slide presents Natural Language Processing Business Applications.

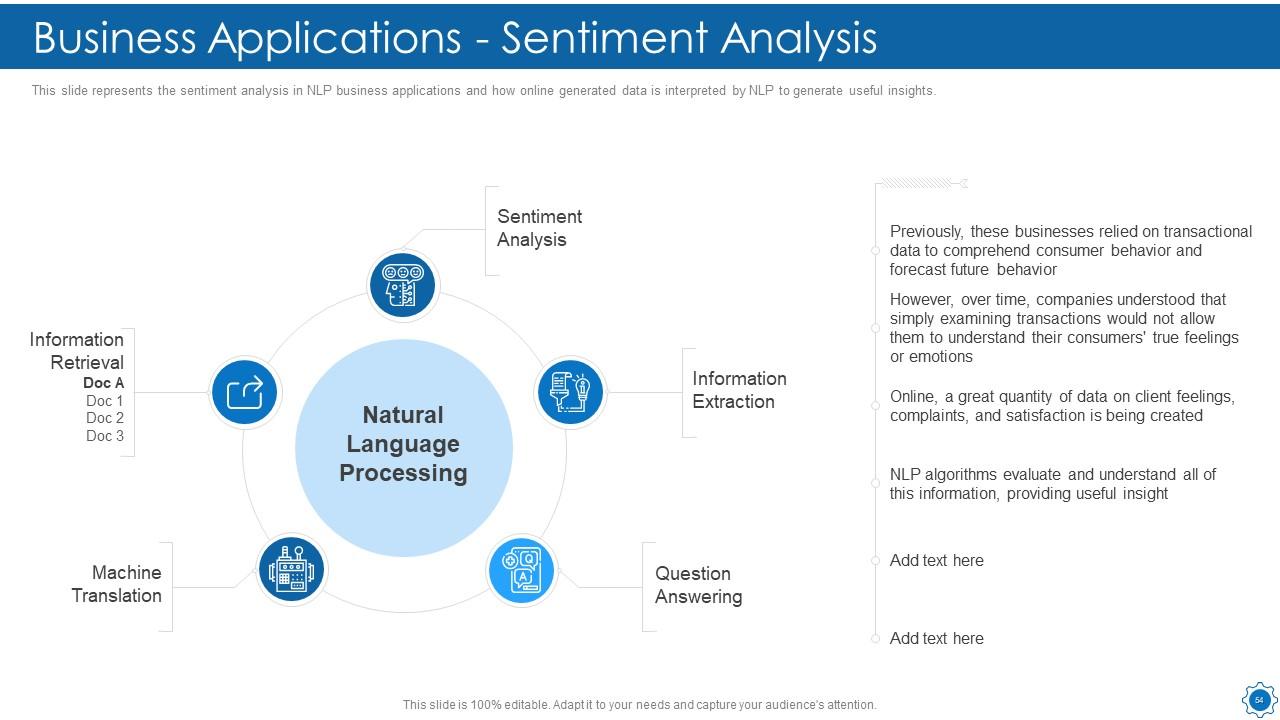

Slide 54: This slide represents sentiment analysis as an NLP business application and how it analyzes online-generated data.

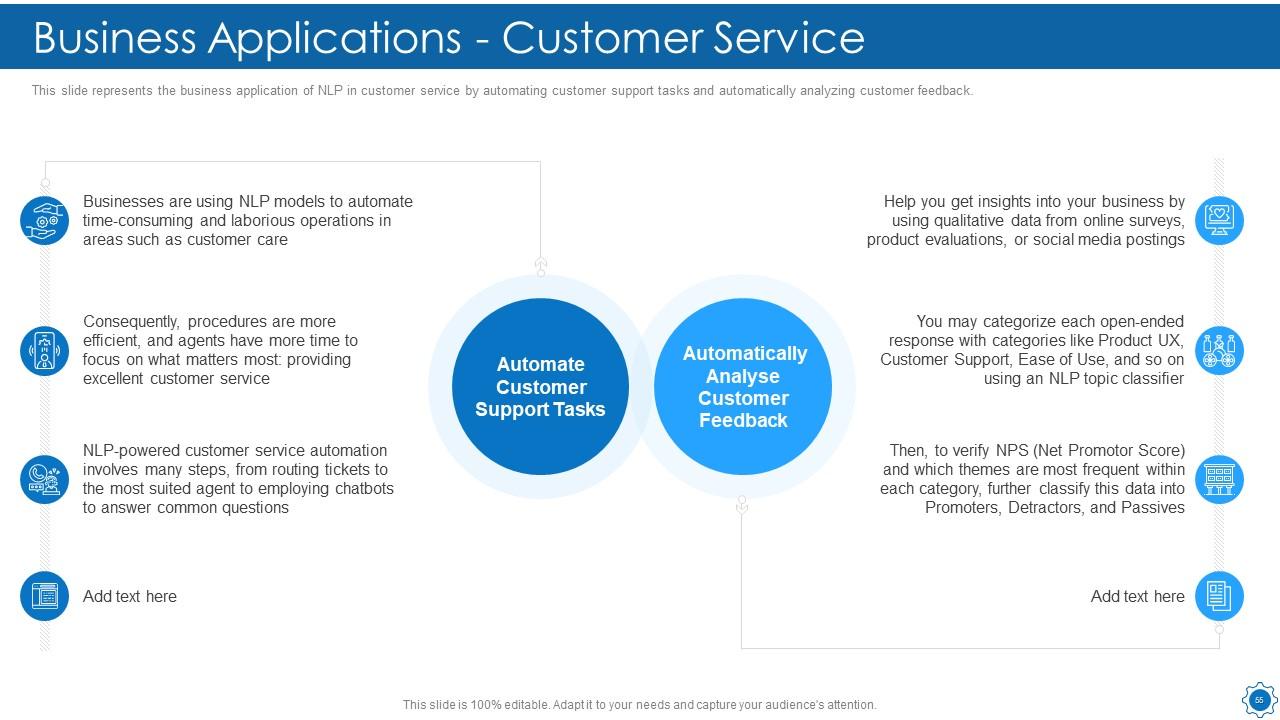

Slide 55: This slide displays the business application of NLP in customer service by automating customer support.

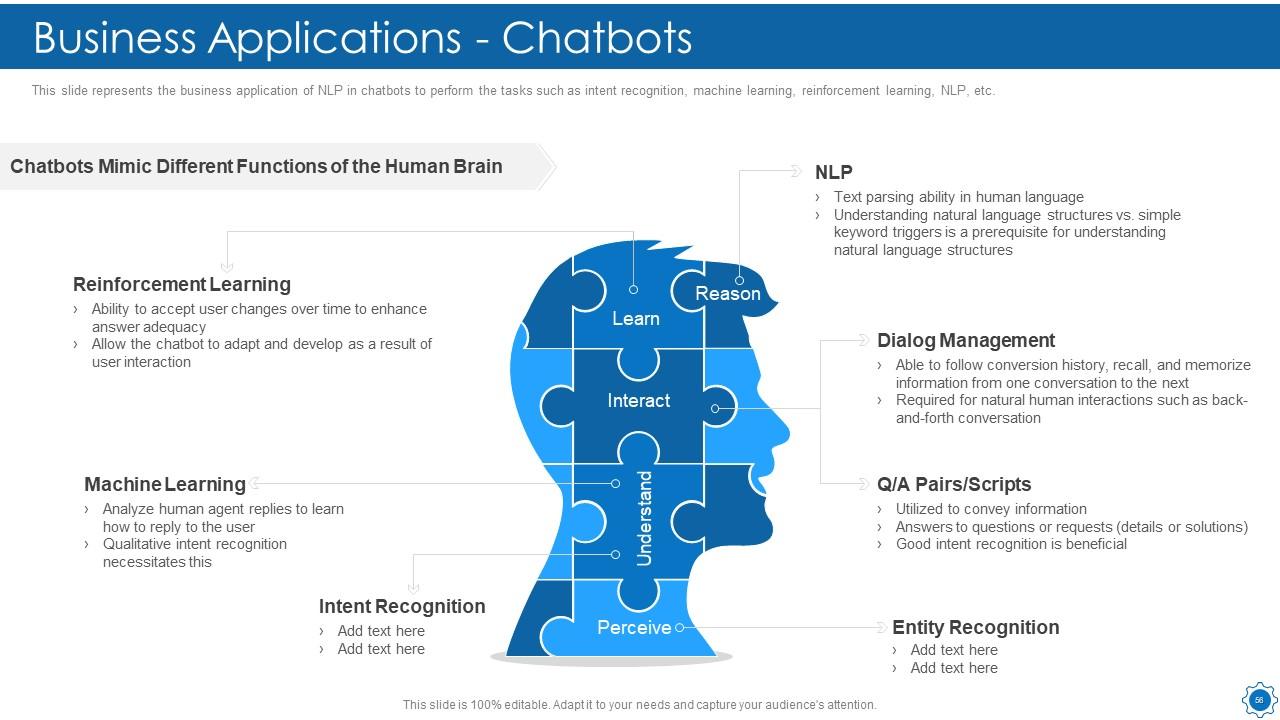

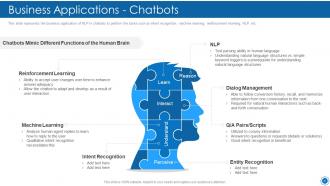

Slide 56: This slide represents the business application of NLP in chatbots, which perform tasks such as intent recognition, machine learning, etc.

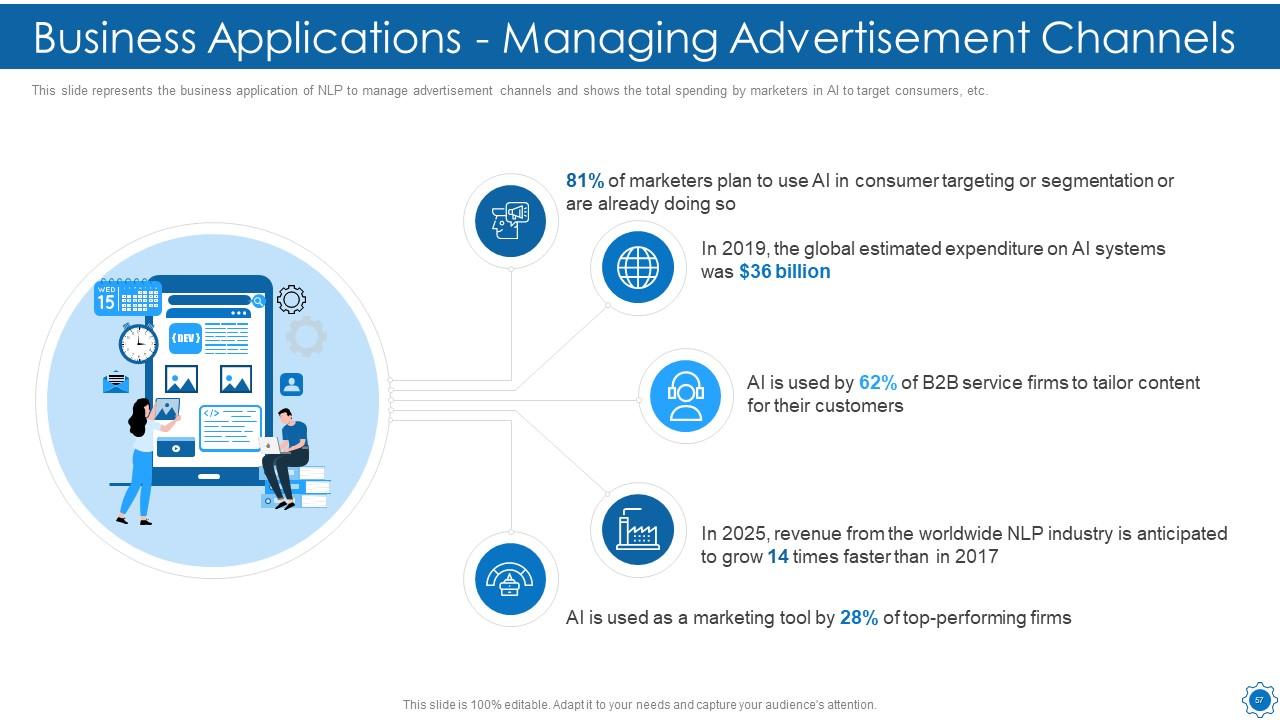

Slide 57: This slide shows Business Applications - Managing Advertisement Channels.

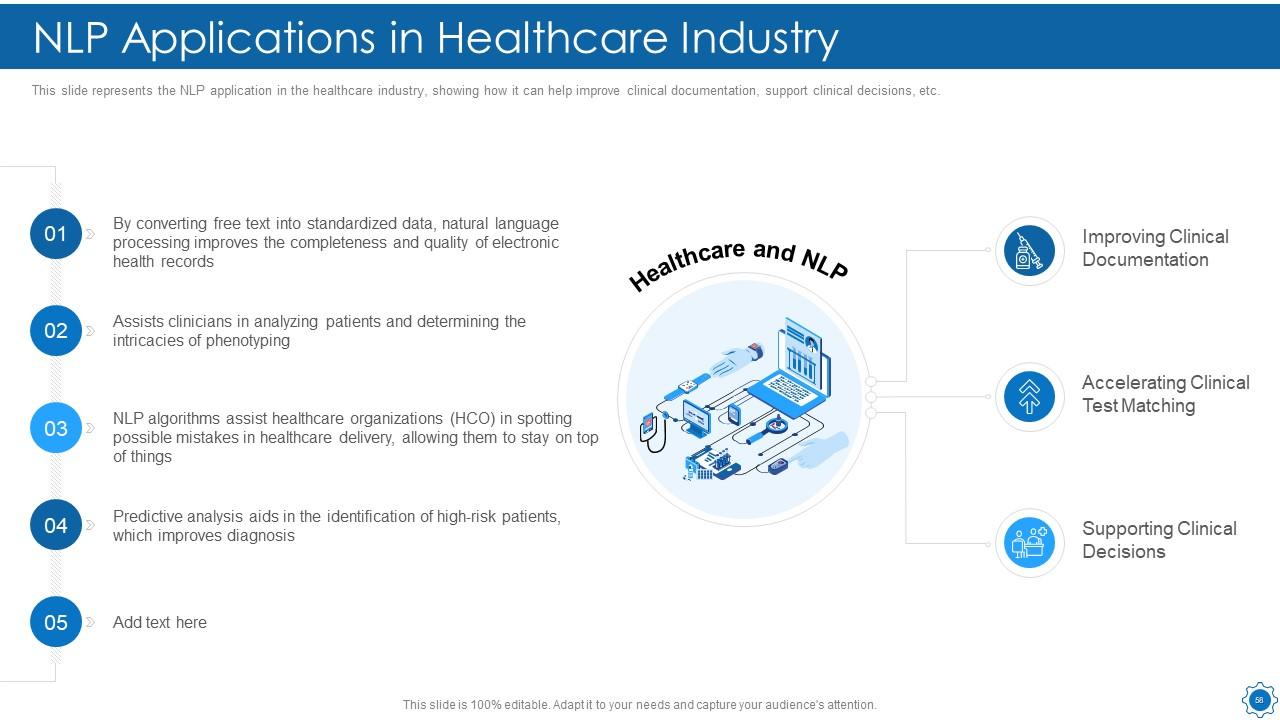

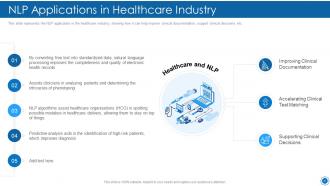

Slide 58: This slide presents the NLP application in the healthcare industry.

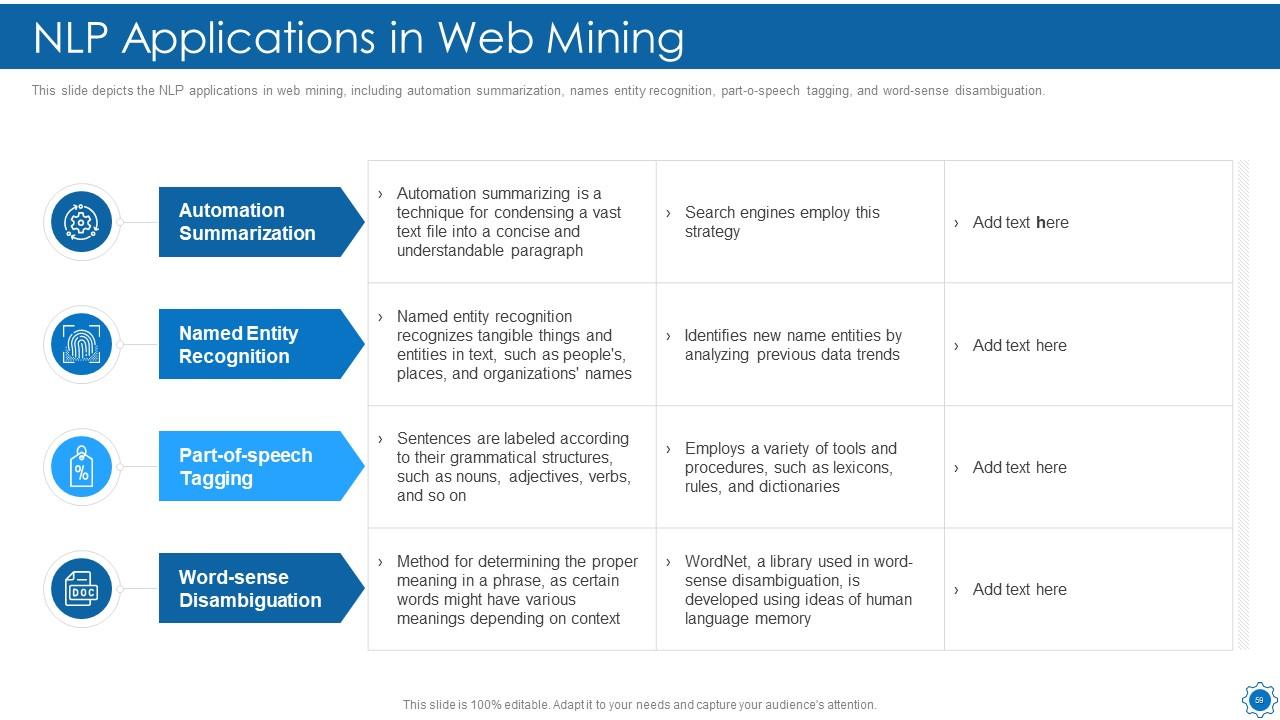

Slide 59: This slide depicts the NLP applications in web mining, including automatic summarization.

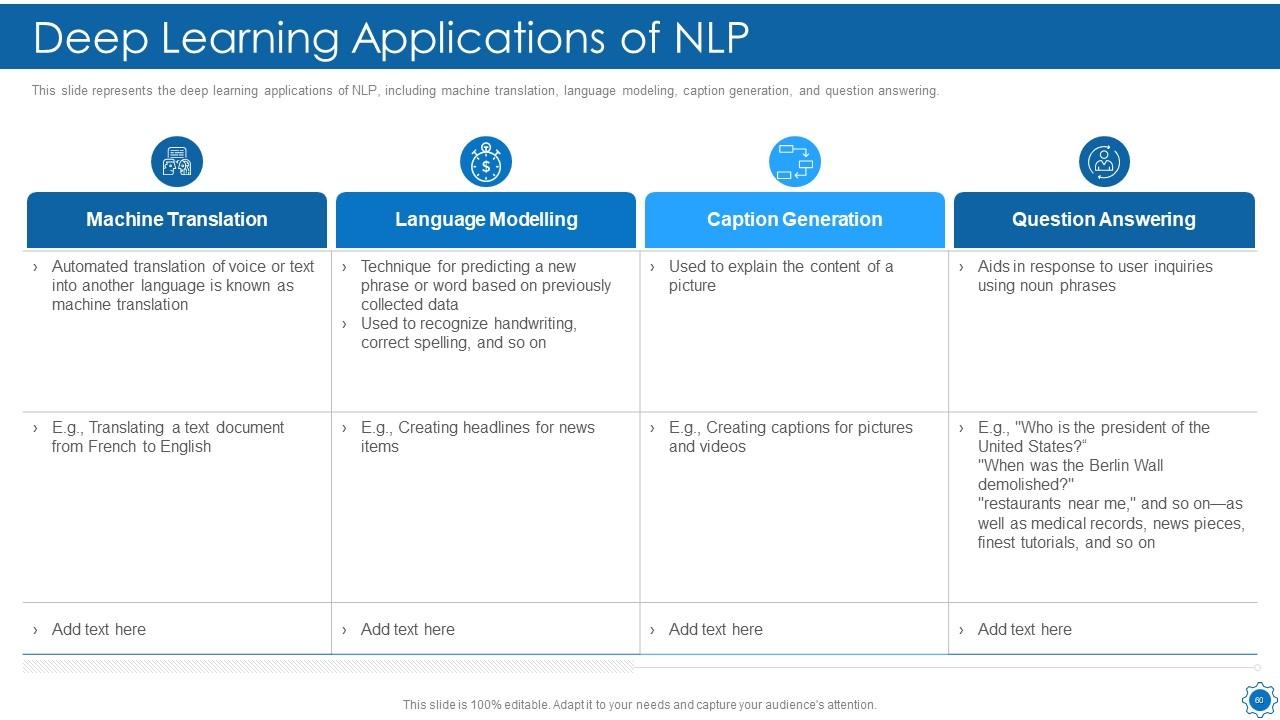

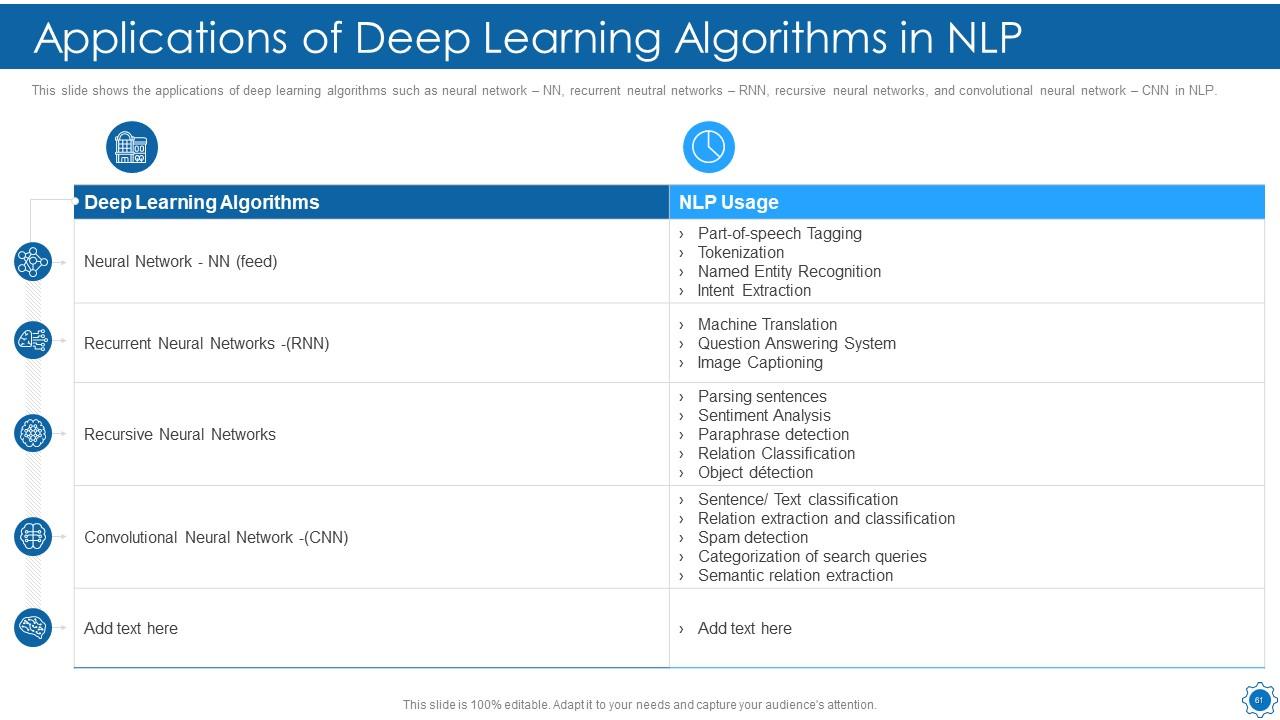

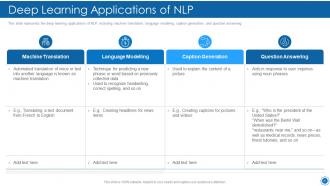

Slide 60: This slide represents the deep learning applications of NLP, including machine translation, language modeling, etc.

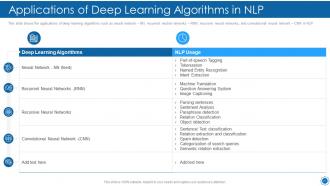

Slide 61: This slide displays the applications of deep learning algorithms.

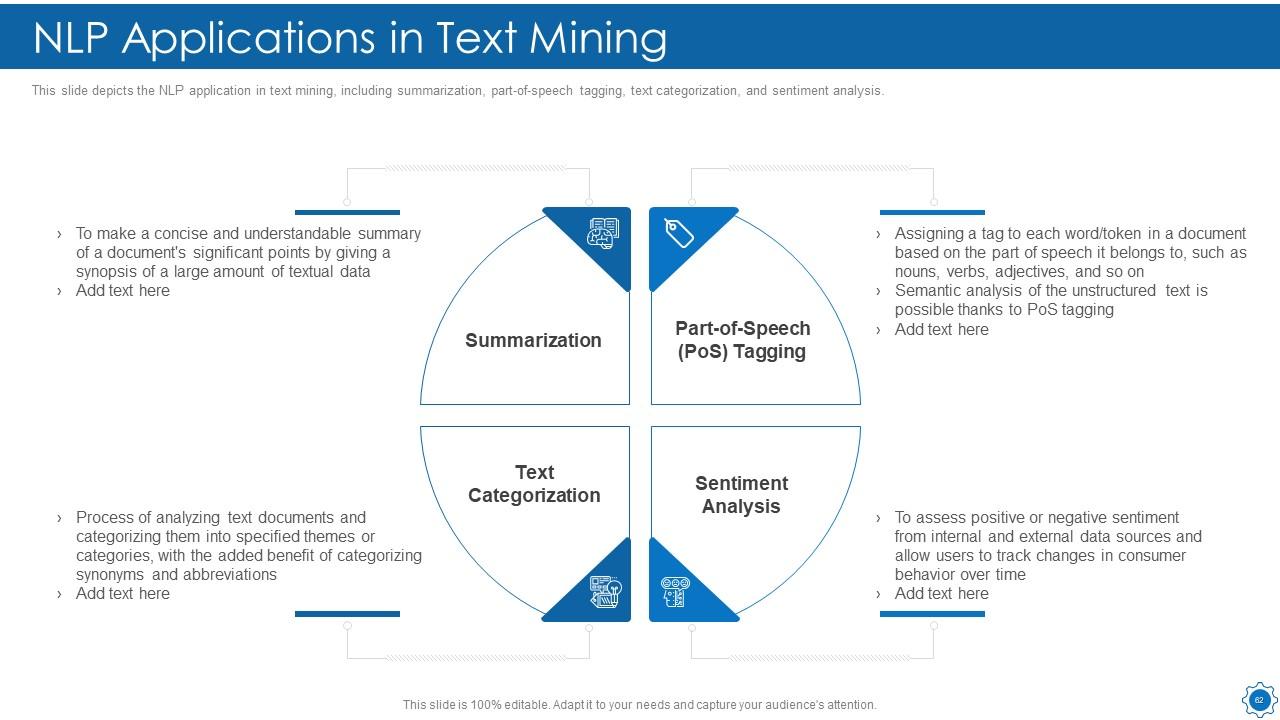

Slide 62: This slide depicts the NLP application in text mining, including summarization, part-of-speech tagging, etc.

Slide 63: This slide presents the Table of Contents for the presentation.

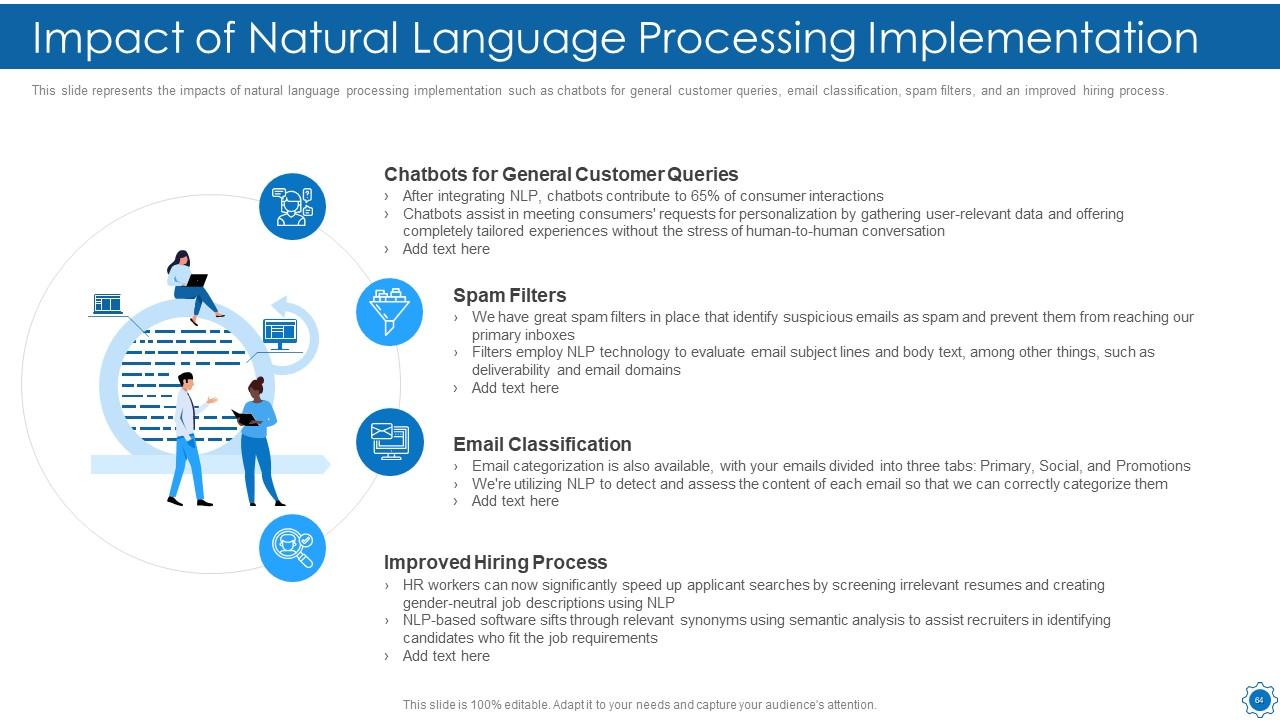

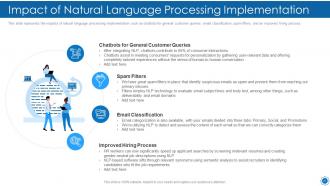

Slide 64: This slide shows Impact of Natural Language Processing Implementation.

Slide 65: This slide displays the Table of Contents for the presentation.

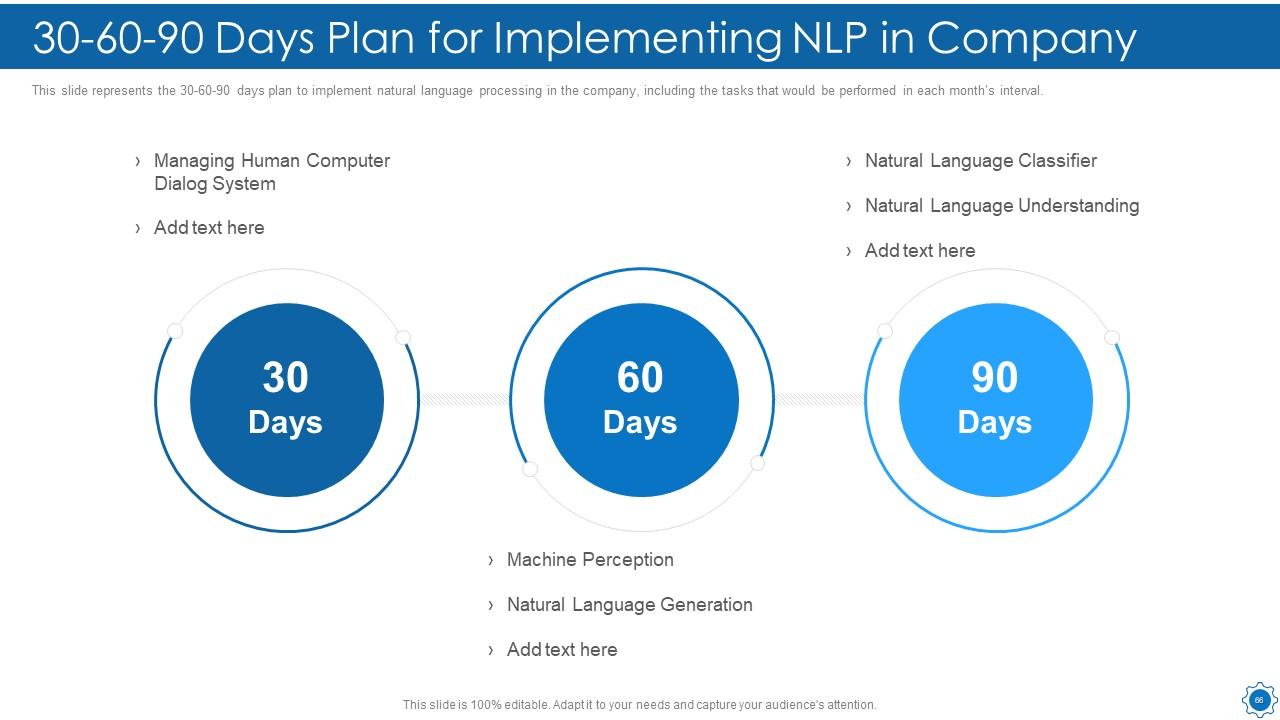

Slide 66: This slide shows 30-60-90 Days Plan for Implementing NLP in Company.

Slide 67: This slide shows the Table of Contents for the presentation.

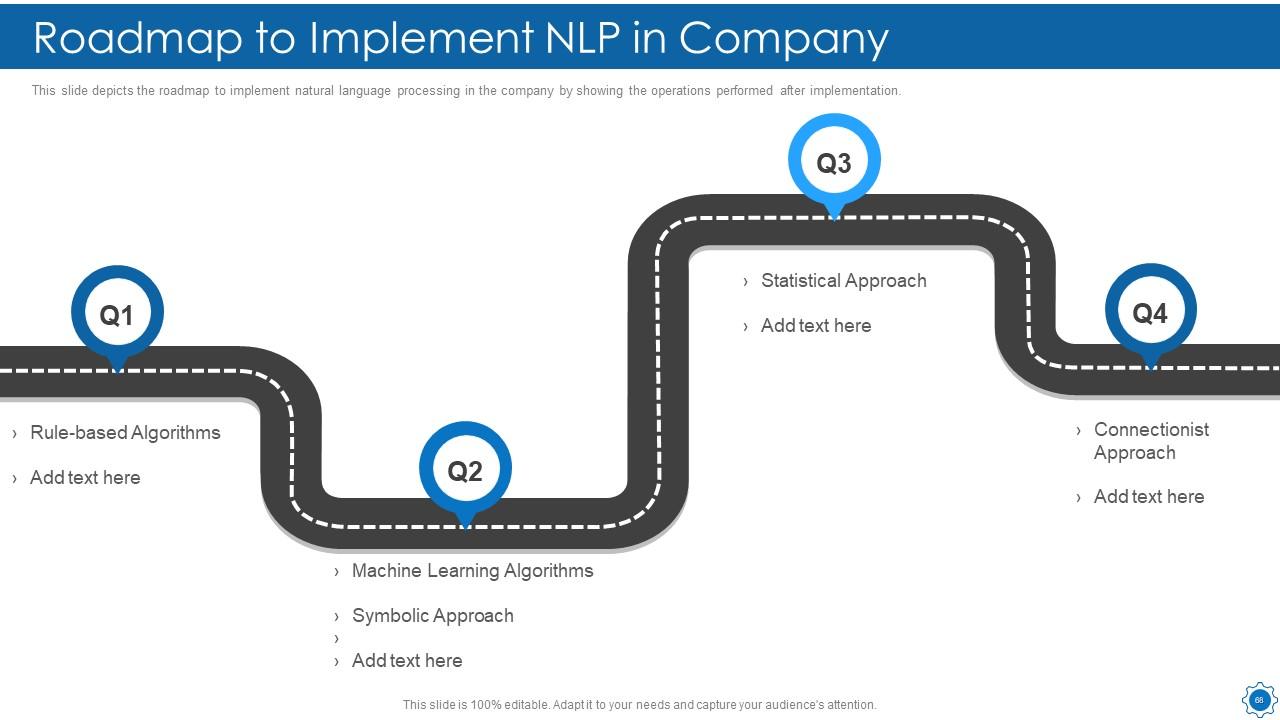

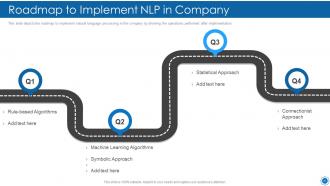

Slide 68: This slide depicts the roadmap to implement natural language processing in the company.

Slide 69: This slide is titled Additional Slides, for moving forward.

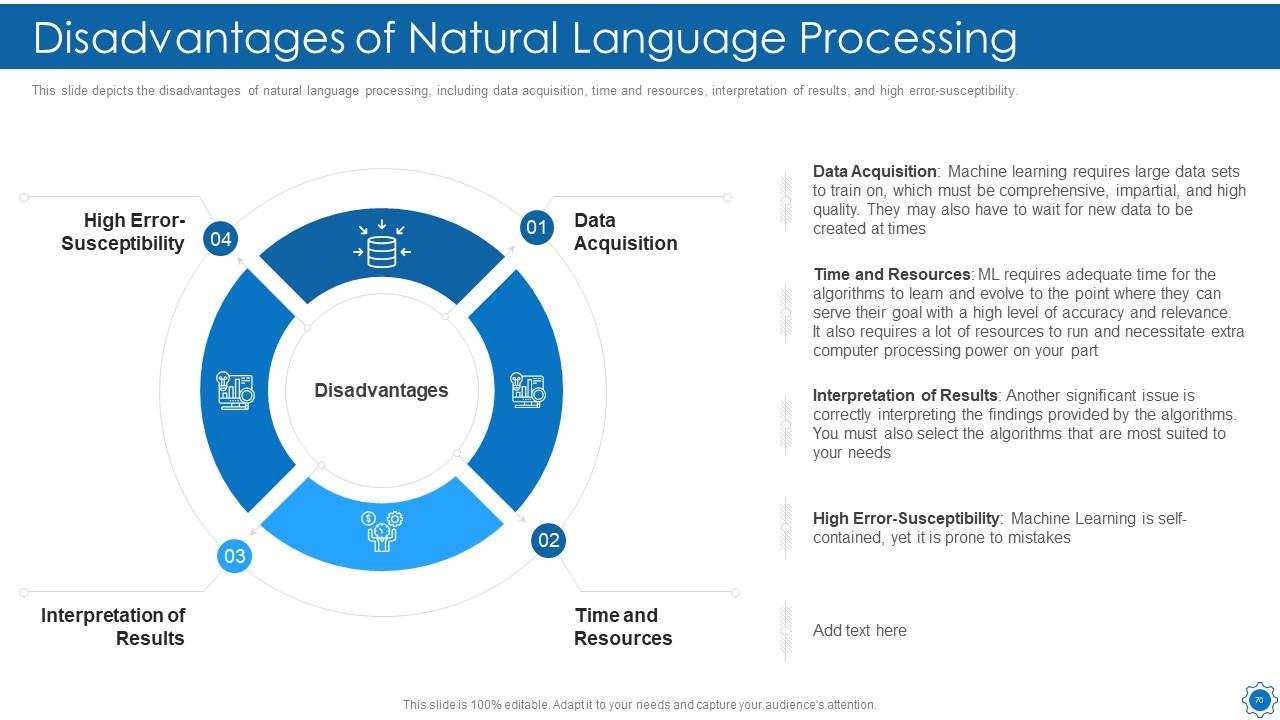

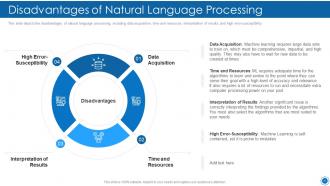

Slide 70: This slide displays Disadvantages of Natural Language Processing.

Slide 71: This slide shows Icons for Natural Language Processing (IT).

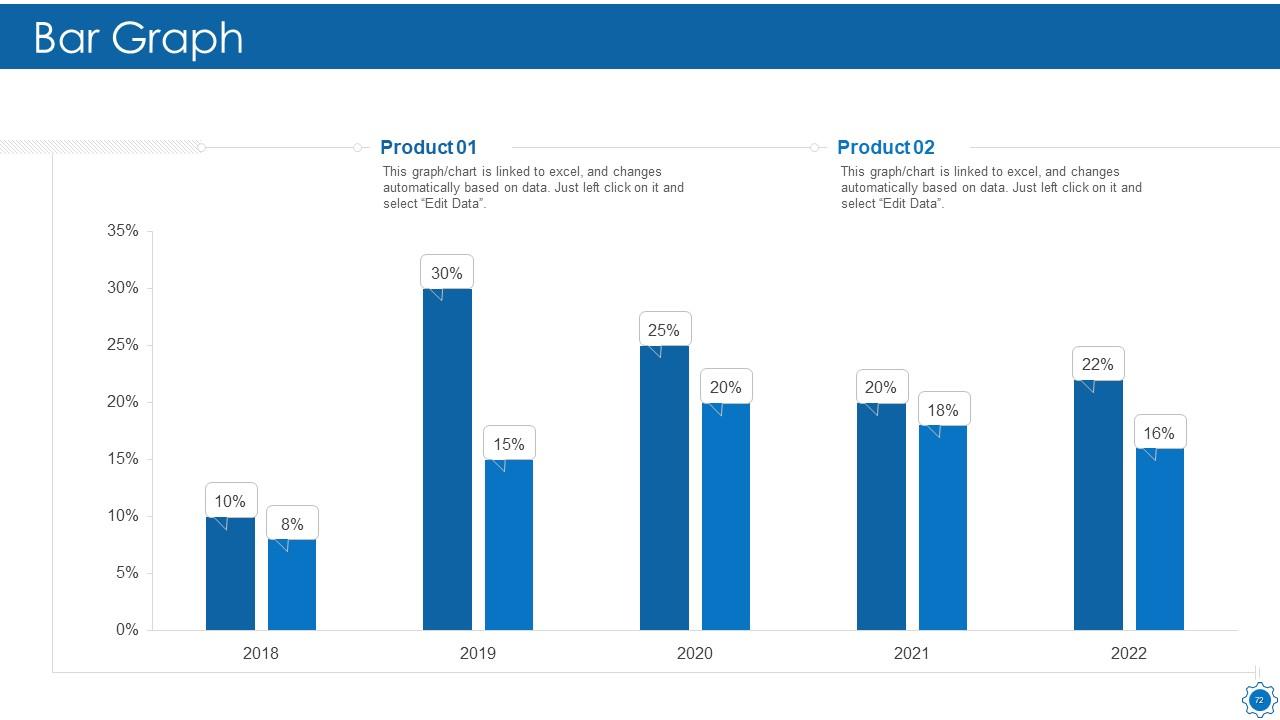

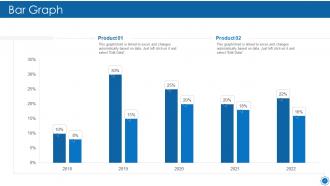

Slide 72: This slide displays a bar chart comparing two products.

Slide 73: This is Our Goal slide. State your firm's goals here.

Slide 74: This slide shows a puzzle with related icons and text.

Slide 75: This is a Timeline slide. Show data related to time intervals here.

Slide 76: This slide shows a Venn diagram with text boxes.

Slide 77: This slide shows Post-it notes. Post your important notes here.

Slide 78: This is an Idea Generation slide to state a new idea or highlight information, specifications, etc.

Slide 79: This is a Thank You slide with address, contact numbers and email address.

Natural Language Processing IT PowerPoint Presentation Slides with all 79 slides:

Use our Natural Language Processing IT PowerPoint Presentation Slides to save valuable time. They are ready-made to fit into any presentation structure.

FAQs

Natural Language Processing can provide a range of benefits for businesses, including automating tasks, improving customer service, and gaining insights from unstructured data. By automating tasks such as customer support or data analysis, businesses can save time and money while improving efficiency. NLP can also help businesses better understand customer needs and sentiment through sentiment analysis and other techniques.

The components of Natural Language Processing include morphology, syntax, semantics, and pragmatics. Morphology deals with the study of words and their structure, syntax deals with the study of sentence structure and grammar, semantics deals with the study of meaning, and pragmatics deals with the study of language in context.

Natural Language Processing works by breaking down language into its constituent parts, such as words and grammar, and then analyzing those parts to extract meaning. This can involve a range of techniques, including morphological analysis, parsing, semantic analysis, and natural language generation.
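To make the idea of breaking language into parts concrete, here is a minimal, standard-library Python sketch of the first few steps of such a pipeline: tokenization, stop-word removal, crude suffix stripping as a stand-in for morphological analysis, and word counting. The stop-word list, the suffix rules, and the function names (`tokenize`, `crude_stem`, `analyze`) are illustrative assumptions, not taken from the deck; real toolkits such as NLTK or spaCy use far more careful tokenizers and lemmatizers.

```python
import re
from collections import Counter

# Hypothetical, hand-picked stop-word list (real libraries ship much larger ones).
STOP_WORDS = {"the", "a", "an", "is", "are", "and", "of", "to", "in", "it"}


def tokenize(text: str) -> list[str]:
    """Split text into lowercase word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def crude_stem(token: str) -> str:
    """Very rough morphological normalization: strip a few common English suffixes."""
    for suffix in ("ing", "ed", "s"):
        # Only strip when at least three characters of the stem remain.
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def analyze(text: str) -> Counter:
    """Tokenize, drop stop words, normalize word forms, and count what remains."""
    tokens = [t for t in tokenize(text) if t not in STOP_WORDS]
    return Counter(crude_stem(t) for t in tokens)


if __name__ == "__main__":
    print(analyze("The customers are complaining and the customer complained again."))
```

In this example, "customers"/"customer" and "complaining"/"complained" end up counted under shared stems. The suffix rules are deliberately naive (they would turn "this" into "thi"), which is exactly the kind of error proper lemmatizers are built to avoid.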

There are a variety of Natural Language Processing algorithms, including rule-based algorithms, machine learning algorithms, and deep learning algorithms. Rule-based algorithms rely on predefined rules to analyze language, while machine learning algorithms use statistical models to learn from data. Deep learning algorithms are a type of machine learning algorithm that use neural networks to analyze data.
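The difference between rule-based and machine learning approaches can be illustrated with a toy sentiment task. The sketch below (Python, standard library only) pairs a hand-written keyword rule with a small multinomial naive Bayes classifier that learns word statistics from labeled examples. The keyword lists, training sentences, and names such as `rule_based_sentiment` and `NaiveBayes` are hypothetical, chosen only for illustration; naive Bayes is one simple statistical model, not necessarily the one covered in the deck, and deep learning approaches are not shown.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


# --- Rule-based approach: hand-written rules (hypothetical keyword lists) ---
POSITIVE = {"great", "good", "fast", "helpful"}
NEGATIVE = {"bad", "slow", "broken", "rude"}


def rule_based_sentiment(text):
    """Score +1 per positive keyword and -1 per negative keyword."""
    tokens = tokenize(text)
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


# --- Machine learning approach: multinomial naive Bayes learned from data ---
class NaiveBayes:
    def fit(self, texts, labels):
        """Count word frequencies per label from labeled training examples."""
        self.word_counts = defaultdict(Counter)  # label -> Counter of words
        self.label_counts = Counter(labels)      # label -> number of documents
        self.vocab = set()
        for text, label in zip(texts, labels):
            tokens = tokenize(text)
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, text):
        """Return the label with the highest log-probability for the text."""
        total_docs = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, doc_count in self.label_counts.items():
            score = math.log(doc_count / total_docs)  # log prior
            denom = sum(self.word_counts[label].values()) + len(self.vocab)
            for t in tokenize(text):
                if t in self.vocab:  # ignore words never seen in training
                    # Add-one (Laplace) smoothing avoids log(0) for unseen word/label pairs.
                    score += math.log((self.word_counts[label][t] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


if __name__ == "__main__":
    train_texts = [
        "great service, very helpful",
        "fast and good support",
        "slow and rude agent",
        "the app is broken and bad",
    ]
    train_labels = ["positive", "positive", "negative", "negative"]
    model = NaiveBayes().fit(train_texts, train_labels)
    print(rule_based_sentiment("helpful and fast"), model.predict("helpful and fast"))
    print(rule_based_sentiment("rude and slow"), model.predict("rude and slow"))
```

With these four training sentences, both approaches label "helpful and fast" as positive and "rude and slow" as negative. The rule-based function needs no training data but only knows the words someone listed; the naive Bayes model learns which words matter from examples, so its quality depends on the amount and quality of labeled data.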

Some common challenges with Natural Language Processing include the ambiguity of language, the complexity of grammar and syntax, and the variability of language across different contexts and domains. Additionally, Natural Language Processing algorithms can struggle with sarcasm, irony, and other forms of figurative language. These challenges can make it difficult to achieve high levels of accuracy in Natural Language Processing applications.
