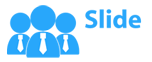

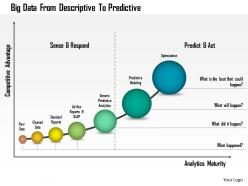

1214 Big Data From Descriptive To Predictive PowerPoint Presentation

Our 1214 Big Data From Descriptive To Predictive PowerPoint Presentation ensures your idea goes the distance, from concept to commercial feasibility.

PowerPoint presentation slides

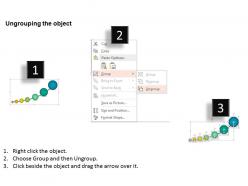

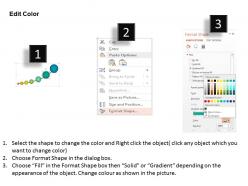

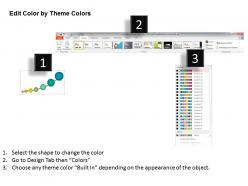

We are proud to present our 1214 Big Data From Descriptive To Predictive PowerPoint Presentation. This PowerPoint template is designed around a linear bar graph, whose growing bars depict the concept of big data analysis. Use this PPT for your data analysis presentations.

1214 Big Data From Descriptive To Predictive PowerPoint Presentation with all 5 slides:

Many take a fancy to our 1214 Big Data From Descriptive To Predictive PowerPoint Presentation. They even find it cute.

- Thanks for all your great templates; they have saved me lots of time and accelerated my presentations. Great product, keep them coming!

- Use of different colors is good. It's simple and attractive.