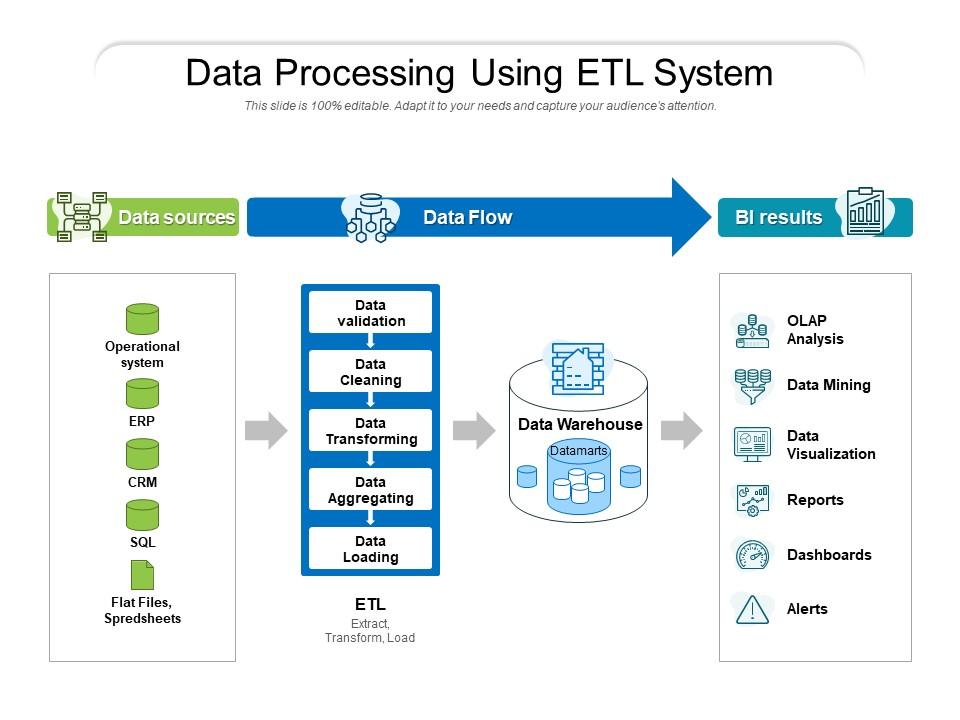

Data Processing Using ETL System

ETL is the process of extracting data from non-optimized data sources and moving it to a centralised host. The particular stages in that procedure may vary between ETL tools, but the ultimate outcome is the same. In its most basic form, the ETL process involves data extraction, transformation, and loading. If you are looking for a comprehensive guide to data processing and want to learn about ETL systems, SlideTeam’s data analytics PowerPoint templates are the perfect resource for you. With our templates, you can get in-depth information about how data is processed and transformed into valuable insights. Plus, our templates are easy to use and customizable, so you can create presentations that fit your specific needs. Download our data analytics PPT templates now and start learning about data processing today.
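The three basic steps named above (extraction, transformation, loading) can be illustrated with a minimal sketch. It is not taken from the slides: the sample data, the `sales_by_region` table, and the function names are all hypothetical, and an in-memory SQLite database stands in for the centralised host.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from an operational system; invented for illustration.
RAW_CSV = """customer,region,amount
alice,North,120.50
bob,north ,80
carol,South,
dave,South,200
"""

def extract(text):
    """Extract: read rows from the non-optimized source (here, CSV text)."""
    return list(csv.DictReader(io.StringIO(text)))

def transform(rows):
    """Transform: skip invalid rows, normalize region names, total amounts per region."""
    totals = {}
    for row in rows:
        amount = row["amount"].strip()
        if not amount:  # skip rows with a missing amount
            continue
        region = row["region"].strip().lower()  # normalize case and whitespace
        totals[region] = totals.get(region, 0.0) + float(amount)
    return sorted(totals.items())

def load(records, conn):
    """Load: write the transformed records into the central store."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_by_region (region TEXT PRIMARY KEY, total REAL)"
    )
    conn.executemany("INSERT OR REPLACE INTO sales_by_region VALUES (?, ?)", records)
    conn.commit()

def run_etl(text, conn):
    """Run the three steps in order and return what ended up in the store."""
    load(transform(extract(text)), conn)
    return conn.execute(
        "SELECT region, total FROM sales_by_region ORDER BY region"
    ).fetchall()

if __name__ == "__main__":
    print(run_etl(RAW_CSV, sqlite3.connect(":memory:")))
    # prints [('north', 200.5), ('south', 200.0)]
```

Real ETL tools may split or reorder these steps, but the outcome is the same: cleaned, consolidated data in one place.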


PowerPoint presentation slides

Presenting this set of slides named Data Processing Using ETL System. This is a three-stage process. The stages in this process are Operational System, Data Validation, Data Cleaning, Data Aggregating, Data Transforming, Data Loading, Data Visualization, Dashboards, CRM, and ERP. This is a completely editable PowerPoint presentation and is available for immediate download. Download now and impress your audience.


Data Processing Using ETL System with all 2 slides:

Earn greater appreciation with our Data Processing Using ETL System. You will benefit hand over fist.
