Multiple hypothesis testing ppt powerpoint presentation gallery tips cpb

Our Multiple Hypothesis Testing Ppt Powerpoint Presentation Gallery Tips Cpb are topically designed to provide an attractive backdrop to any subject. Use them to look like a presentation pro.


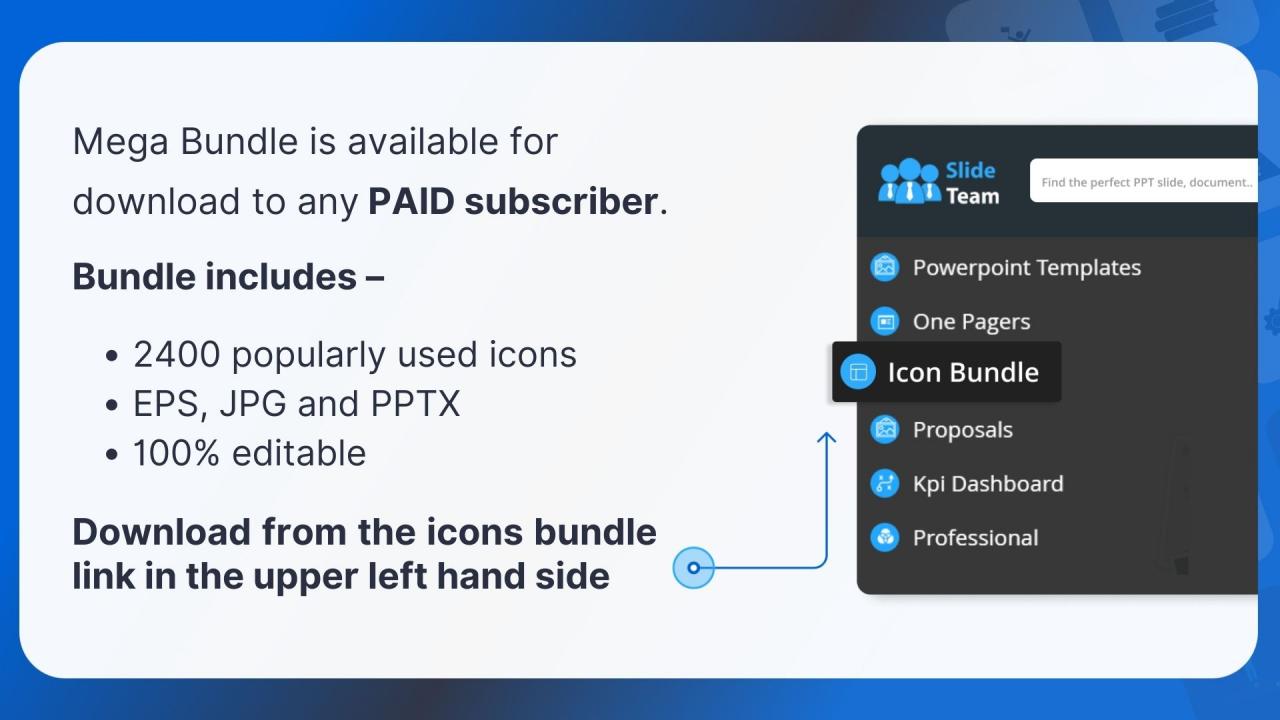

- Google Slides is free presentation software from Google.

- All our content is 100% compatible with Google Slides.

- Just download our designs and upload them to Google Slides; they will work automatically.

- Amaze your audience with SlideTeam and Google Slides.


Want Changes to This PPT Slide? Check out our Presentation Design Services

- The widescreen aspect ratio is becoming a very popular format. When you download this product, the ZIP file will contain it in both standard and widescreen formats.

- Some older products that we have may only be in standard format, but they can easily be converted to widescreen.

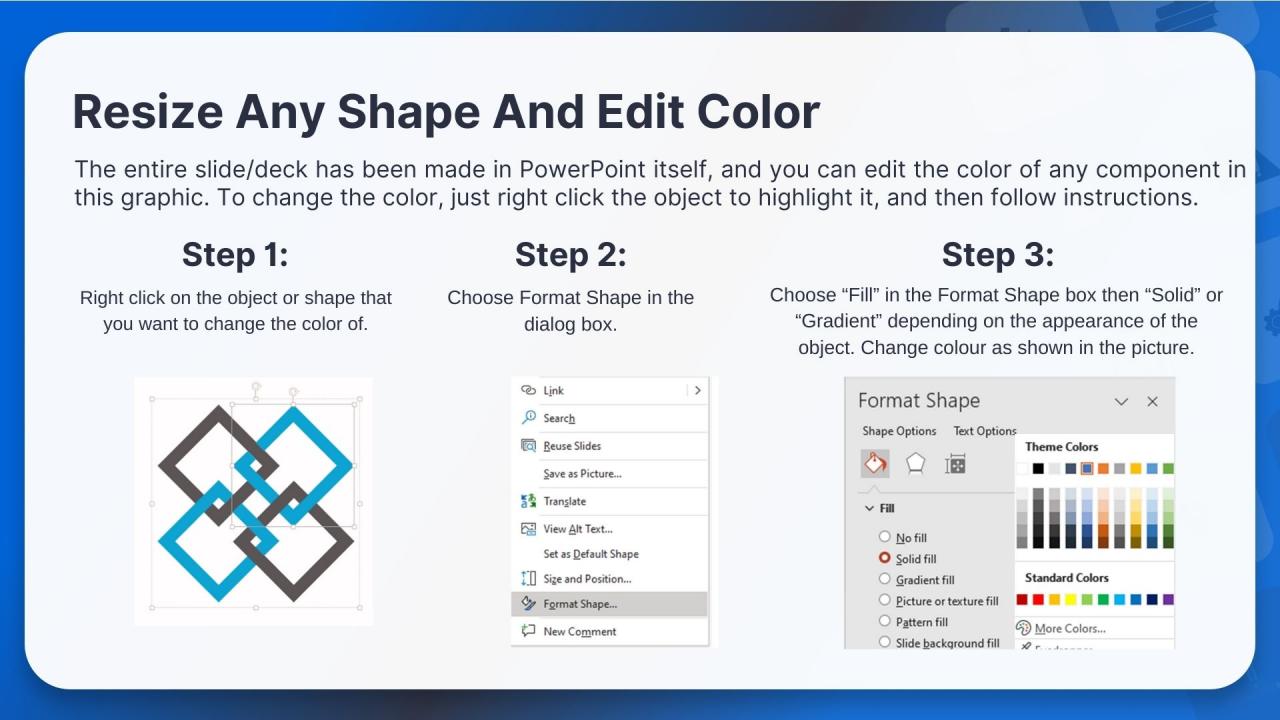

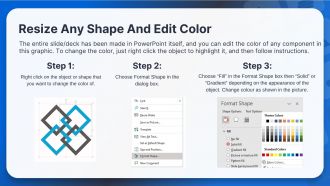

- To do this, open the SlideTeam product in PowerPoint, go to Design (on the top bar) -> Page Setup, and select "On-screen Show (16:9)" in the drop-down for "Slides Sized for".

- The slide or theme will change to widescreen, and all graphics will adjust automatically. You can similarly convert our content to any other desired screen aspect ratio.



PowerPoint presentation slides

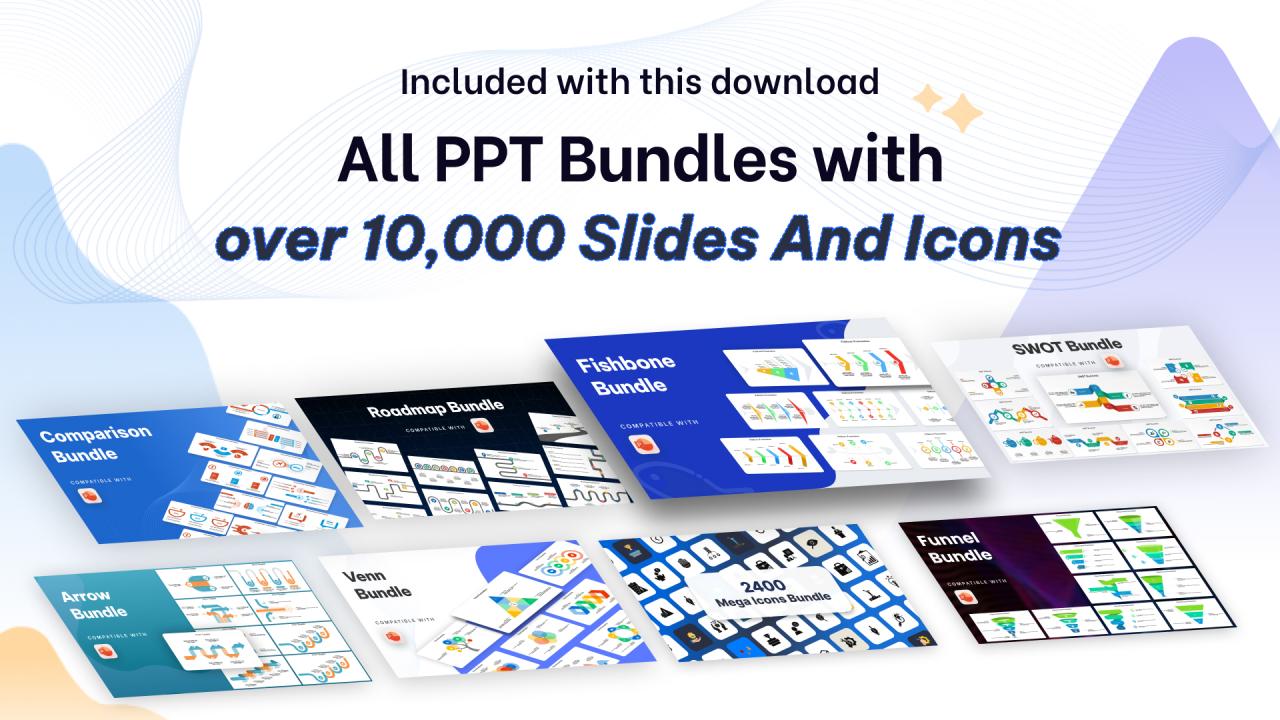

Presenting the Multiple Hypothesis Testing Ppt Powerpoint Presentation Gallery Tips Cpb slide, which is completely adaptable. The graphics in this PowerPoint slide showcase six stages that will help you succinctly convey the information. In addition, you can alter the color, font size, font type, and shapes of this PPT layout according to your content. This PPT presentation can be accessed with Google Slides and is available in both standard screen and widescreen aspect ratios. It is also a useful set to elucidate topics like Multiple Hypothesis Testing. This well-structured design can be downloaded in different formats like PDF, JPG, and PNG. So, without any delay, click on the download button now.


Multiple hypothesis testing ppt powerpoint presentation gallery tips cpb with all 6 slides:

Use our Multiple Hypothesis Testing Ppt Powerpoint Presentation Gallery Tips Cpb to effectively help you save your valuable time. They are readymade to fit into any presentation structure.

- Use of icons with content is very relatable, informative and appealing.

- Great quality slides in rapid time.