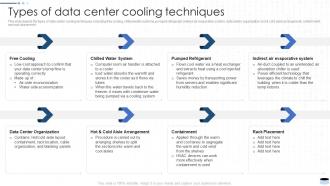

Types Of Data Center Cooling Techniques Data Center Types It Ppt Show Background Designs

This slide depicts the types of data center cooling techniques, including free cooling, chilled water systems, pumped refrigerant, indirect air evaporative system, data center organization, hot and cold aisle arrangement, containment, and rack placement.


PowerPoint presentation slides

Increase audience engagement and knowledge by presenting information with Types Of Data Center Cooling Techniques Data Center Types It Ppt Show Background Designs. This template helps you present information in eight stages. You can also present information on Chilled Water System, Pumped Refrigerant, and Containment using this PPT design. This layout is completely editable, so personalize it now to meet your audience's expectations.

People who downloaded this PowerPoint presentation also viewed the following :

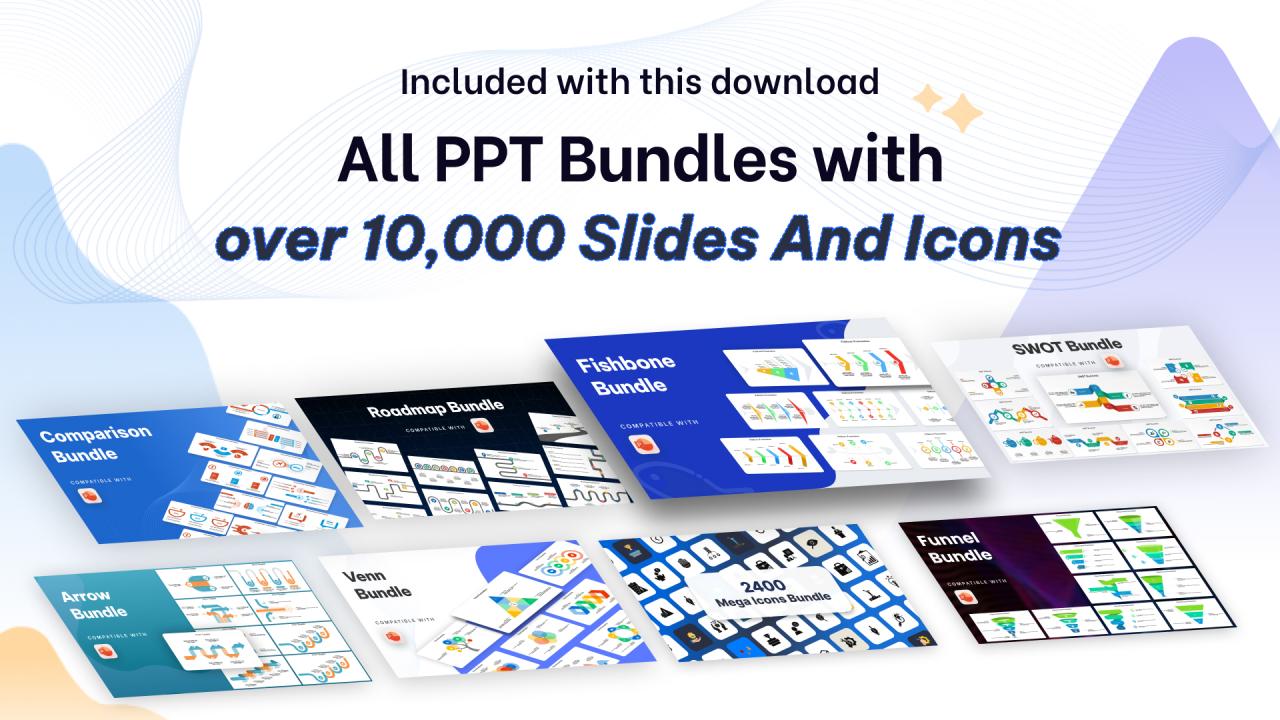

Types Of Data Center Cooling Techniques Data Center Types It Ppt Show Background Designs with all 6 slides:

Use our Types Of Data Center Cooling Techniques Data Center Types It Ppt Show Background Designs to save valuable time. The slides are ready-made to fit into any presentation structure.

- Spacious slides, just right to include text. SlideTeam has also helped us focus on the most important points that need to be highlighted for our clients.

- I am glad to have come across SlideTeam. I was searching for some unique presentations and templates for my business. There are a lot of alternatives available here.