Apache Hadoop Powerpoint Presentation Slides

Hadoop is a Java-based, open-source Apache platform that enables distributed storage and processing of massive datasets across computer clusters using simple programming concepts. This professionally designed Apache Hadoop template gives a brief idea of the company's current situation through a map and explains why the business needs Hadoop. It includes slides that depict the importance, global market share, and advantages of Hadoop. The presentation covers an overview of Hadoop, its components, the Hadoop cluster, its architecture, how it works, and use cases in various sectors. In addition, this Apache PPT contains slides depicting Hadoop as a big data management platform, which can be used to jot down the key takeaways. It also addresses features of the Apache Flume tool for big data management in Hadoop. Furthermore, the presentation provides a framework for implementing Hadoop in the company and helps you conduct a comparative analysis of Hadoop 2.x and Hadoop 3.x. Lastly, the deck includes a 30-60-90 days plan, a roadmap, and a dashboard for Hadoop implementation in the company. Get access now.

- Google Slides is free presentation software from Google.

- All our content is 100% compatible with Google Slides.

- Just download our designs and upload them to Google Slides; they will work automatically.

- Amaze your audience with SlideTeam and Google Slides.

- The widescreen aspect ratio is becoming a very popular format. When you download this product, the ZIP file will contain it in both standard and widescreen formats.

- Some older products that we have may only be in standard format, but they can easily be converted to widescreen.

- To do this, open the SlideTeam product in PowerPoint and go to Design (on the top bar) -> Page Setup, then select "On-screen Show (16:9)" in the drop-down for "Slides sized for".

- The slide or theme will change to widescreen, and all graphics will adjust automatically. You can similarly convert our content to any other desired screen aspect ratio.

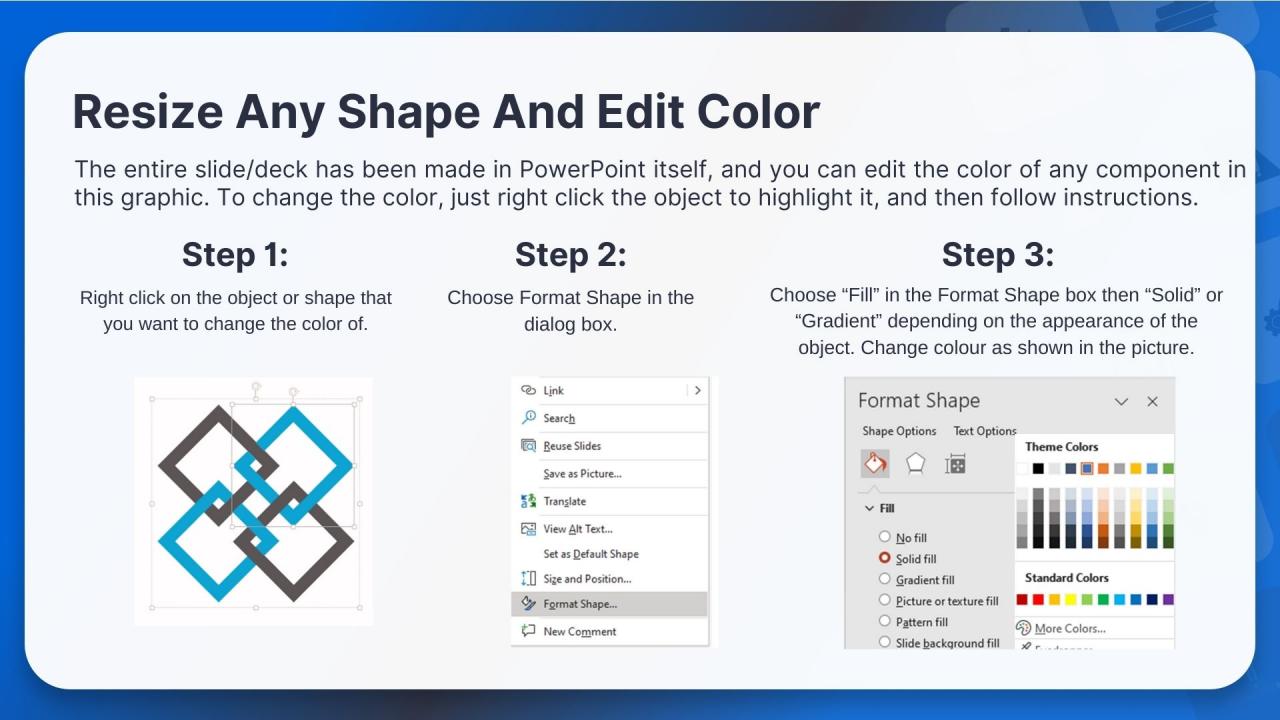

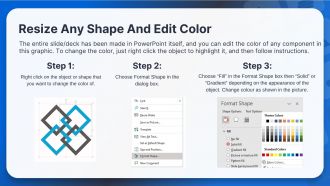

PowerPoint presentation slides

Deliver an informational PPT on various topics using this Apache Hadoop Powerpoint Presentation Slides deck. The deck focuses on and implements industry best practices, providing a bird's-eye view of the topic. With seventy-nine slides designed using high-quality visuals and graphics, it is a complete package to download and use. Every slide in the deck is fully editable, which makes it easier to deliver and educate with confidence. You can modify the color of the graphics, the background, or anything else to suit your needs and requirements. Its adaptable layout suits every business vertical.

Content of this Powerpoint Presentation

Slide 1: This slide introduces Apache Hadoop. State your company name and begin.

Slide 2: This slide states Agenda of the presentation.

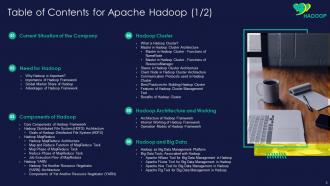

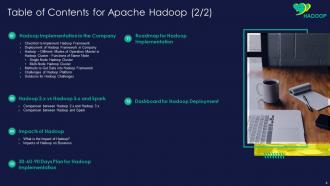

Slide 3: This slide shows Table of Content for the presentation.

Slide 4: This is another slide continuing Table of Content for the presentation.

Slide 5: This slide displays title for topics that are to be covered next in the template.

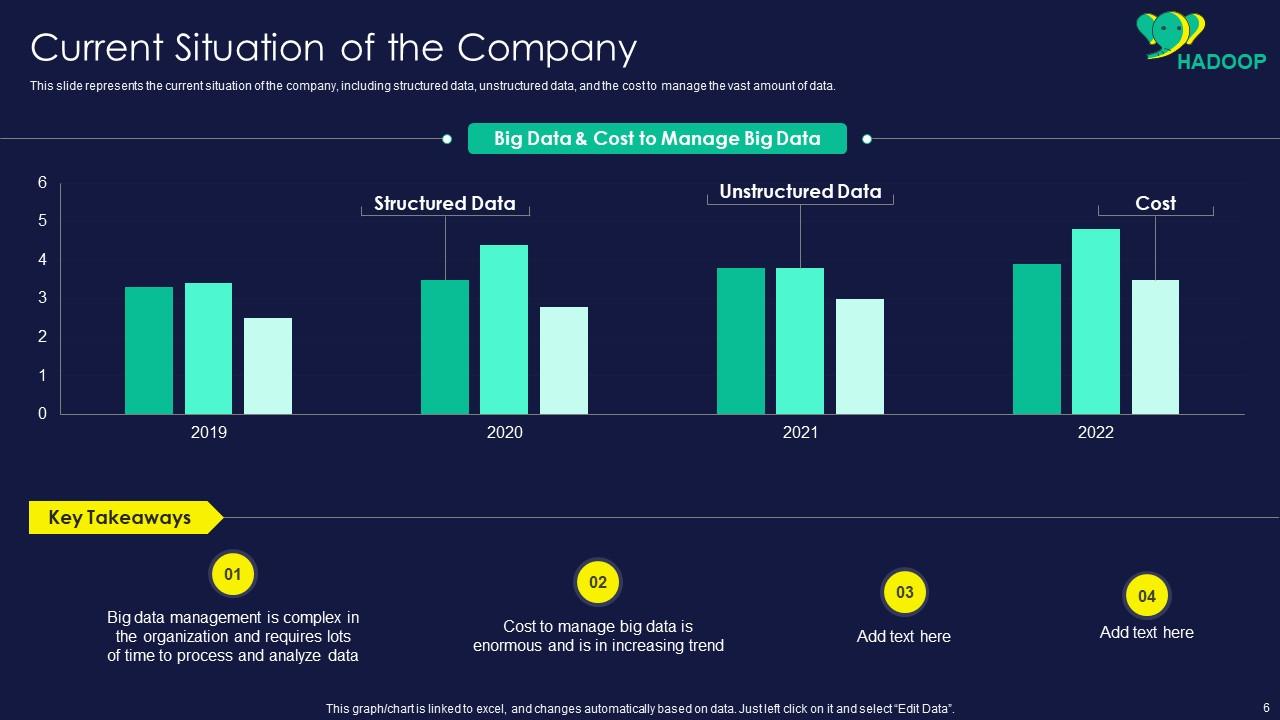

Slide 6: This slide represents current situation of the company, including structured data, unstructured data, etc.

Slide 7: This slide showcases title for topics that are to be covered next in the template.

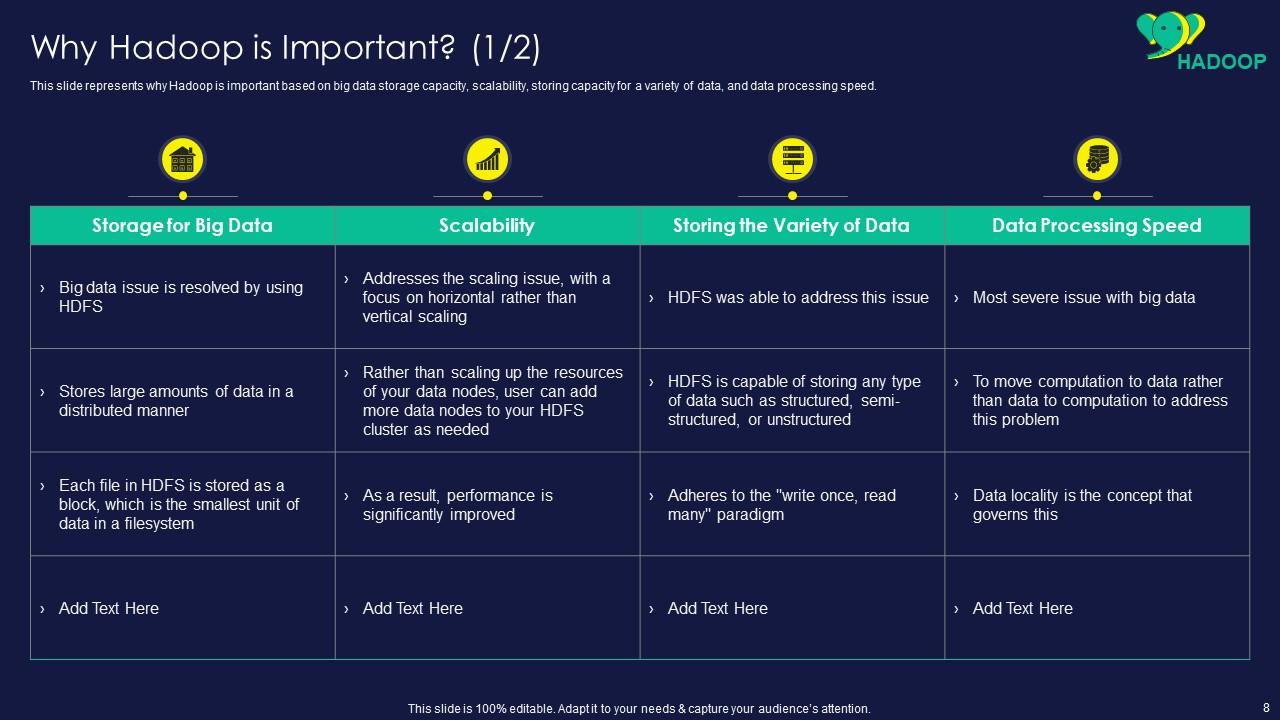

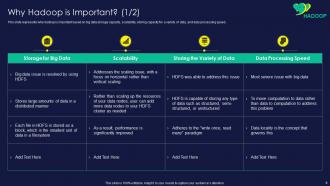

Slide 8: This slide shows why Hadoop is important based on big data storage capacity.

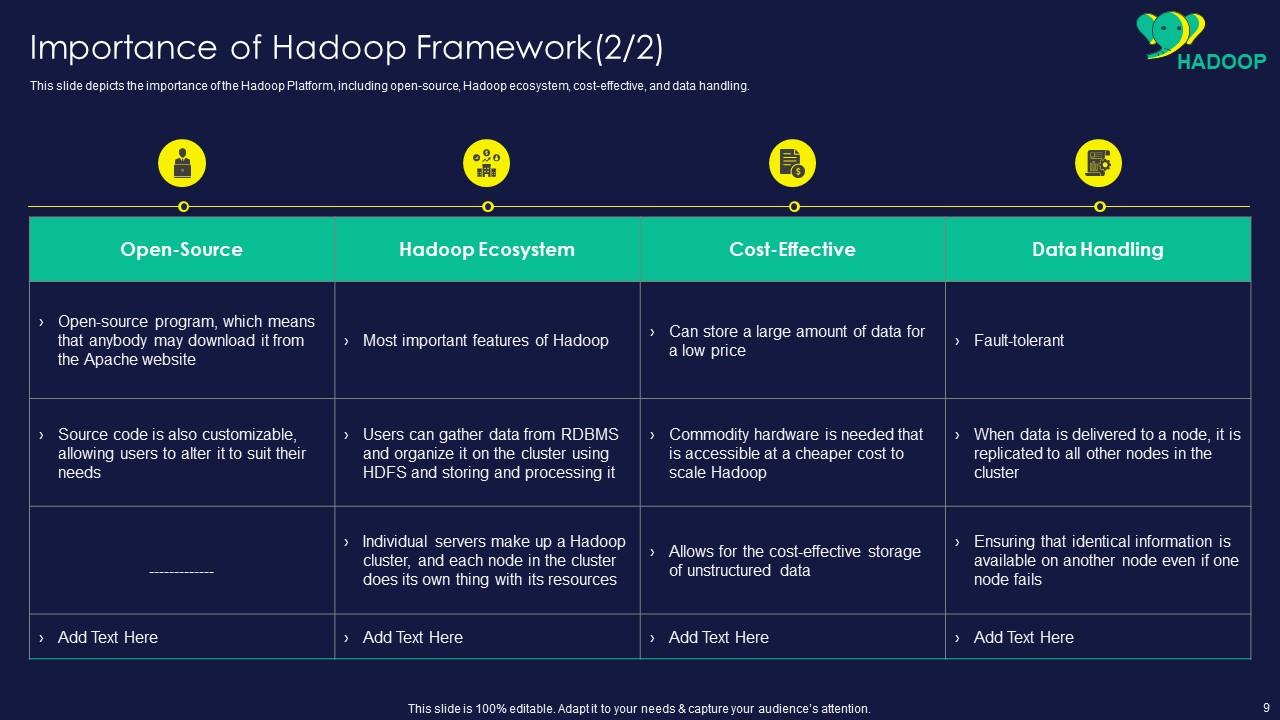

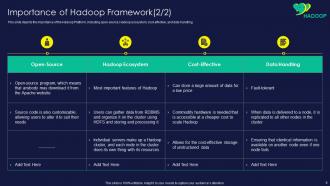

Slide 9: This slide presents importance of the Hadoop Platform, including open-source, Hadoop ecosystem, etc.

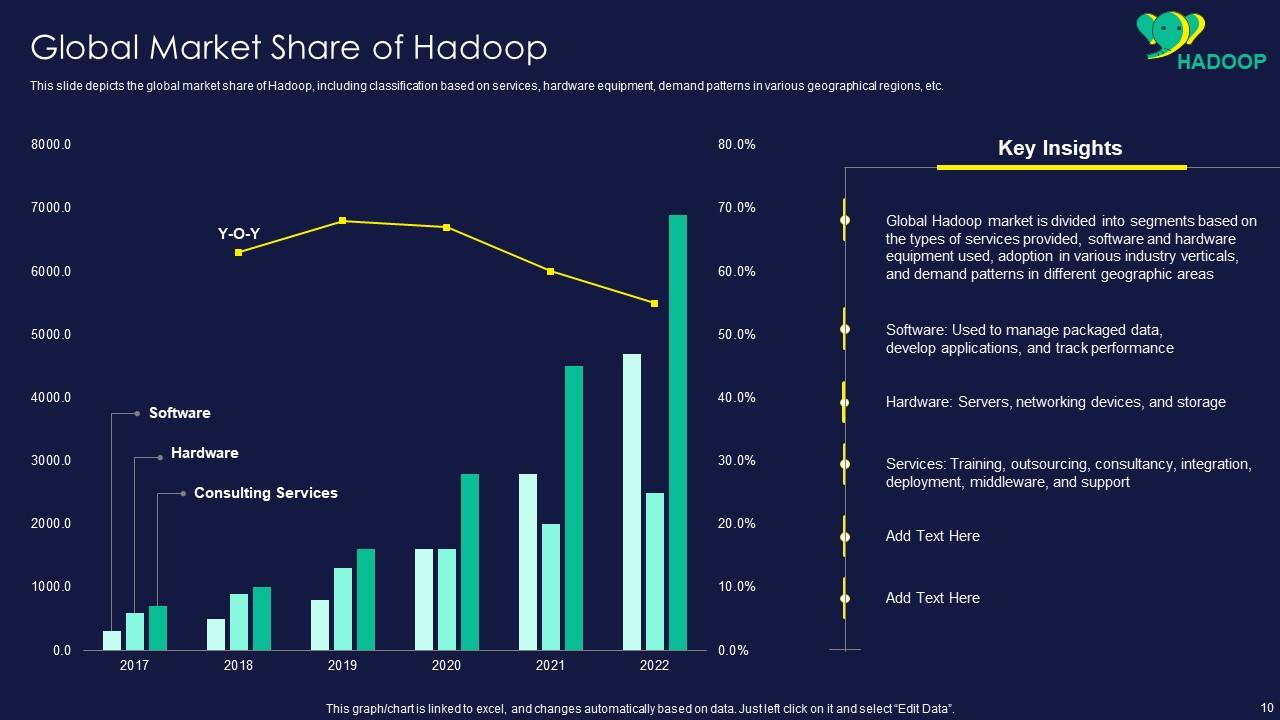

Slide 10: This slide displays global market share of Hadoop, including classification based on services.

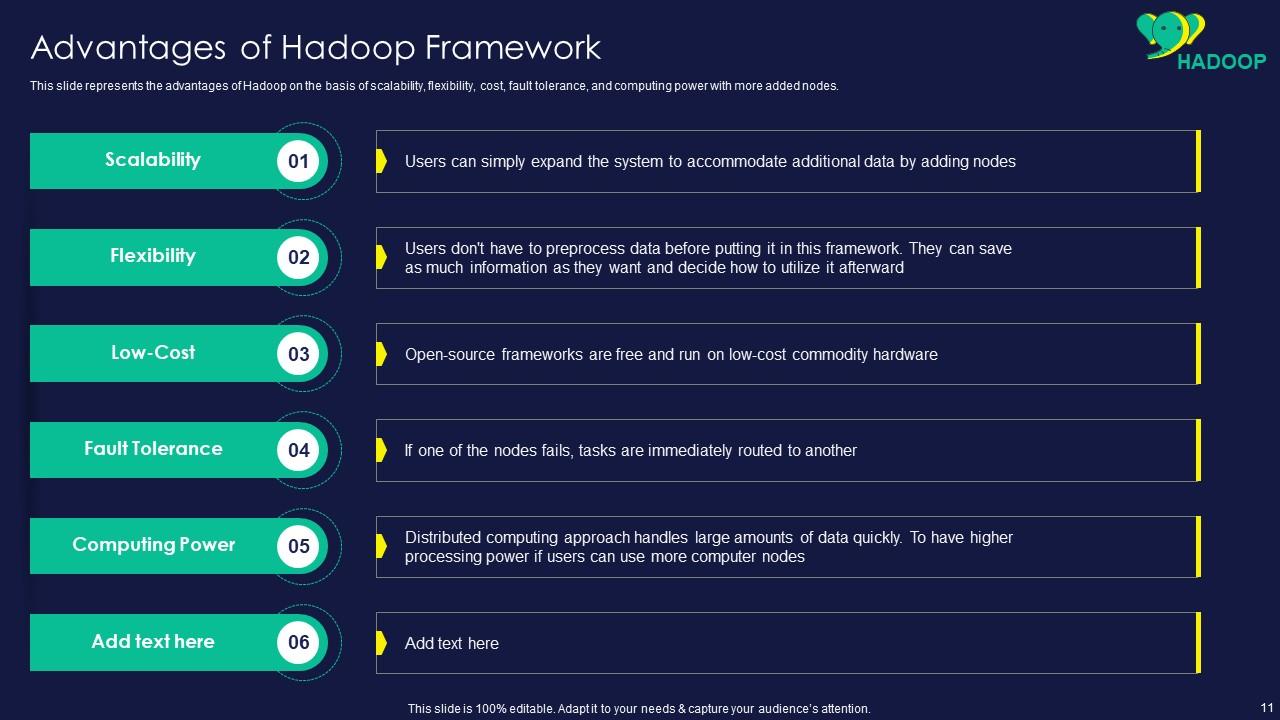

Slide 11: This slide represents advantages of Hadoop on the basis of scalability, flexibility, cost, etc.

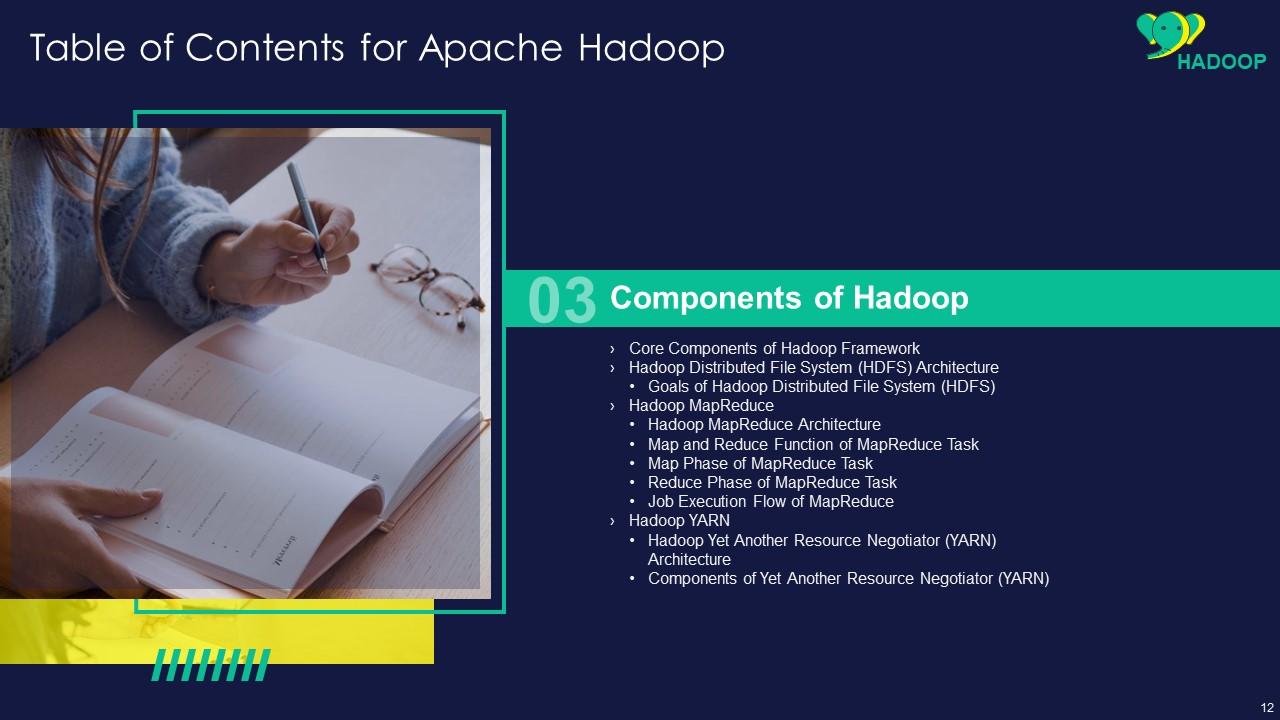

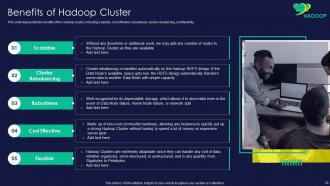

Slide 12: This slide showcases title for topics that are to be covered next in the template.

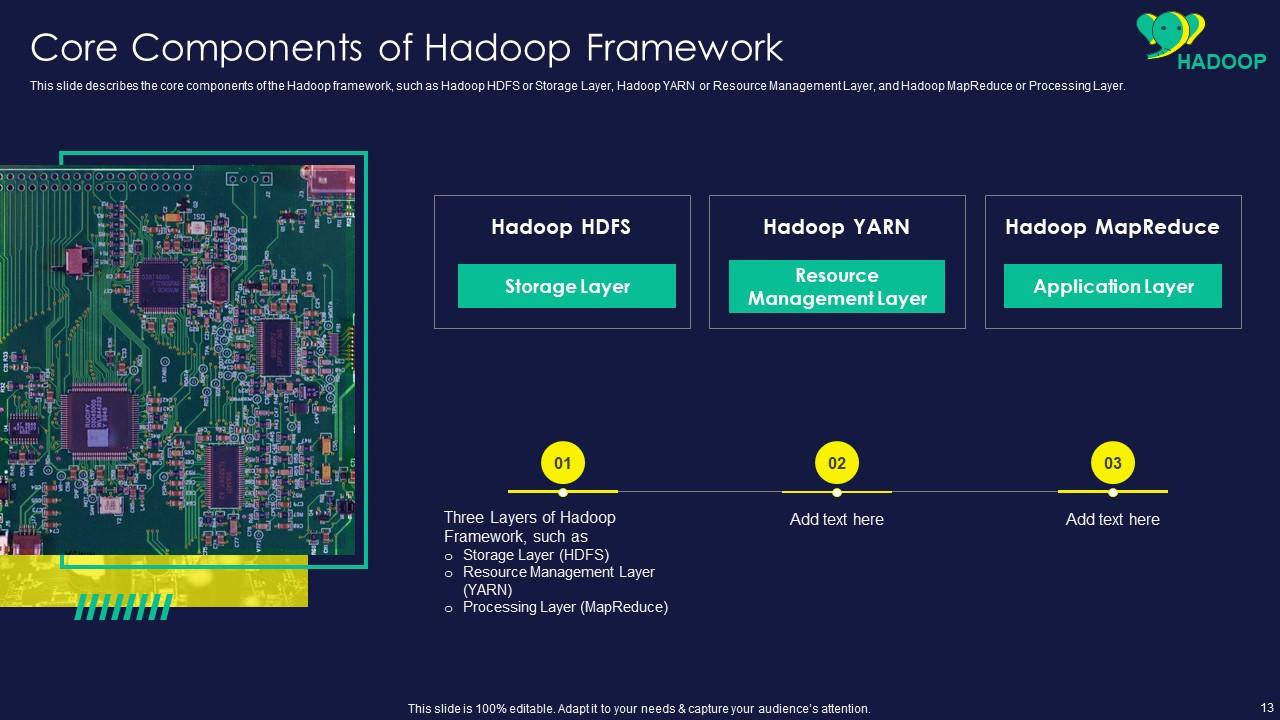

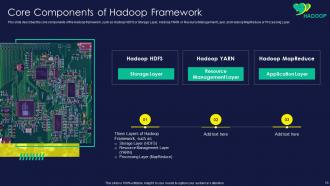

Slide 13: This slide shows Core Components of Hadoop Framework.

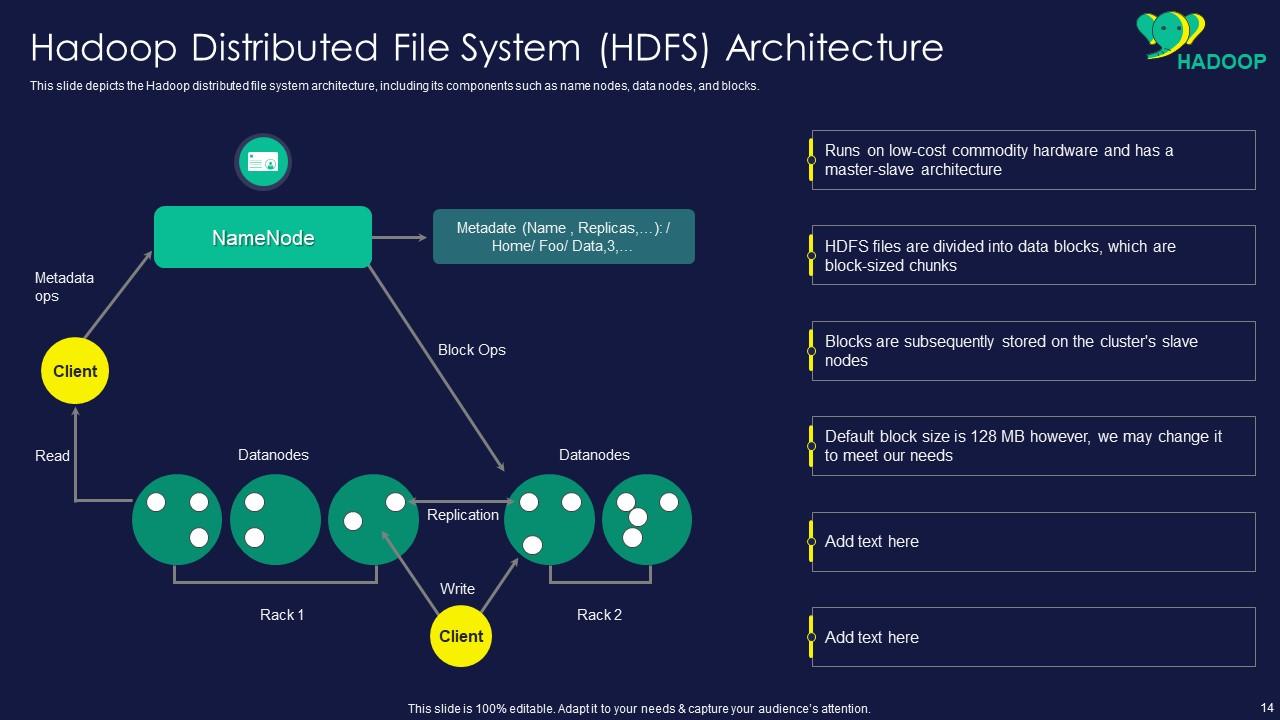

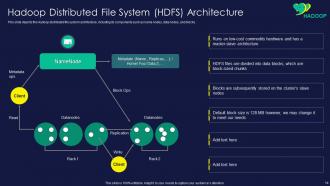

Slide 14: This slide presents Hadoop distributed file system architecture, including its components.

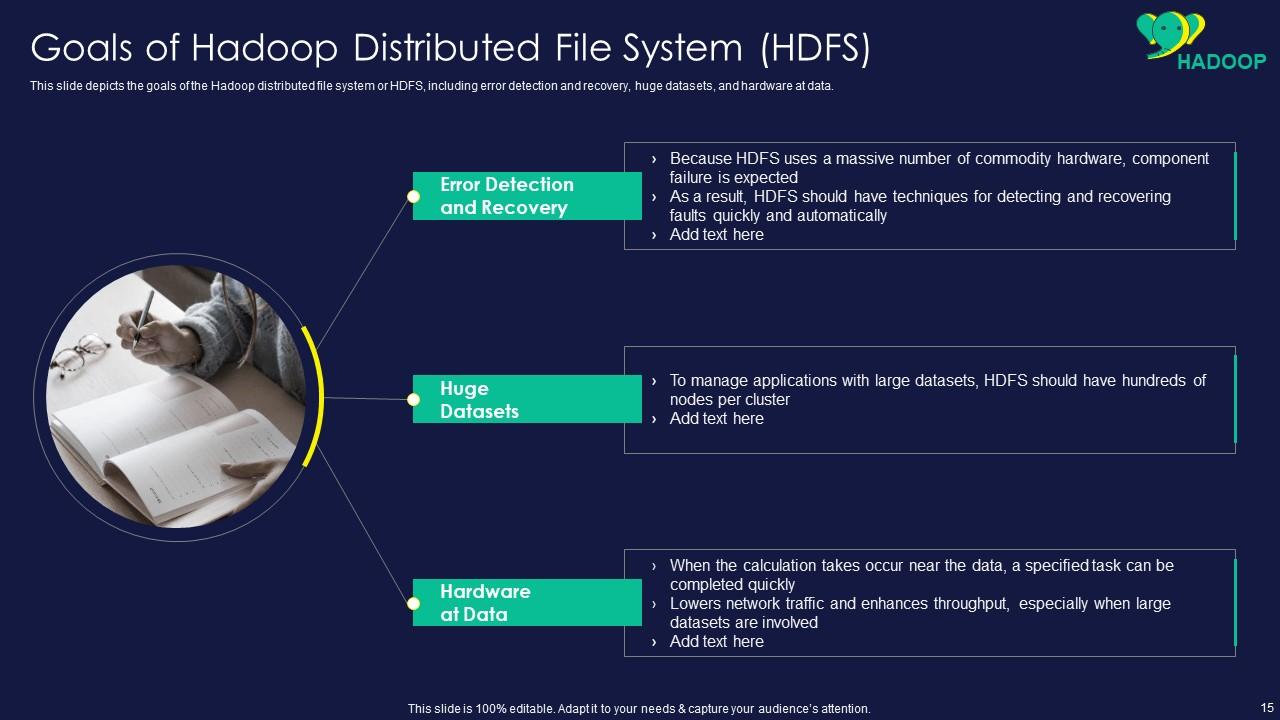

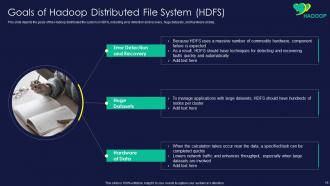

Slide 15: This slide displays Goals of Hadoop Distributed File System (HDFS).

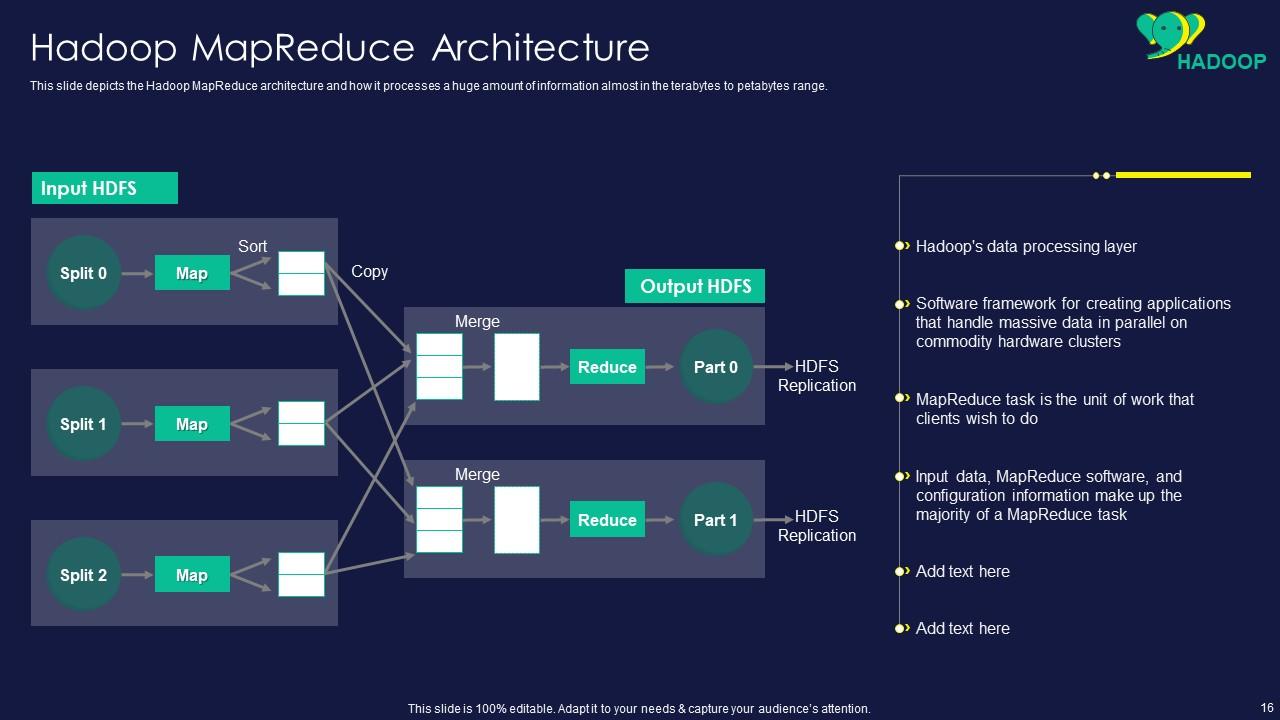

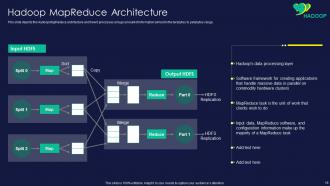

Slide 16: This slide represents Hadoop MapReduce architecture and how it processes a huge amount of information.

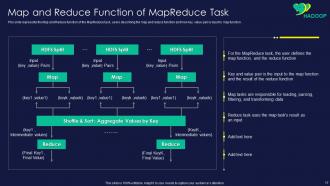

Slide 17: This slide showcases Map and Reduce Function of MapReduce Task.

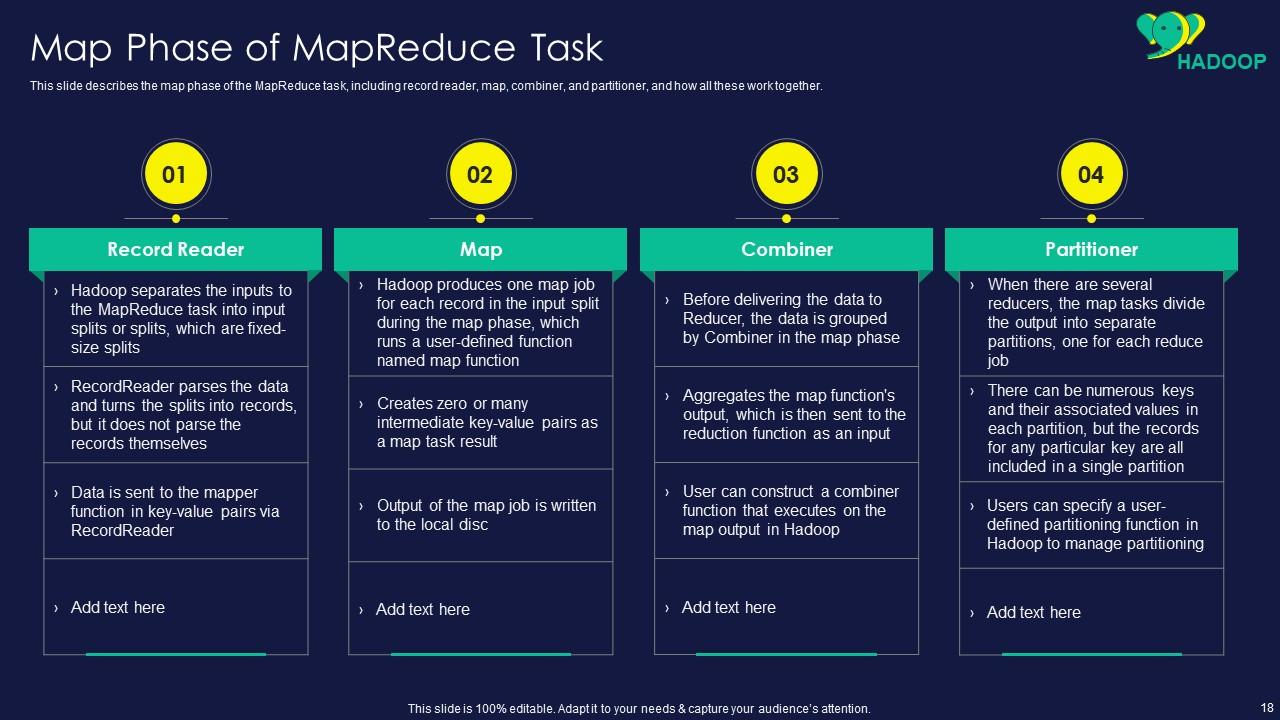

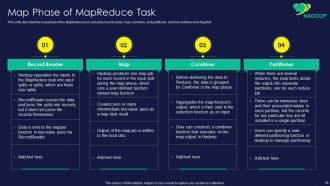

Slide 18: This slide shows map phase of the MapReduce task, including record reader, map, combiner, etc.

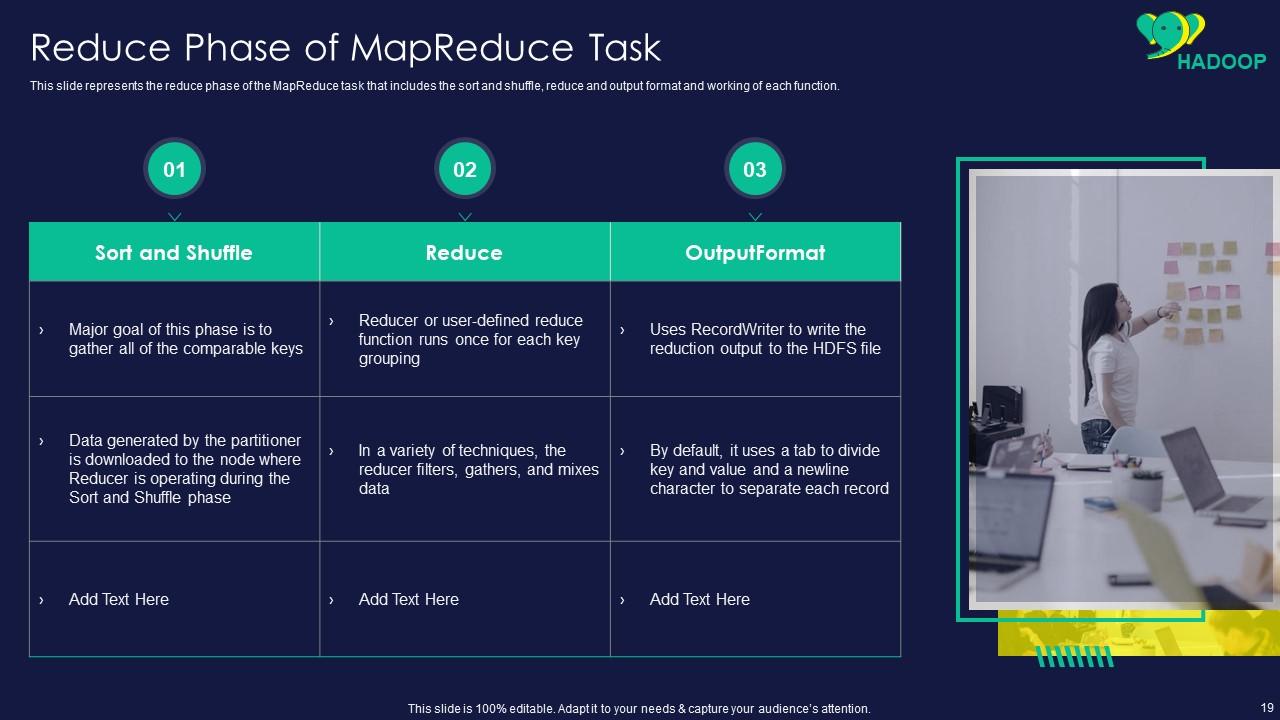

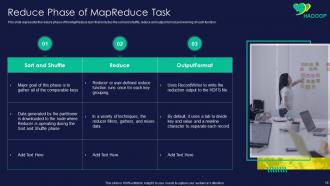

Slide 19: This slide presents reduce phase of the MapReduce task that includes the sort and shuffle.

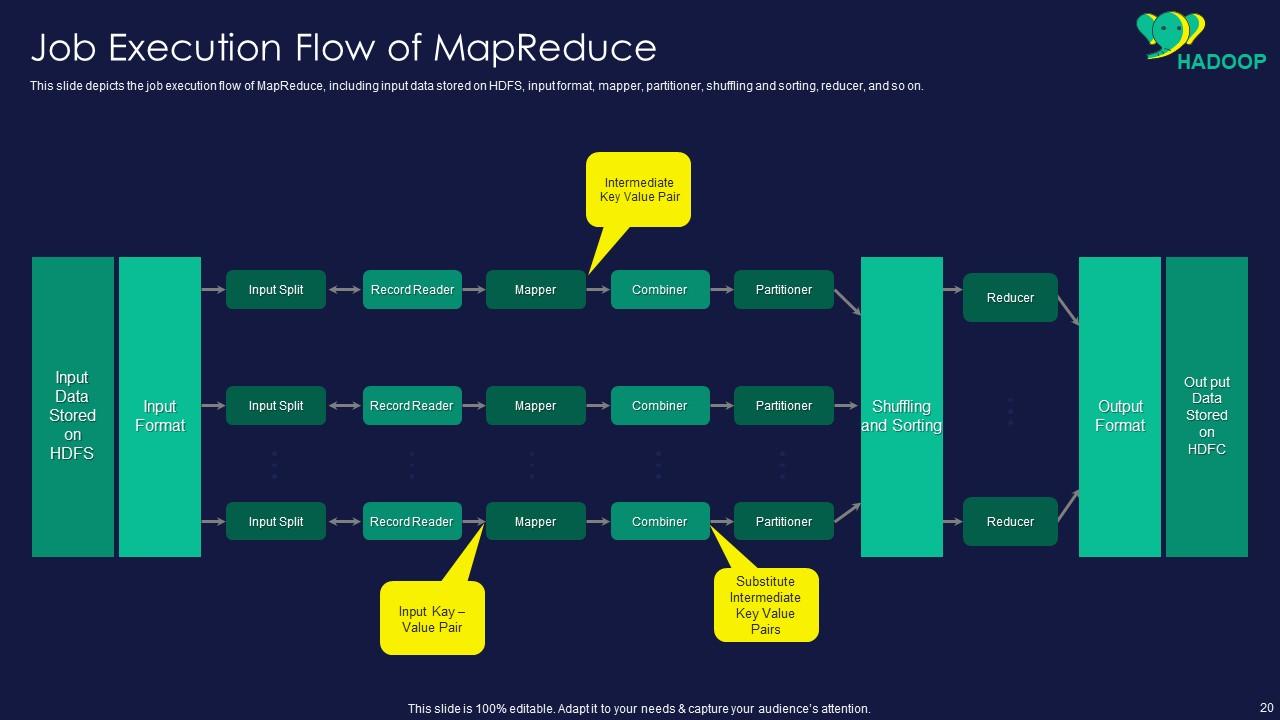

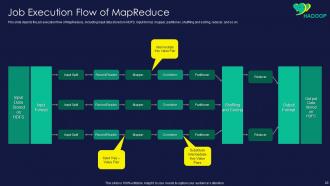

Slide 20: This slide displays job execution flow of MapReduce, including input data stored on HDFS.

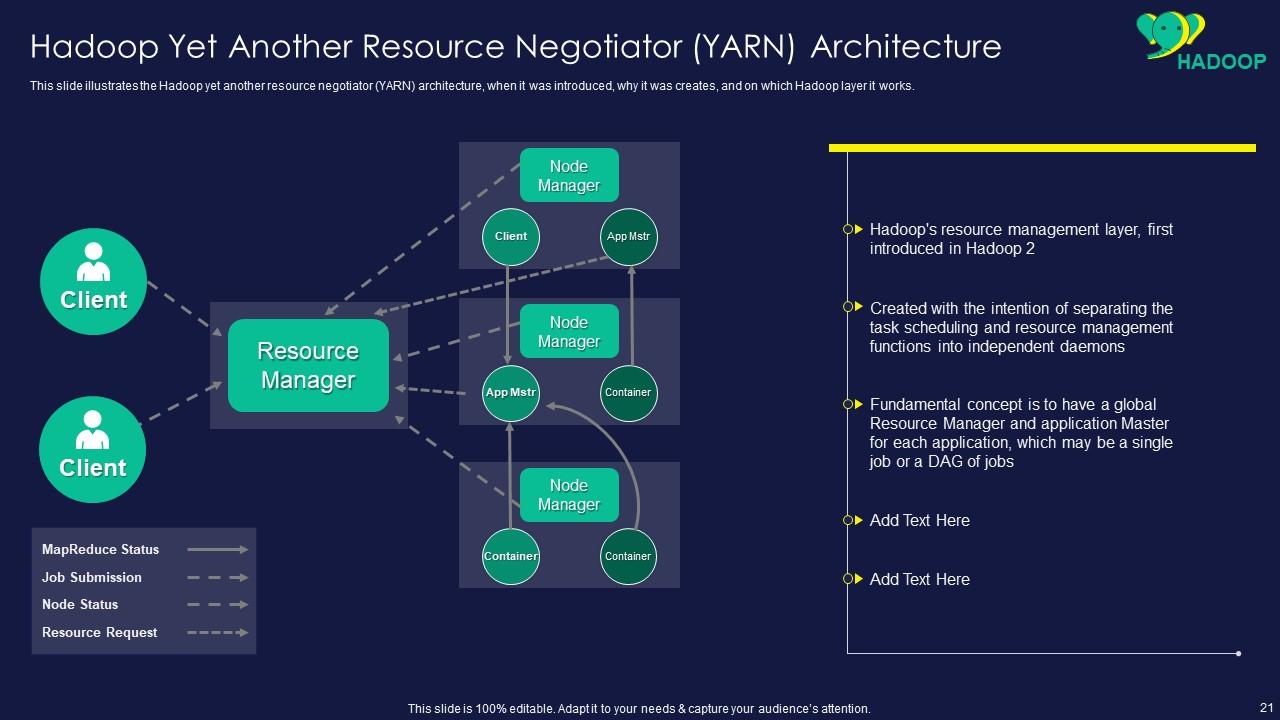

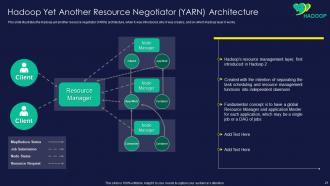

Slide 21: This slide represents Hadoop Yet Another Resource Negotiator (YARN) Architecture.

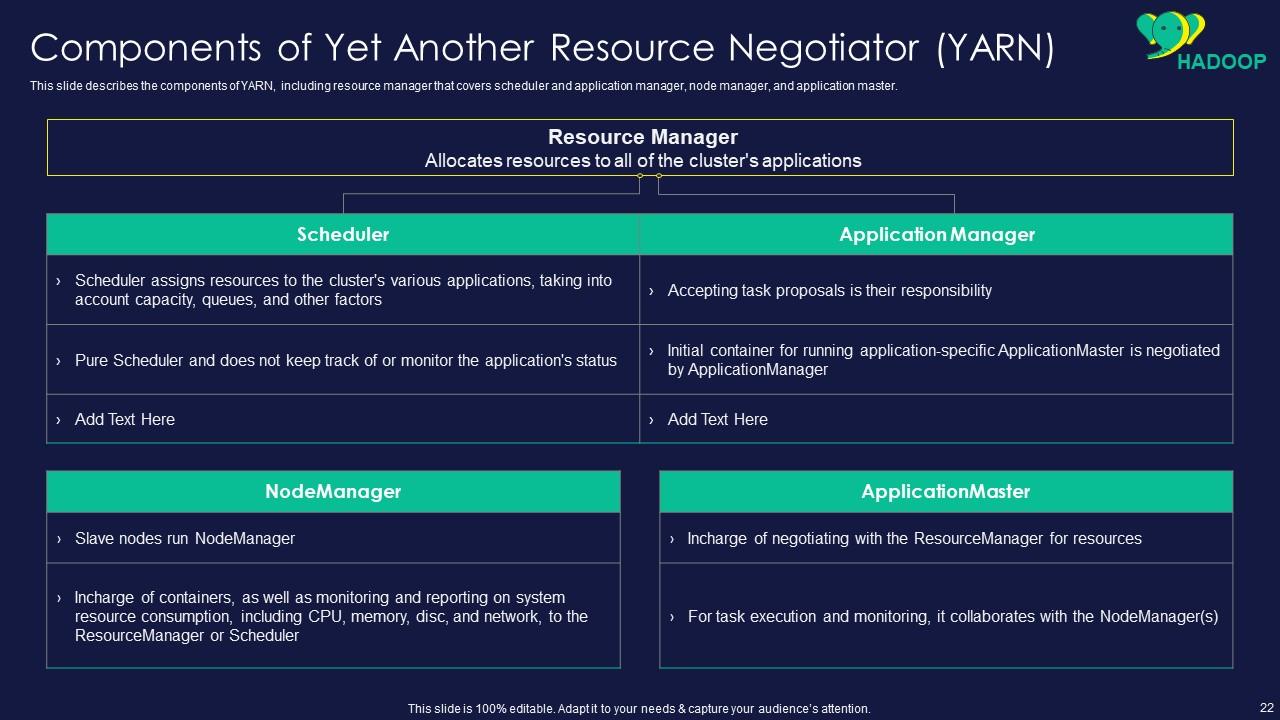

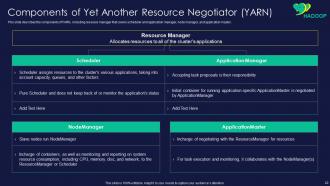

Slide 22: This slide showcases Components of Yet Another Resource Negotiator (YARN).

Slide 23: This slide shows title for topics that are to be covered next in the template.

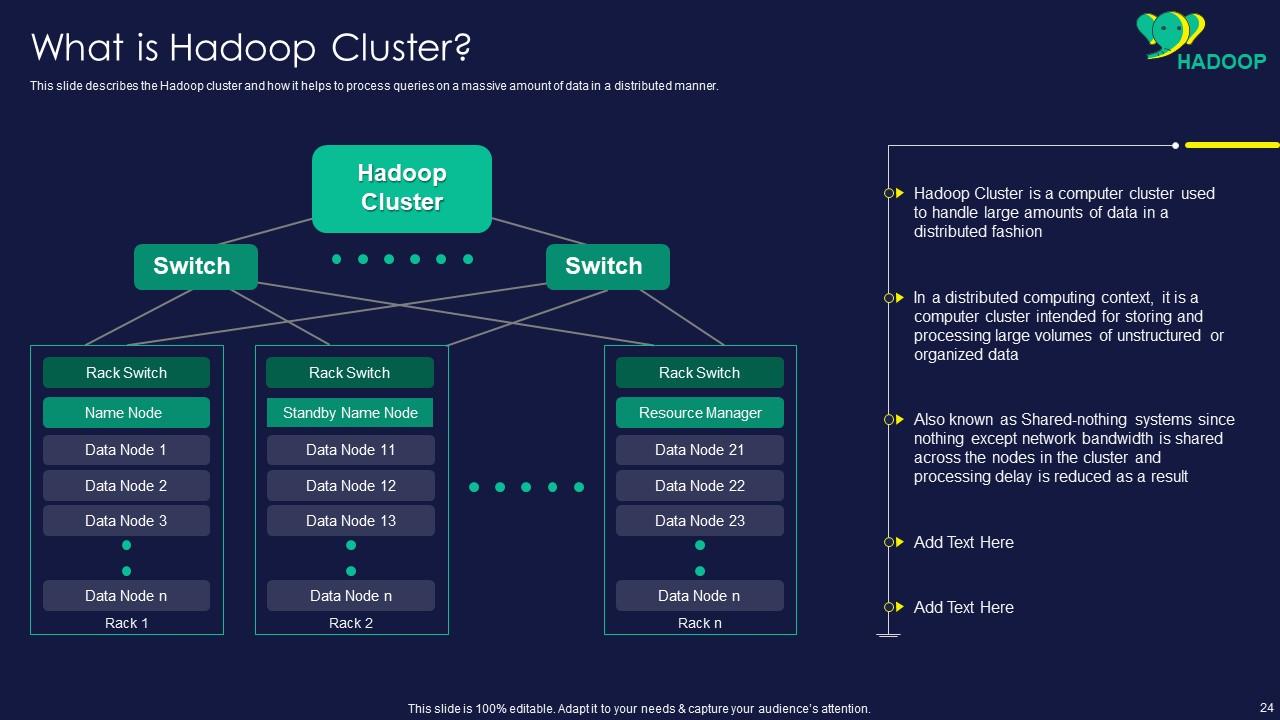

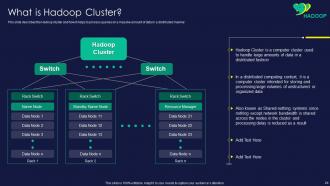

Slide 24: This slide presents Hadoop cluster and how it helps to process queries on a massive amount of data.

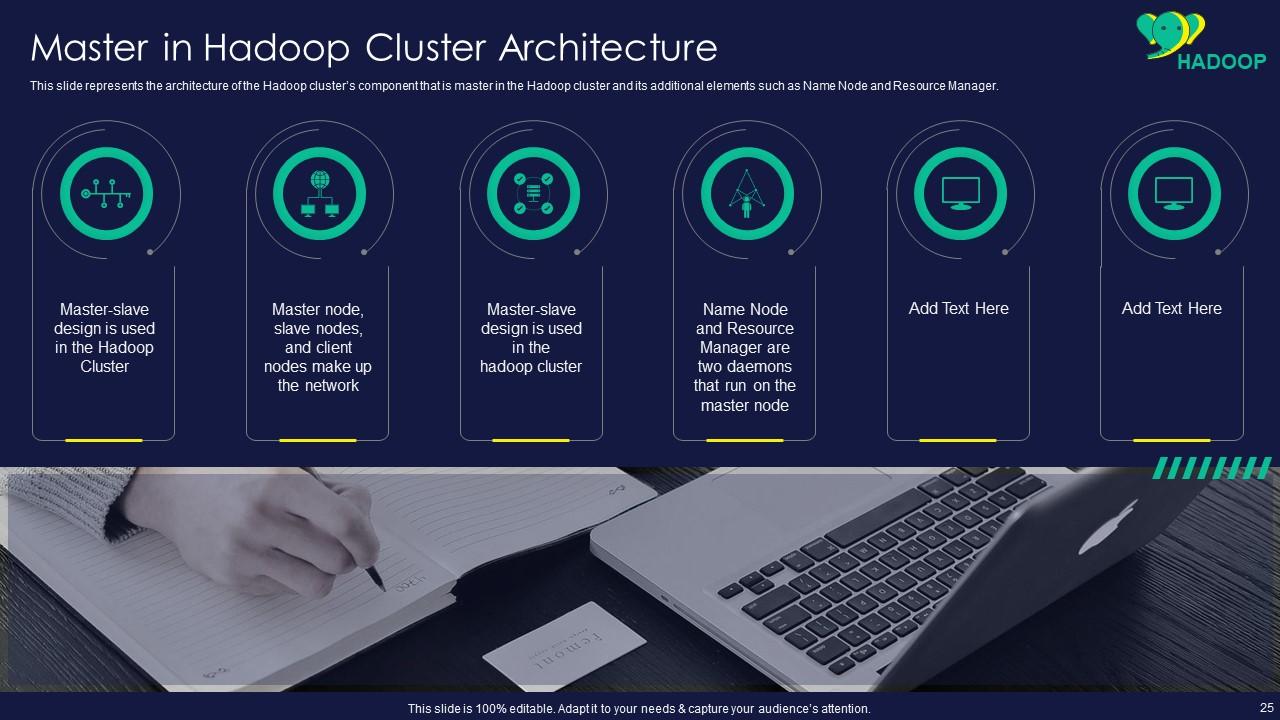

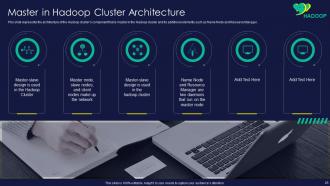

Slide 25: This slide displays architecture of the Hadoop cluster's components.

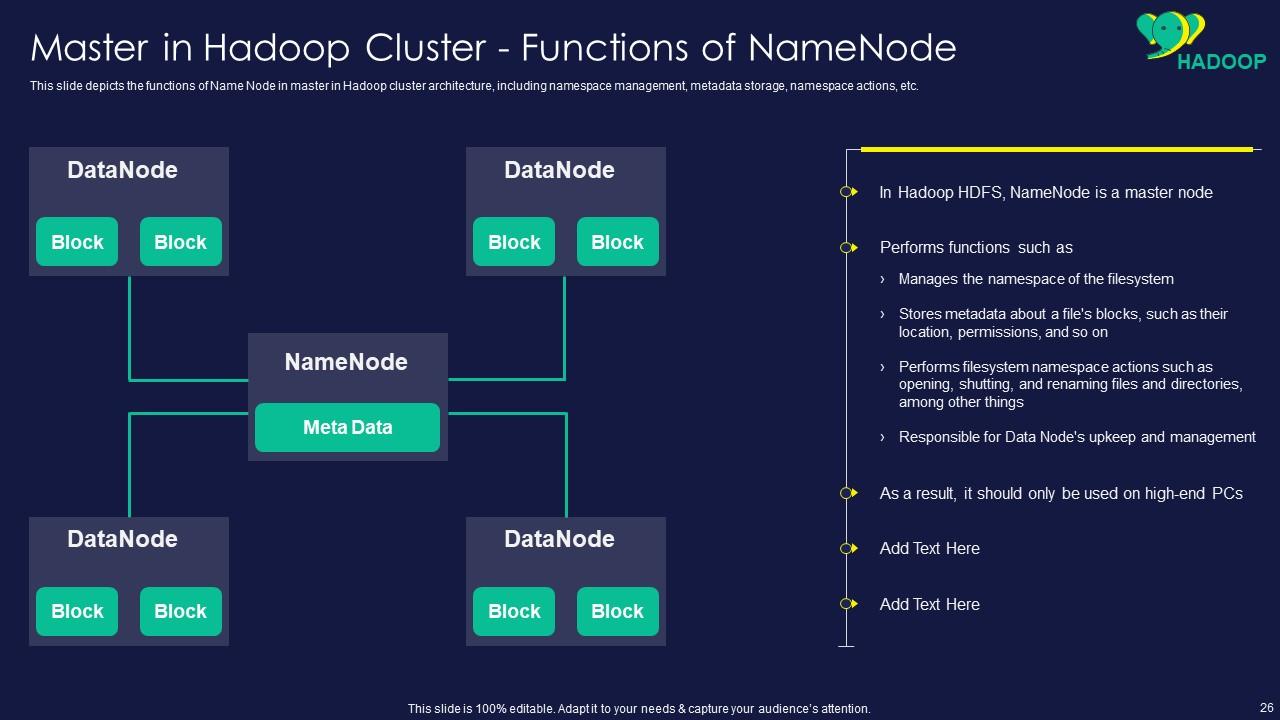

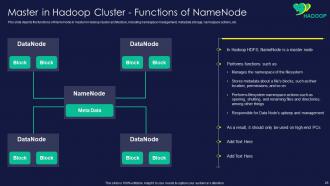

Slide 26: This slide represents functions of the NameNode on the master node in Hadoop cluster architecture.

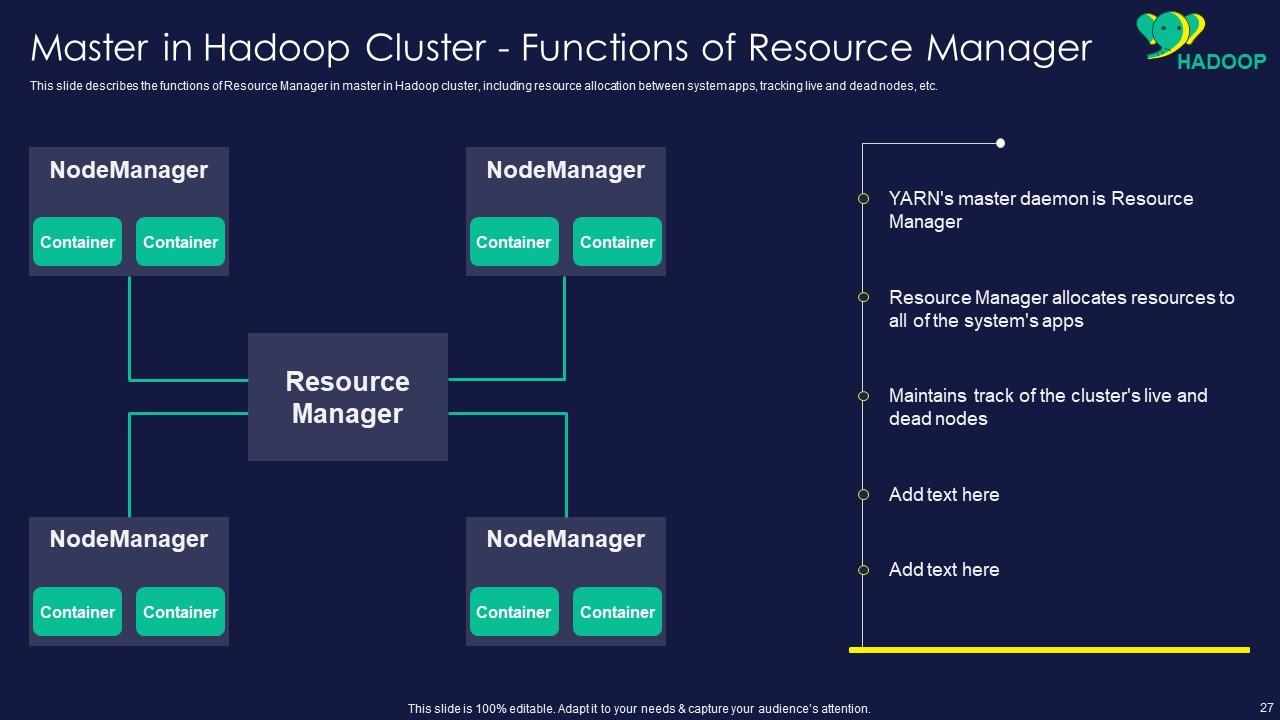

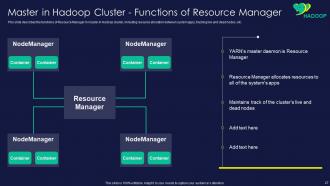

Slide 27: This slide showcases functions of the Resource Manager on the master node in Hadoop cluster architecture.

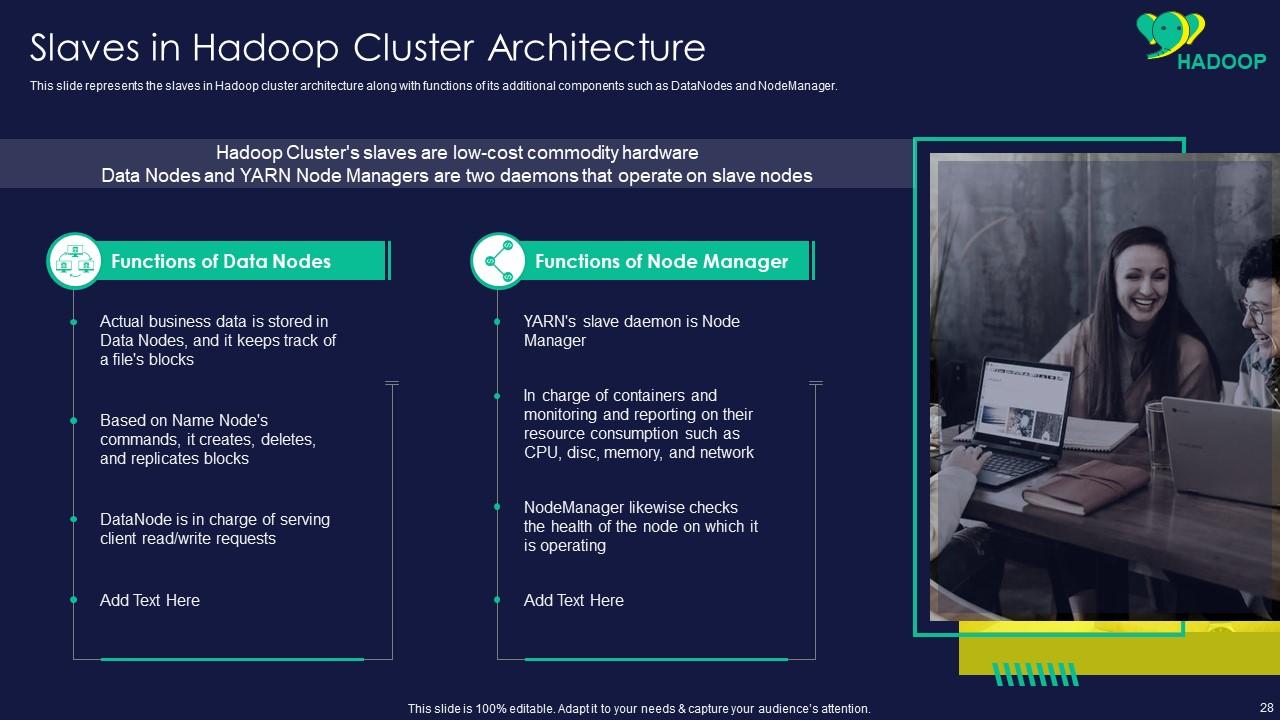

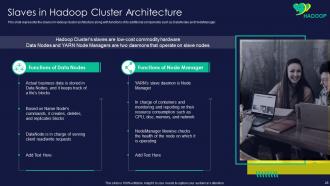

Slide 28: This slide shows slaves in Hadoop cluster architecture along with functions of its additional components.

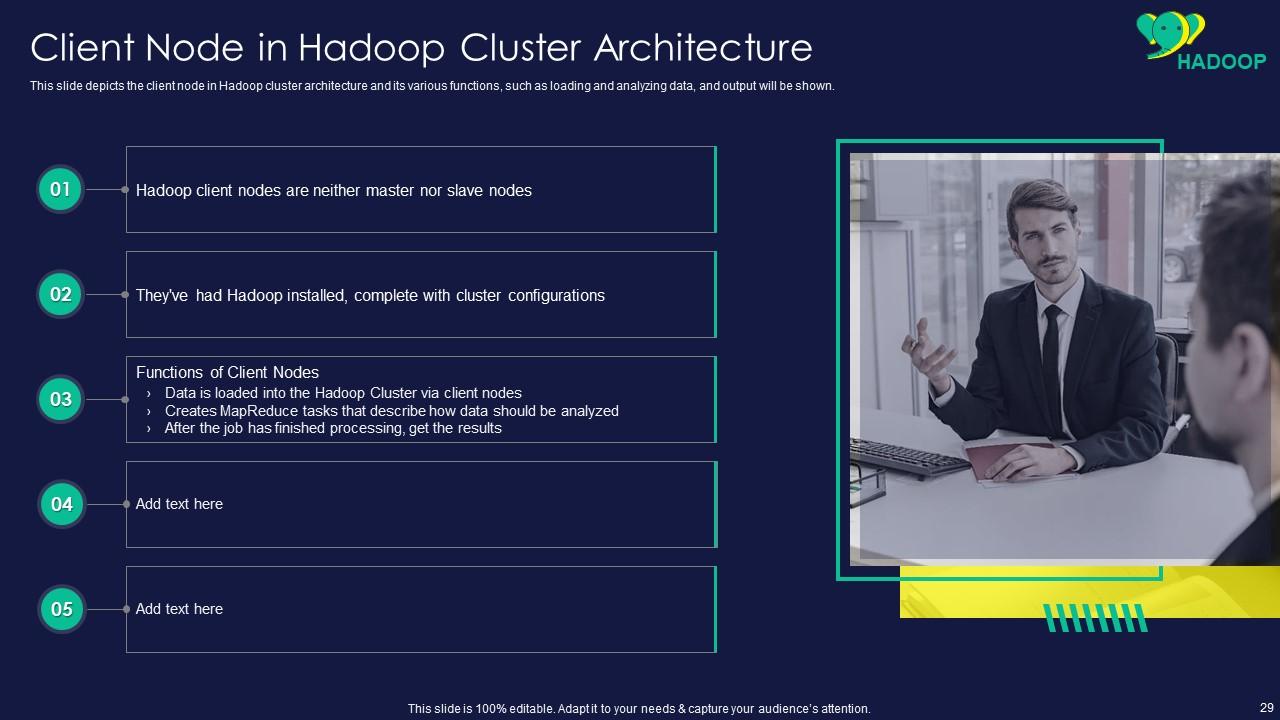

Slide 29: This slide presents client node in Hadoop cluster architecture and its various functions.

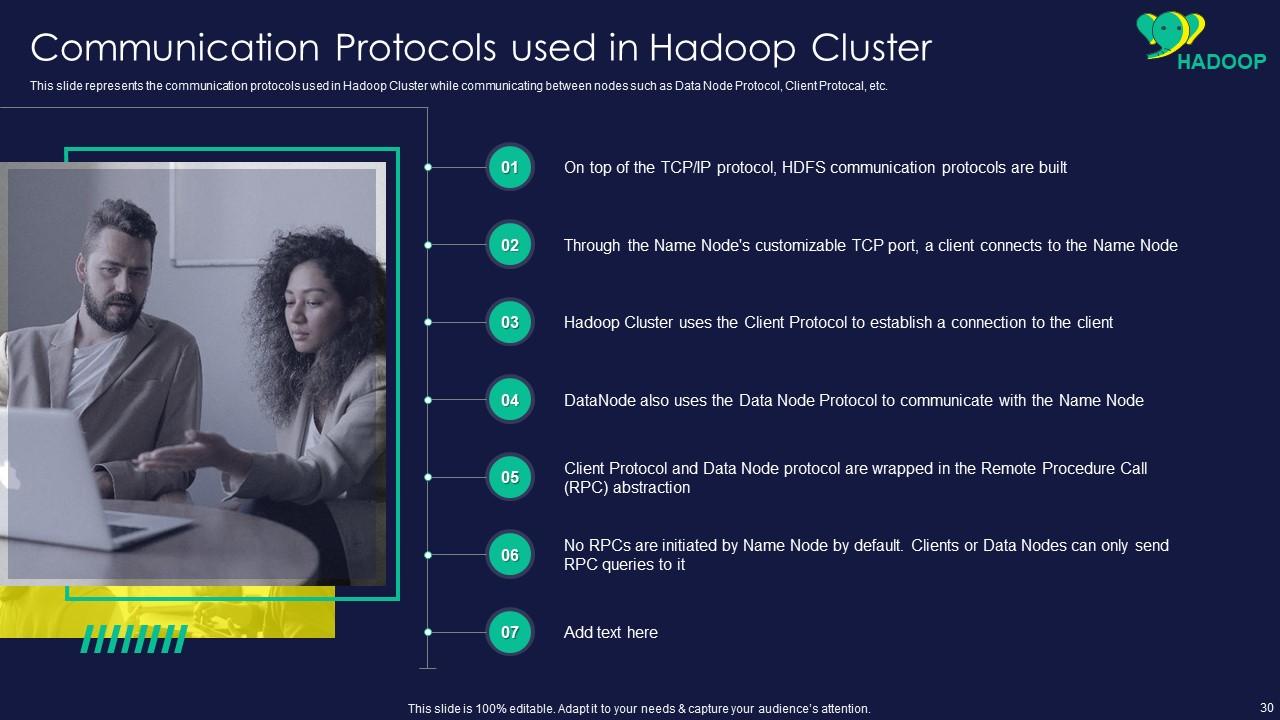

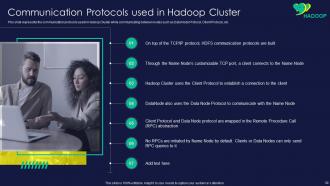

Slide 30: This slide displays Communication Protocols used in Hadoop Cluster.

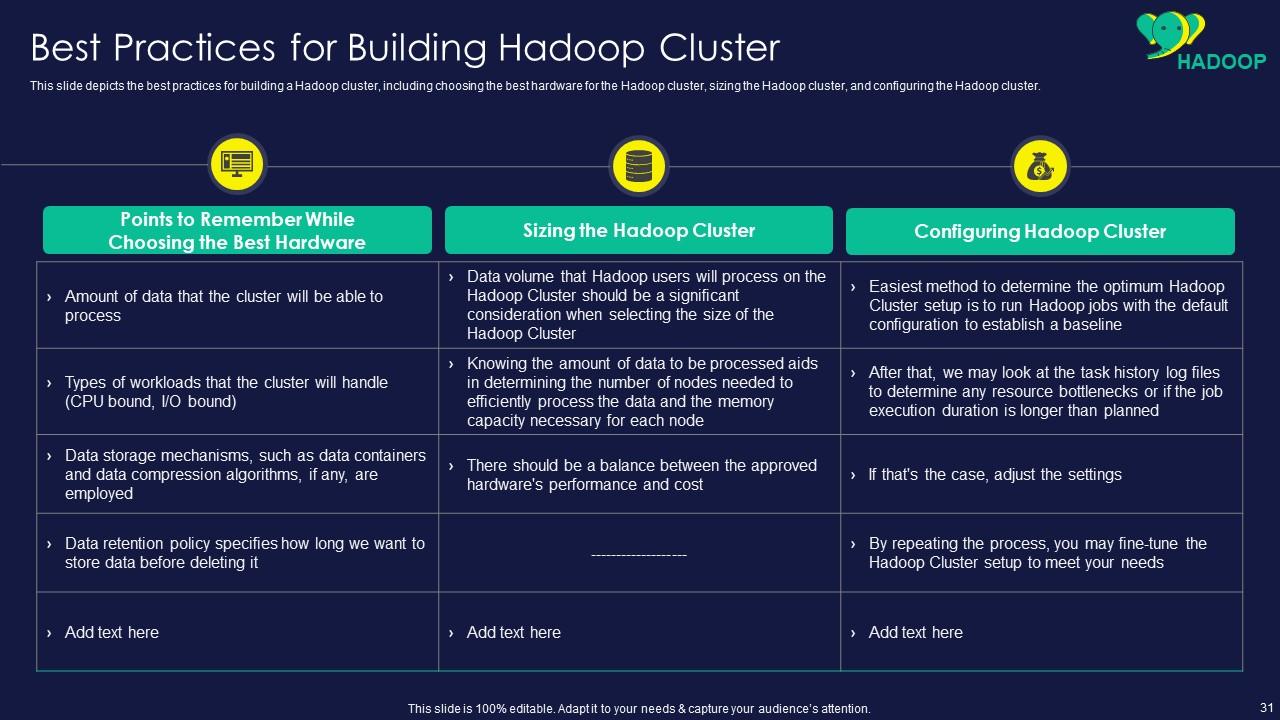

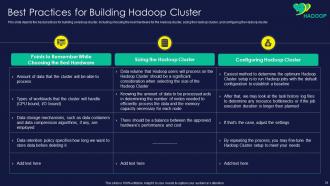

Slide 31: This slide represents Best Practices for Building Hadoop Cluster.

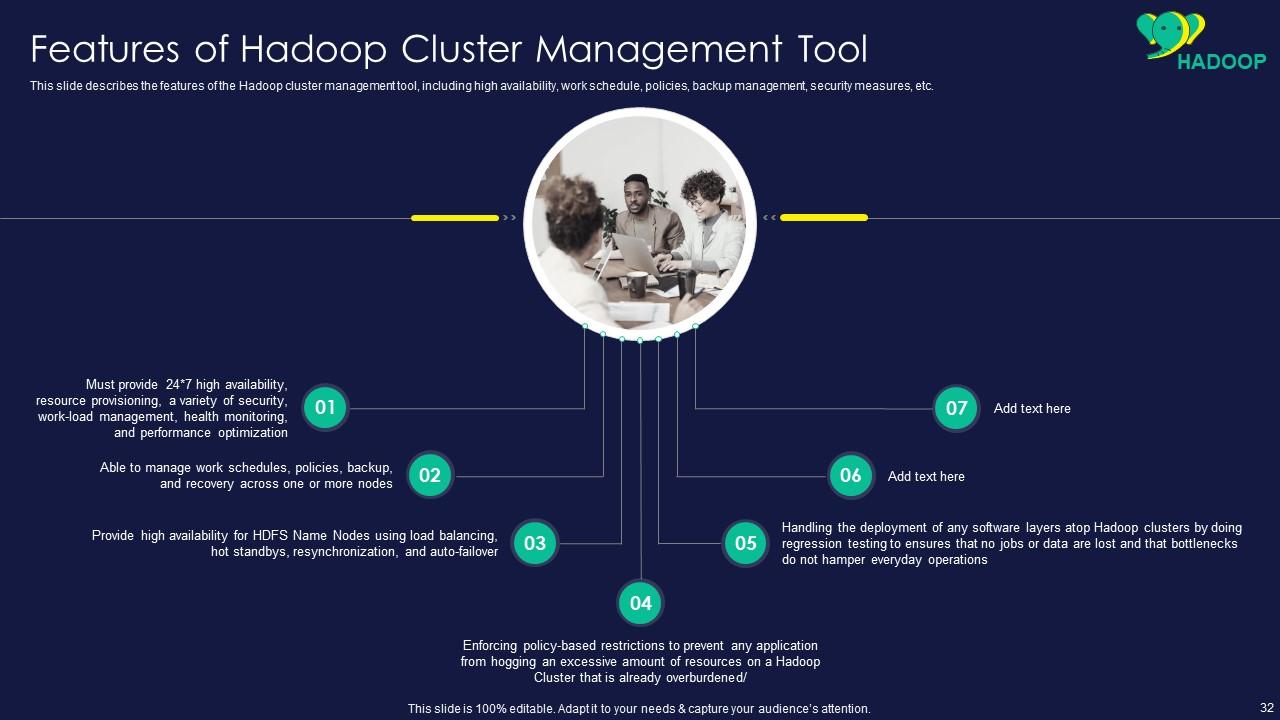

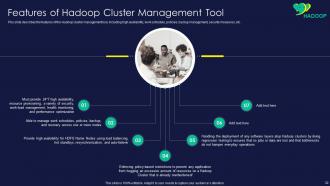

Slide 32: This slide showcases Features of Hadoop Cluster Management Tool.

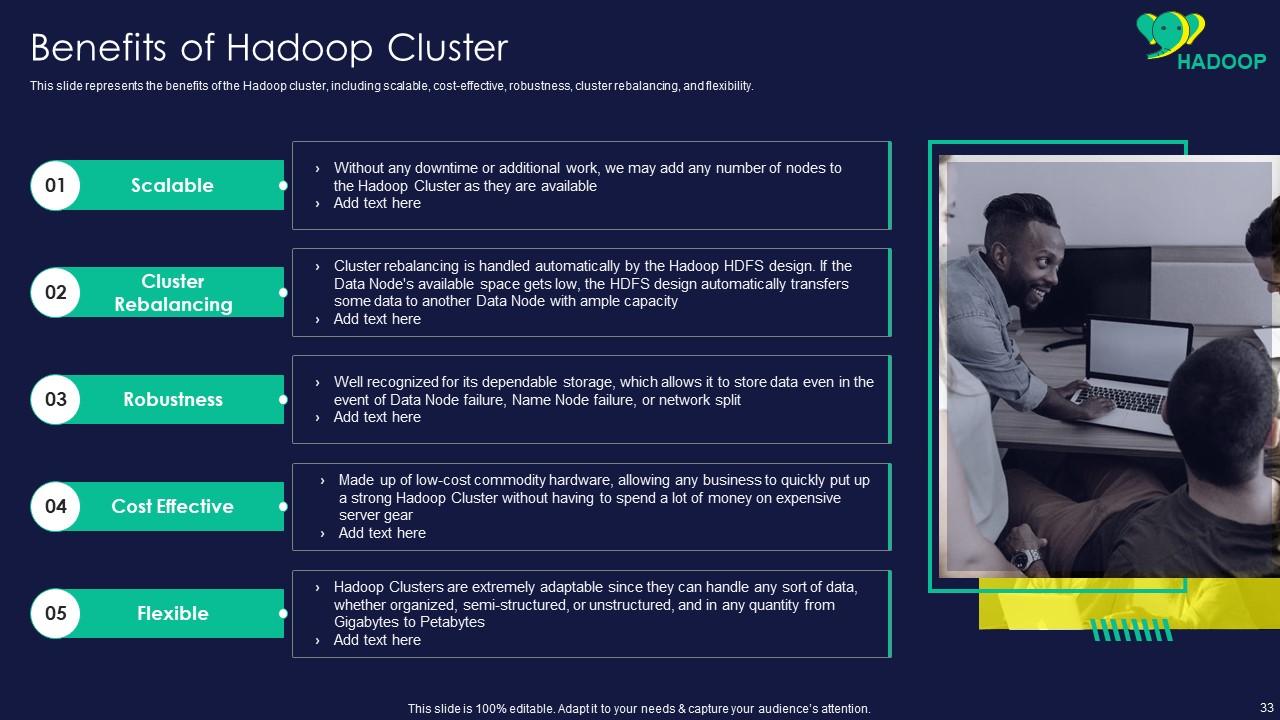

Slide 33: This slide shows benefits of the Hadoop cluster, including scalability, cost-effectiveness, robustness, etc.

Slide 34: This slide presents title for topics that are to be covered next in the template.

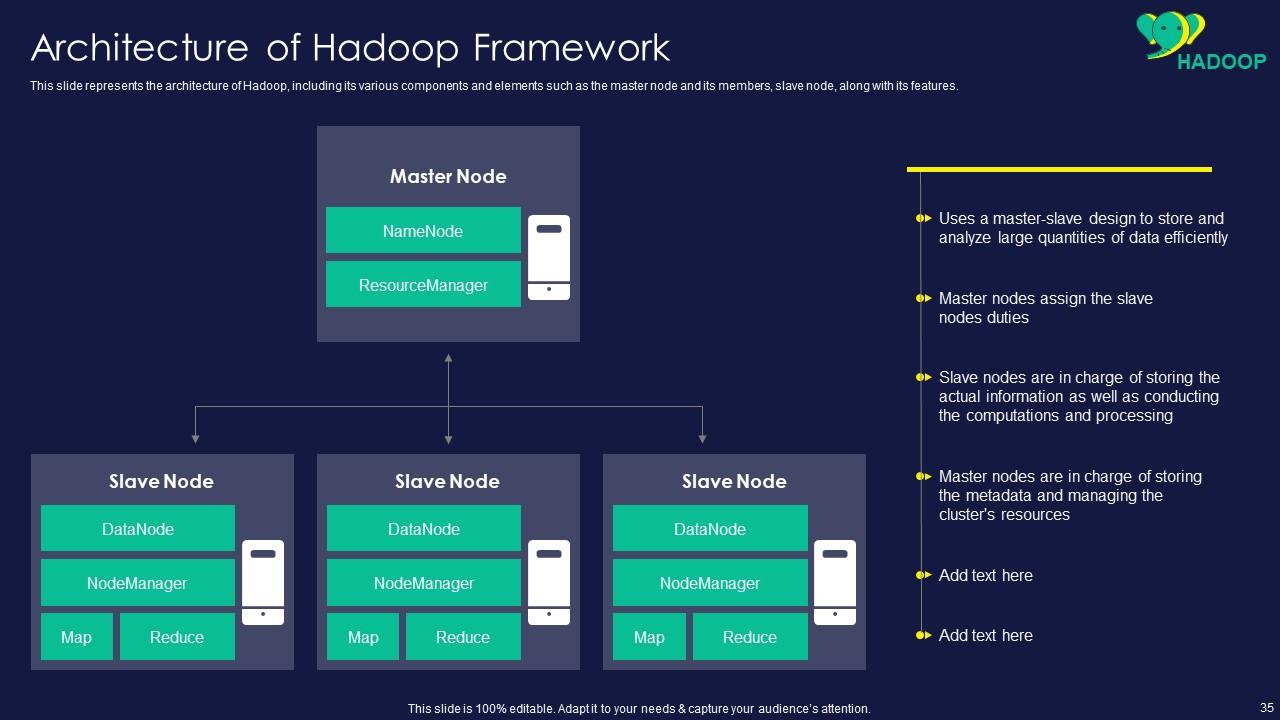

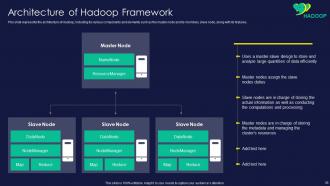

Slide 35: This slide displays architecture of Hadoop, including its various components and elements.

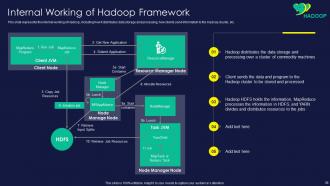

Slide 36: This slide represents internal working of Hadoop, including how it distributes data storage and processing.

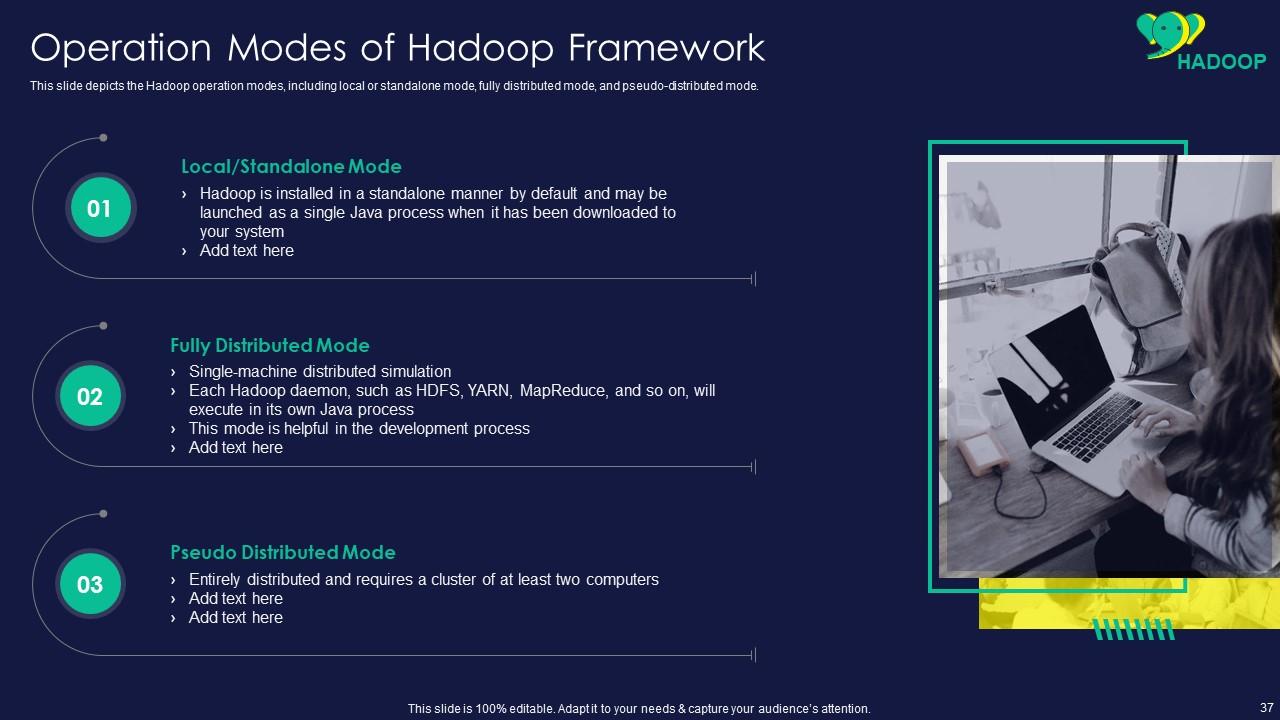

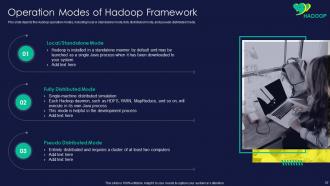

Slide 37: This slide showcases Operation Modes of Hadoop Framework.

Slide 38: This slide shows title for topics that are to be covered next in the template.

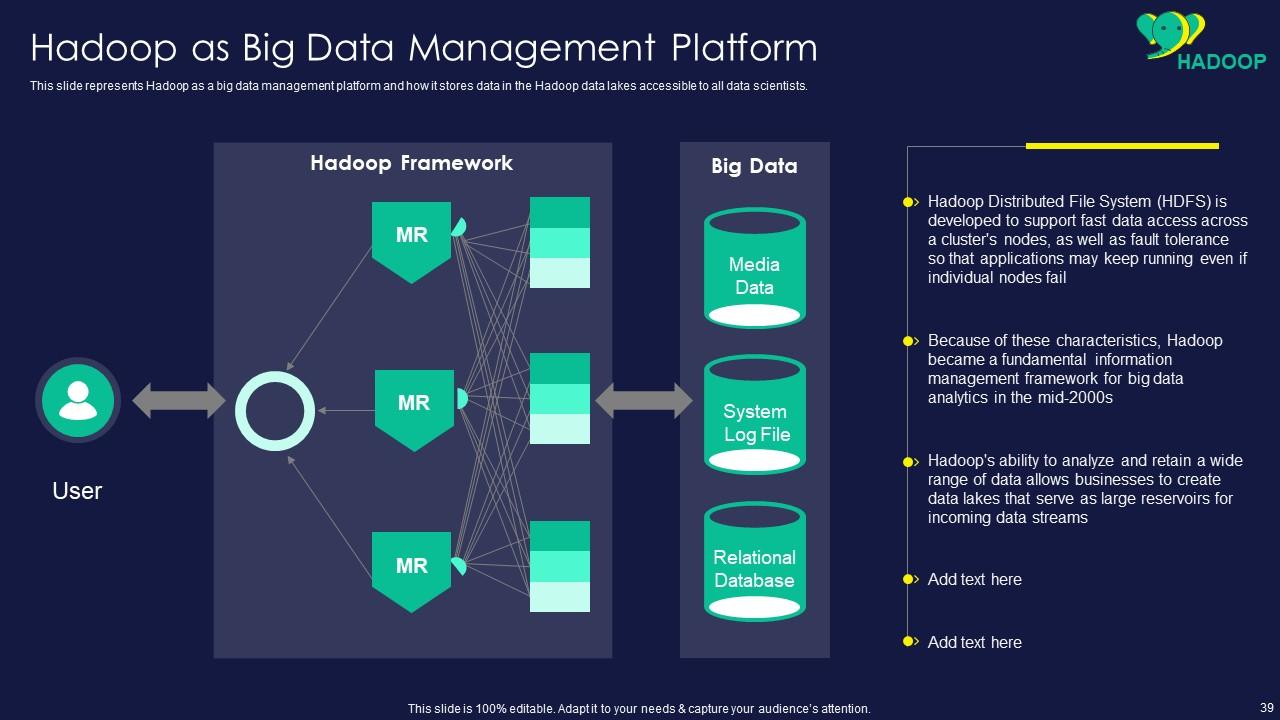

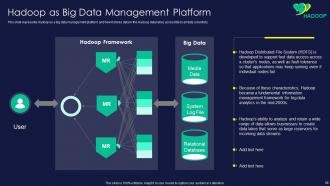

Slide 39: This slide presents Hadoop as a big data management platform and how it stores data in the Hadoop data lakes.

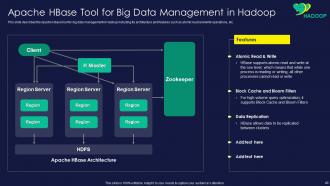

Slide 40: This slide displays Apache HBase Tool for Big Data Management in Hadoop.

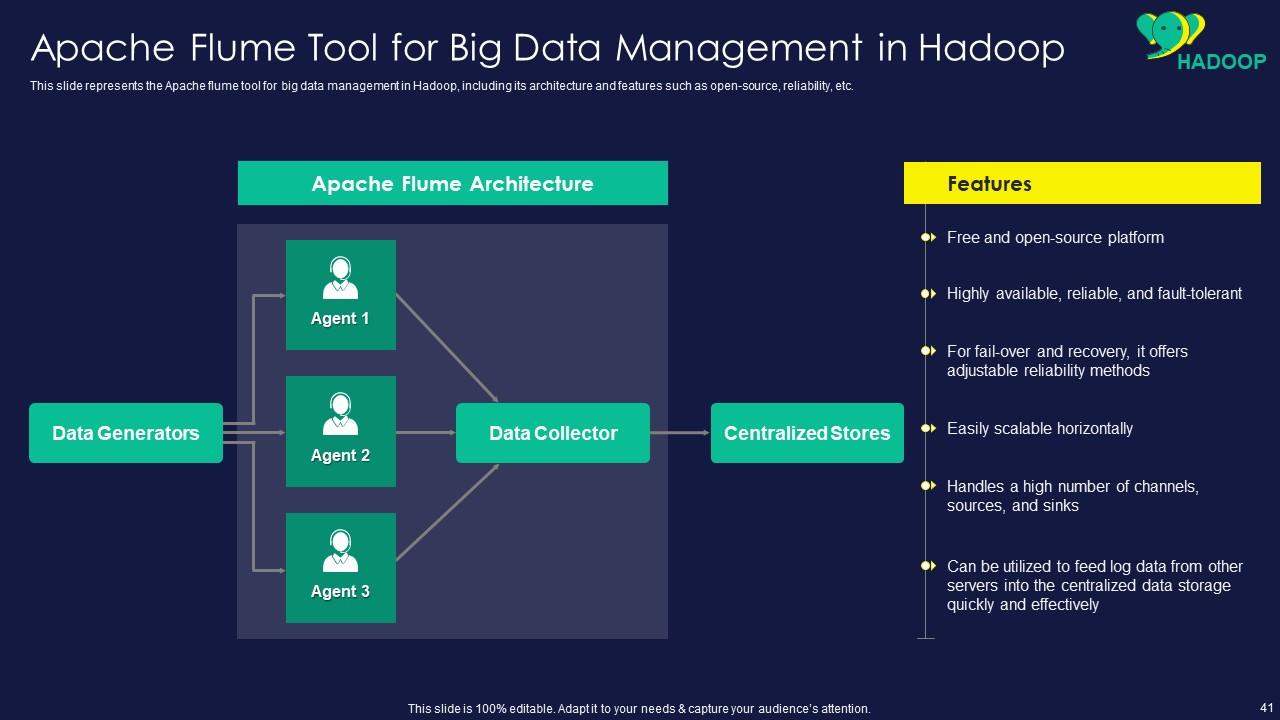

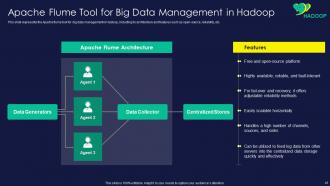

Slide 41: This slide represents Apache Flume Tool for Big Data Management in Hadoop.

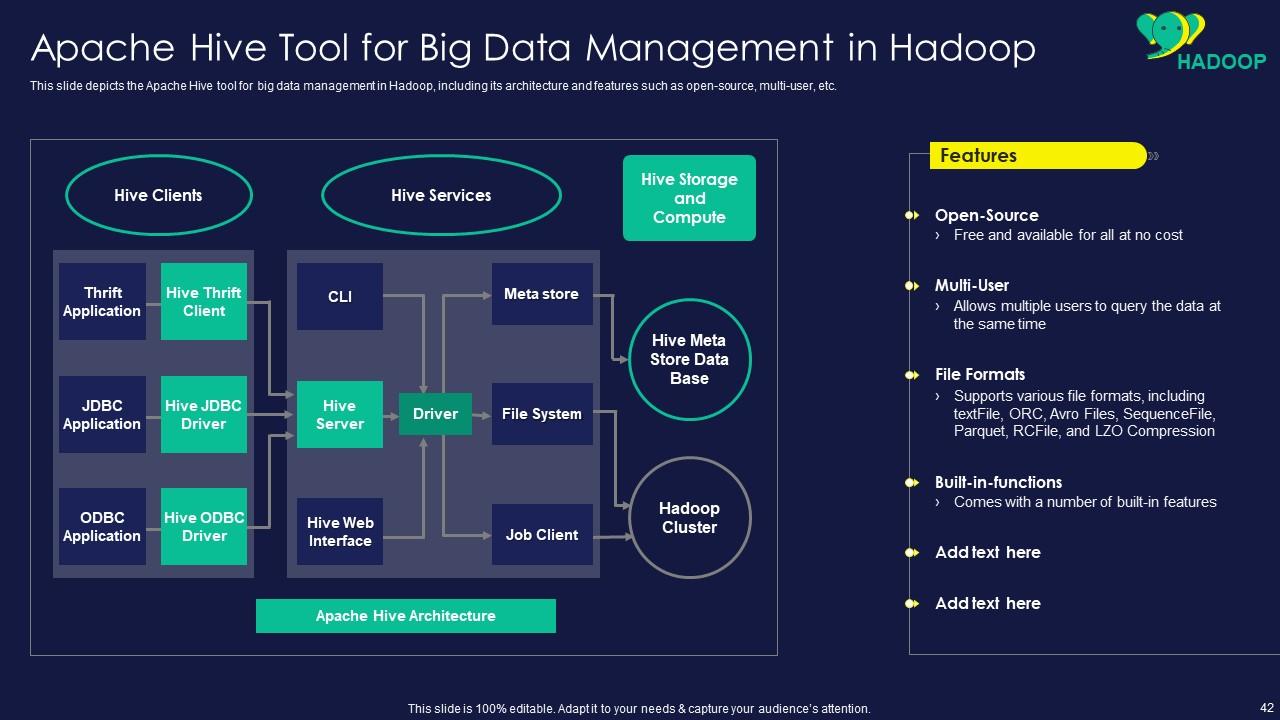

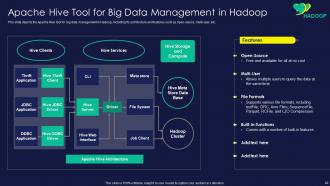

Slide 42: This slide showcases Apache Hive Tool for Big Data Management in Hadoop.

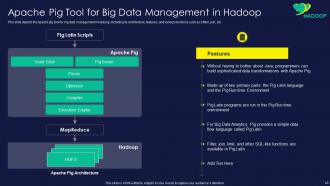

Slide 43: This slide shows Apache Pig Tool for Big Data Management in Hadoop.

Slide 44: This slide presents title for topics that are to be covered next in the template.

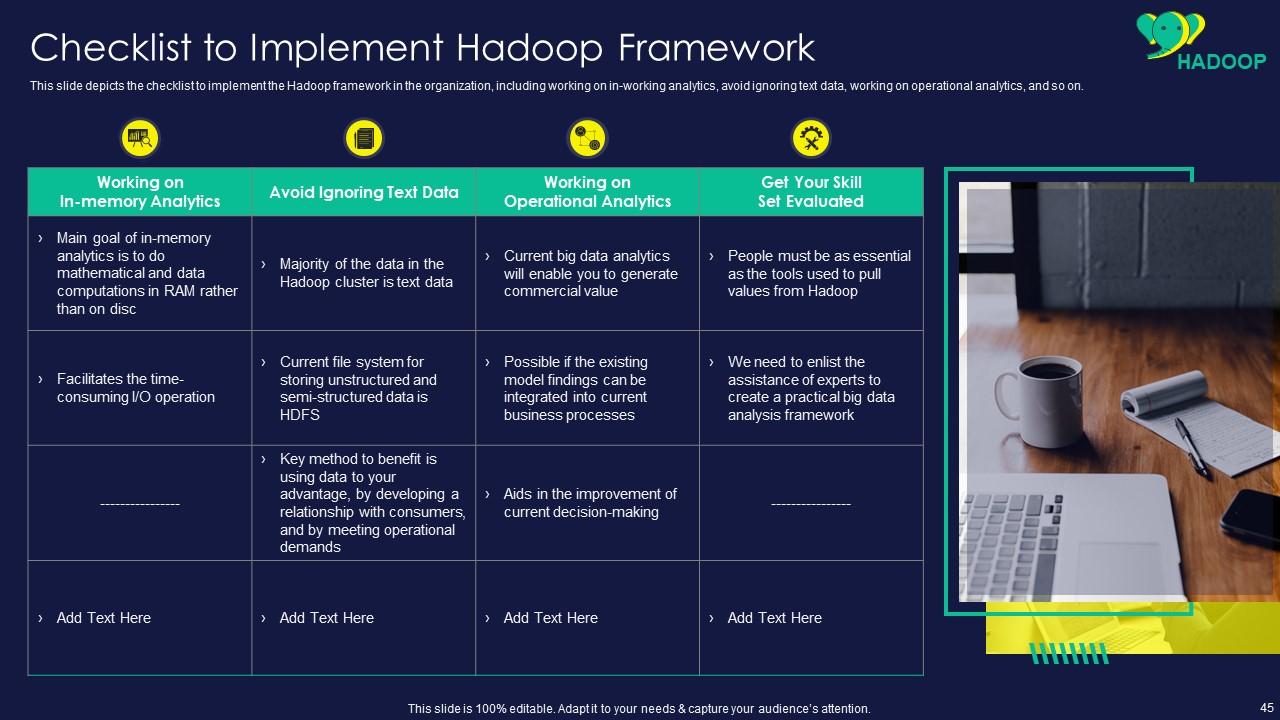

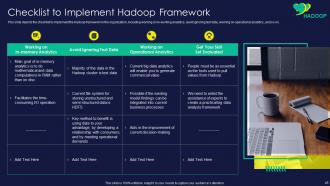

Slide 45: This slide displays checklist to implement the Hadoop framework in the organization.

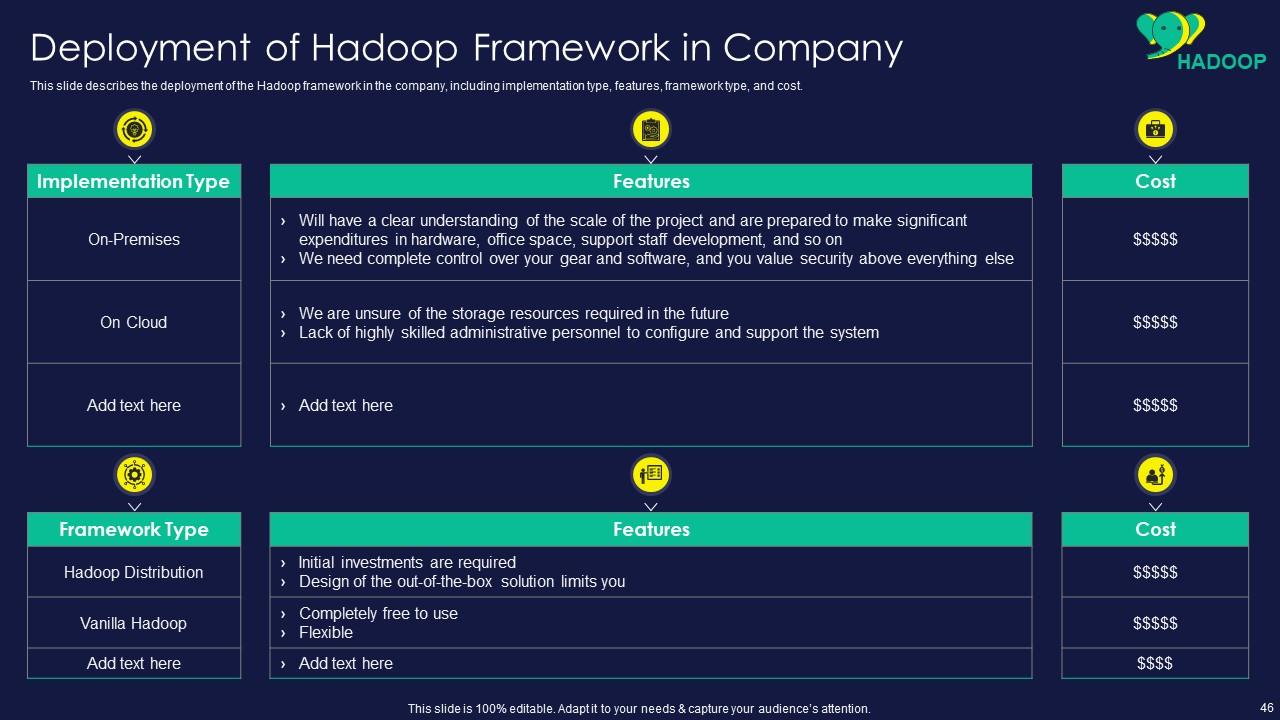

Slide 46: This slide represents Deployment of Hadoop Framework in Company.

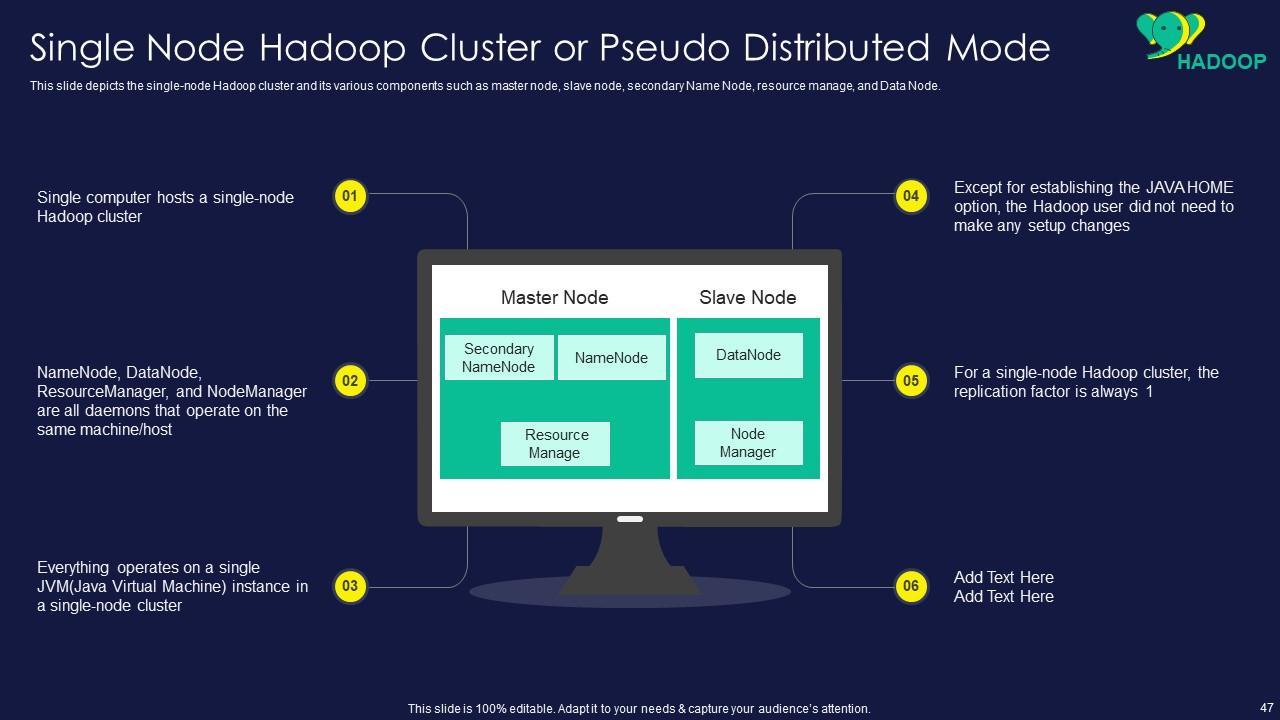

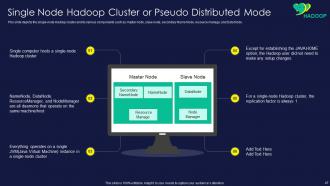

Slide 47: This slide showcases Single Node Hadoop Cluster or Pseudo Distributed Mode.

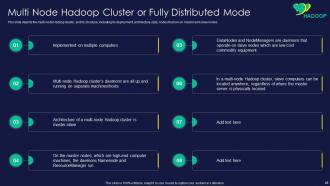

Slide 48: This slide shows Multi Node Hadoop Cluster or Fully Distributed Mode.

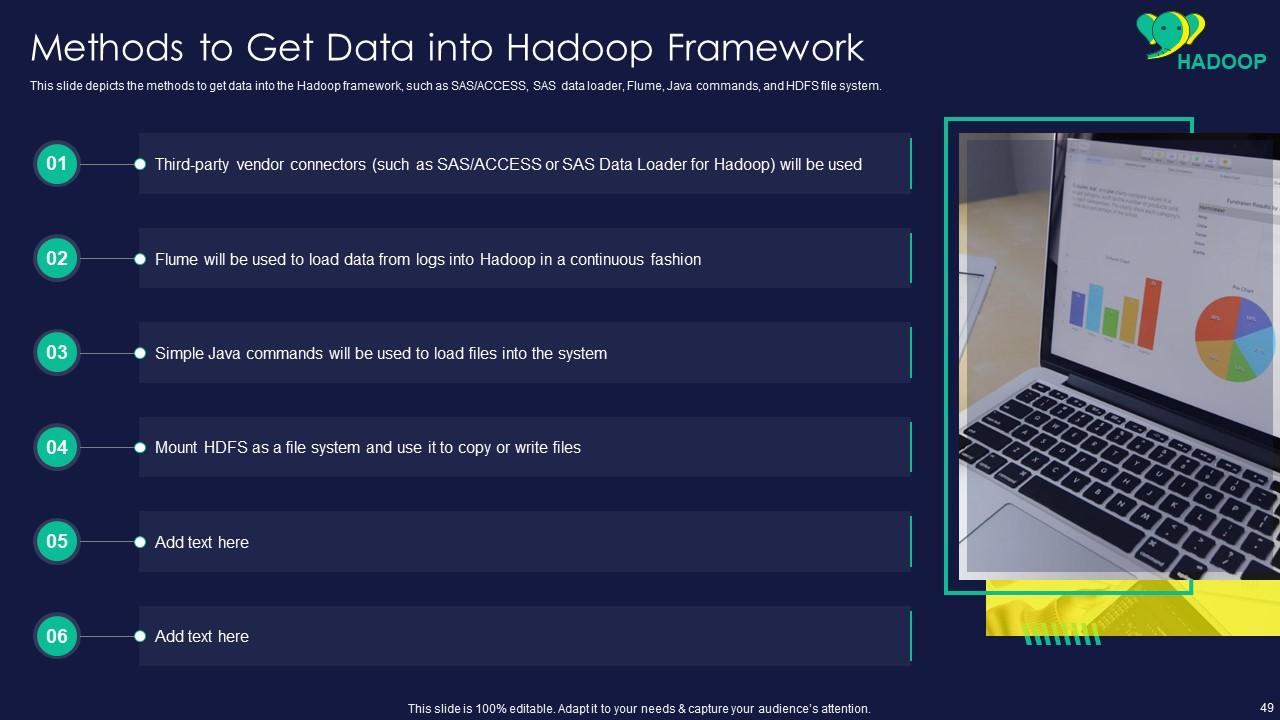

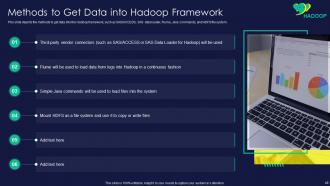

Slide 49: This slide presents methods to get data into the Hadoop framework.

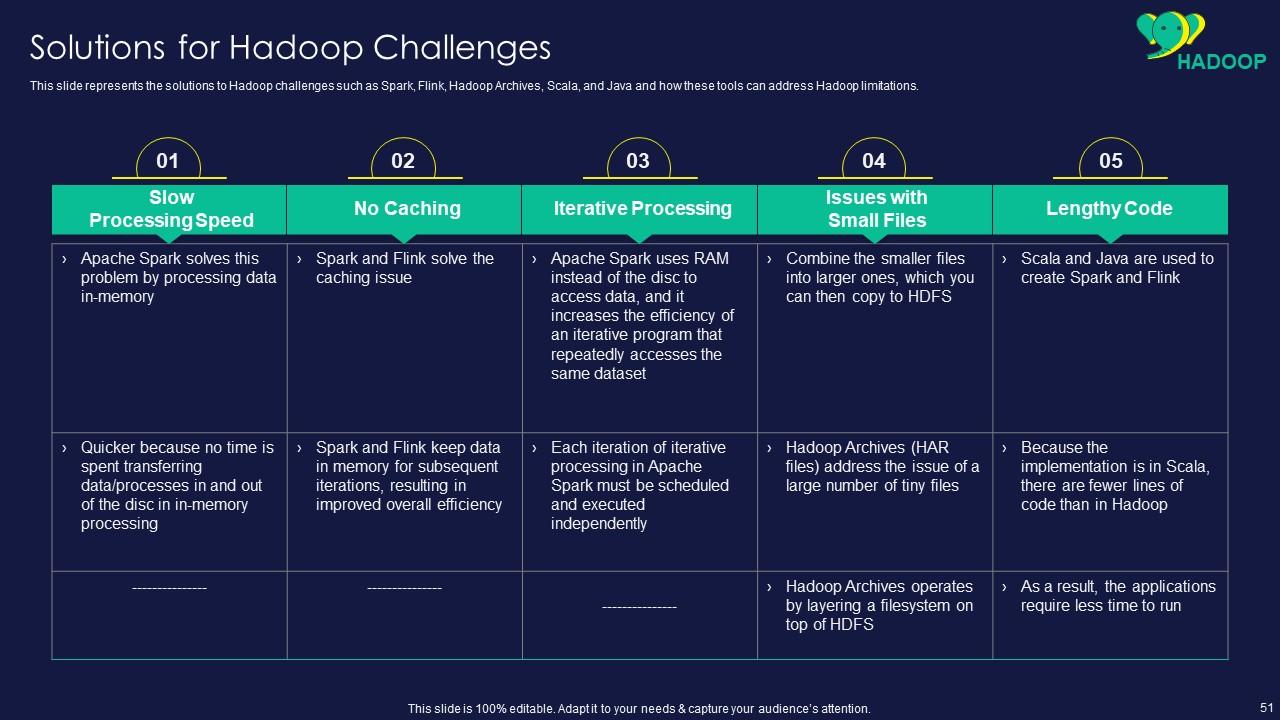

Slide 50: This slide displays challenges of the Hadoop platform, including slow processing speed, no caching, etc.

Slide 51: This slide represents solutions to Hadoop challenges such as Spark, Flink, Hadoop Archives, etc.

Slide 52: This slide showcases title for topics that are to be covered next in the template.

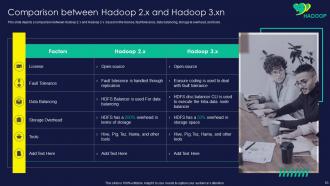

Slide 53: This slide shows Comparison between Hadoop 2.x and Hadoop 3.x.

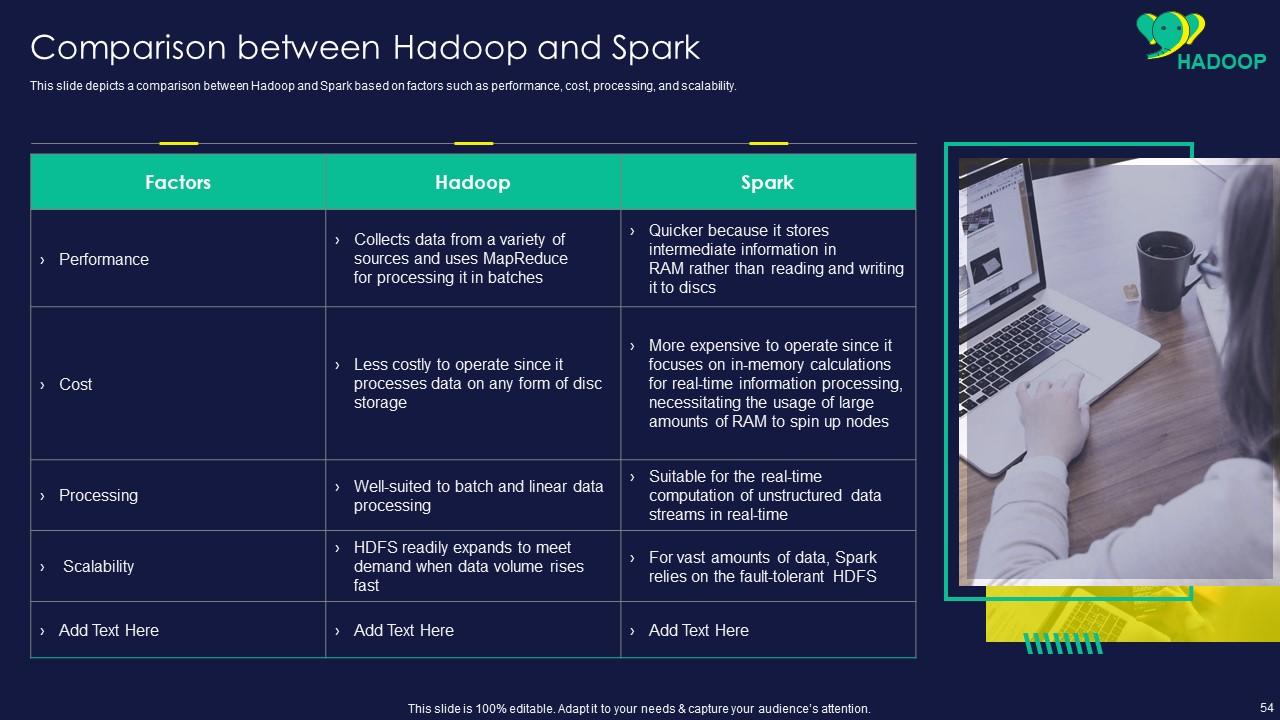

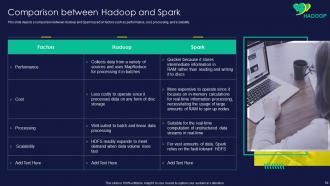

Slide 54: This slide presents comparison between Hadoop and Spark based on factors such as performance, cost, etc.

Slide 55: This slide displays title for topics that are to be covered next in the template.

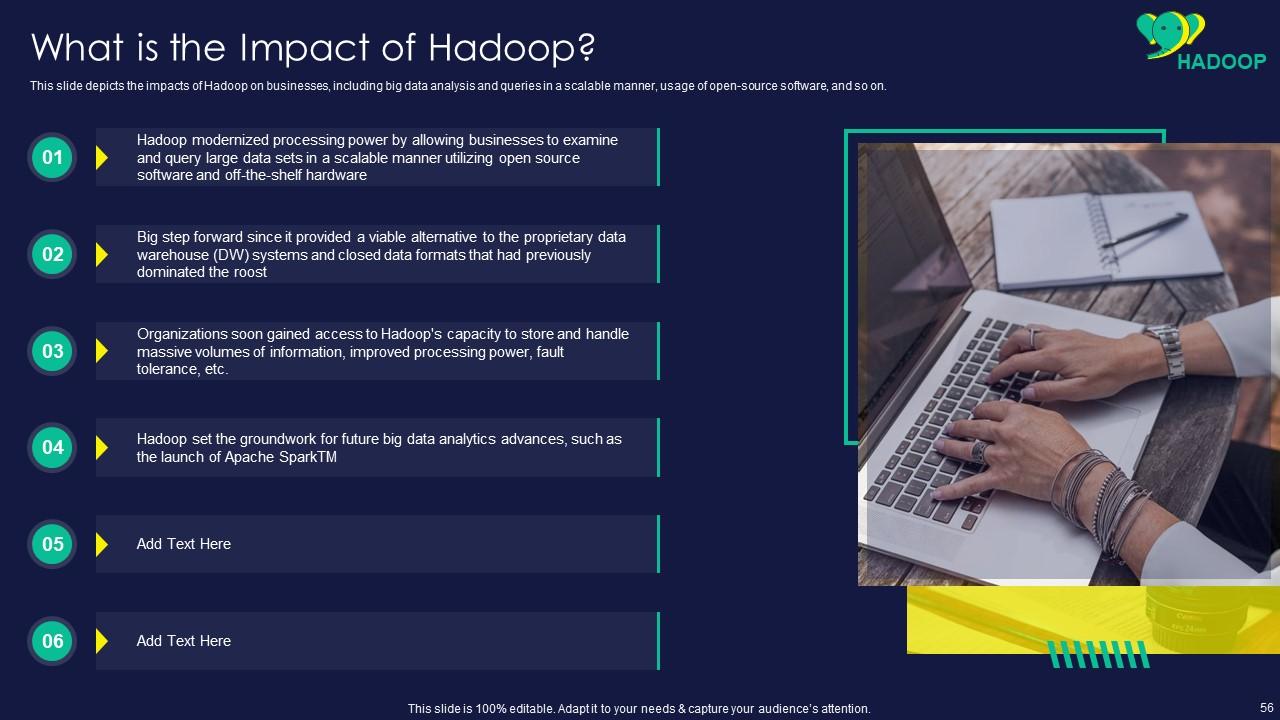

Slide 56: This slide represents impacts of Hadoop on businesses, including big data analysis and queries.

Slide 57: This slide showcases impacts of Hadoop on the business, including data-driven decisions, better data access, etc.

Slide 58: This slide shows title for topics that are to be covered next in the template.

Slide 59: This slide presents 30-60-90 Days Plan for Hadoop Implementation.

Slide 60: This slide displays title for topics that are to be covered next in the template.

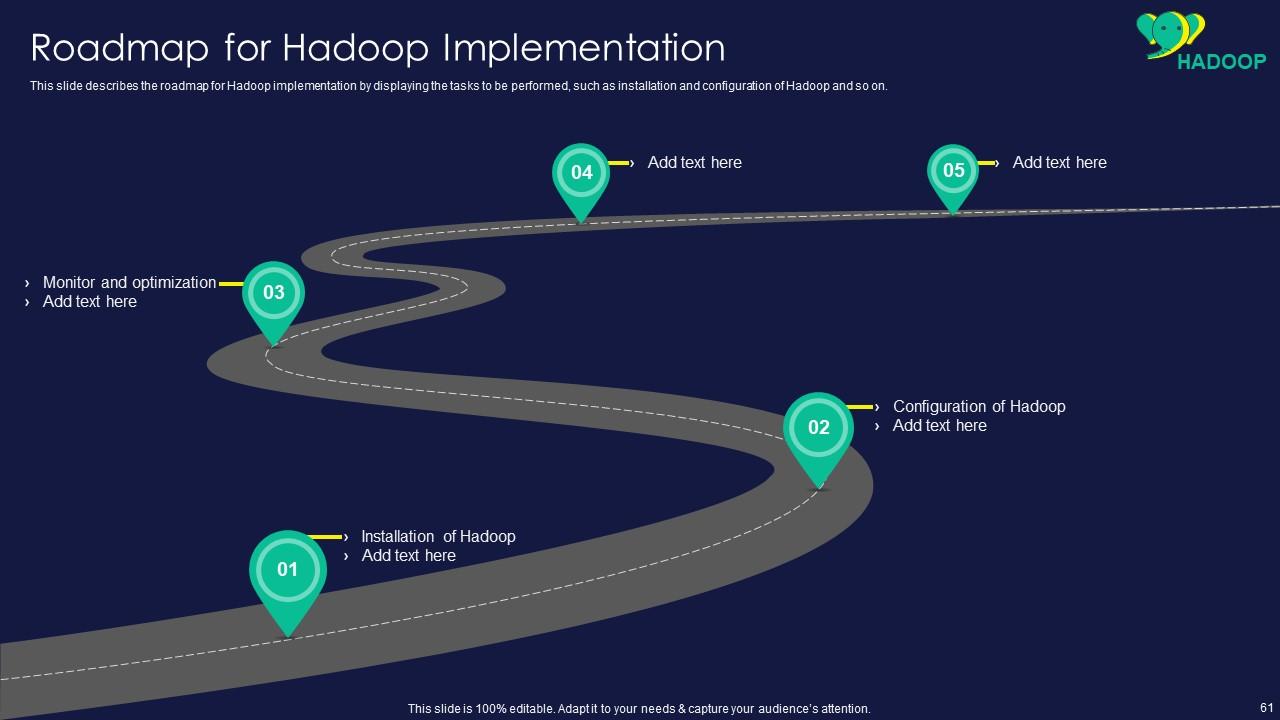

Slide 61: This slide represents roadmap for Hadoop implementation by displaying the tasks to be performed.

Slide 62: This slide showcases title for topics that are to be covered next in the template.

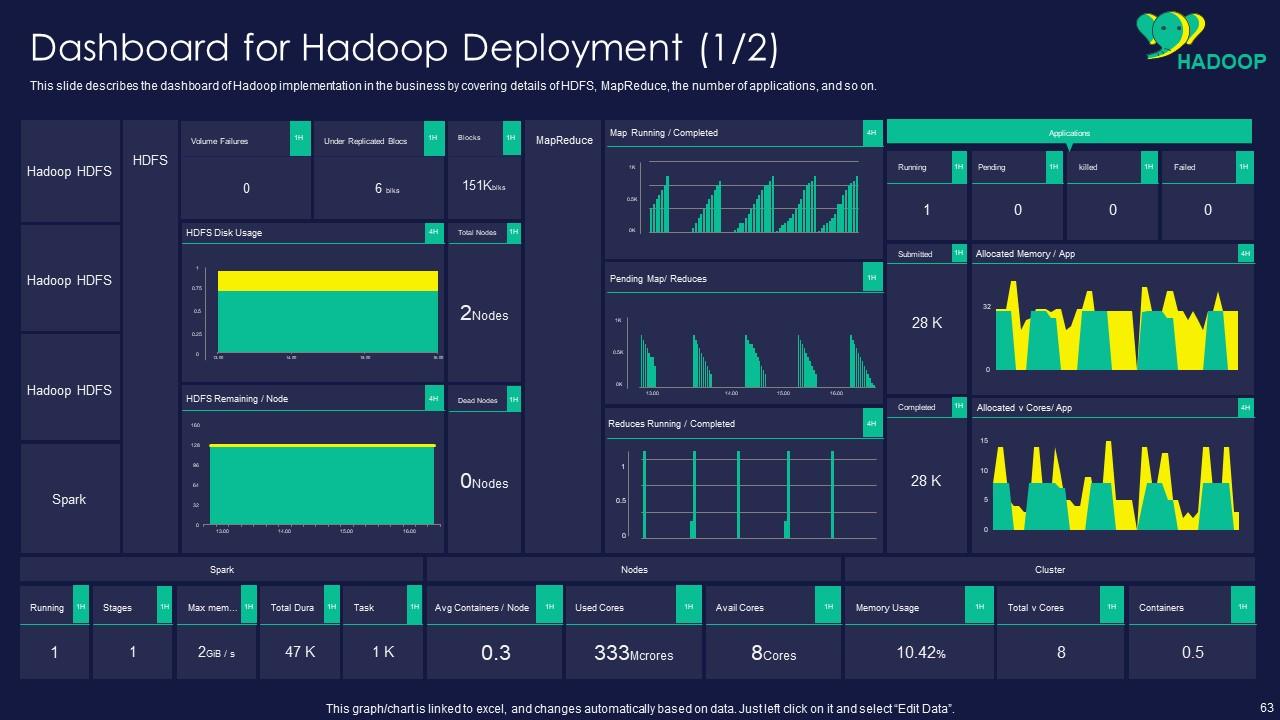

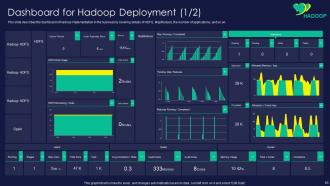

Slide 63: This slide shows dashboard of Hadoop implementation in the business by covering details of HDFS.

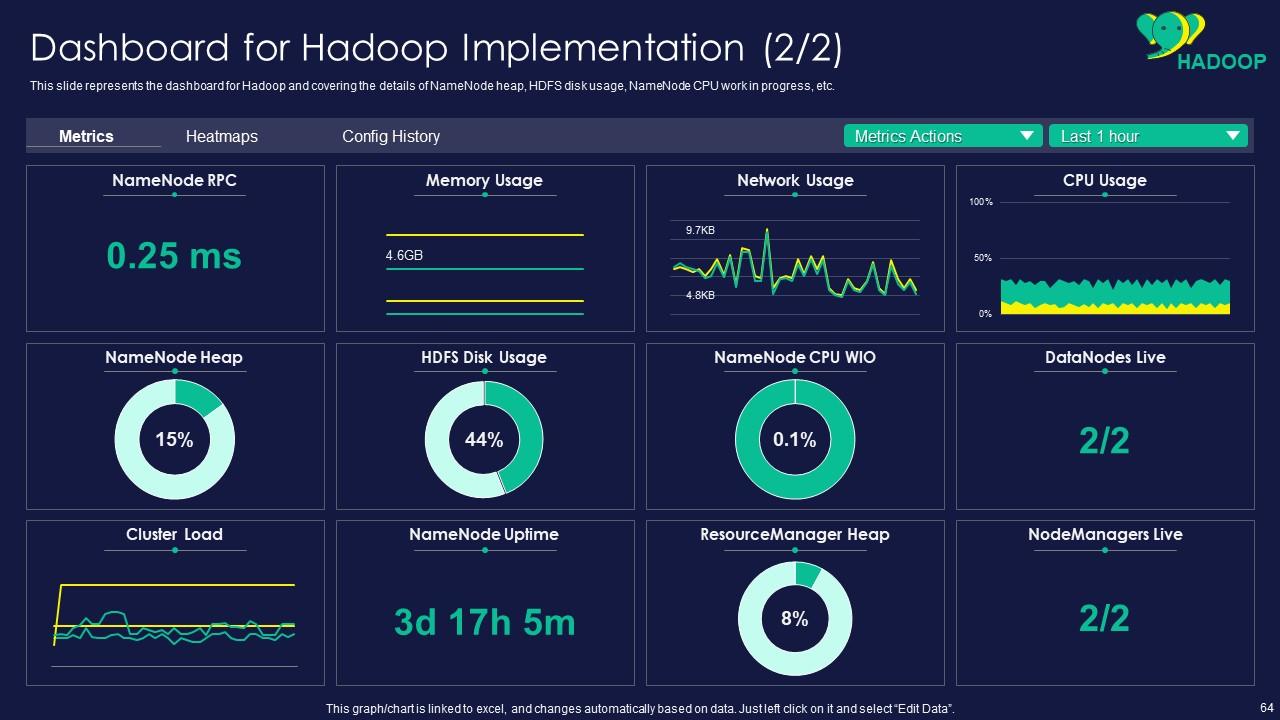

Slide 64: This slide presents dashboard for Hadoop, covering the details of NameNode heap, HDFS disk usage, etc.

Slide 65: This slide is titled Additional Slides for moving forward.

Slide 66: This slide represents title for topics that are to be covered next in the template.

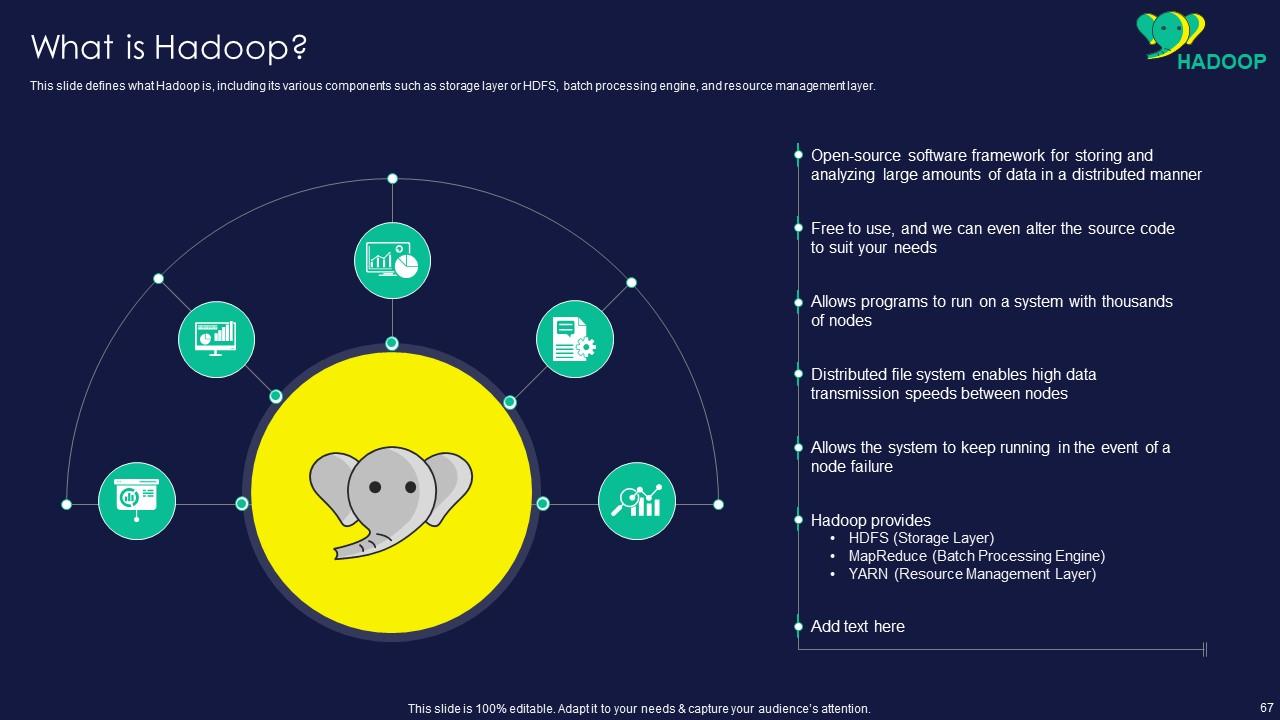

Slide 67: This slide shows what Hadoop is, including its various components such as storage layer or HDFS, batch processing engine, etc.

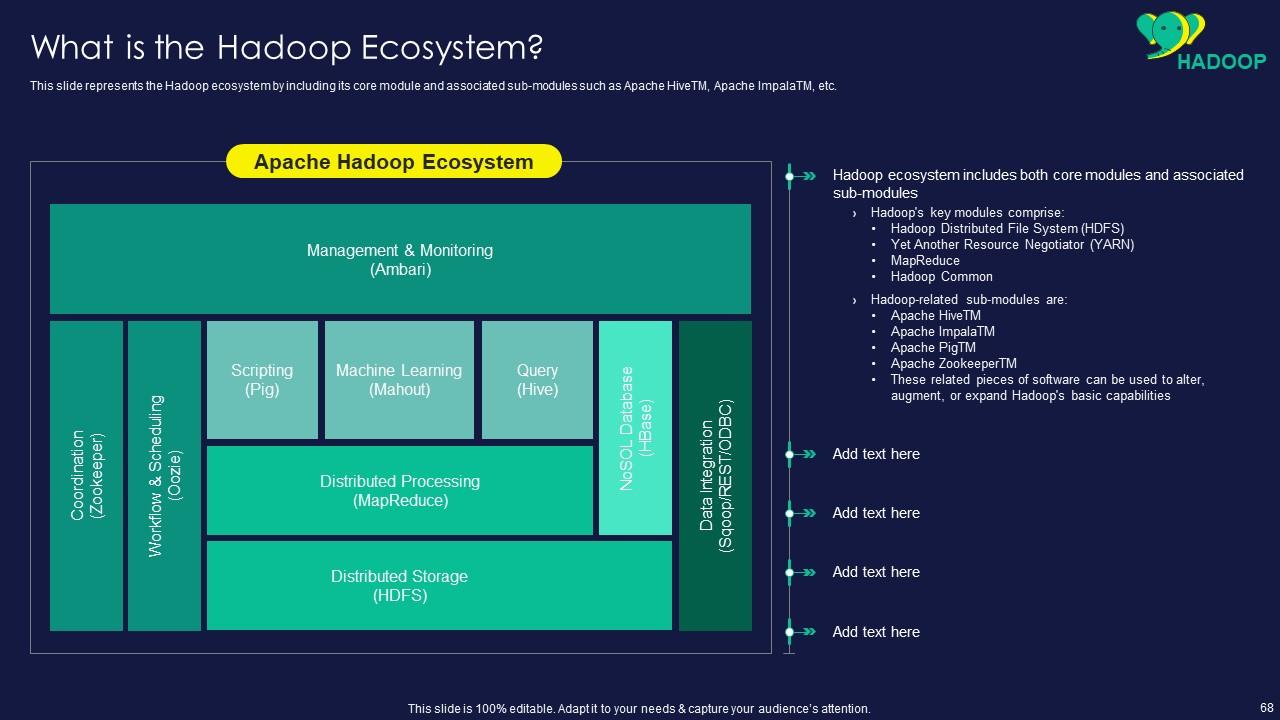

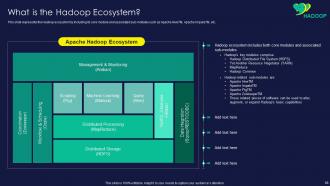

Slide 68: This slide presents Hadoop ecosystem by including its core module and associated sub-modules.

Slide 69: This slide displays disadvantages of Hadoop on the basis of security, vulnerability by design, etc.

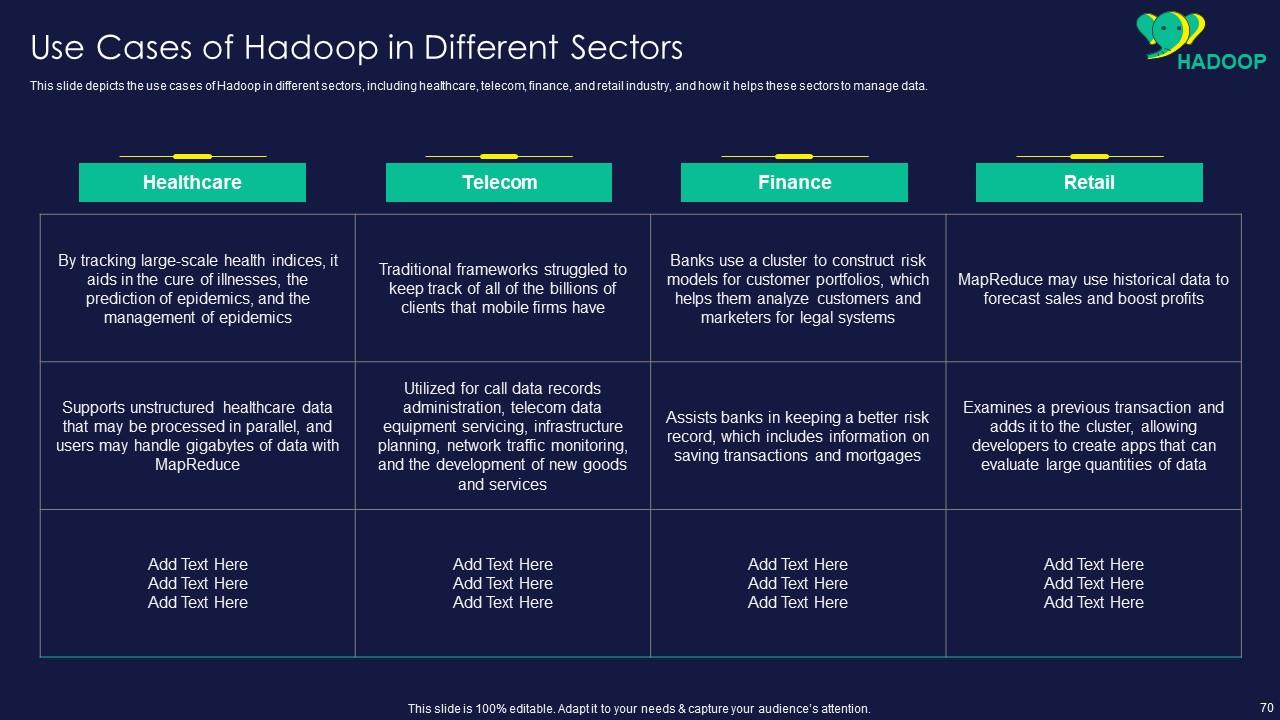

Slide 70: This slide represents use cases of Hadoop in different sectors, including healthcare, telecom, finance, etc.

Slide 71: This slide contains all the icons used in this presentation.

Slide 72: This slide represents Stacked Column chart comparing two products.

Slide 73: This is Our Goal slide. State your firm's goals here.

Slide 74: This is an Idea Generation slide to state a new idea or highlight information, specifications etc.

Slide 75: This slide depicts Venn diagram with text boxes.

Slide 76: This slide shows Post It Notes. Post your important notes here.

Slide 77: This slide contains Puzzle with related icons and text.

Slide 78: This is a Comparison slide to state comparison between commodities, entities etc.

Slide 79: This is a Thank You slide with address, contact numbers and email address.

Apache Hadoop Powerpoint Presentation Slides with all 84 slides:

Use our Apache Hadoop Powerpoint Presentation Slides to save valuable time. They are ready-made to fit into any presentation structure.

FAQs

Hadoop is a distributed computing framework that is used for storing and processing large amounts of data. It is important because it can handle big data storage and processing efficiently, making it an ideal solution for businesses with large amounts of data to manage.

Hadoop offers several advantages including scalability, flexibility, cost-effectiveness, fault tolerance, and the ability to handle a wide range of data types.

Hadoop Distributed File System (HDFS) is a distributed file system that is used to store large amounts of data across multiple servers. It is a key component of the Hadoop framework and is designed to provide high availability and data reliability.

MapReduce is a programming model used for processing large datasets. It works by dividing the input data into smaller chunks, processing them in parallel across multiple nodes, and then combining the results into a single output.
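The split, parallel-process, and combine flow described above can be illustrated with a small word-count sketch. This is not Hadoop's API: it runs in a single Python process, uses threads as stand-ins for cluster nodes, and every name in it (`split_into_chunks`, `map_chunk`, `shuffle`, `reduce_group`, `word_count`) is hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(lines, chunk_size):
    """Divide the input into smaller chunks (stand-ins for input splits)."""
    return [lines[i:i + chunk_size] for i in range(0, len(lines), chunk_size)]


def map_chunk(chunk):
    """Map step: emit a (word, 1) pair for every word in the chunk."""
    return [(word.lower(), 1) for line in chunk for word in line.split()]


def shuffle(mapped_chunks):
    """Group all emitted values by key so each key is reduced once."""
    grouped = defaultdict(list)
    for pairs in mapped_chunks:
        for key, value in pairs:
            grouped[key].append(value)
    return grouped


def reduce_group(key, values):
    """Reduce step: combine the values for one key into a single result."""
    return key, sum(values)


def word_count(lines, chunk_size=2, workers=4):
    chunks = split_into_chunks(lines, chunk_size)
    # Threads stand in for cluster nodes processing chunks in parallel.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = list(pool.map(map_chunk, chunks))
    grouped = shuffle(mapped)
    return dict(reduce_group(k, v) for k, v in grouped.items())


if __name__ == "__main__":
    sample = ["big data big clusters", "data nodes store data", "big jobs"]
    print(word_count(sample))
```

In a real Hadoop job the chunks would be input splits read from HDFS and the map and reduce tasks would be scheduled across the cluster; the sketch only mirrors the shape of the model.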

Some of the tools used for big data management in Hadoop include Apache HBase, Apache Flume, Apache Hive, and Apache Pig. These tools are used for storing, processing, and analyzing large amounts of data in Hadoop.

The PPTs are extremely simple to modify. Thank you for providing the slides that are ready to be used. They assist me in saving a lot of time.

I looked at their huge selection of themes and designs. They appeared to be ideal for my profession. I'm sure I'll grab a few of them.