Big Data Engineer Powerpoint Presentation Slides

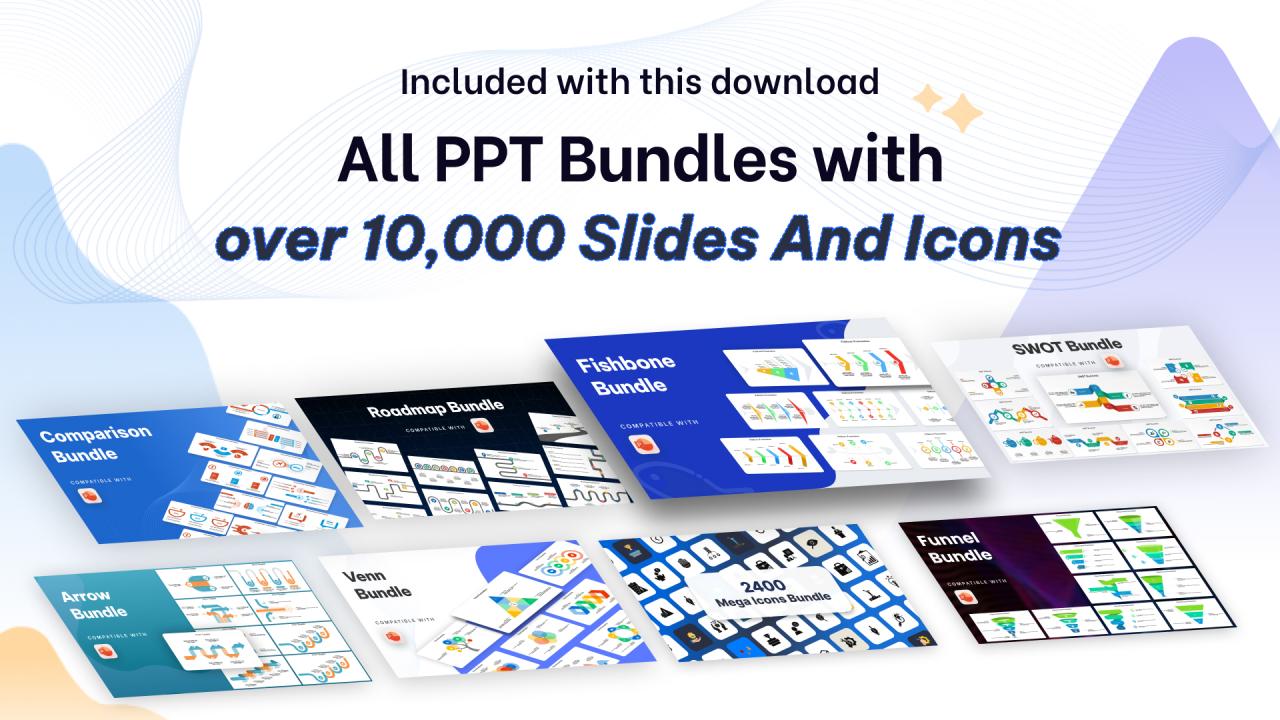

Big Data is a massive collection of data that continues to grow dramatically over time. It is a data set so vast and complicated that standard data management technologies cannot effectively store or handle it. Here is a competently designed template on Big Data Engineer that details the problems organizations experience in managing big data, along with their solutions. It further highlights the top sources of big data and the architecture and workflow of big data management. This presentation covers sections on the different types of data, the multiple sources of big data, how big data works, technologies, etc. It also comes with a ready-made checklist for big data management. Additionally, this PPT talks about the impacts of big data management on business processes and its benefits. Furthermore, this template includes a training program for big data management, budget planning, and big data applications in different sectors such as healthcare, education, automobile, finance, etc. Also, this PPT provides a 30-60-90 days plan and a roadmap for big data management. Lastly, this deck comprises a dashboard for big data management. Download the template now.

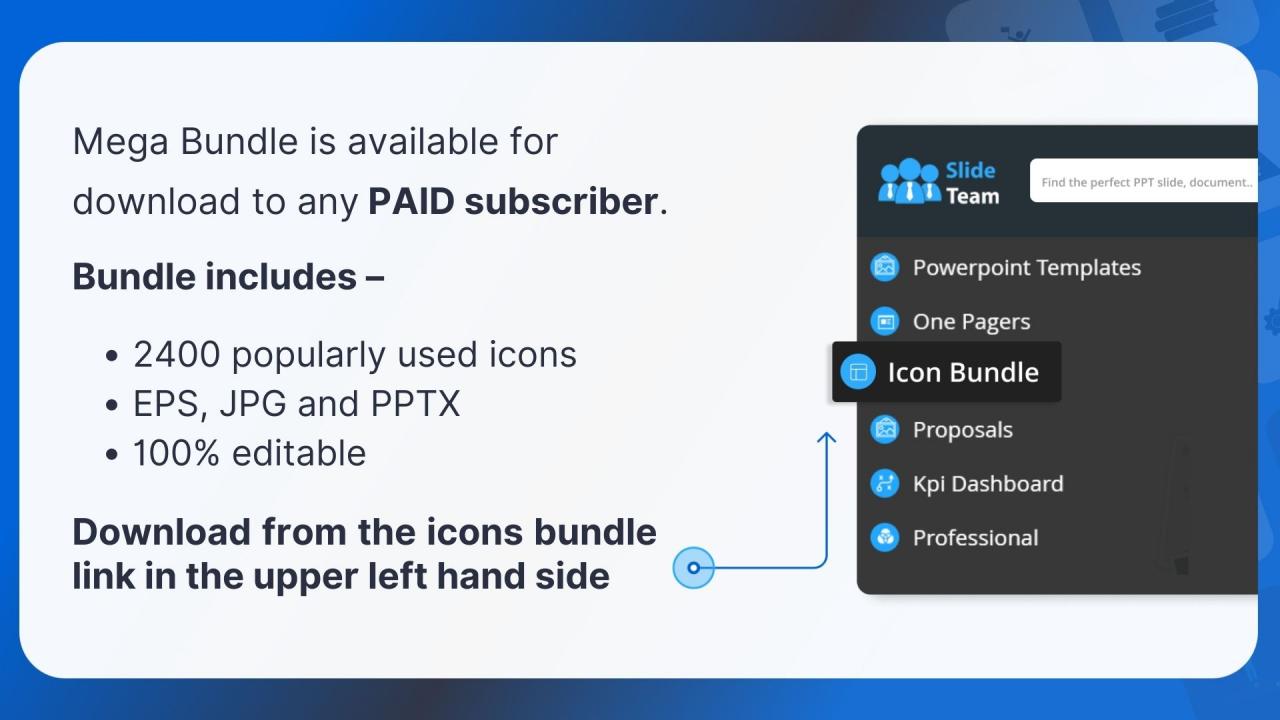

- Google Slides is free presentation software from Google.

- All our content is 100% compatible with Google Slides.

- Just download our designs, and upload them to Google Slides and they will work automatically.

- Amaze your audience with SlideTeam and Google Slides.

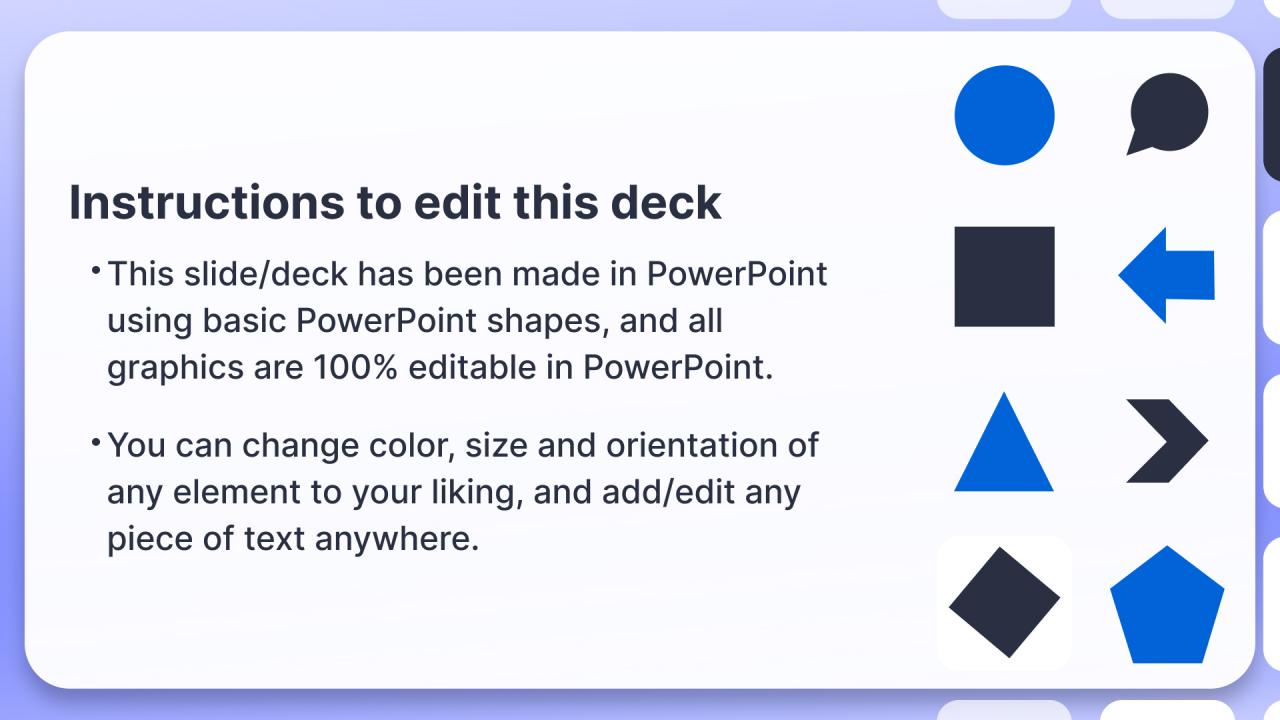

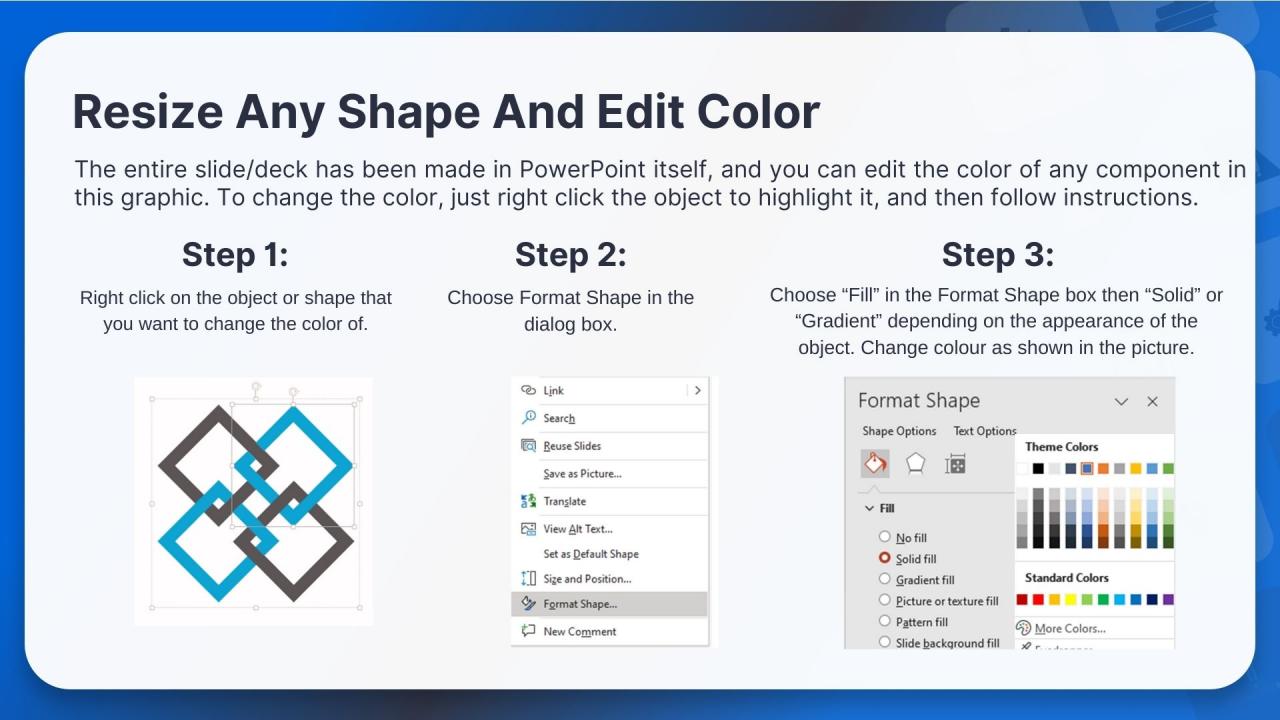

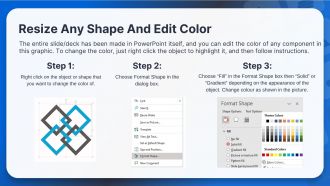

- The widescreen aspect ratio is becoming a very popular format. When you download this product, the downloaded ZIP will contain it in both standard and widescreen formats.

- Some older products that we have may only be in standard format, but they can easily be converted to widescreen.

- To do this, open the SlideTeam product in PowerPoint and go to Design (on the top bar) -> Page Setup, then select "On-screen Show (16:9)" in the drop-down for "Slides Sized for".

- The slide or theme will change to widescreen, and all graphics will adjust automatically. You can similarly convert our content to any other desired screen aspect ratio.

PowerPoint presentation slides

Deliver this complete deck to your team members and other collaborators. With stylized slides presenting various concepts, this Big Data Engineer Powerpoint Presentation Slides deck is the best tool you can utilize. Personalize its content and graphics to make it unique and thought-provoking. All sixty-five slides are editable and modifiable, so feel free to adjust them to your business setting. The font, color, and other components also come in an editable format, making this PPT design the best choice for your next presentation. So, download now.

Content of this Powerpoint Presentation

Slide 1: This slide displays the title Big Data Engineer.

Slide 2: This slide displays the Agenda for Big Data Engineer.

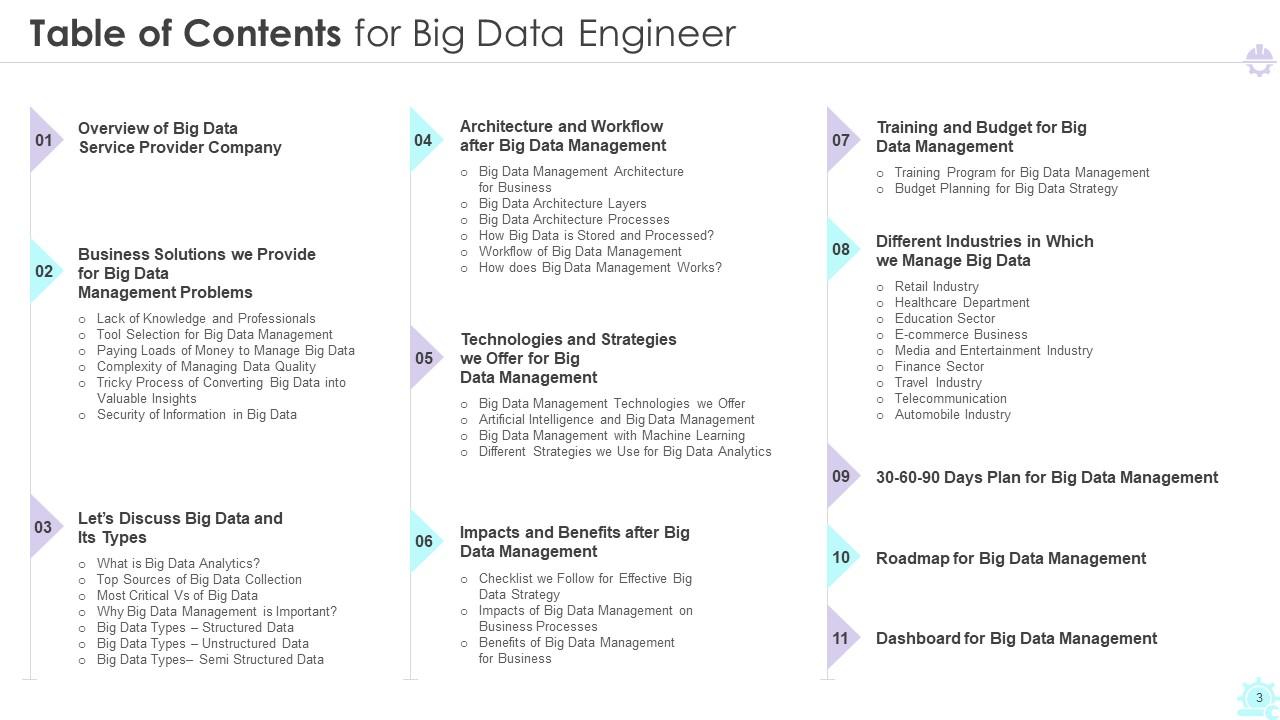

Slide 3: This slide exhibits the table of contents.

Slide 4: This slide exhibits the table of contents: Overview of Big Data Service Provider Company.

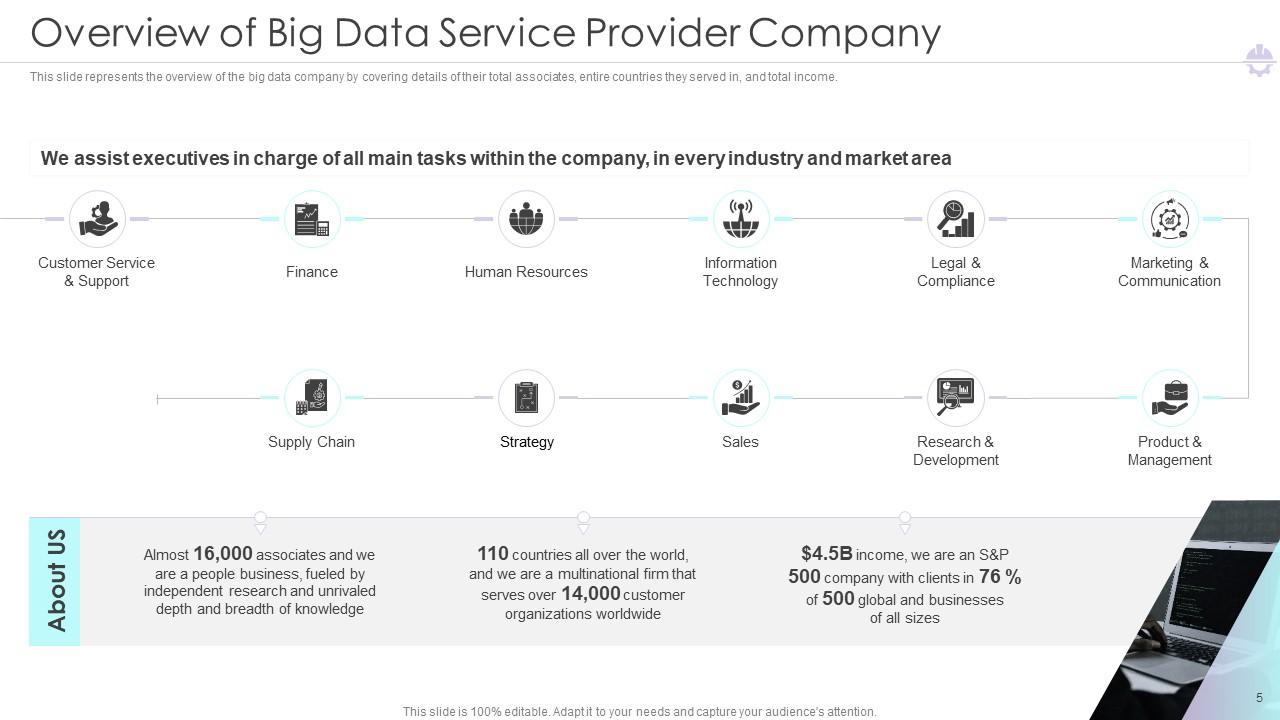

Slide 5: This slide represents the overview of the big data company.

Slide 6: This slide exhibits the table of contents: Business Solutions we Provide for Big Data Management Problems.

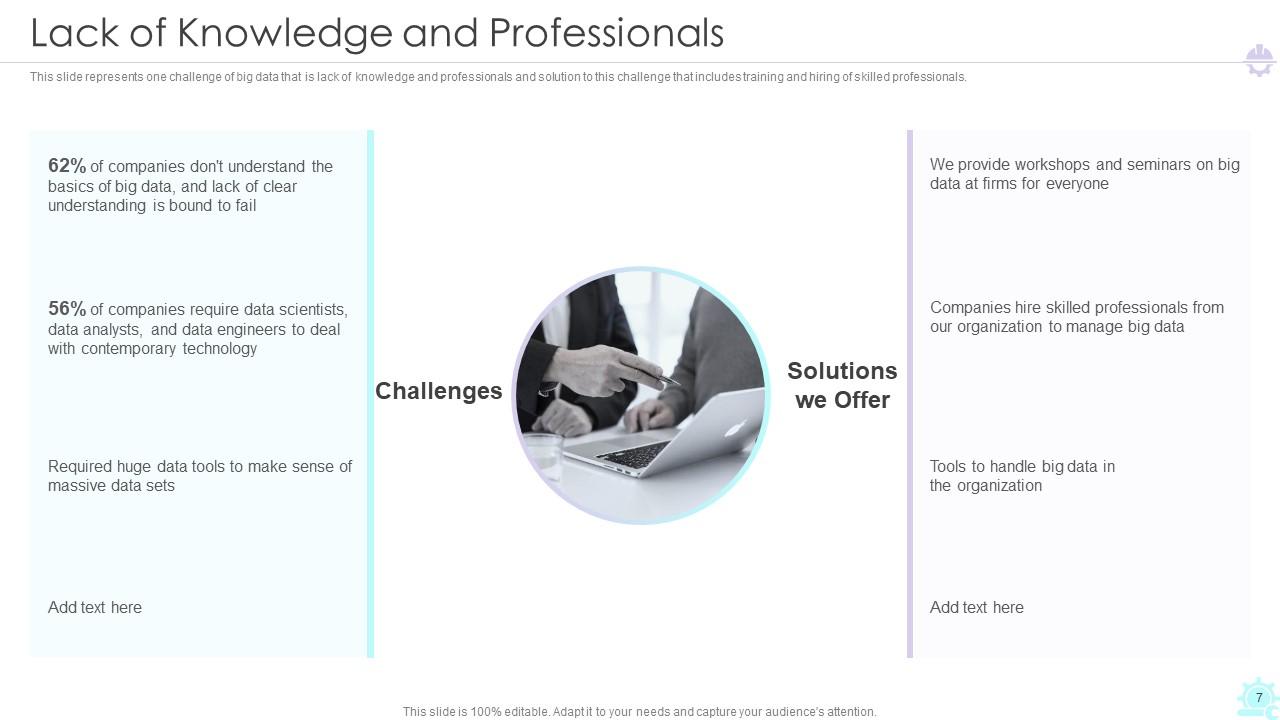

Slide 7: This slide represents one challenge of big data: the lack of knowledge and professionals.

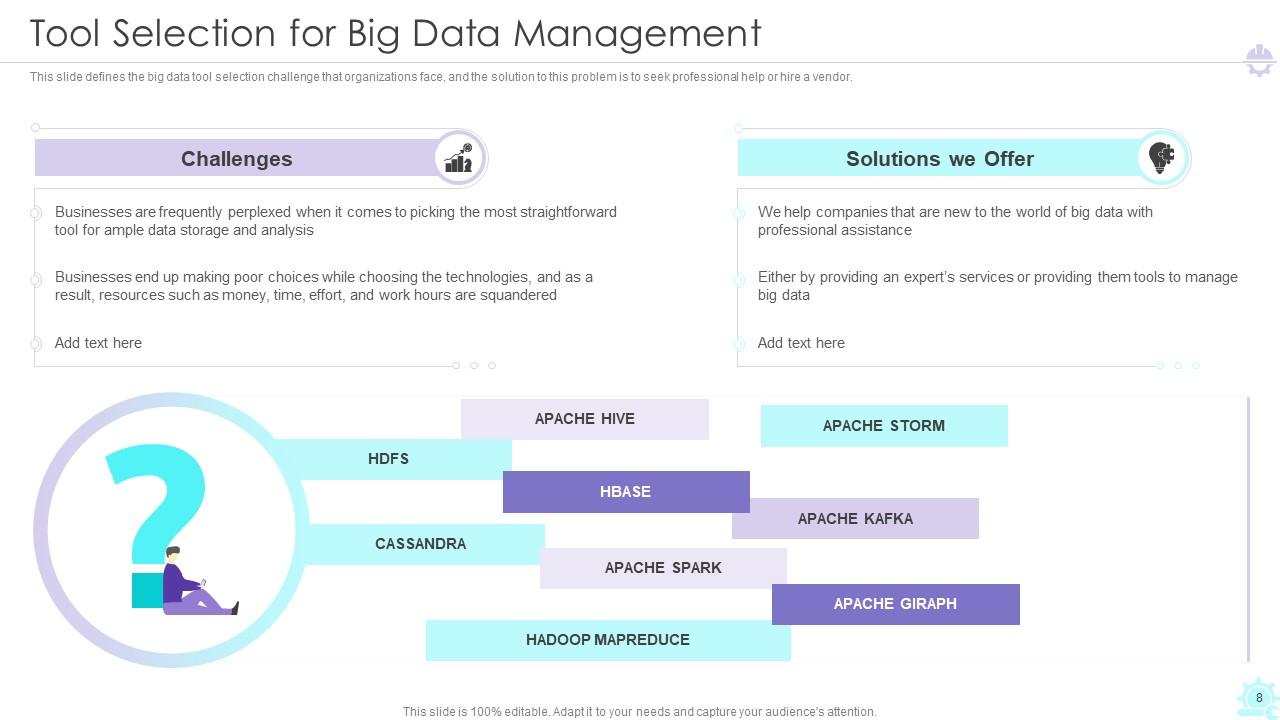

Slide 8: This slide defines the big data tool selection challenge that organizations face.

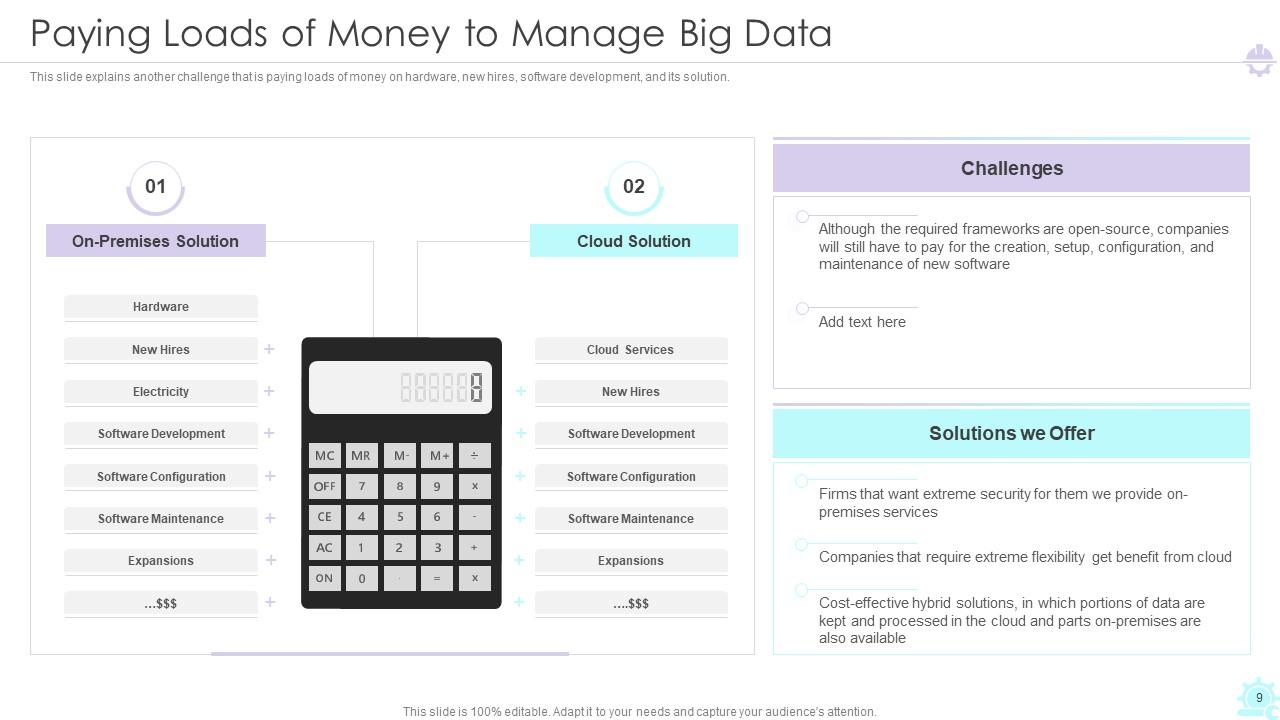

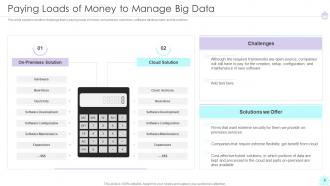

Slide 9: This slide explains another challenge, the high cost of hardware, new hires, and software development, along with its solution.

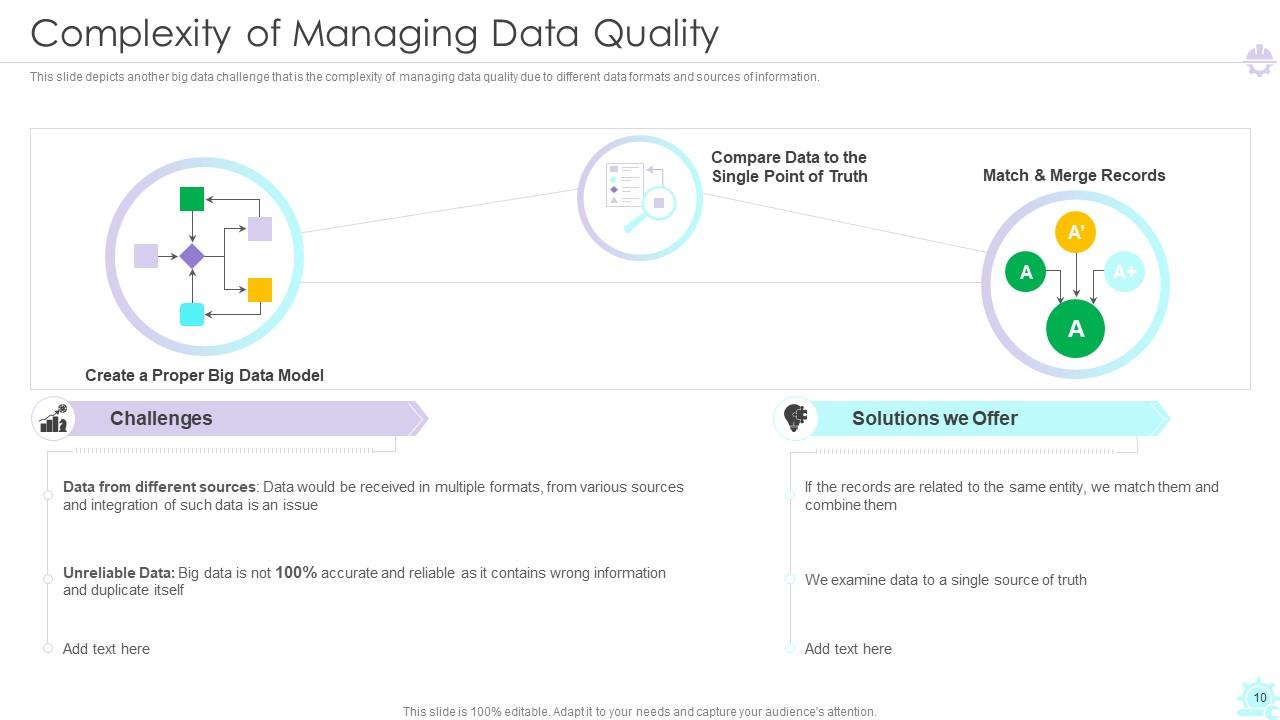

Slide 10: This slide depicts another big data challenge: the complexity of managing data quality due to different data formats and sources of information.

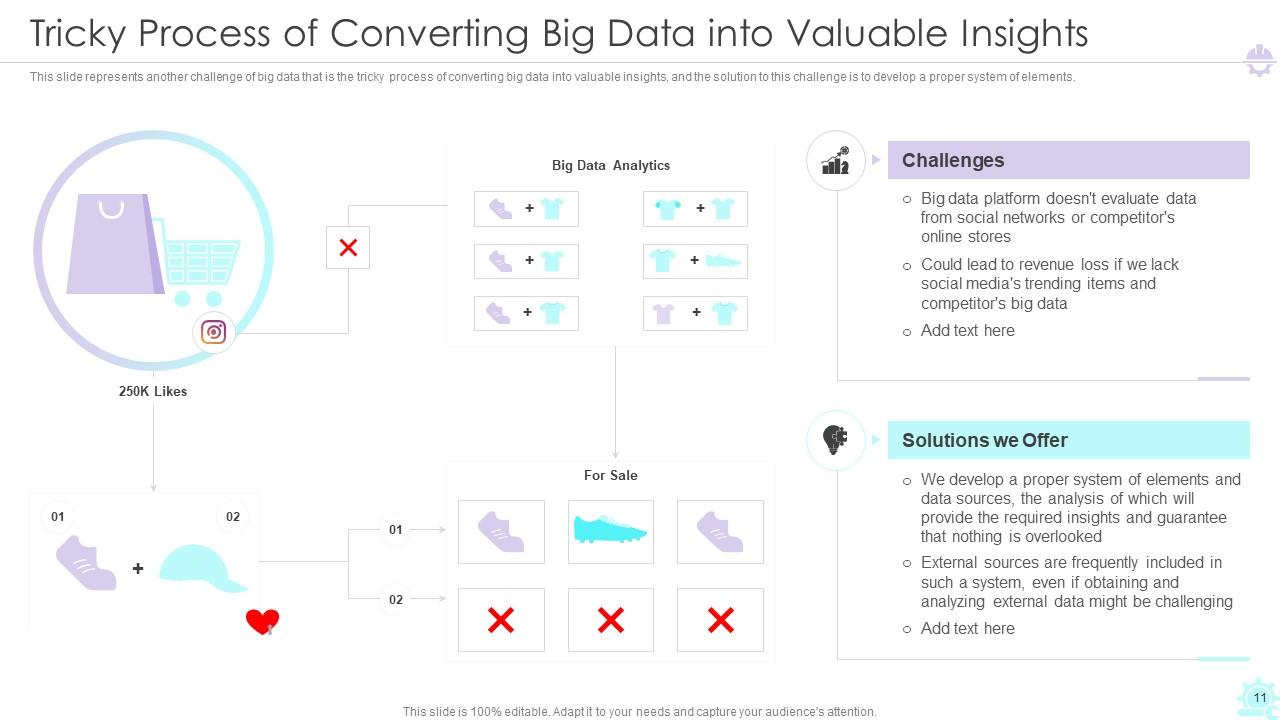

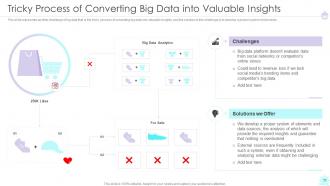

Slide 11: This slide represents another challenge: the tricky process of converting big data into valuable insights.

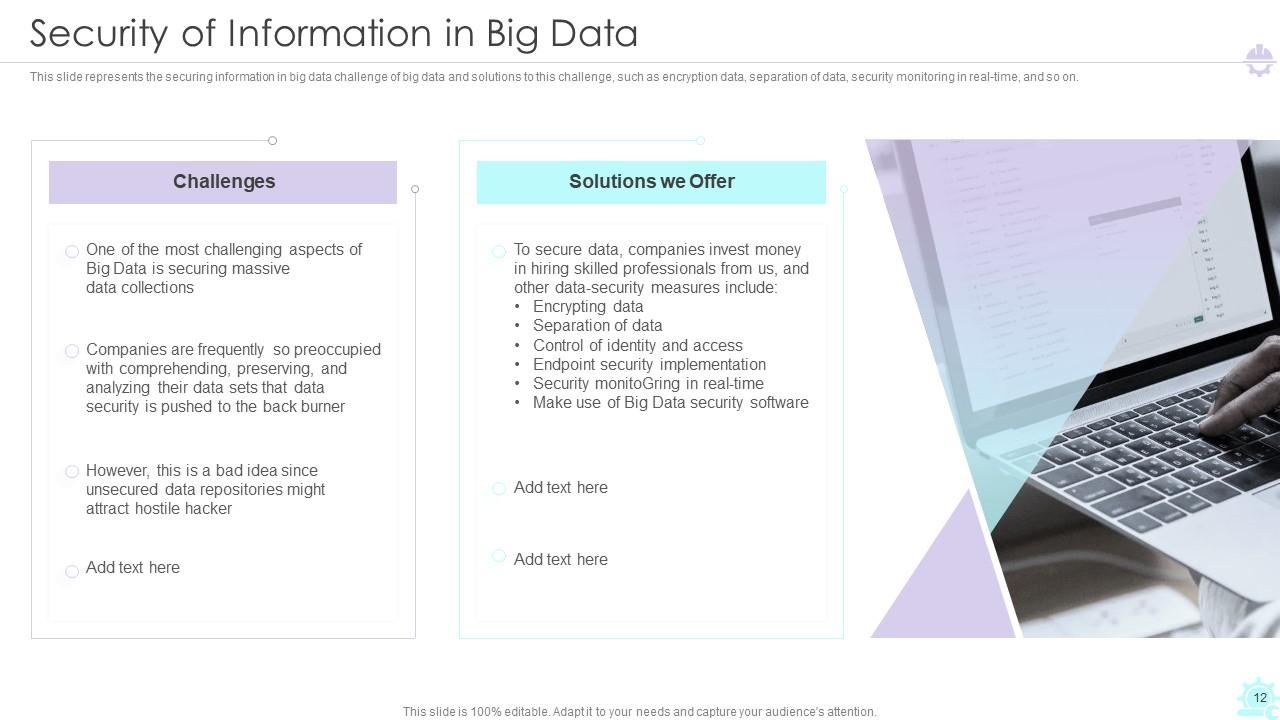

Slide 12: This slide represents the challenge of securing information in big data and the solutions to this challenge.

Slide 13: This slide exhibits the table of contents: Let’s Discuss Big Data and Its Types.

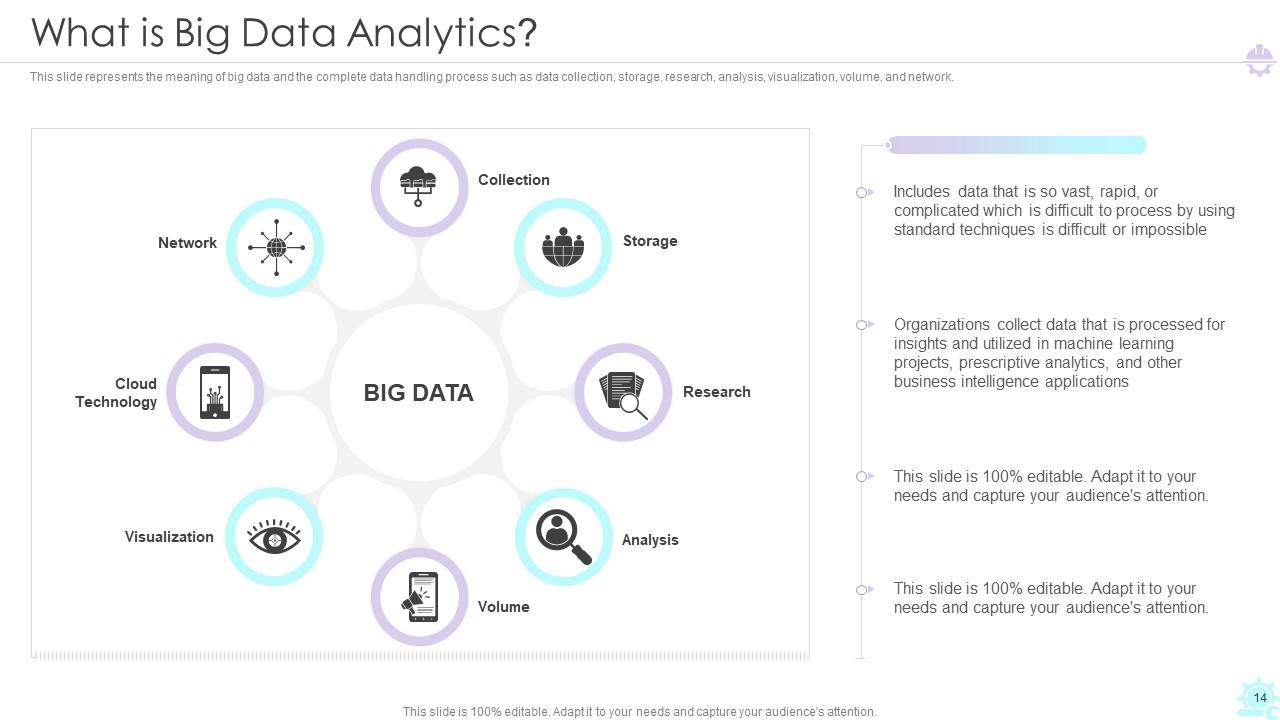

Slide 14: This slide represents the meaning of big data analytics and the complete data handling process.

Slide 15: This slide describes the top sources of big data collection, such as media data, cloud data, and web data.

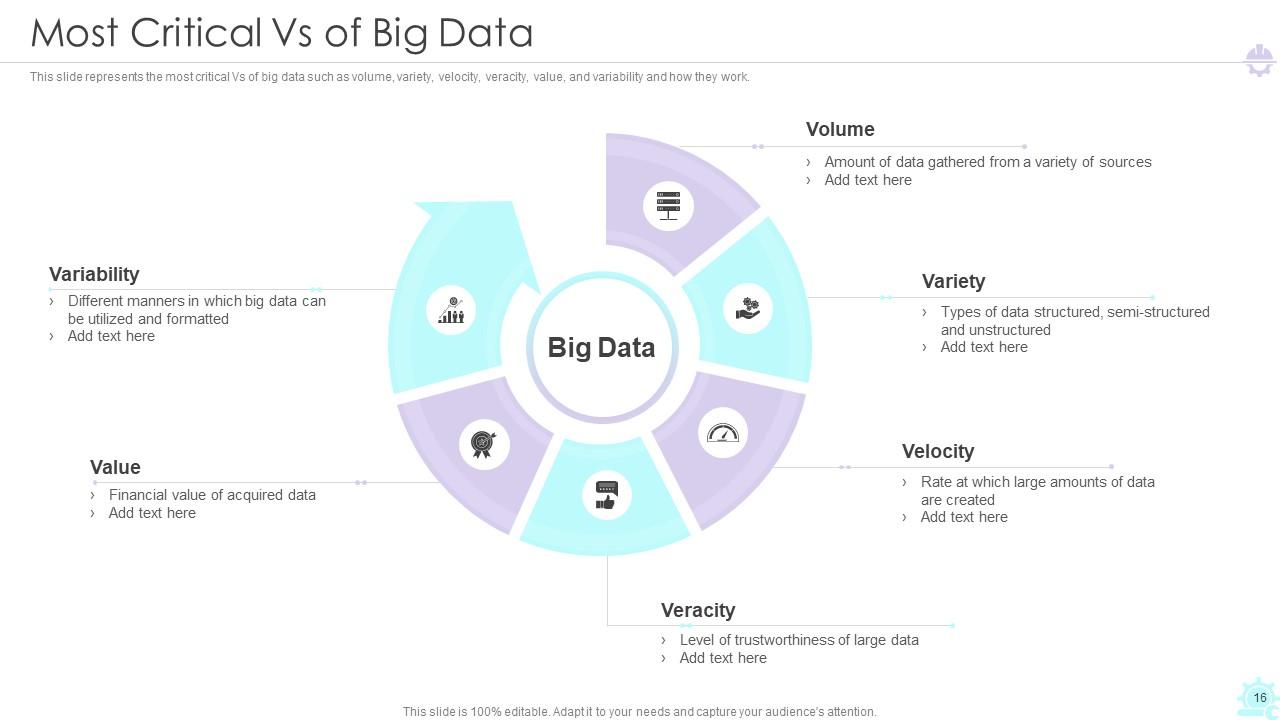

Slide 16: This slide represents the most critical Vs of big data such as volume, variety, value, and variability and how they work.

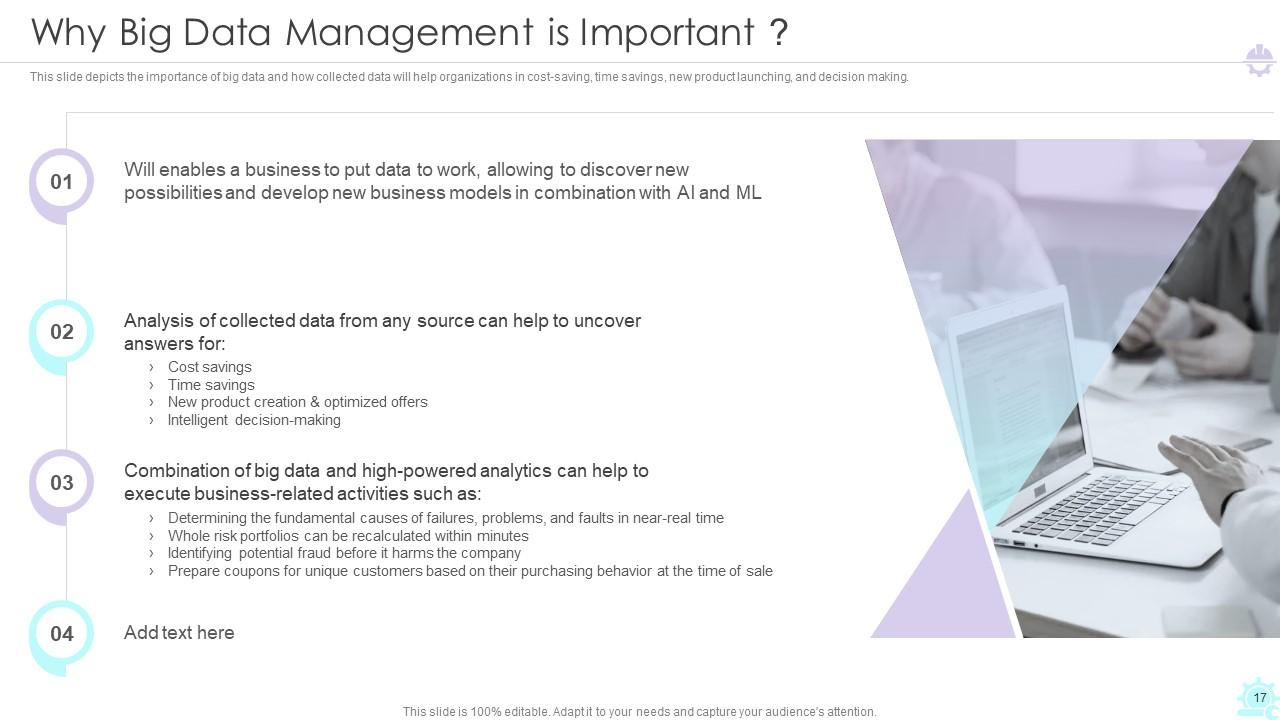

Slide 17: This slide depicts the importance of big data and how collected data will help organizations.

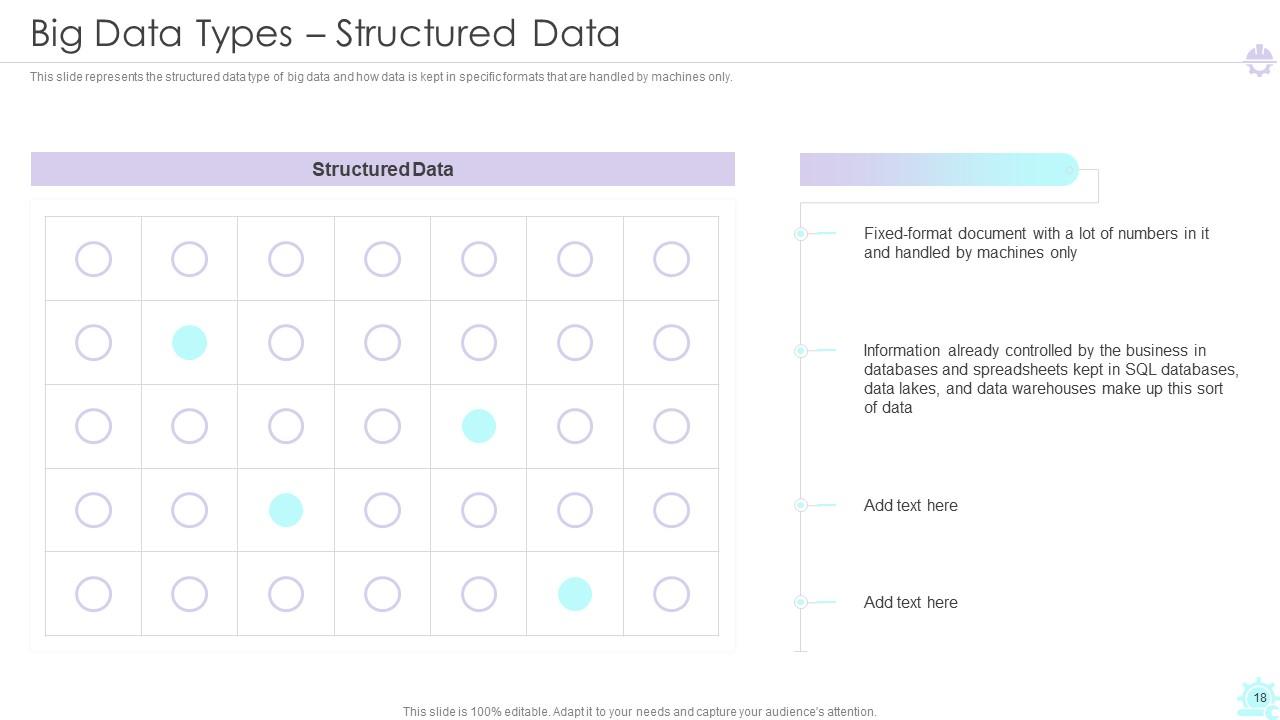

Slide 18: This slide represents the structured data type of big data and how data is kept in specific formats that are handled by machines only.

Slide 19: This slide depicts the unstructured data form of big data.

Slide 20: This slide represents the semi-structured data form of big data.

Slide 21: This slide exhibits the table of contents: Architecture and Workflow after Big Data Management.

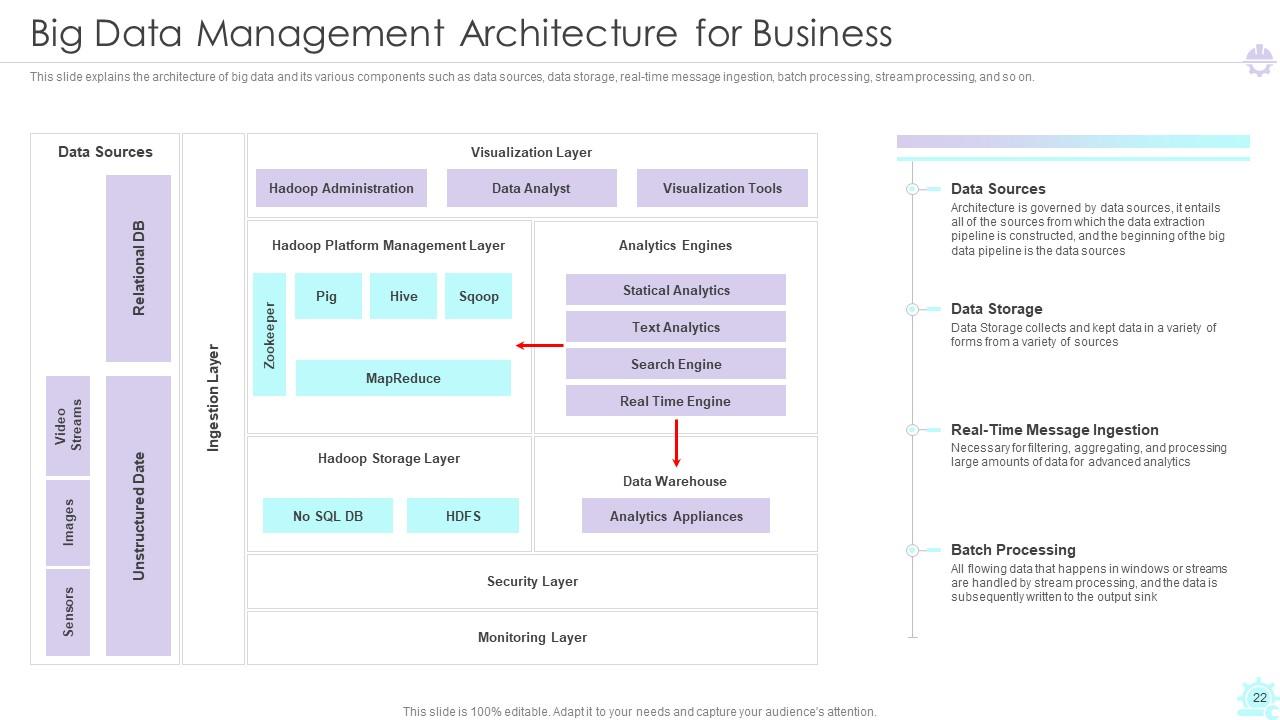

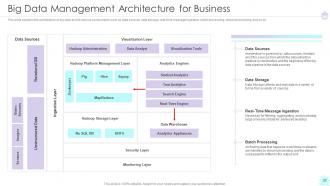

Slide 22: This slide explains the architecture of big data and its various components.

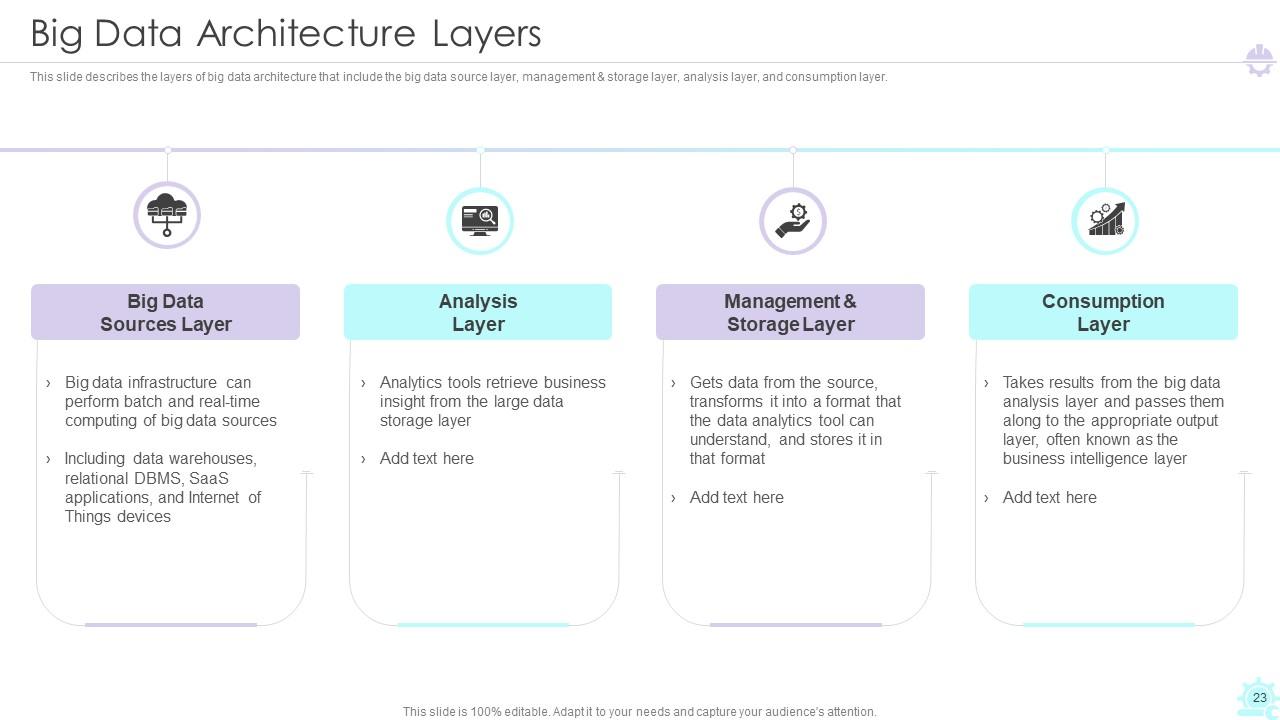

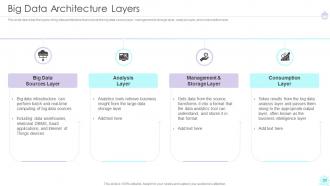

Slide 23: This slide describes the layers of big data architecture.

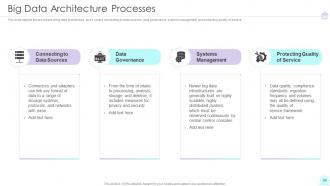

Slide 24: This slide depicts the processes of big data architecture.

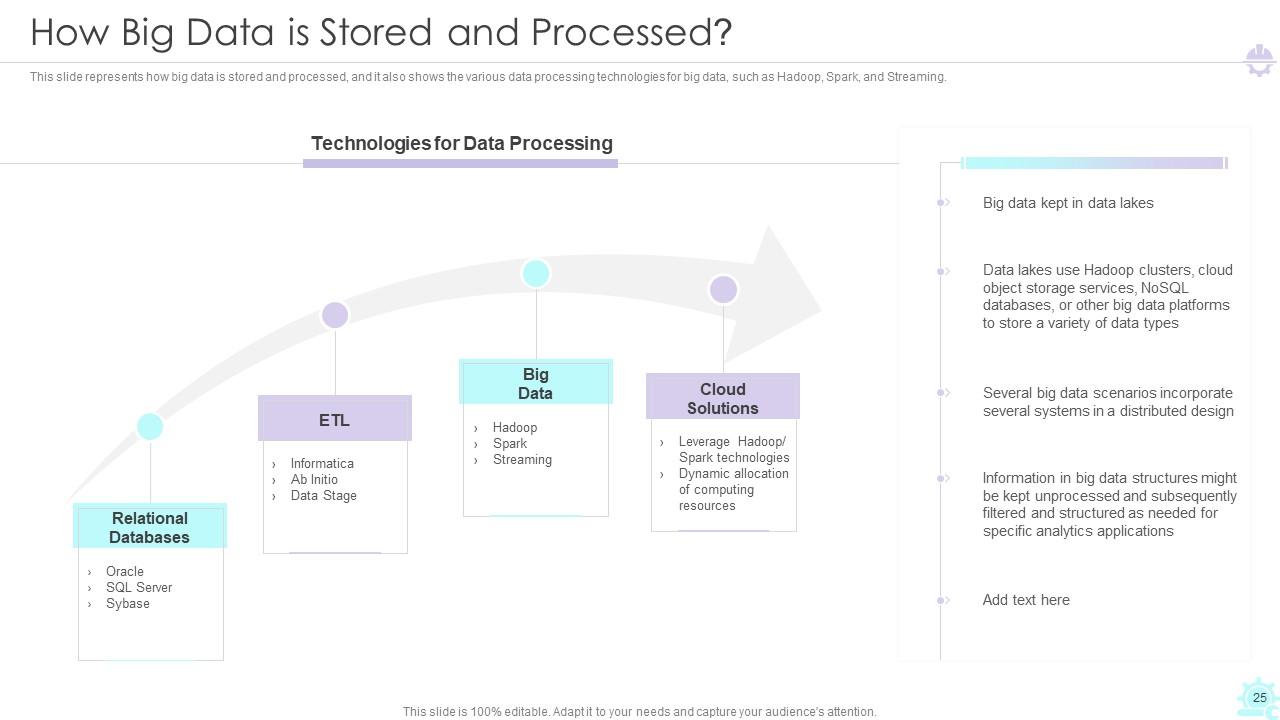

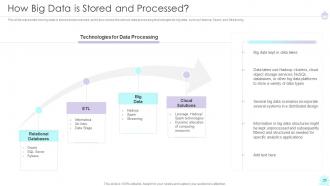

Slide 25: This slide represents how big data is stored and processed.

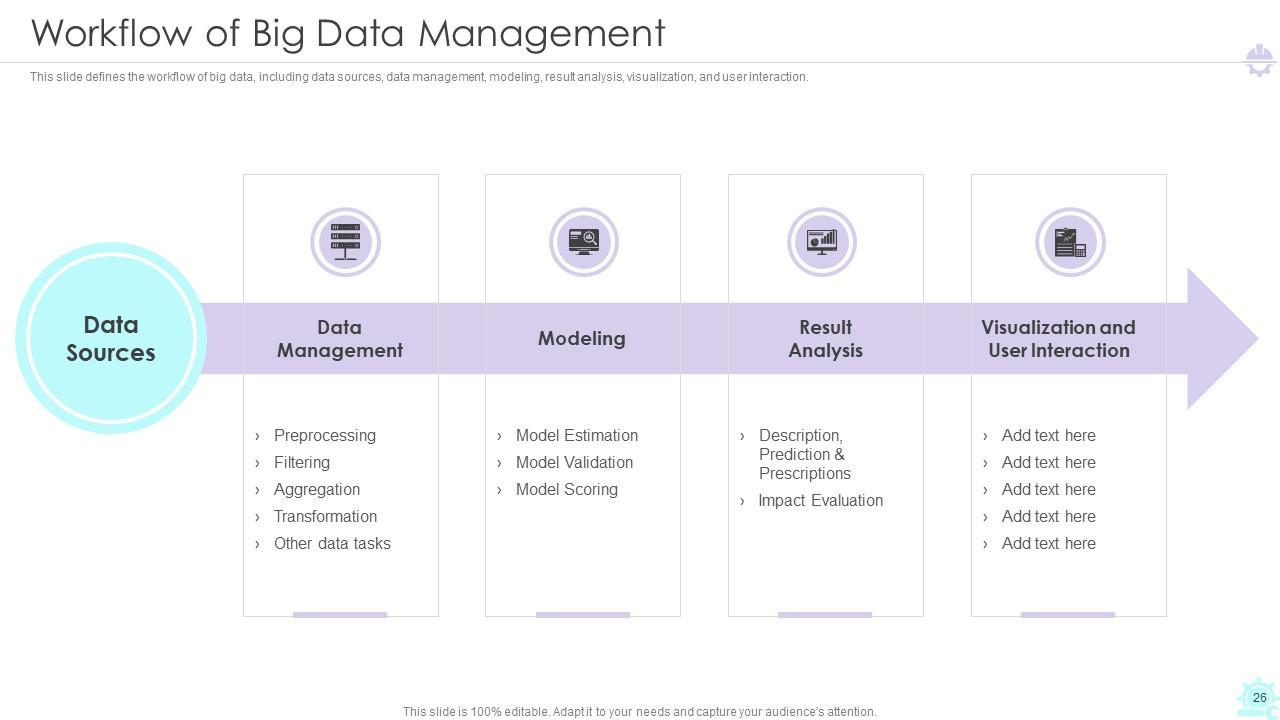

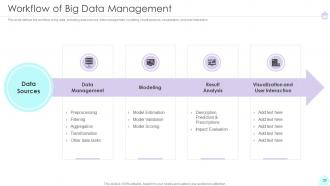

Slide 26: This slide defines the workflow of big data, including data sources, data management, visualization, and user interaction.

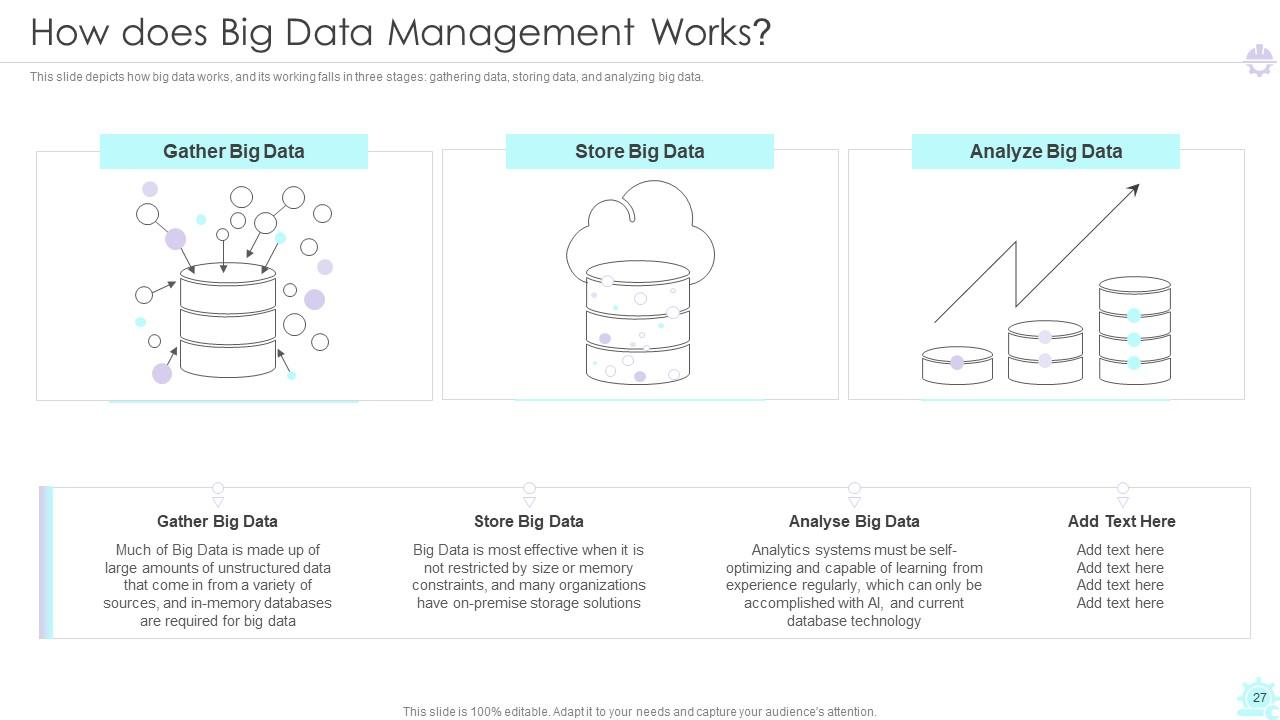

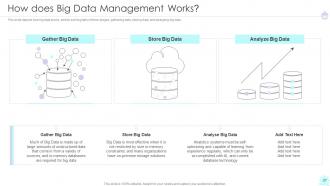

Slide 27: This slide depicts how big data works in three stages: gathering data, storing data, and analyzing big data.

Slide 28: This slide exhibits the table of contents: Technologies and Strategies we Offer for Big Data Management.

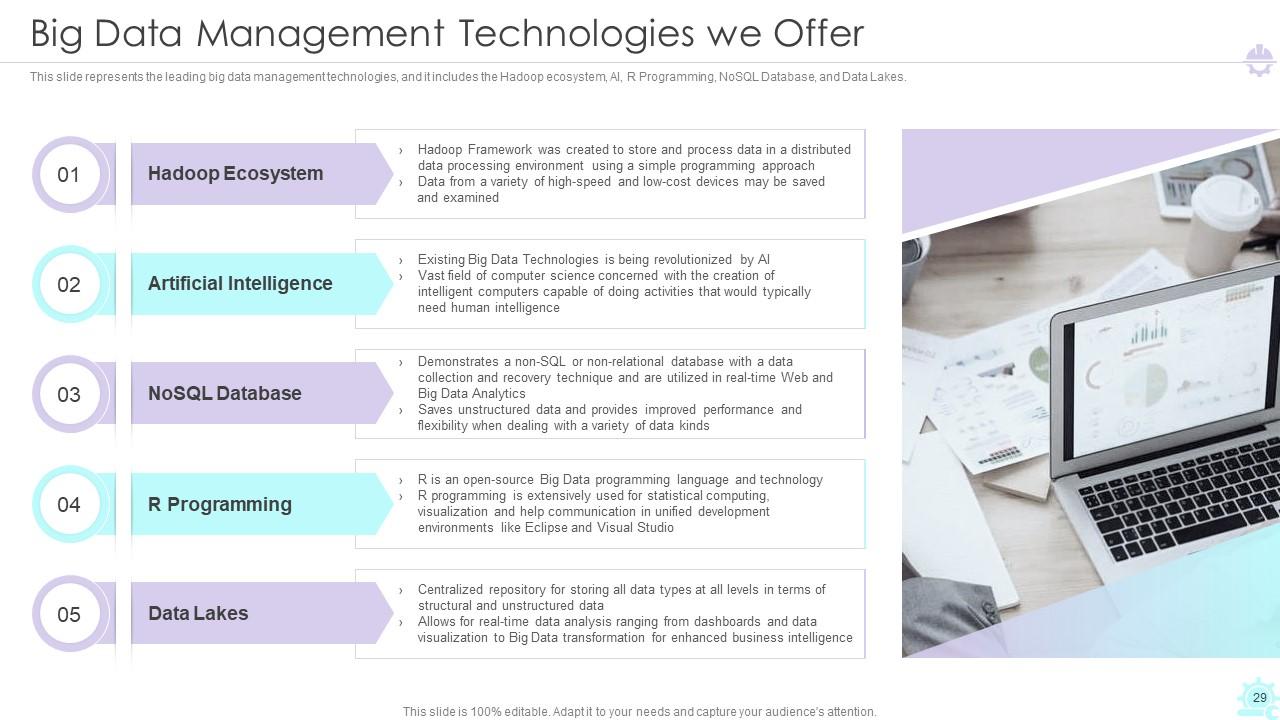

Slide 29: This slide represents the leading big data management technologies.

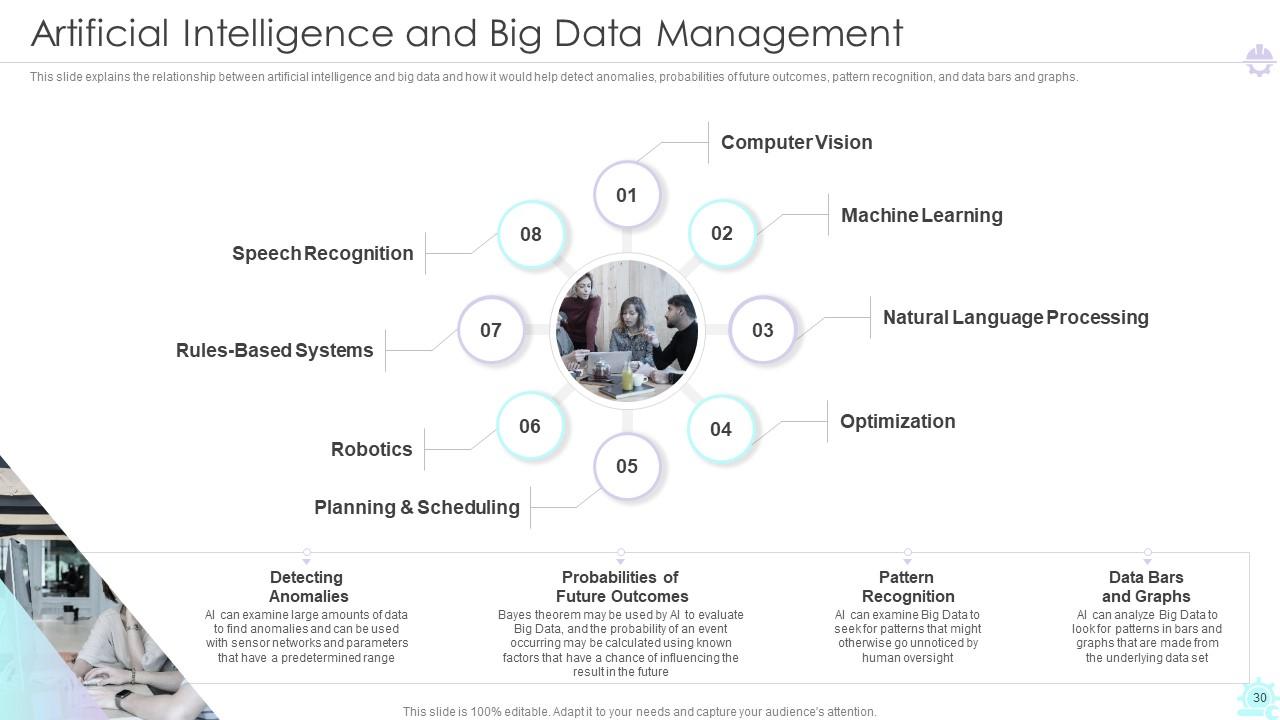

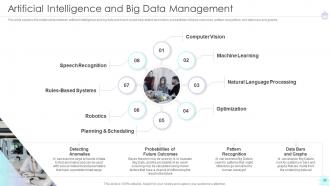

Slide 30: This slide explains the relationship between artificial intelligence and big data.

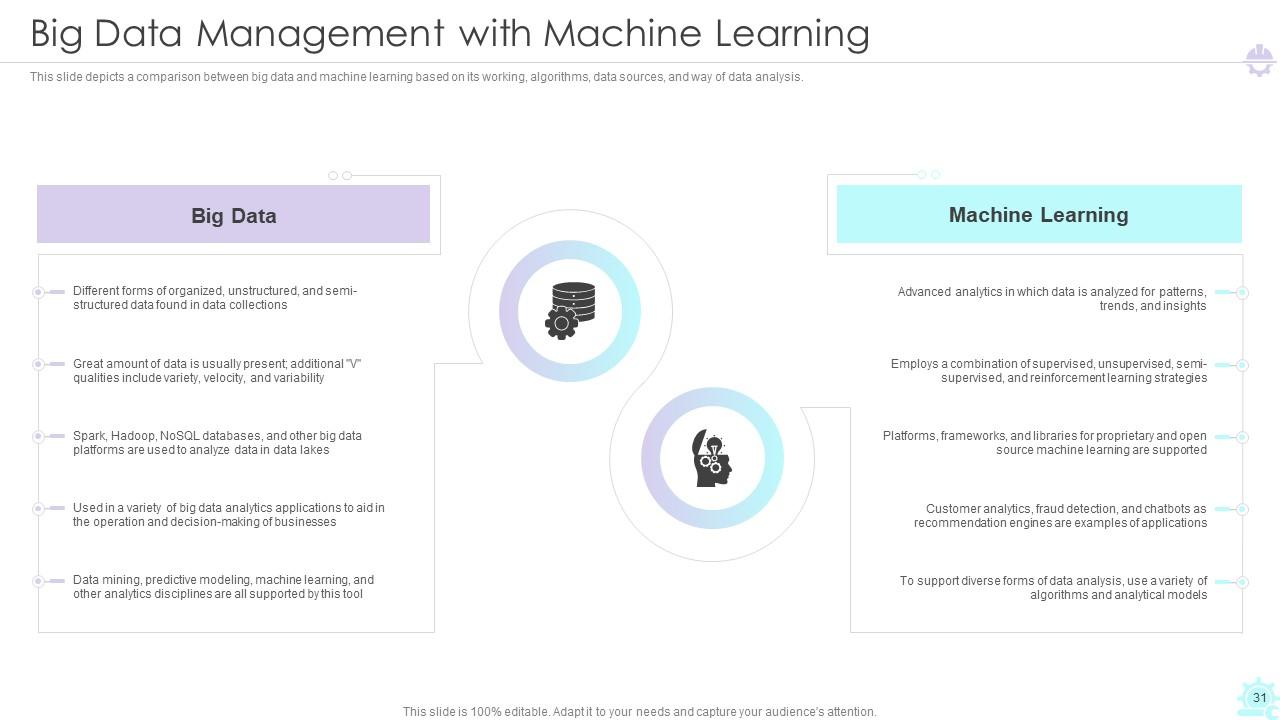

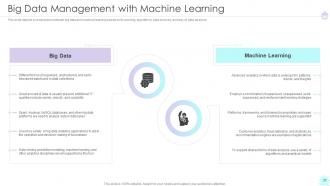

Slide 31: This slide depicts a comparison between big data and machine learning based on their working, algorithms, and data sources.

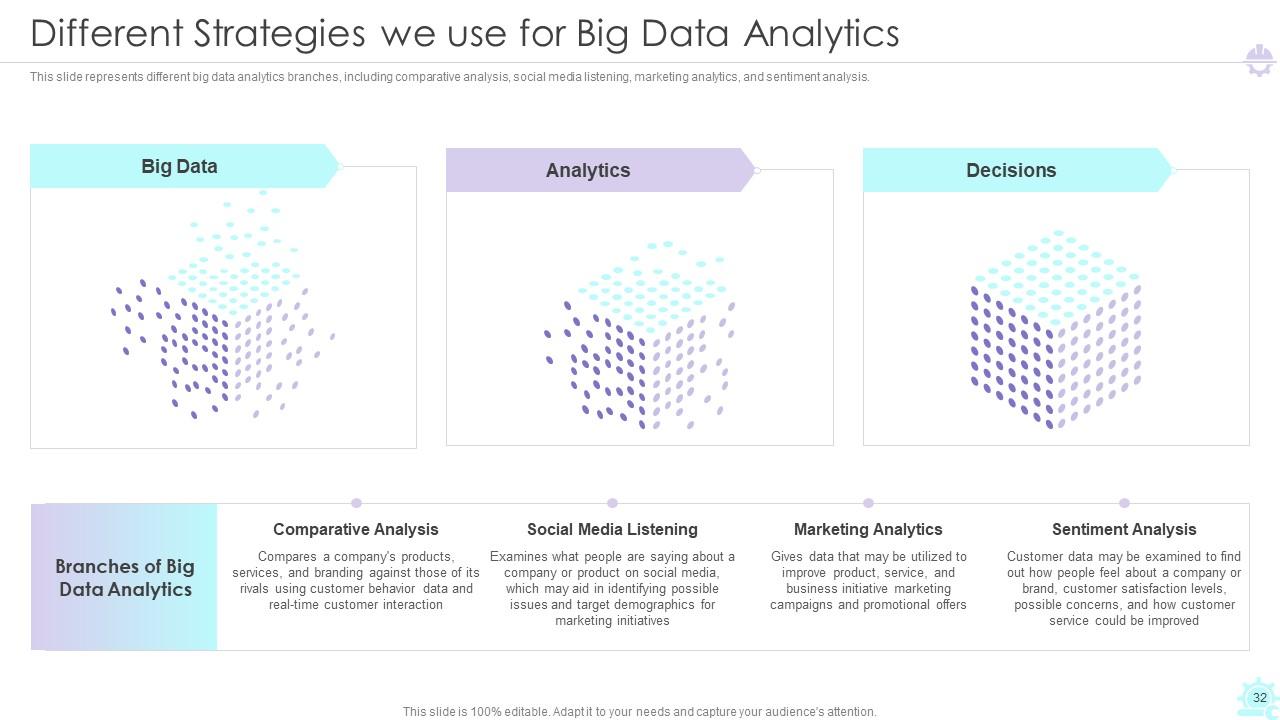

Slide 32: This slide represents the different strategies we use for big data analytics.

Slide 33: This slide exhibits the table of contents: Impacts and Benefits after Big Data Management.

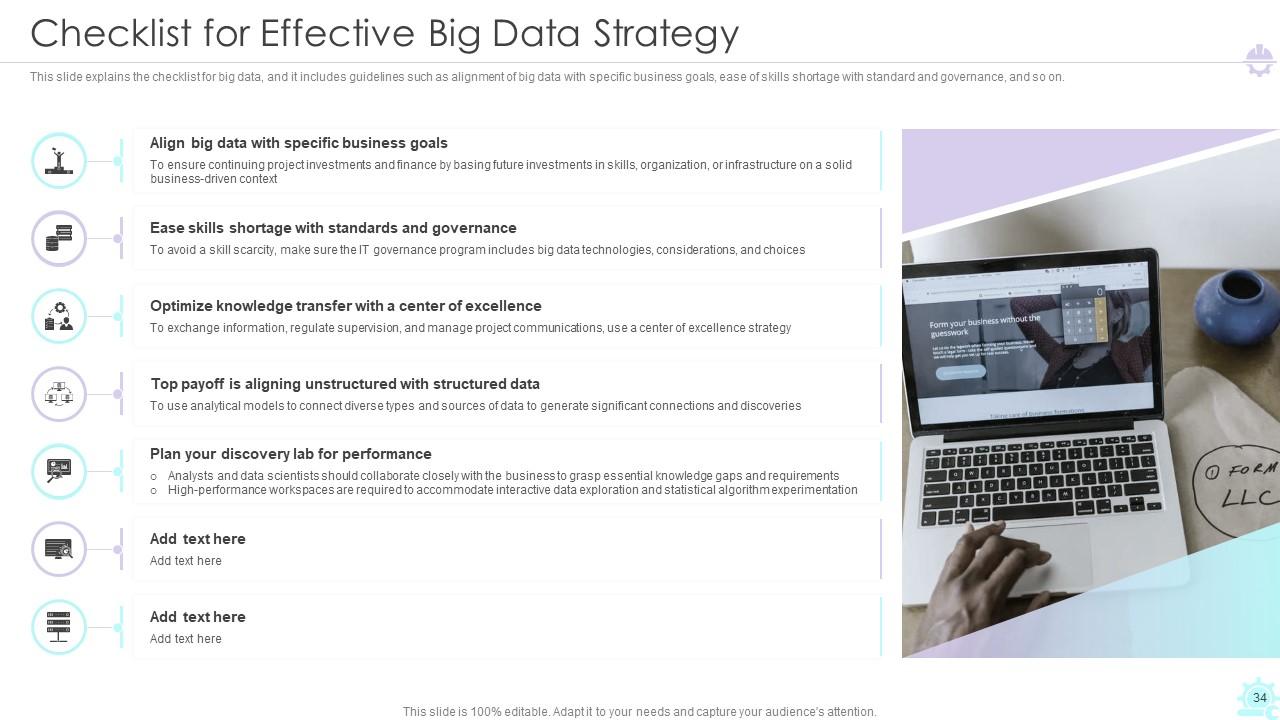

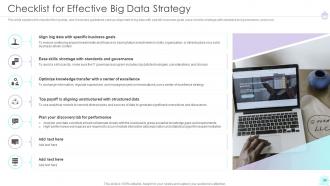

Slide 34: This slide explains the checklist for big data strategy.

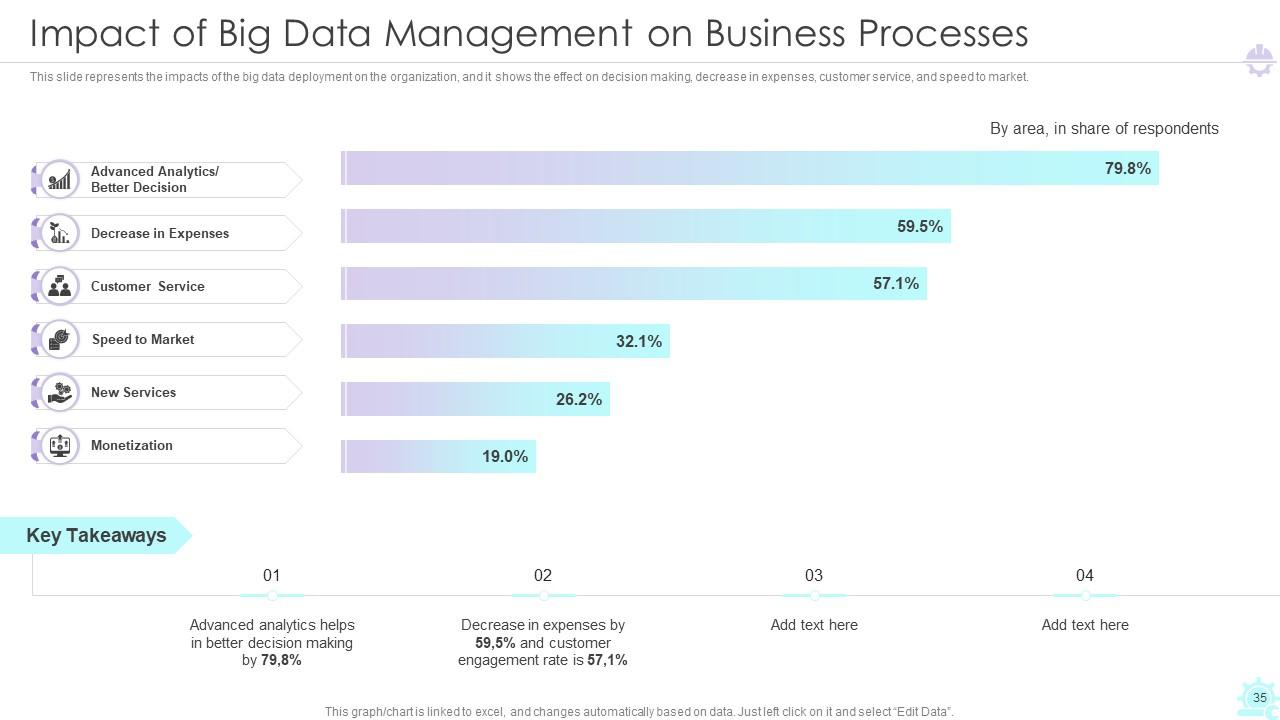

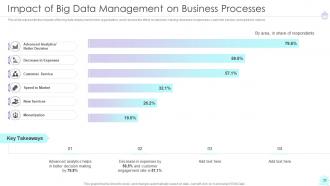

Slide 35: This slide represents the Impact of Big Data Management on Business Processes.

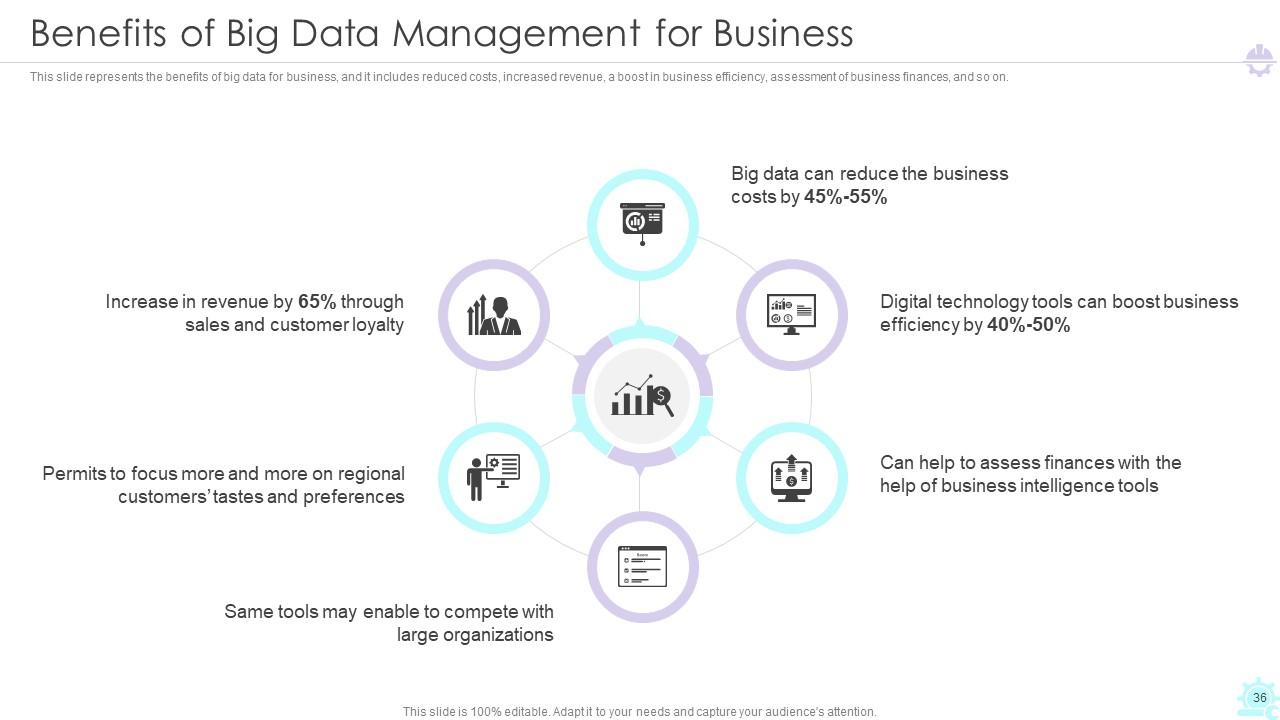

Slide 36: This slide represents the Benefits of Big Data Management for Business.

Slide 37: This slide exhibits the table of contents: Training and Budget for Big Data Management.

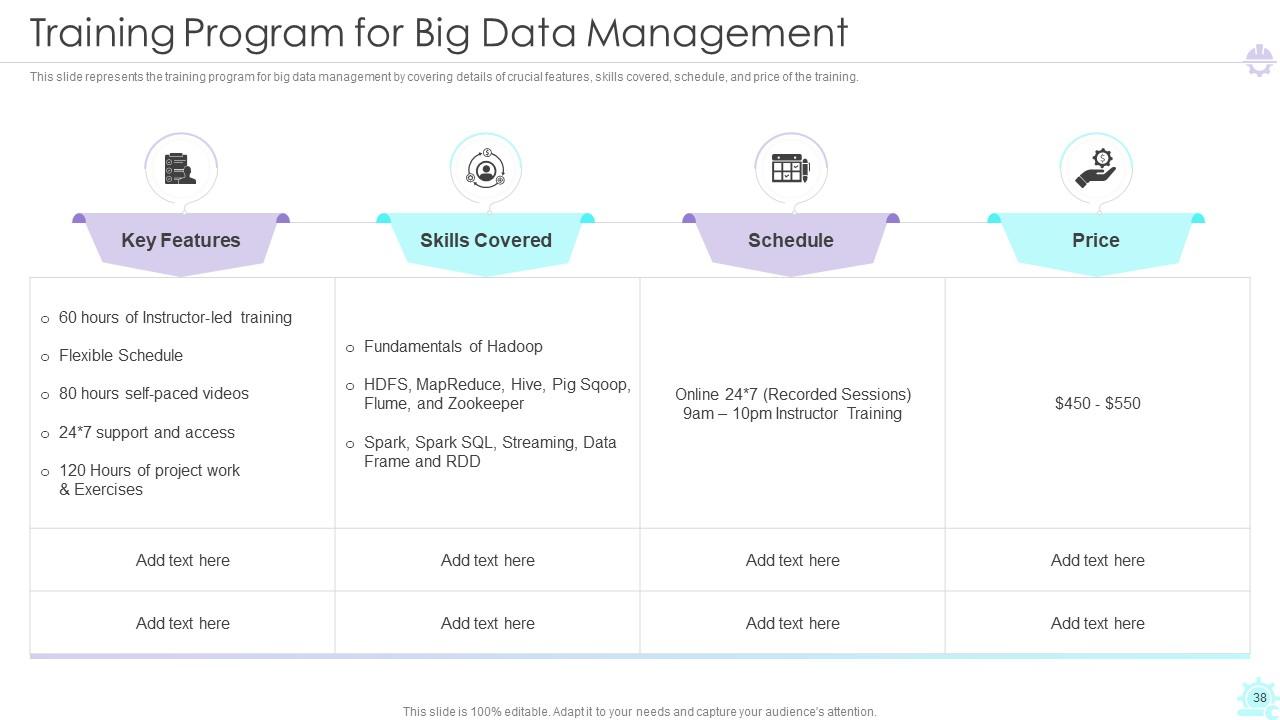

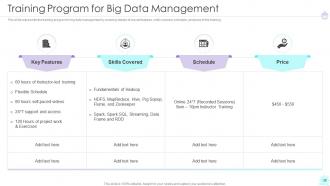

Slide 38: This slide represents the training program for big data management.

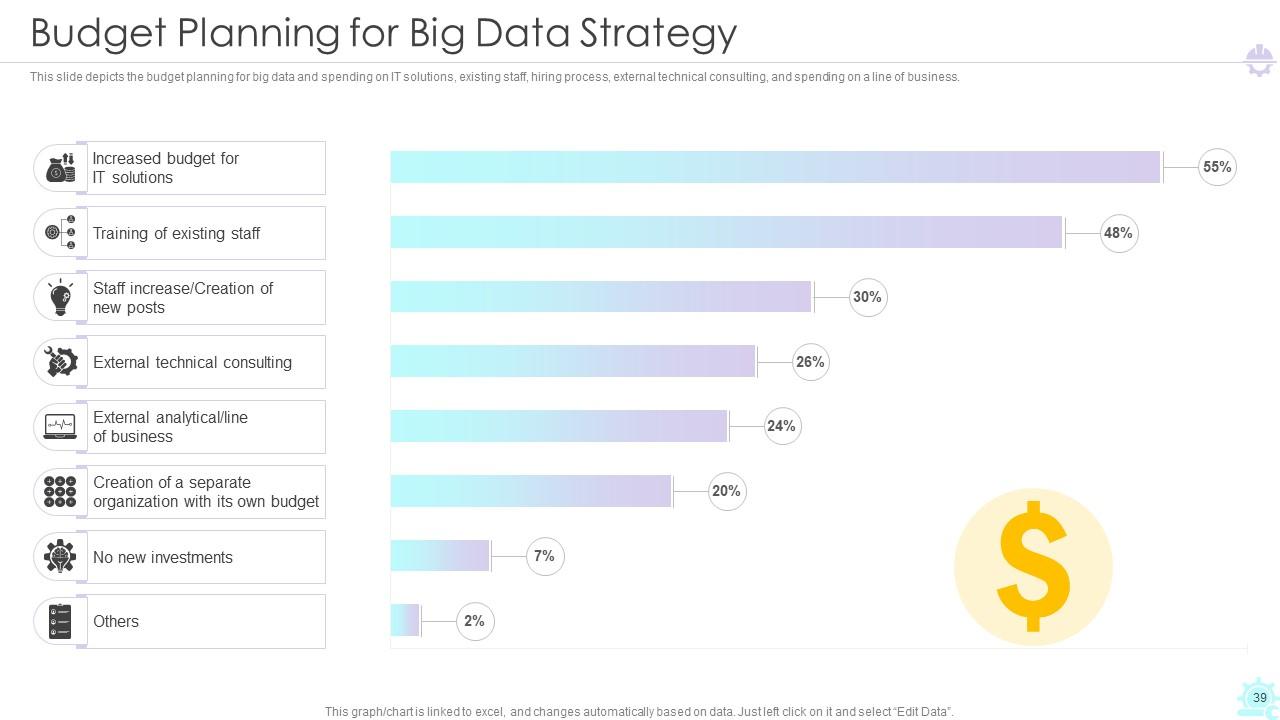

Slide 39: This slide depicts the budget planning for big data strategy.

Slide 40: This slide exhibits the table of contents: Different Industries in Which we Manage Big Data.

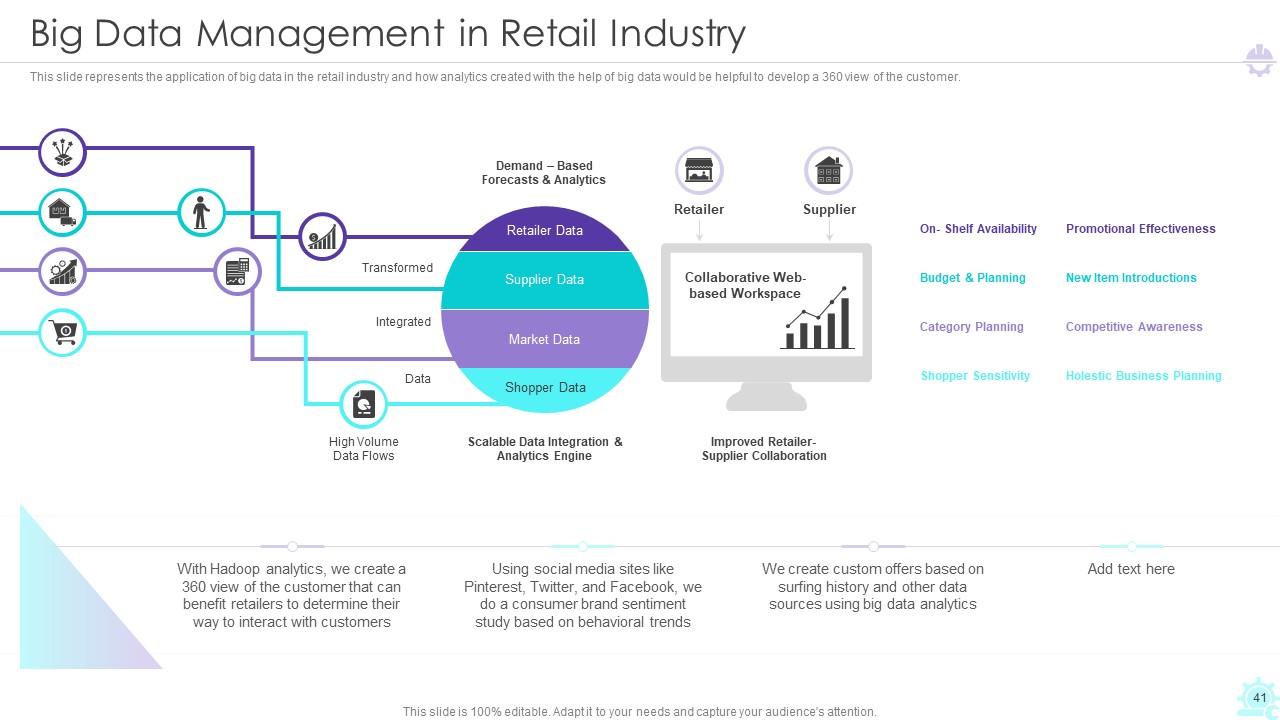

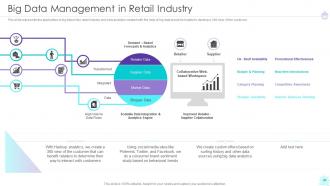

Slide 41: This slide represents the application of big data in the retail industry.

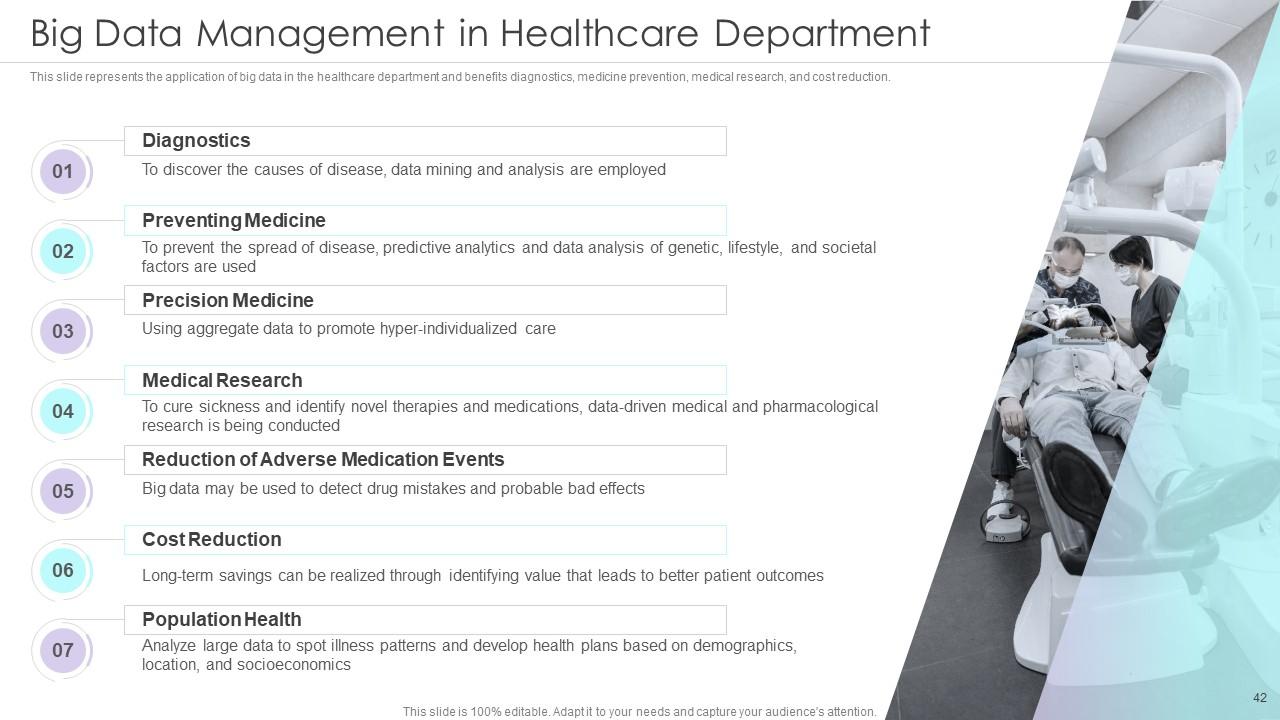

Slide 42: This slide represents the application of big data in the healthcare department.

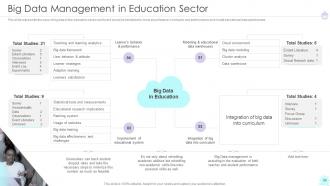

Slide 43: This slide represents the uses of big data in the education sector.

Slide 44: This slide represents the uses of big data in the E-commerce business.

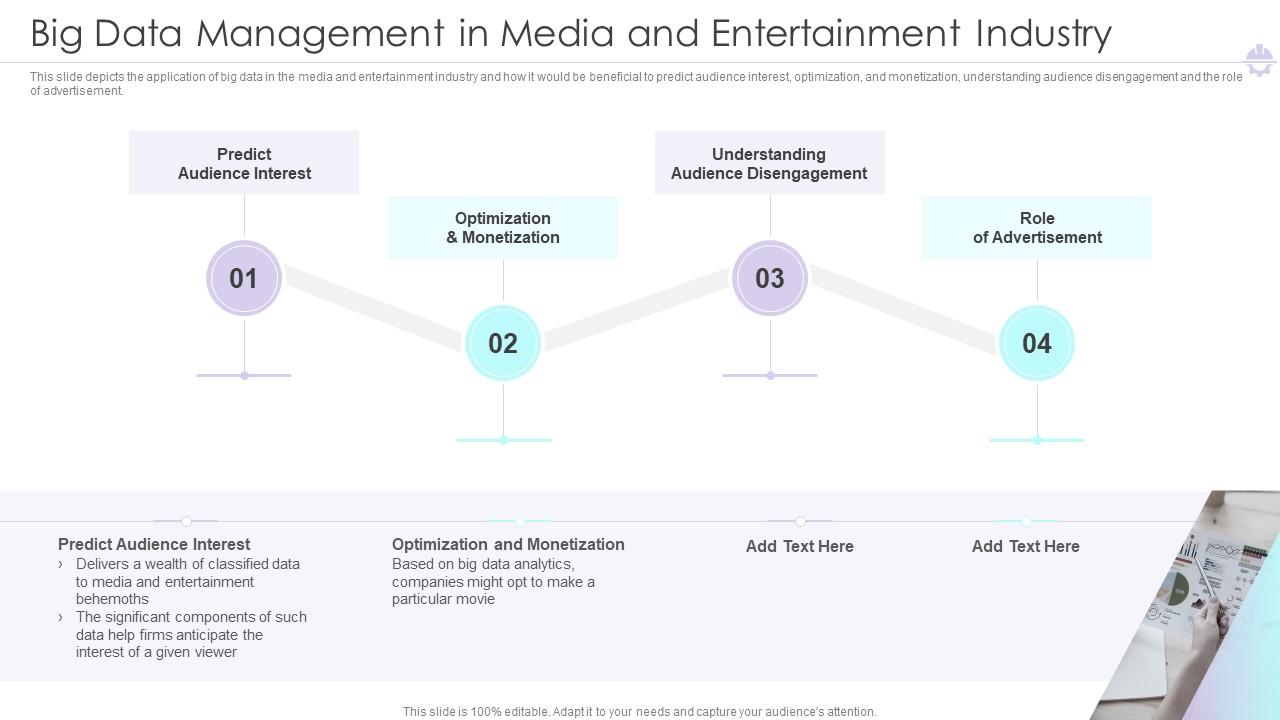

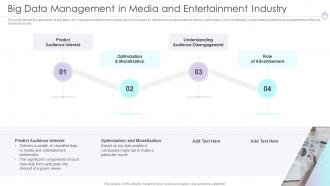

Slide 45: This slide depicts the application of big data in the media and entertainment industry.

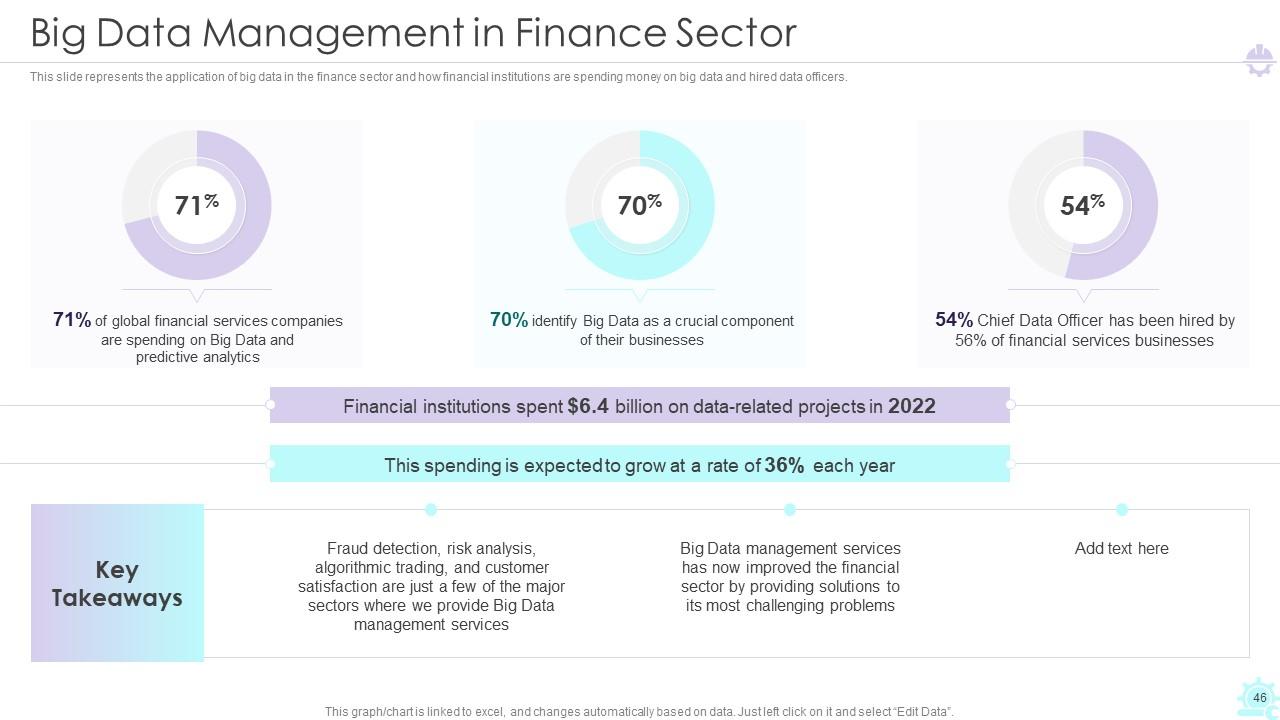

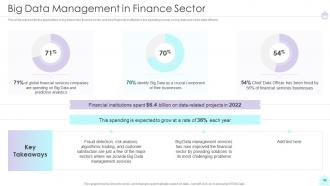

Slide 46: This slide represents the application of big data in the finance sector.

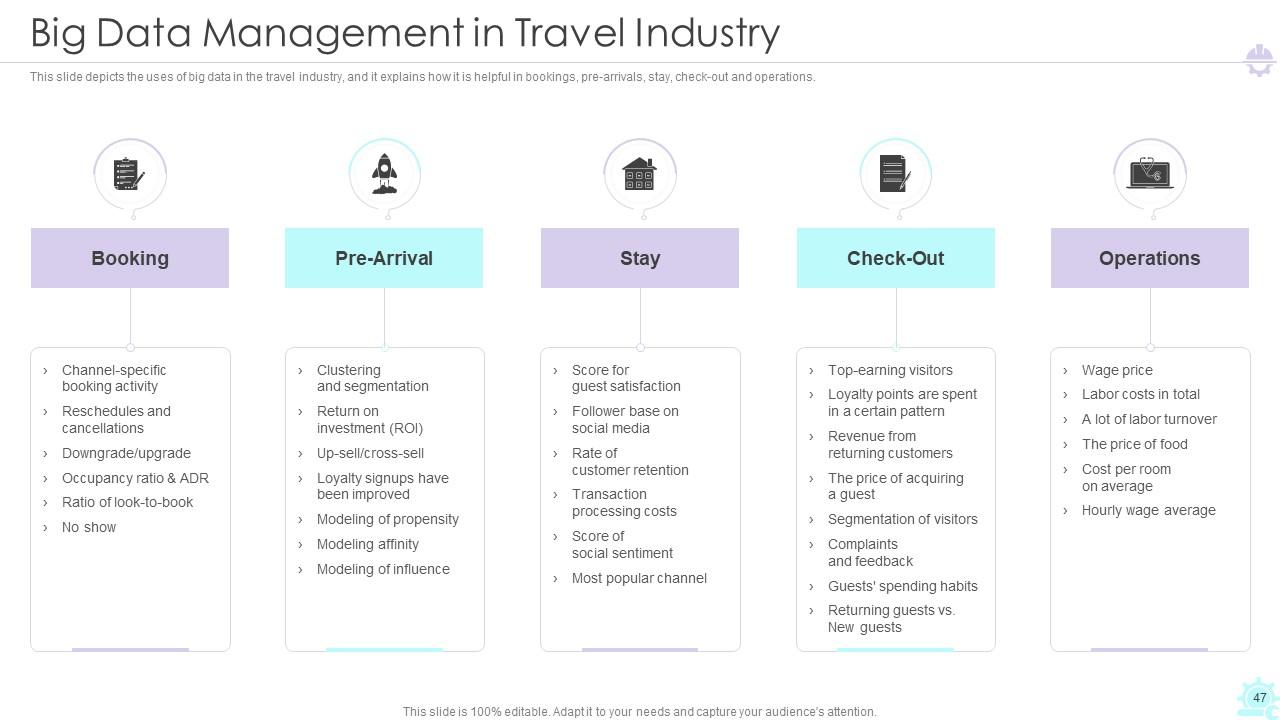

Slide 47: This slide depicts the uses of big data in the travel industry.

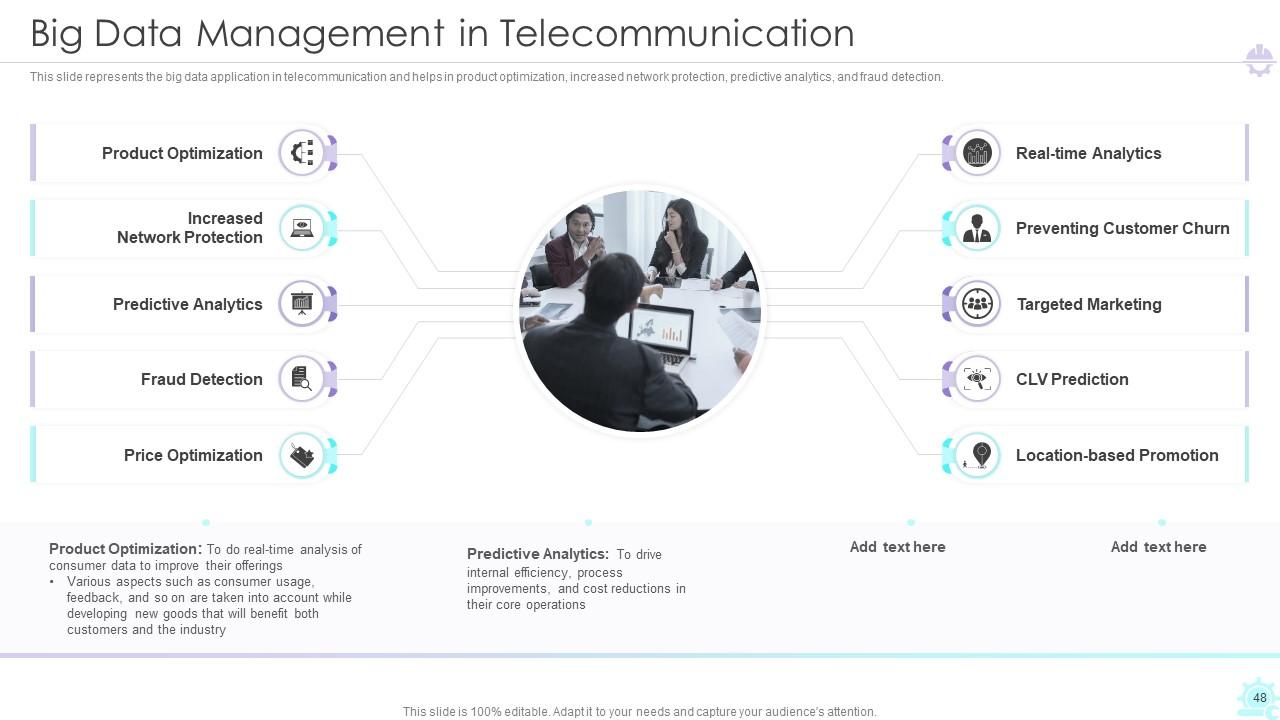

Slide 48: This slide represents the big data application in telecommunication.

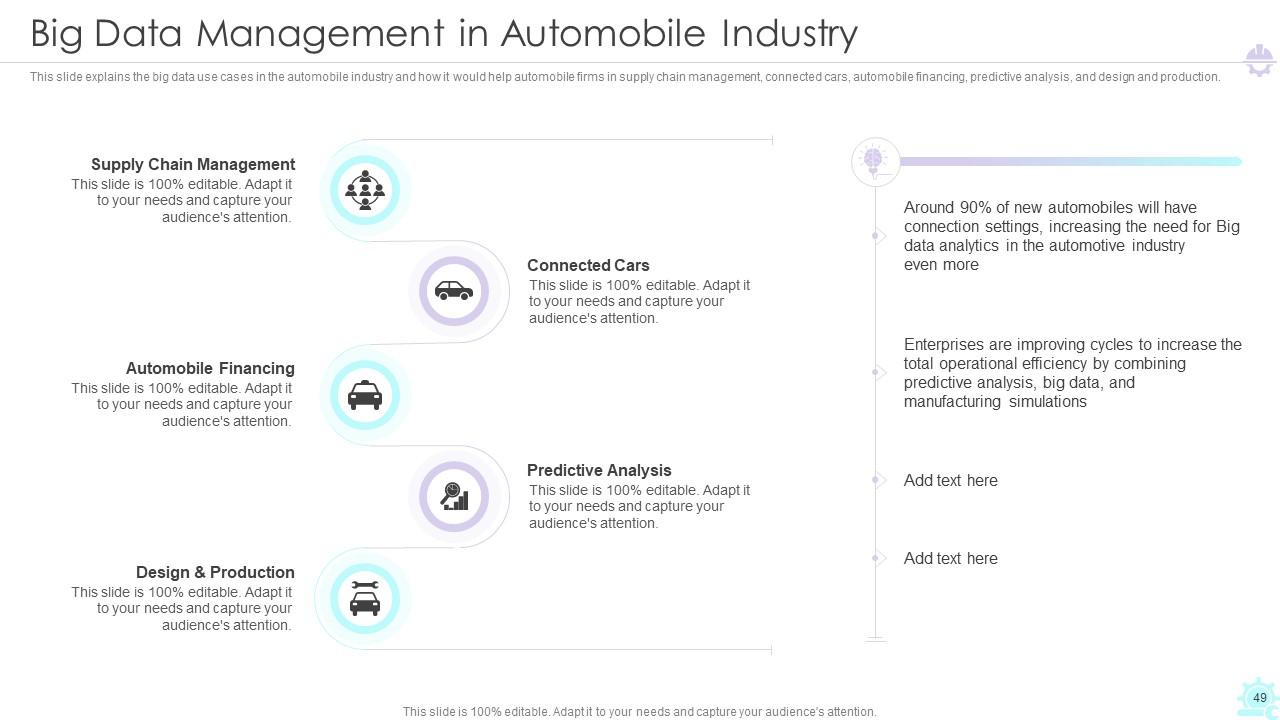

Slide 49: This slide explains the big data use cases in the automobile industry.

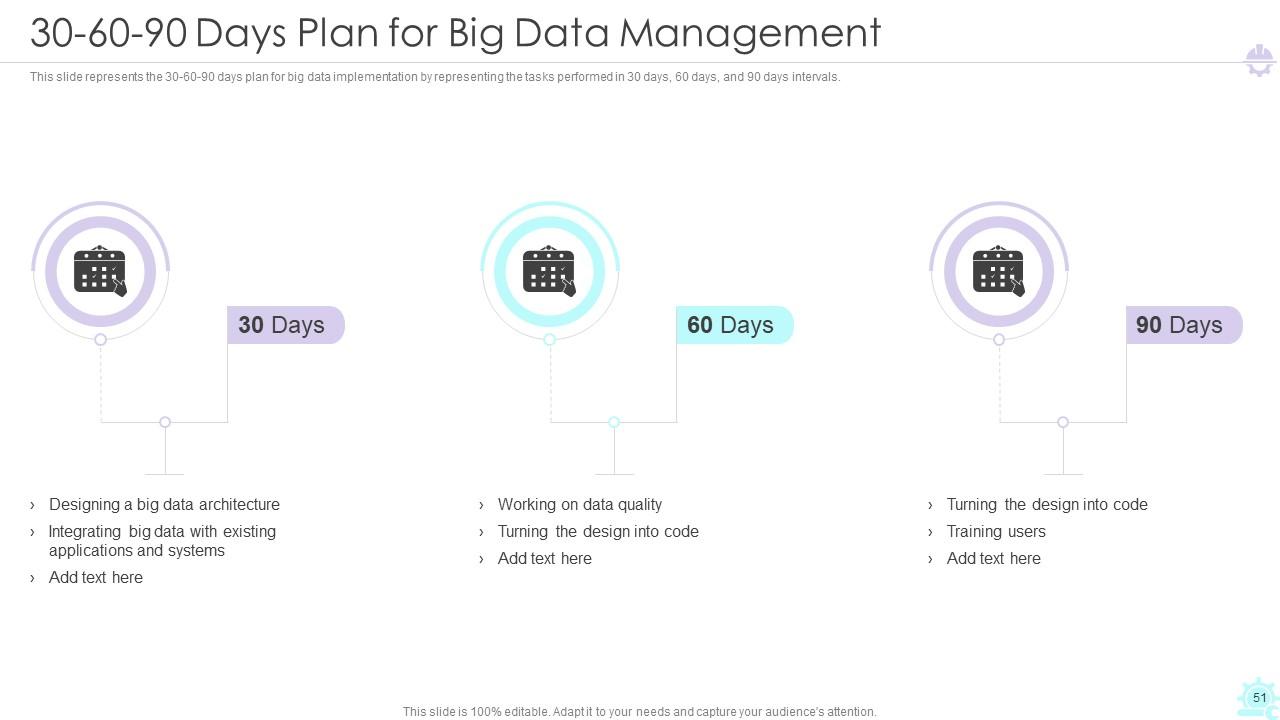

Slide 50: This slide exhibits the table of contents: 30-60-90 Days Plan for Big Data Management.

Slide 51: This slide represents the 30-60-90 days plan for big data implementation.

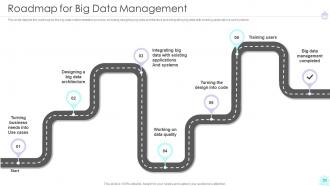

Slide 52: This slide exhibits the table of contents: Roadmap for Big Data Management.

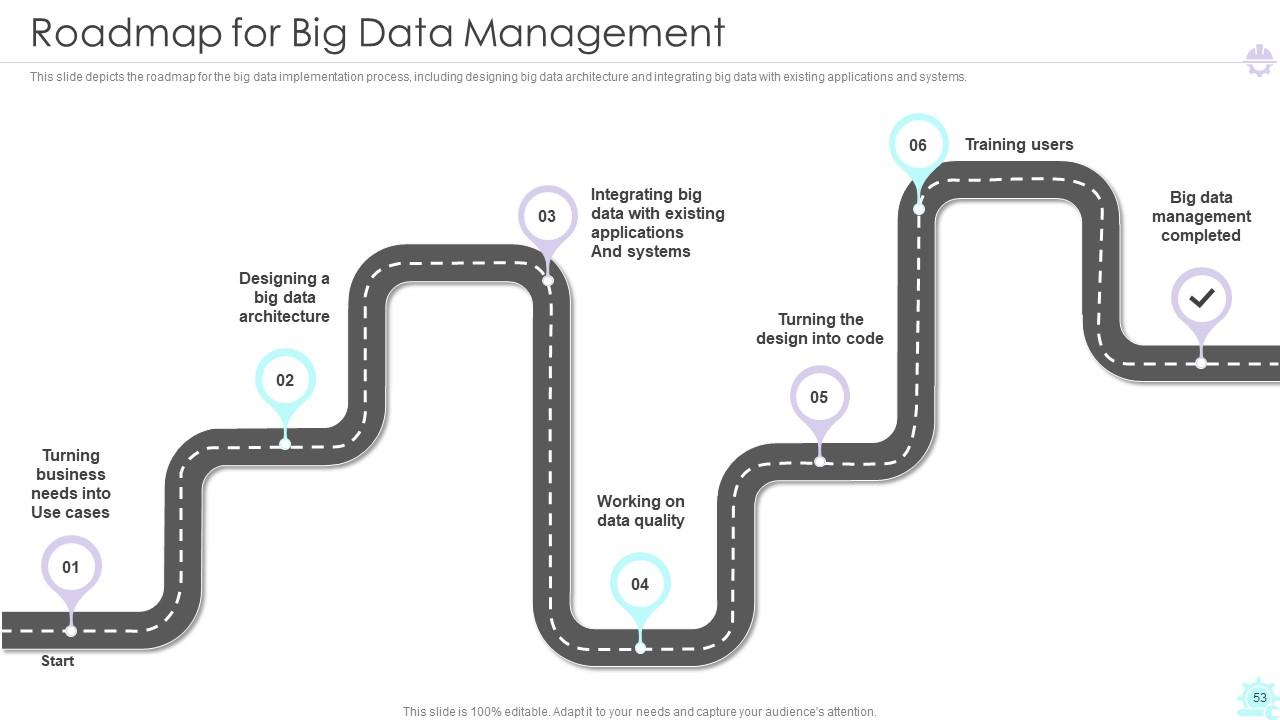

Slide 53: This slide exhibits the table of contents: Roadmap for Big Data Management.

Slide 54: This slide exhibits the table of contents: Dashboard for Big Data Management.

Slide 55: This slide represents the dashboards for big data deployment.

Slide 56: This is the icons slide.

Slide 57: This slide presents the title for additional slides.

Slide 58: This slide presents your company's vision, mission and goals.

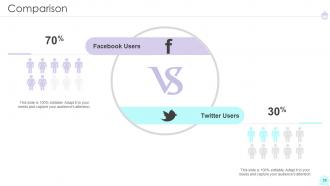

Slide 59: This slide presents a comparison of Facebook users and Twitter users.

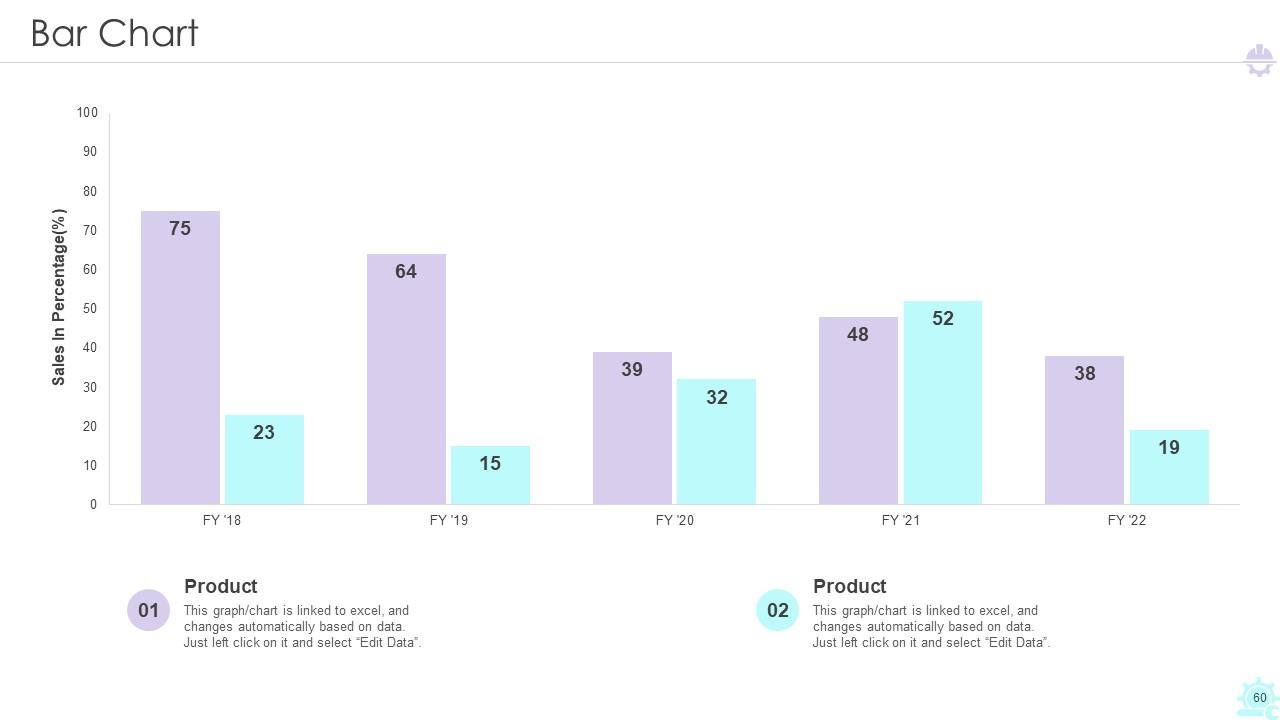

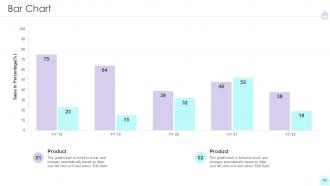

Slide 60: This slide displays a yearly bar graph for different products.

Slide 61: This slide exhibits ideas generated.

Slide 62: This slide displays a puzzle.

Slide 63: This slide shows a roadmap.

Slide 64: This slide shows a mind map.

Slide 65: This is the thank-you slide and contains the company's contact details, such as office address, phone number, etc.

Big Data Engineer Powerpoint Presentation Slides with all 70 slides:

Use our Big Data Engineer Powerpoint Presentation Slides to save your valuable time. They are ready-made to fit into any presentation structure.

FAQs

Managing big data poses several challenges, including a lack of knowledge and professionals, tool selection, high hardware and software development costs, the complexity of managing data quality, and converting data into valuable insights.

There are three main types of big data: structured, unstructured, and semi-structured.
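As a rough illustration of these three types, the sketch below loads one tiny sample of each using only the Python standard library. The sample strings and variable names are invented for this example and are not taken from the deck.

```python
import csv
import io
import json

# Structured: fixed columns, every row has the same fields (hypothetical sample).
structured_source = "order_id,amount\n1001,25.50\n1002,13.00\n"
rows = list(csv.DictReader(io.StringIO(structured_source)))

# Semi-structured: self-describing keys, nesting and optional fields allowed.
semi_structured_source = (
    '{"user": "a17", "tags": ["sale", "mobile"], "device": {"os": "android"}}'
)
event = json.loads(semi_structured_source)

# Unstructured: free text with no schema; here we can only count words.
unstructured_source = "Loved the product, but delivery took too long."
word_count = len(unstructured_source.split())

print(rows[0]["amount"], event["device"]["os"], word_count)
```

Structured data can be queried by column name straight away, semi-structured data needs its keys inspected first, and unstructured text needs further processing before it yields fields at all.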

Big data architecture includes various components such as data sources, data processing and storage, data analysis and visualization, and user interaction. It also consists of layers such as the data source layer, ingestion layer, processing layer, storage layer, analysis layer, and visualization layer.
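One way to picture the layers named above is as a chain of small functions, each handing its output to the next. The following is a minimal sketch under simplifying assumptions: the sensor records, function names, and in-memory "storage" are all hypothetical, and a real deployment would use dedicated tools at each layer.

```python
from collections import defaultdict
from statistics import mean


def source_layer():
    """Data source layer: hard-coded sample records stand in for real feeds."""
    return [
        {"sensor": "a", "reading": "21.5"},
        {"sensor": "b", "reading": "19.0"},
        {"sensor": "a", "reading": "bad"},
        {"sensor": "a", "reading": "22.5"},
    ]


def ingestion_layer(records):
    """Ingestion layer: accept only records that carry the required fields."""
    return [r for r in records if "sensor" in r and "reading" in r]


def processing_layer(records):
    """Processing layer: convert readings to floats and drop unparsable ones."""
    cleaned = []
    for r in records:
        try:
            cleaned.append({"sensor": r["sensor"], "reading": float(r["reading"])})
        except ValueError:
            continue
    return cleaned


def storage_layer(records):
    """Storage layer: group readings by sensor in an in-memory dict."""
    store = defaultdict(list)
    for r in records:
        store[r["sensor"]].append(r["reading"])
    return dict(store)


def analysis_layer(store):
    """Analysis layer: compute the average reading per sensor."""
    return {sensor: mean(values) for sensor, values in store.items()}


def visualization_layer(summary):
    """Visualization layer: render one text bar per sensor."""
    return [
        f"{sensor}: {'#' * round(avg)} {avg:.1f}"
        for sensor, avg in sorted(summary.items())
    ]


def run_pipeline():
    """Run every layer in order and return the summary and rendered lines."""
    stored = storage_layer(processing_layer(ingestion_layer(source_layer())))
    summary = analysis_layer(stored)
    return summary, visualization_layer(summary)


if __name__ == "__main__":
    for line in run_pipeline()[1]:
        print(line)
```

Keeping each layer as a separate function mirrors the layered description: any one stage can be swapped out without touching the others.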

Some of the leading technologies used for big data management include Hadoop, Spark, and NoSQL databases. Strategies for big data analytics include data mining, predictive modeling, and sentiment analysis.

A well-planned big data management strategy can help organizations make informed decisions, improve operational efficiency, reduce costs, and gain a competitive edge in the market.

“I required a slide for a board meeting and was so satisfied with the final result!! So much went into designing the slide and the communication was amazing! Can’t wait to have my next slide!”

“The presentation template I got from you was a very useful one. My presentation went very well and the comments were positive. Thank you for the support. Kudos to the team!”