Research methodology with analysis template 1

Be growth-oriented with our Research Methodology With Analysis Template 1. Give a big boost to your enterprise.


- Google Slides is new, free presentation software from Google.

- All our content is 100% compatible with Google Slides.

- Just download our designs and upload them to Google Slides; they will work automatically.

- Amaze your audience with SlideTeam and Google Slides.



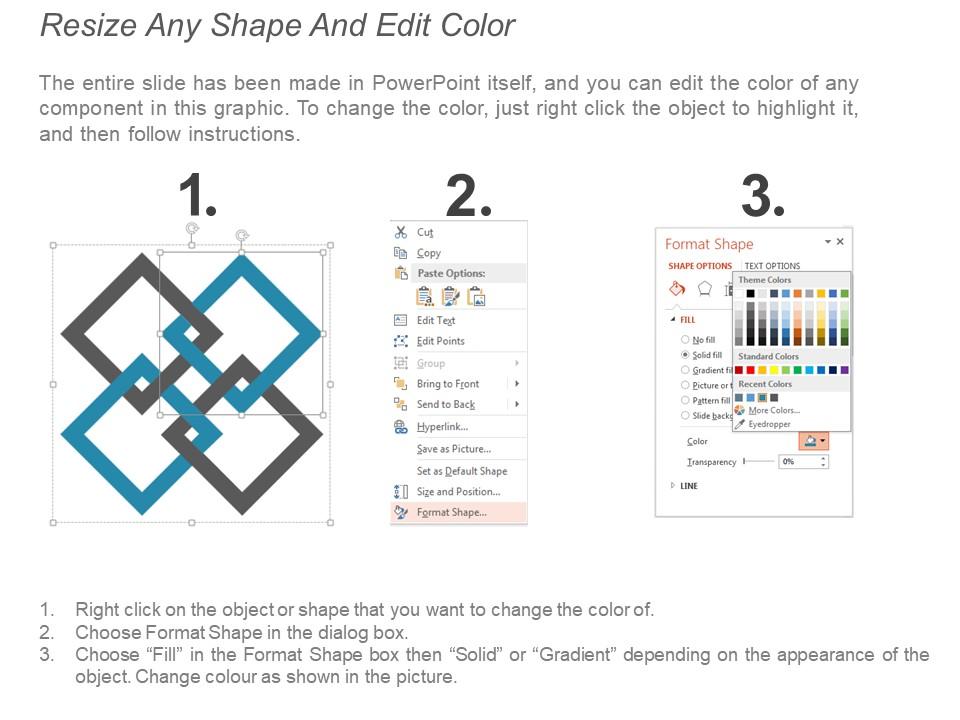

- Widescreen aspect ratio is becoming a very popular format. When you download this product, the ZIP file will contain it in both standard and widescreen formats.


- Some older products that we have may only be in standard format, but they can easily be converted to widescreen.

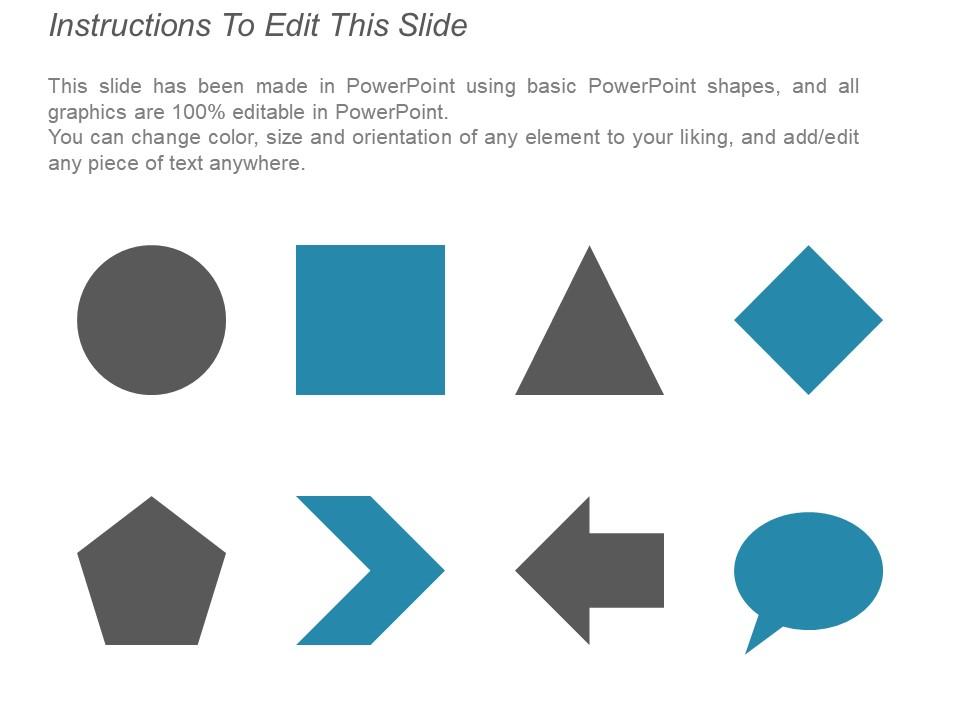

- To do this, open the SlideTeam product in PowerPoint, go to Design (on the top bar) -> Page Setup, and select "On-screen Show (16:9)" in the "Slides Sized for" drop-down.

- The slide or theme will change to widescreen, and all graphics will adjust automatically. You can similarly convert our content to any other desired screen aspect ratio.



PowerPoint presentation slides

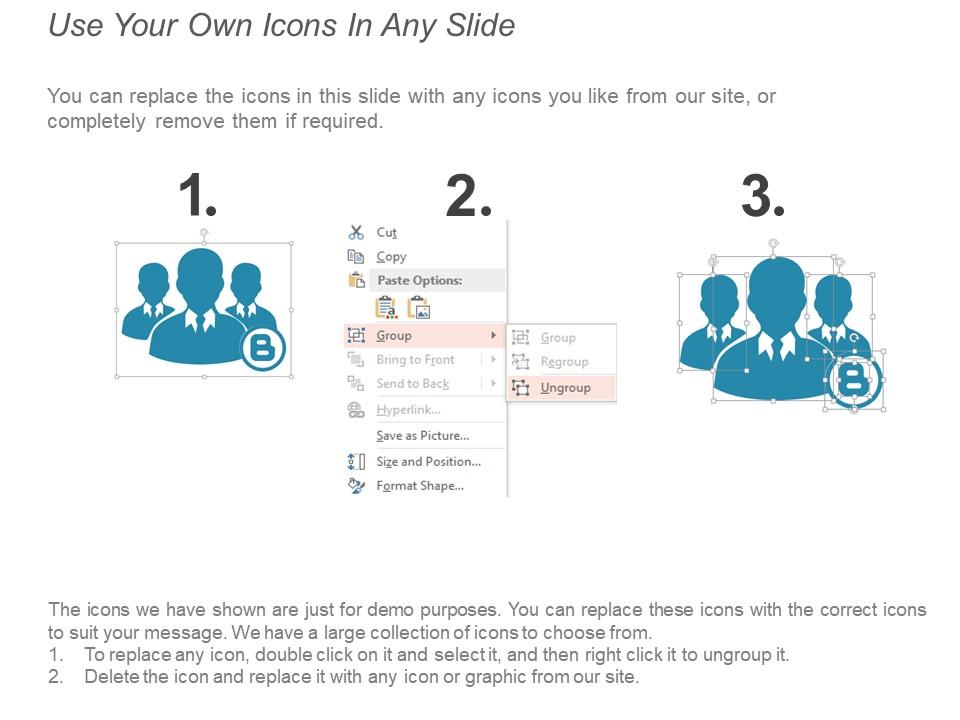

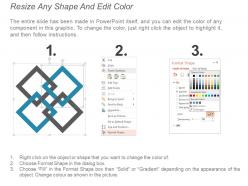

Presenting Research Methodology With Analysis Template 1, which presents a six-stage process. The stages in this process include research methodology, research method, and research techniques.


Research Methodology With Analysis Template 1, with all 5 slides:

Come full circle with our Research Methodology With Analysis Template 1. Get back to being at your absolute best.


Great quality product.


Good research work and creative work done on every template.