Disaster Recovery Management Powerpoint Presentation Slides

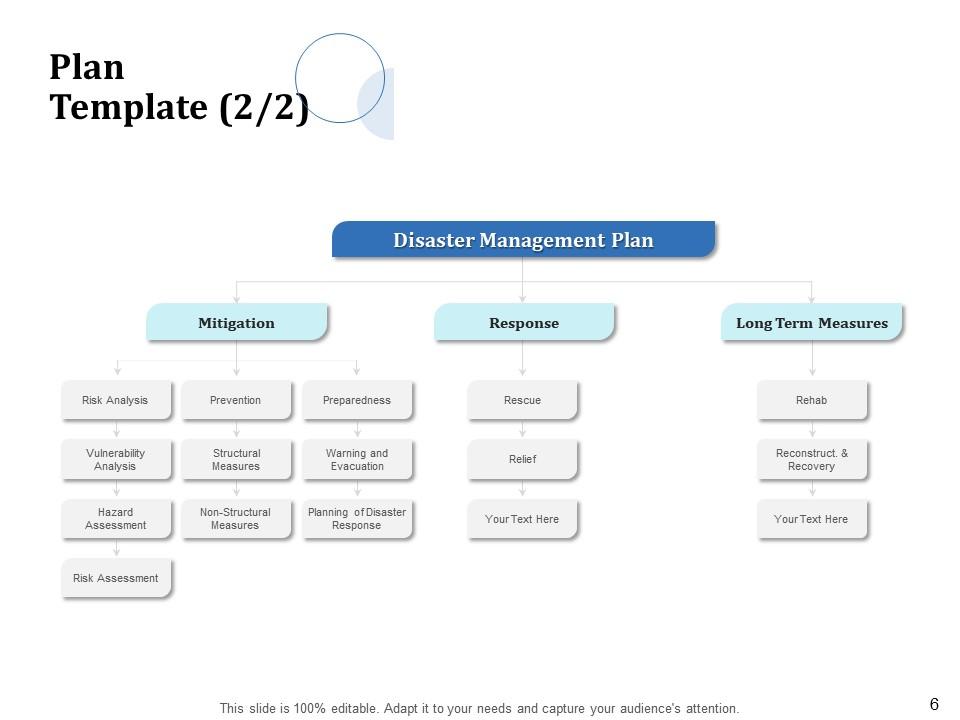

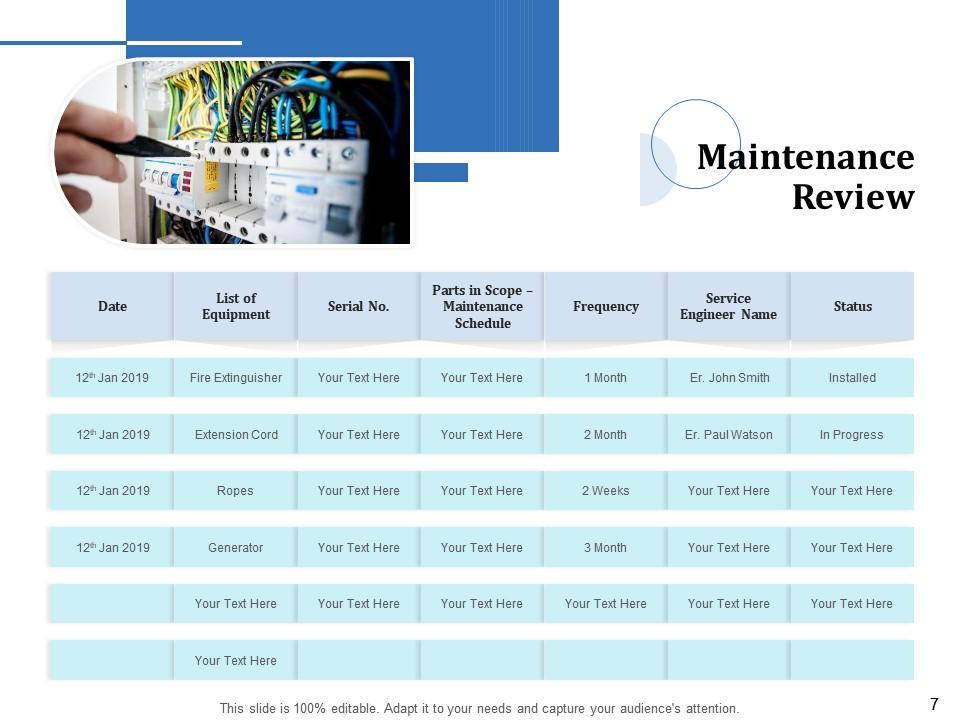

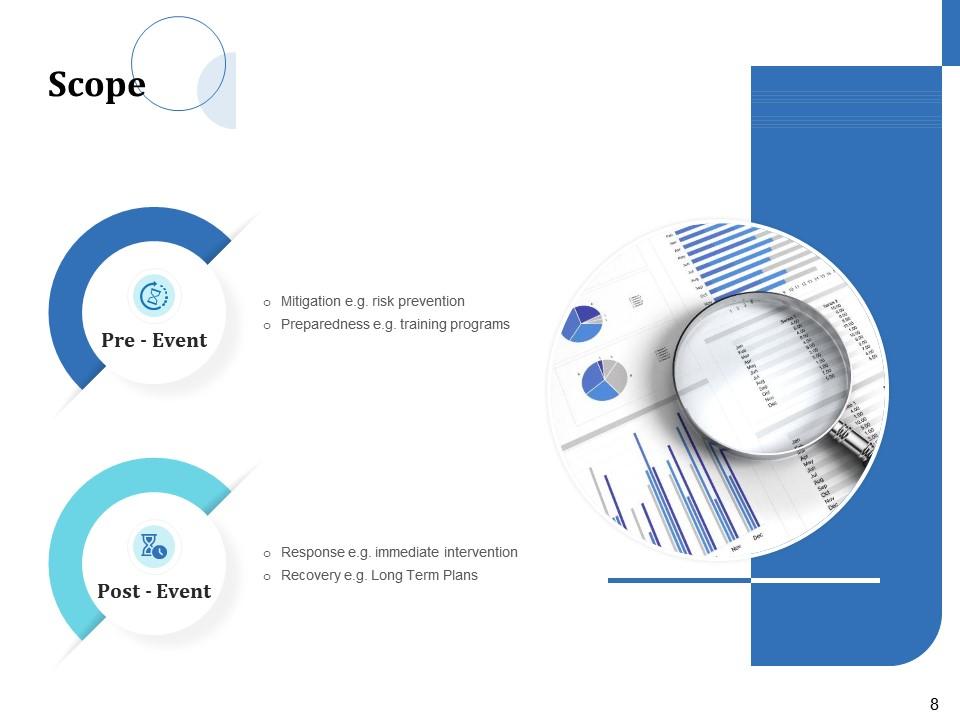

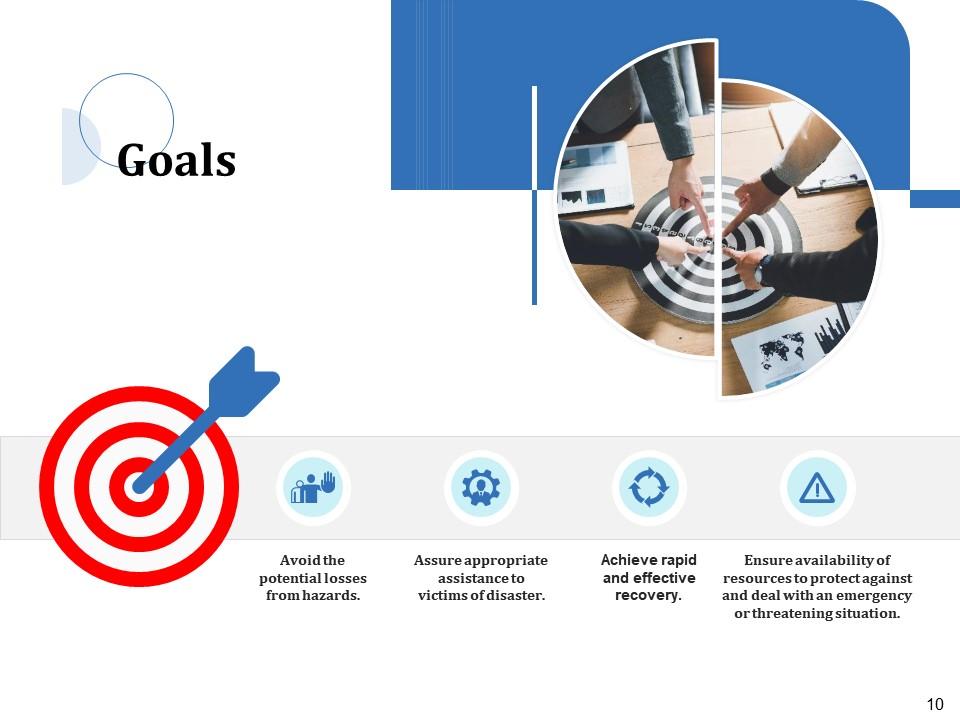

Disaster Recovery Management PowerPoint Presentation Slides gives you an impressive layout to formulate and explain your organization’s response plan to emergencies. Use this crisis management PPT theme to illustrate your disaster management plan in terms of mitigation, response, and long-term measures. The neat tabular format of this disaster control PowerPoint slideshow makes it easy to showcase the maintenance review. With this emergency response PPT template, you can lay out the structure for proper governance of disaster response. The emergency management PowerPoint presentation helps you demonstrate prevention and mitigation measures such as hazard identification, risk assessment, and financial impact analysis. This risk management PPT deck also helps you depict preparedness by elaborating on the business continuity plan. Further, showcase the immediate steps to take in an emergency, the response procedure, and the staff communication process using this disaster response PowerPoint theme. Gain access to impactful data visualization tools and informative content by downloading this threat management PPT slideshow.

- Google Slides is free presentation software from Google.

- All our content is 100% compatible with Google Slides.

- Just download our designs, and upload them to Google Slides and they will work automatically.

- Amaze your audience with SlideTeam and Google Slides.

- Widescreen is becoming a very popular aspect ratio. When you download this product, the downloaded ZIP will contain it in both standard and widescreen formats.

- Some older products that we have may only be in standard format, but they can easily be converted to widescreen.

- To do this, open the SlideTeam product in PowerPoint, go to Design (on the top bar) -> Page Setup, and select "On-screen Show (16:9)" in the drop-down for "Slides Sized for".

- The slide or theme will change to widescreen, and all graphics will adjust automatically. You can similarly convert our content to any other desired screen aspect ratio.

PowerPoint presentation slides

Presenting Disaster Recovery Management PowerPoint Presentation Slides. This complete PPT deck is 50 slides long. All the templates feature 100% customizability. You can make changes to the font, text, patterns, background, and colors. Converting the PPT file format into PDF, PNG, and JPG is fairly simple. You can even view the PowerPoint template deck on Google Slides. Our PPT slideshow supports two display aspect ratios, standard and widescreen.

Content of this PowerPoint Presentation

Slide 1: This slide introduces Disaster Recovery Management. State Your Company name and begin.

Slide 2: This slide displays Content.

Slide 3: This slide displays Introduction.

Slide 4: This slide explains the Purpose.

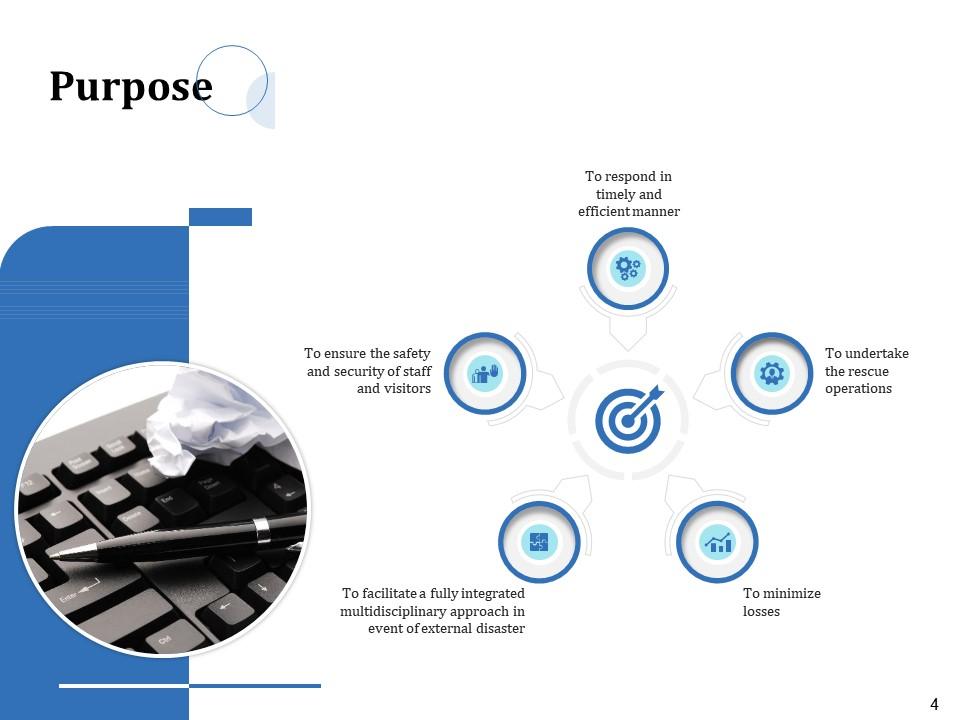

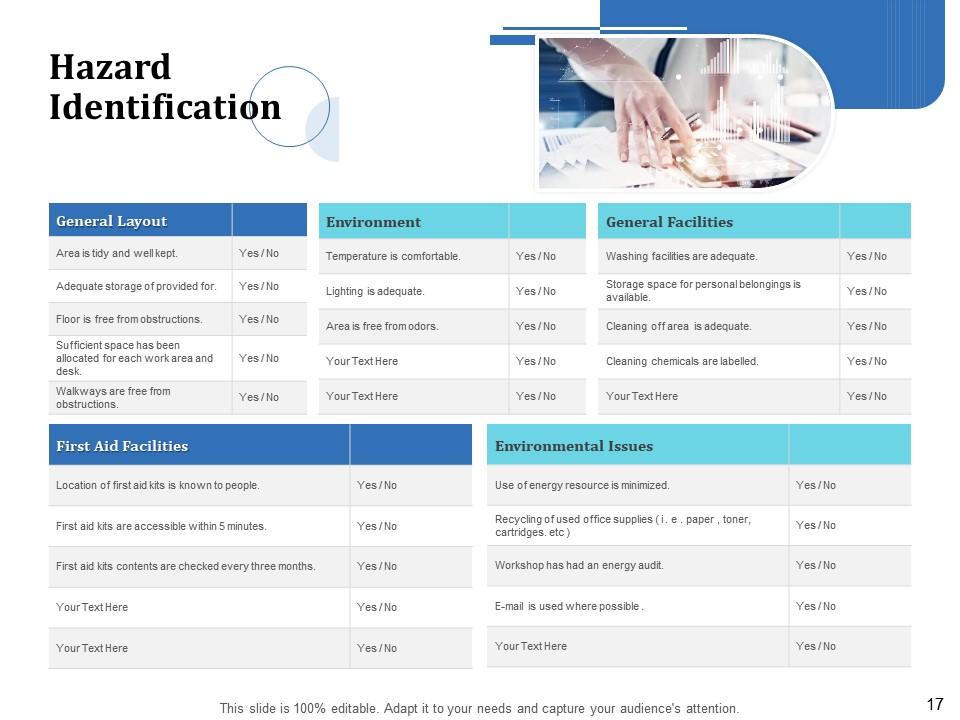

Slide 5: This slide showcases Hazard Identification / Safety Assessment.

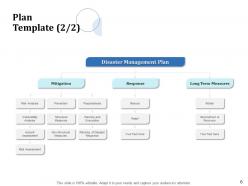

Slide 6: This slide depicts Disaster Management Plan.

Slide 7: This slide showcases Maintenance Review.

Slide 8: This slide represents Scope.

Slide 9: This slide lets you create a solid pre-, during-, and post-event approach.

Slide 10: This slide depicts the Goals.

Slide 11: This slide shows Emergency Planning Governance Structure.

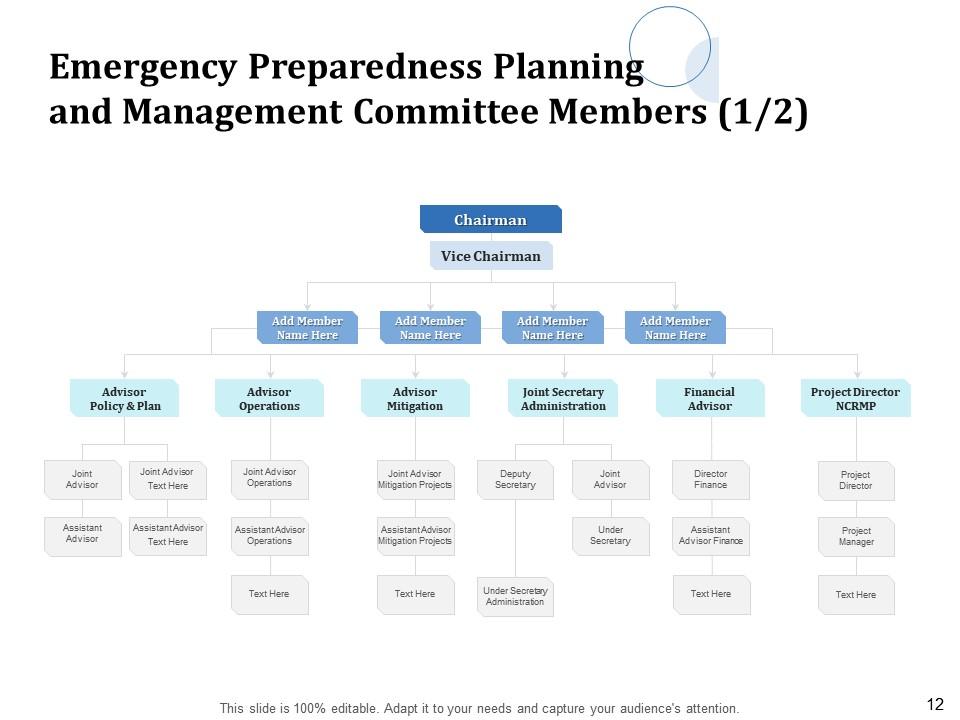

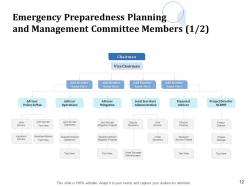

Slide 12: This slide depicts Emergency Preparedness Planning and Management Committee Members.

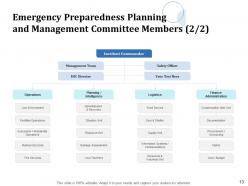

Slide 13: This slide showcases Emergency Preparedness Planning and Management Committee Members.

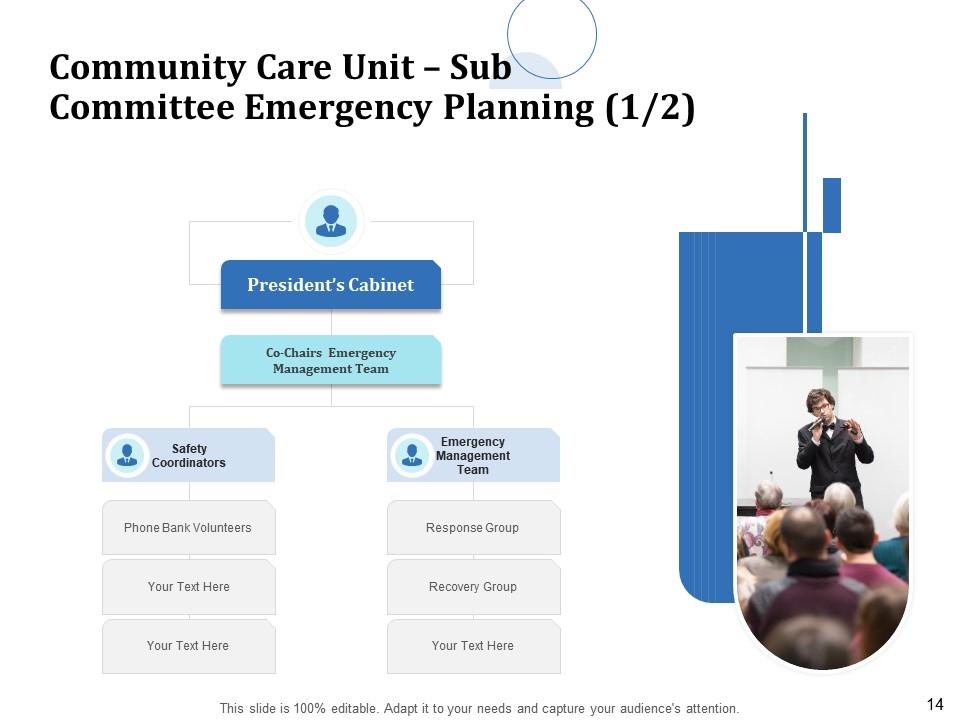

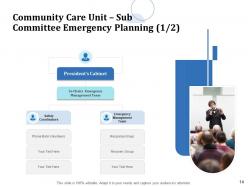

Slide 14: This slide depicts Community Care Unit – Sub Committee Emergency Planning.

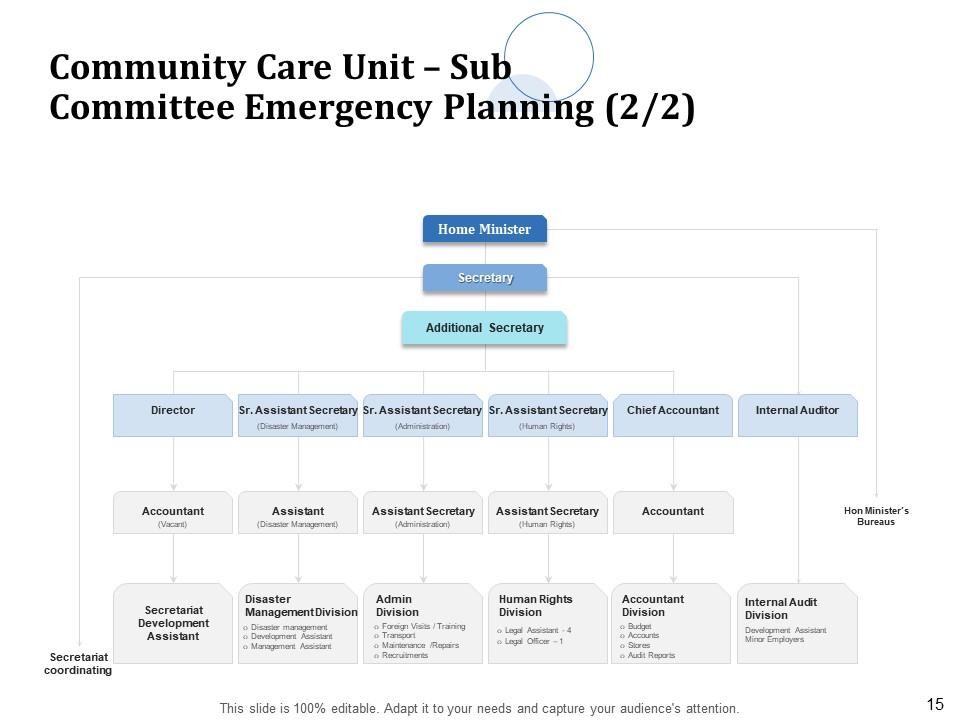

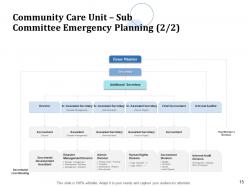

Slide 15: This slide shows Community Care Unit – Sub Committee Emergency Planning.

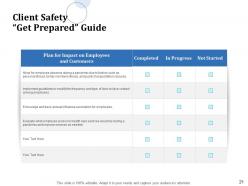

Slide 16: This slide showcases Client Safety Guide.

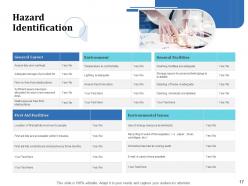

Slide 17: This slide displays Hazard Identification.

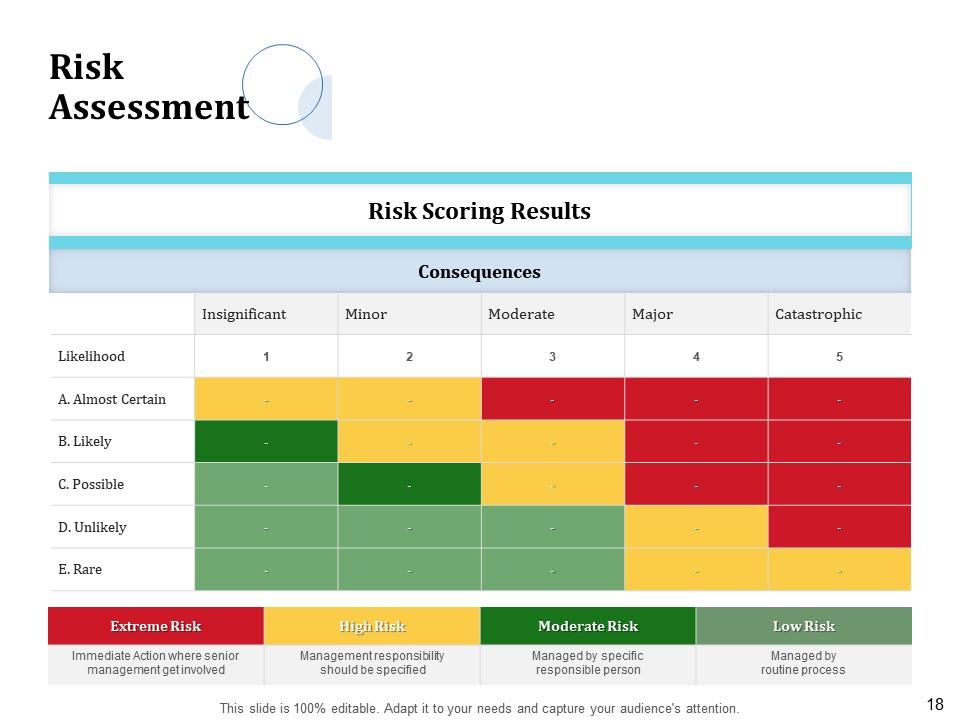

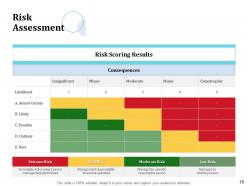

Slide 18: This slide shows Risk Assessment.

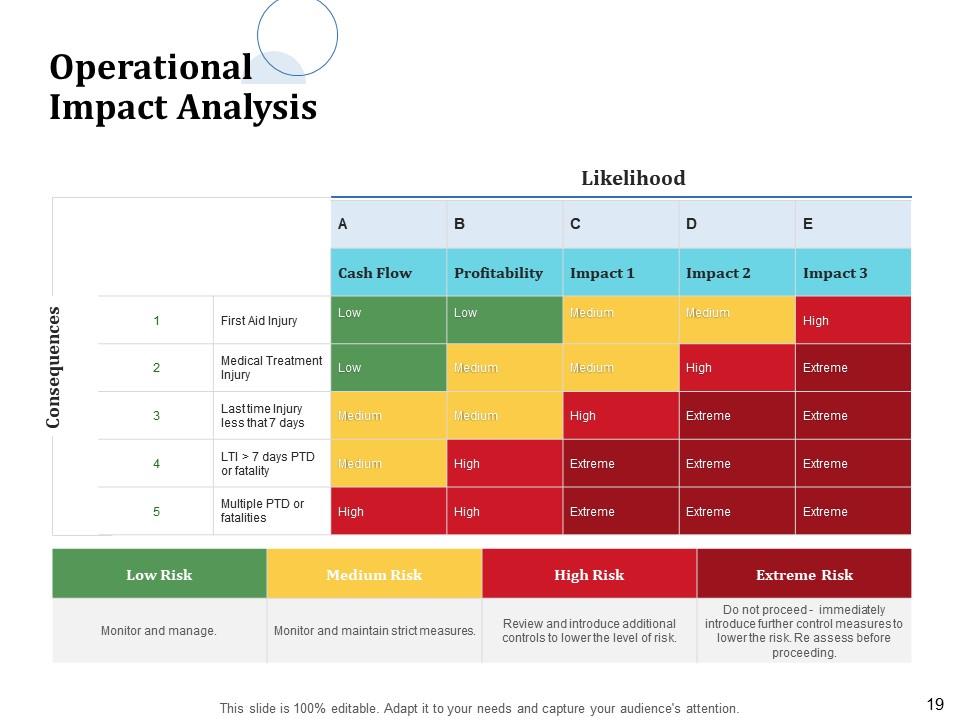

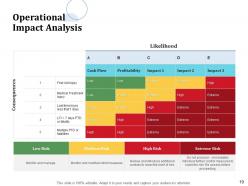

Slide 19: This slide represents Operational Impact Analysis.

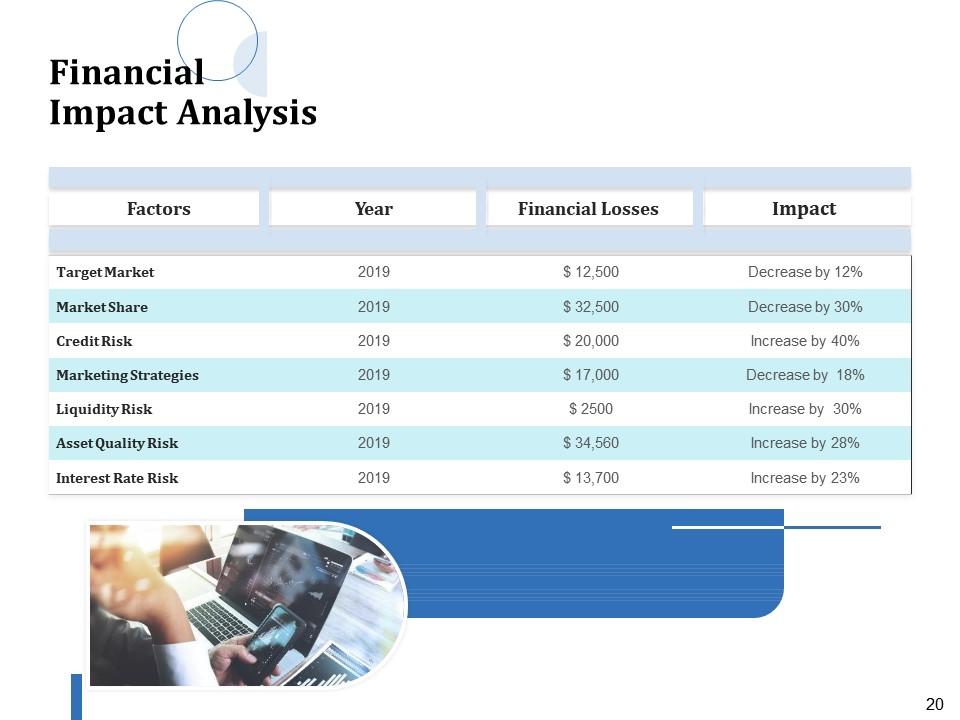

Slide 20: This slide highlights Financial Impact Analysis.

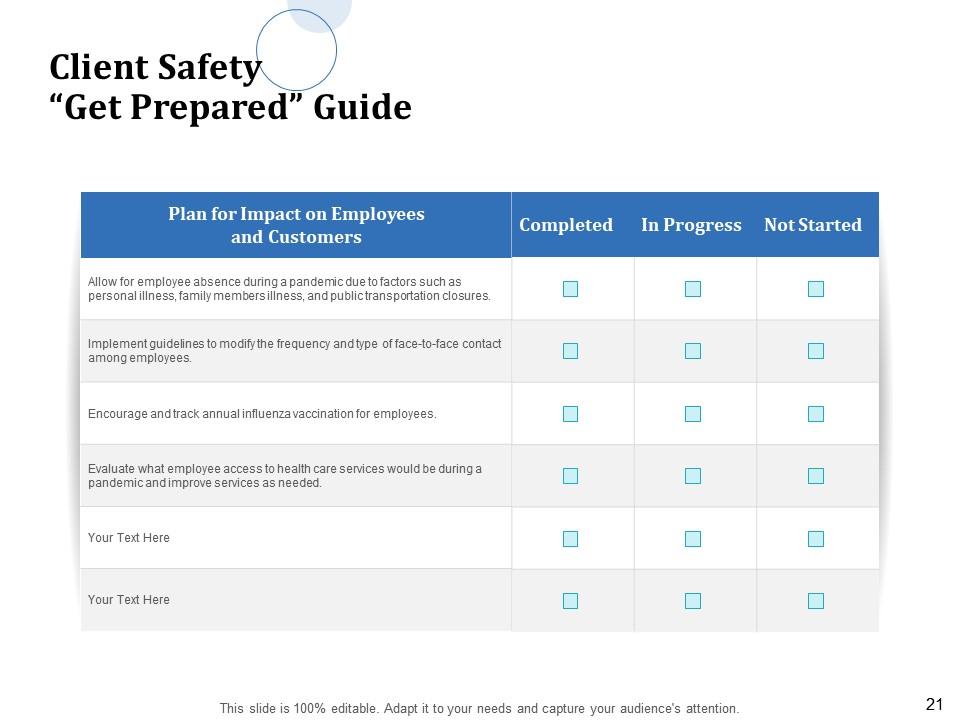

Slide 21: This slide lets you create a Plan for Impact on Employees and Customers.

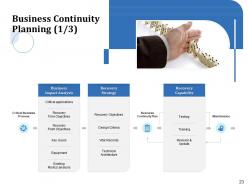

Slide 22: This slide displays Business Continuity Planning.

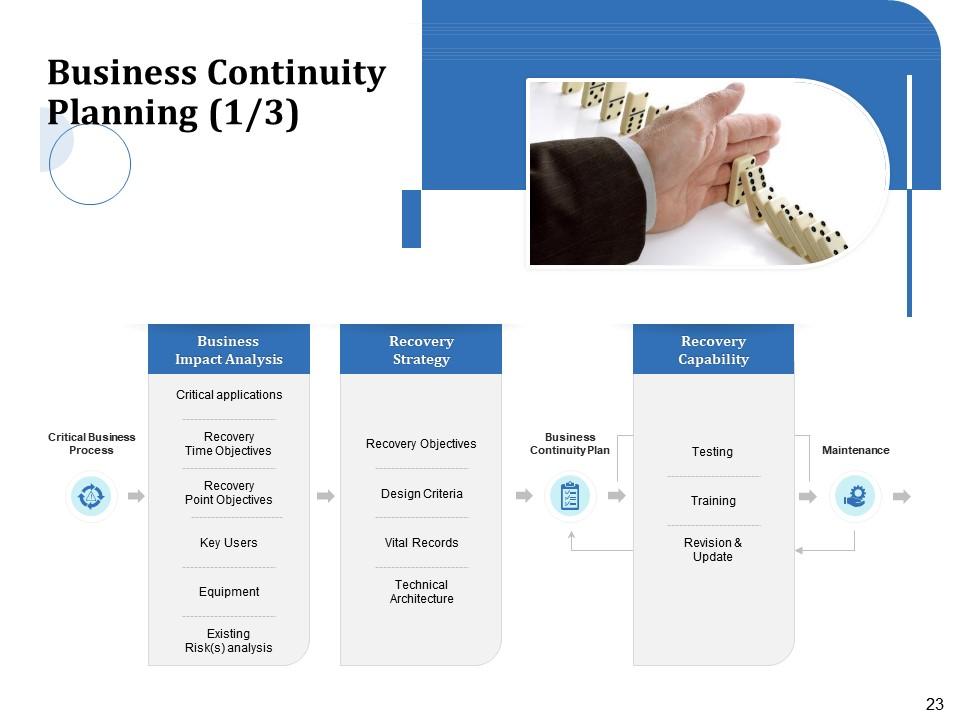

Slide 23: This slide highlights Business Continuity Planning.

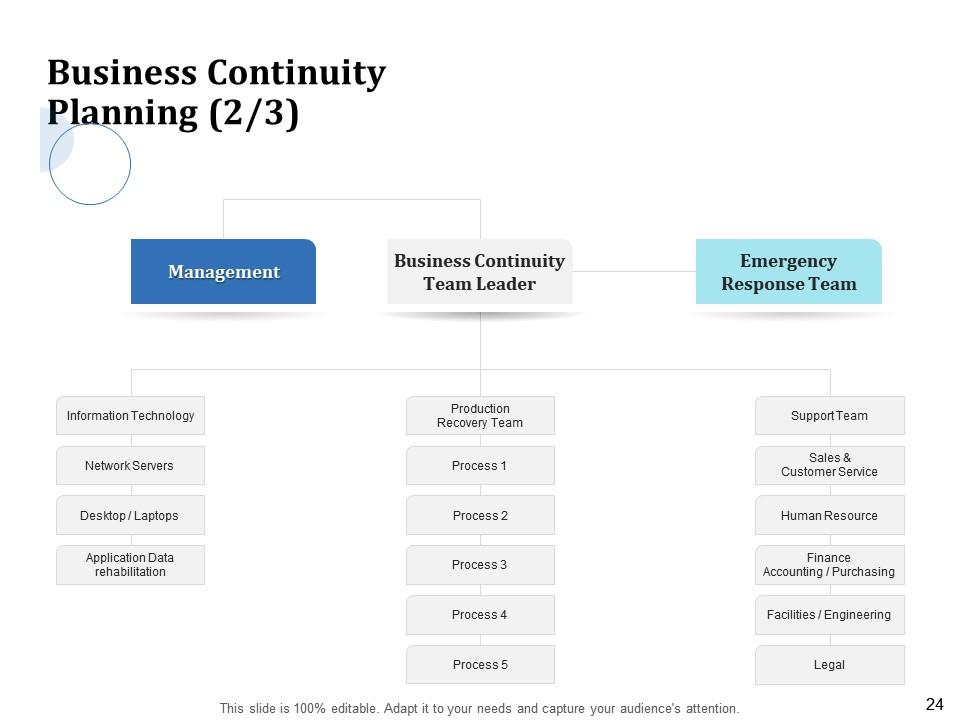

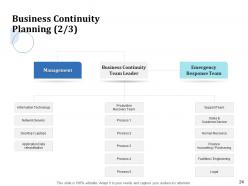

Slide 24: This slide showcases Business Continuity Planning Team.

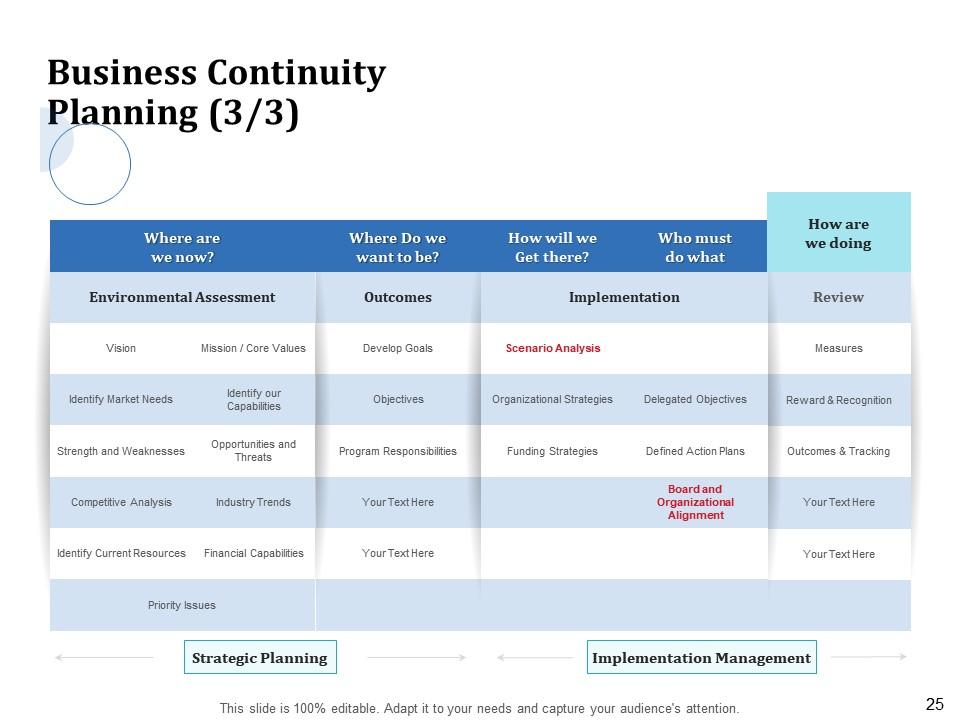

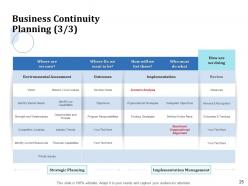

Slide 25: This slide shows Business Continuity Planning.

Slide 26: This slide showcases Immediate steps to take in an Emergency.

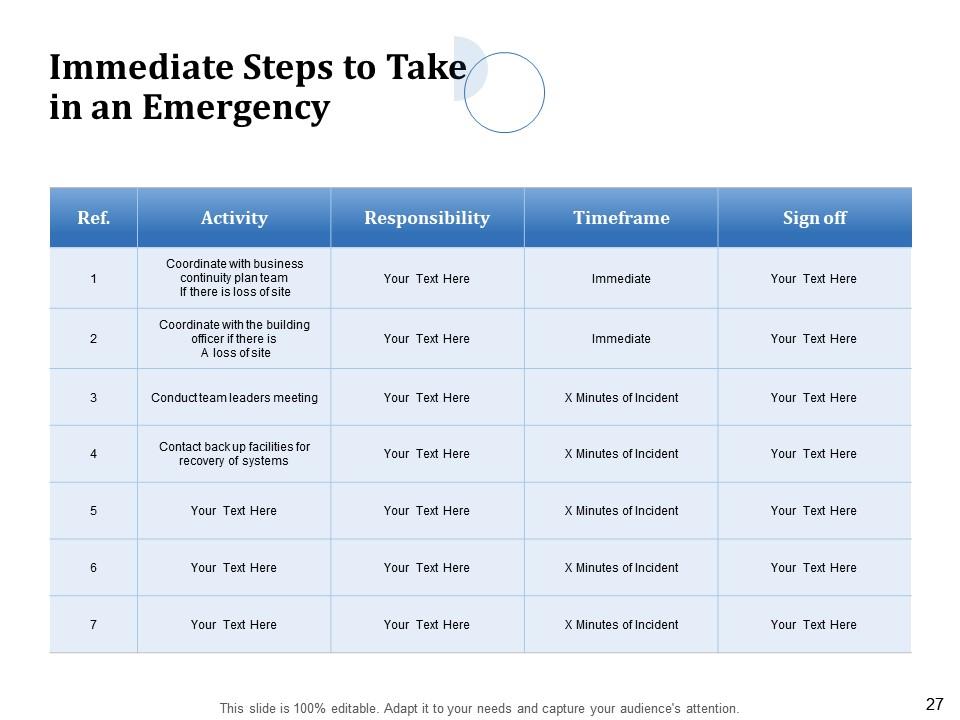

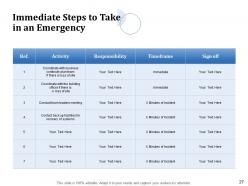

Slide 27: This slide showcases Immediate steps to take in an Emergency.

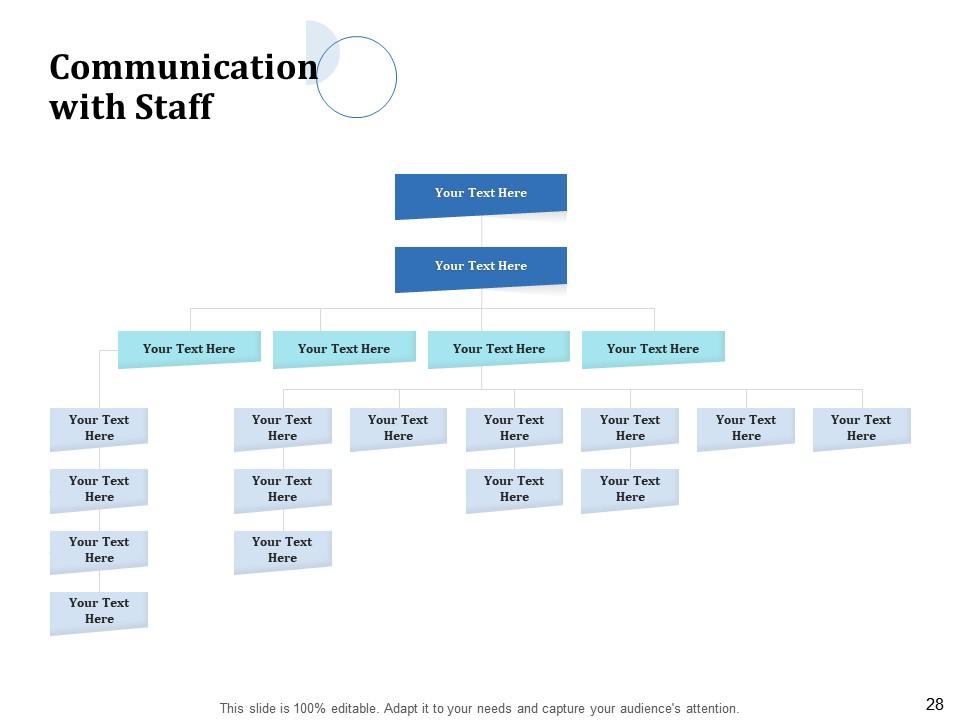

Slide 28: This slide presents Communication with Staff.

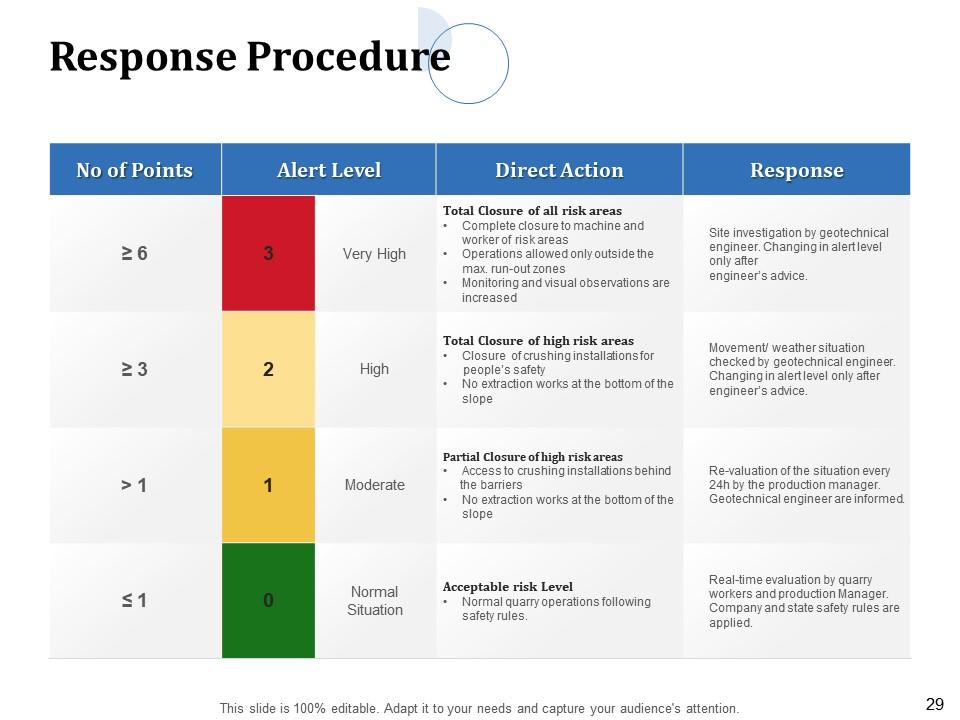

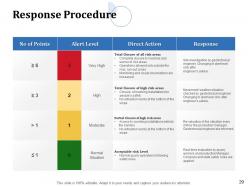

Slide 29: This slide displays Response Procedure.

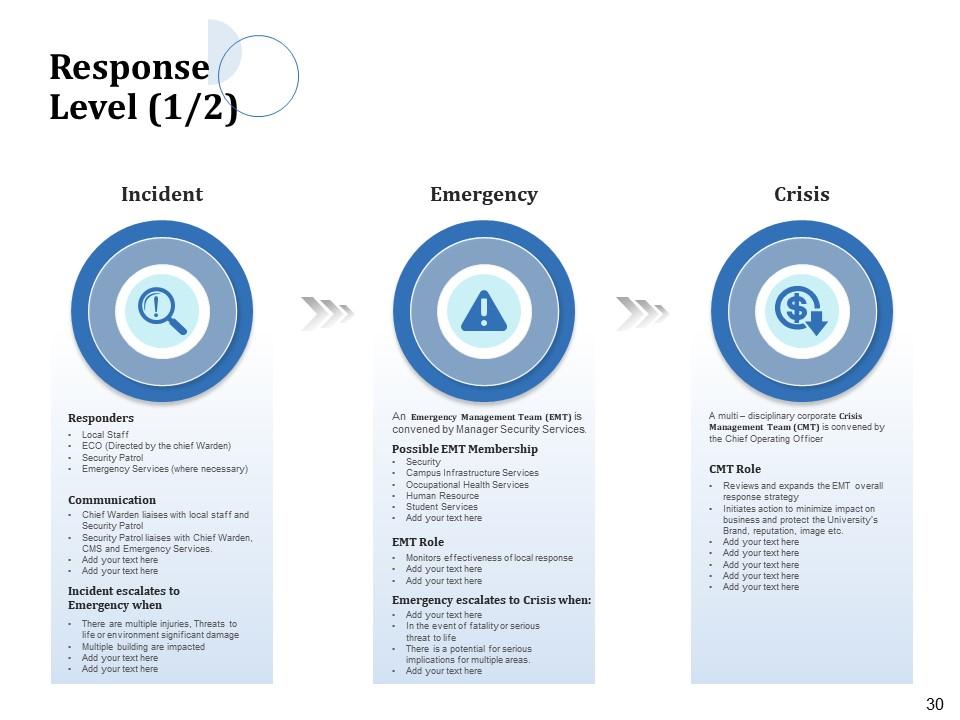

Slide 30: This slide highlights Response Level. It initiates action to minimize the impact on business and protect the University’s brand, reputation, image, etc.

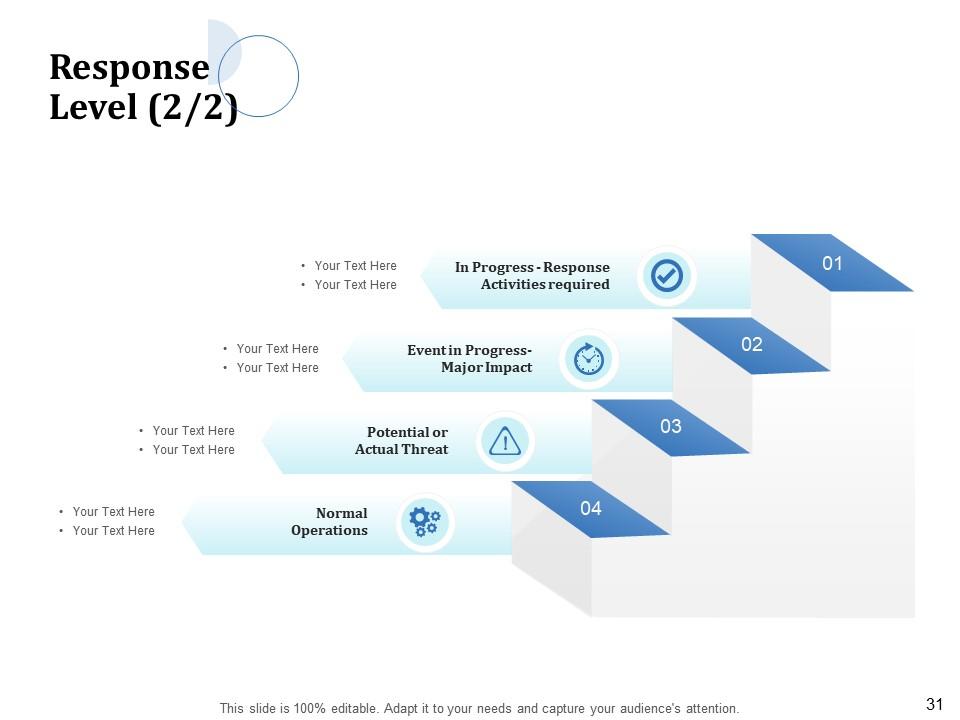

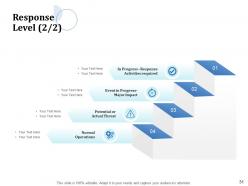

Slide 31: This slide depicts Response Level.

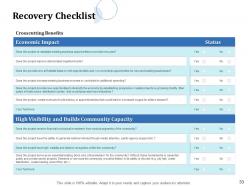

Slide 32: This slide displays Recovery Checklist.

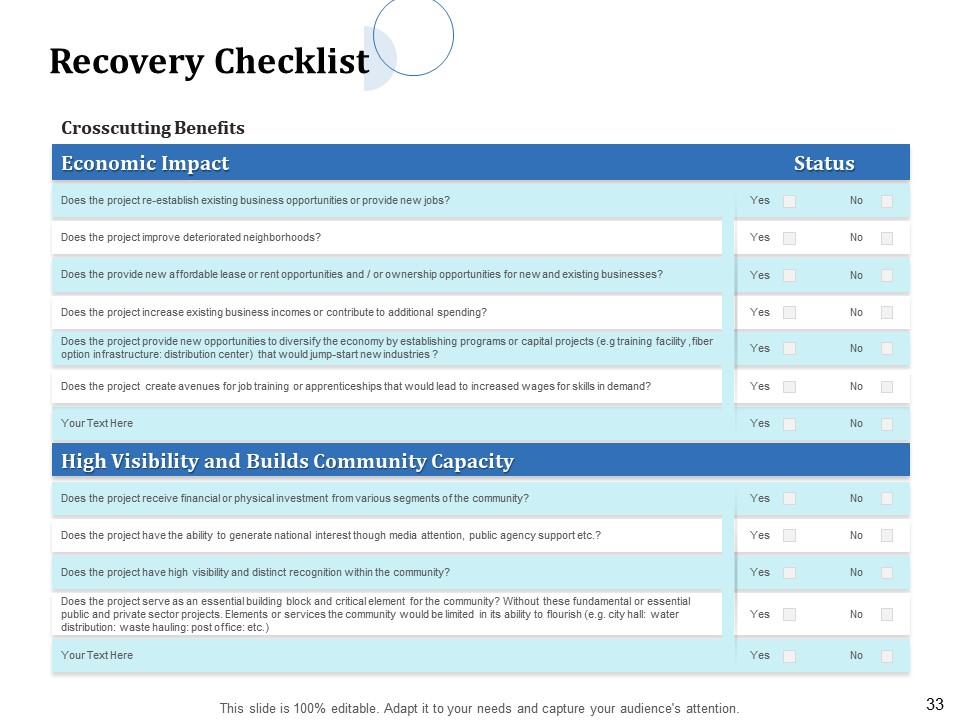

Slide 33: This slide shows Recovery Checklist.

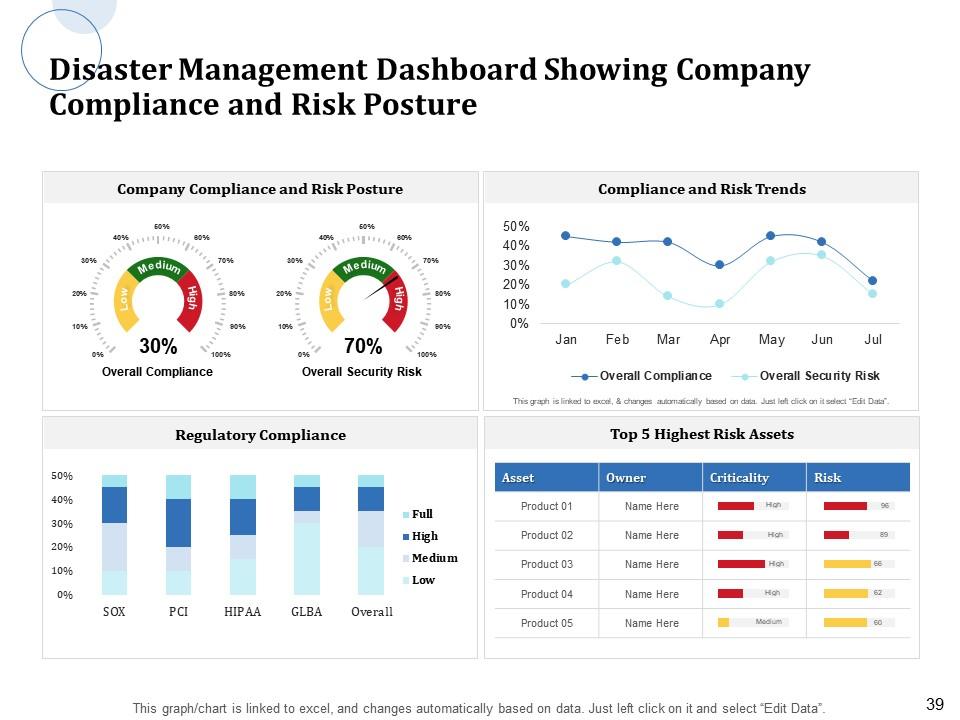

Slide 34: This slide displays KPI & Dashboards.

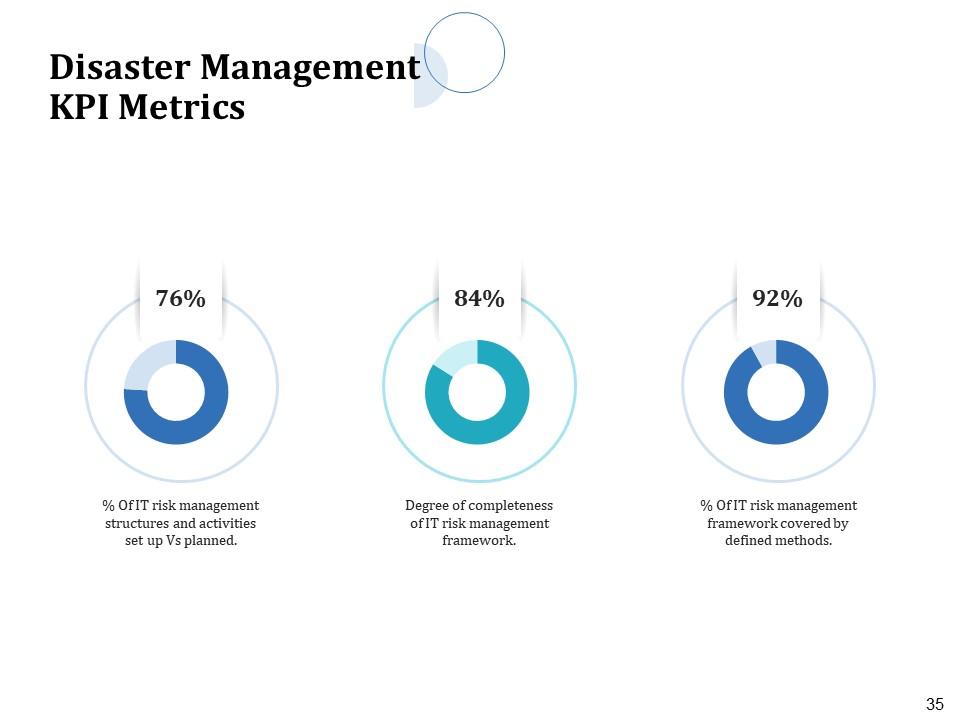

Slide 35: This slide shows Disaster Management KPI Metrics.

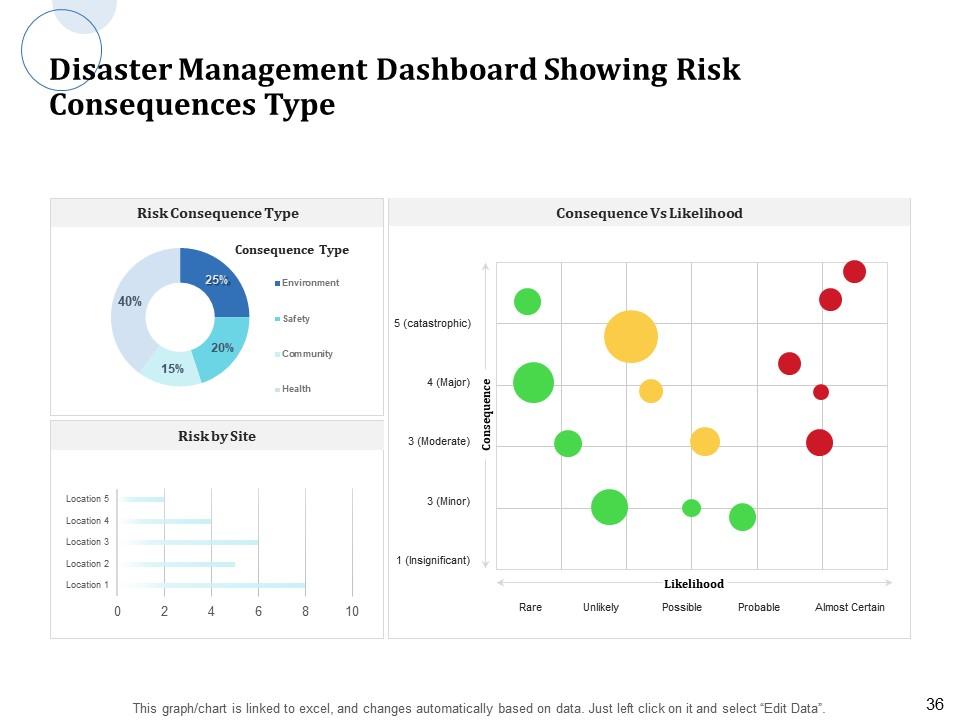

Slide 36: This slide shows Disaster Management Dashboard showing Risk Consequences Type.

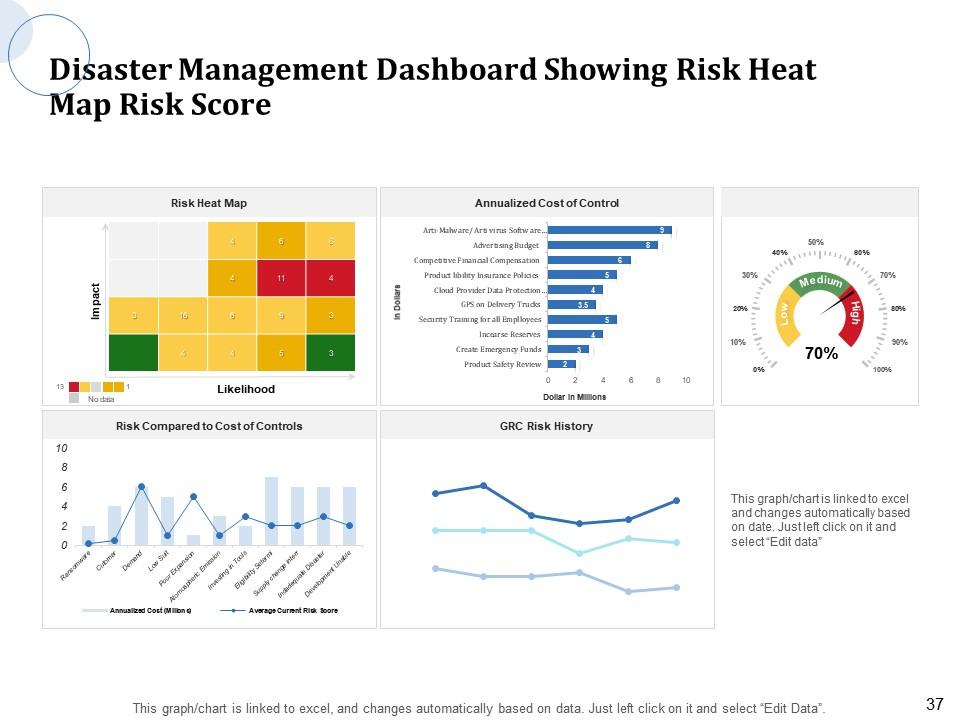

Slide 37: This slide displays Disaster Management Dashboard showing Risk Distribution by Business Process.

Slide 38: This slide highlights Disaster Management Dashboard showing Risk Distribution by Business Process.

Slide 39: This slide shows Disaster Management Dashboard showing Company Compliance and Risk Posture.

Slide 40: This is the Disaster Recovery Management Icons slide.

Slide 41: This slide is a reminder for a Coffee Break.

Slide 42: This slide displays Graphs and Charts.

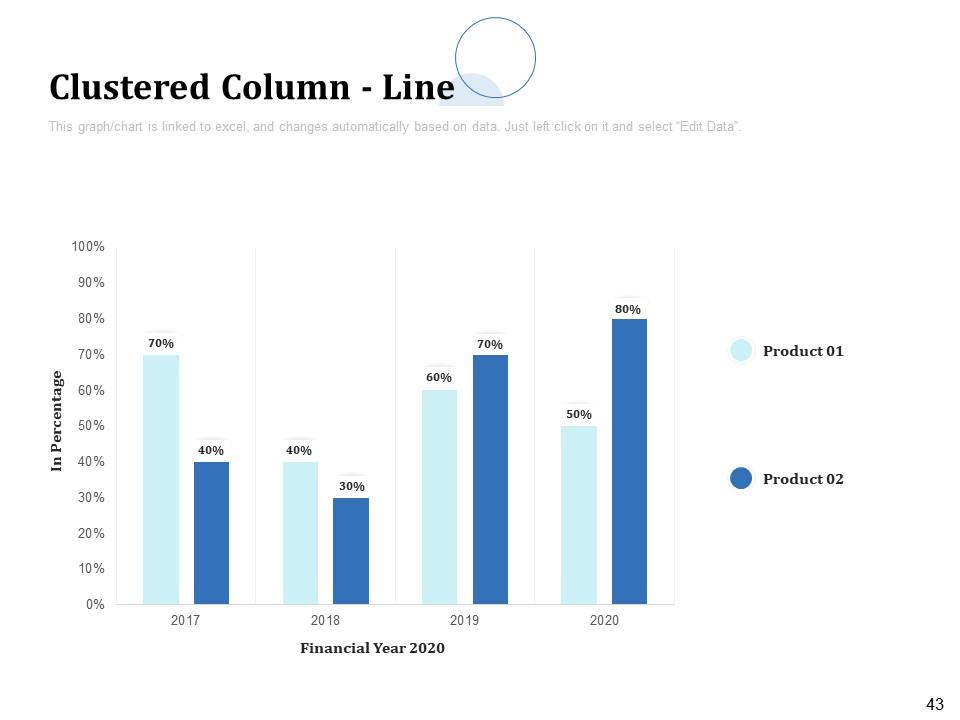

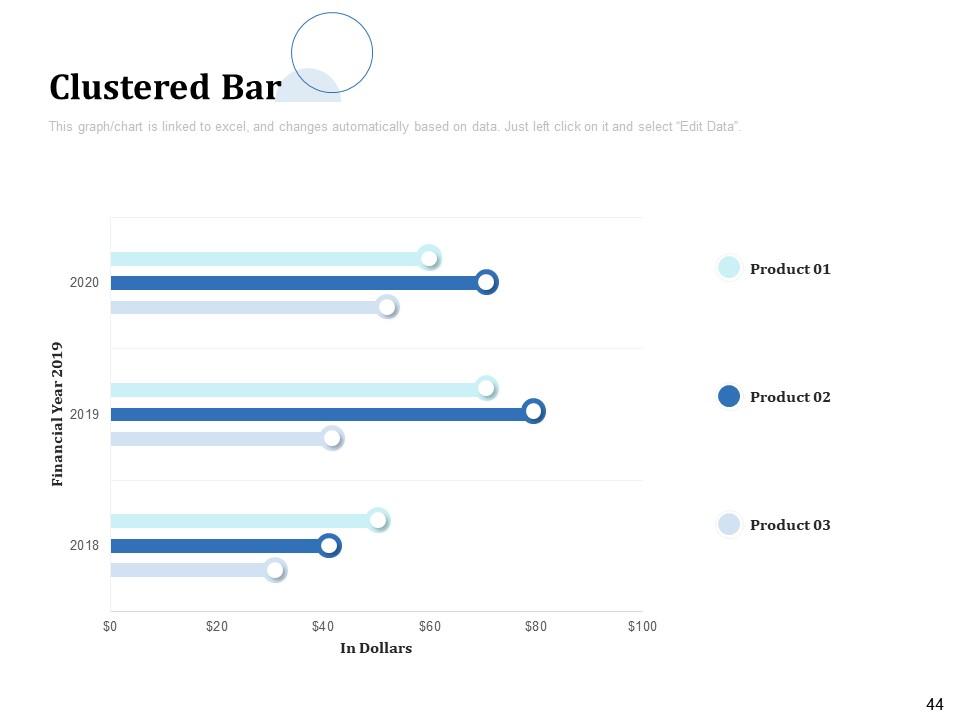

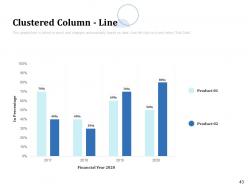

Slide 43: This slide displays Clustered Column chart with product comparison.

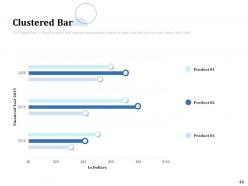

Slide 44: This slide shows Clustered Bar chart with product comparisons.

Slide 45: This slide is titled Additional Slides for moving forward.

Slide 46: This slide displays Our Mission, Vision and Goal.

Slide 47: This is the Our Team slide with names and designations.

Slide 48: This is the About Us slide to showcase company specifications.

Slide 49: This slide displays a Venn diagram.

Slide 50: This is the Thank You slide with address, contact number, and email address.

Disaster Recovery Management PowerPoint Presentation Slides with all 50 slides:

Use our Disaster Recovery Management PowerPoint Presentation Slides to save valuable time. They are ready-made to fit into any presentation structure.

- Thanks for all your great templates they have saved me lots of time and accelerate my presentations. Great product, keep them up!

- Great product with effective design. Helped a lot in our corporate presentations. Easy to edit and stunning visuals.

- Good research work and creative work done on every template.

- Unique design & color.